Augmented Autoencoders: Implicit 3D Orientation Learning for 6D Object Detection

Abstract We propose a real-time RGB-based pipeline for object detection and 6D pose estimation. Our novel 3D orientation estimation is based on a variant of the Denoising Autoencoder that is trained on simulated views of a 3D model using Domain Randomization.

This so-called Augmented Autoencoder has several advantages over existing methods: it does not require real, pose-annotated training data, it generalizes to various test sensors, and it inherently handles object and view symmetries. Instead of learning an explicit mapping from input images to object poses, it provides an implicit representation of object orientations defined by samples in a latent space. Our pipeline achieves state-of-the-art performance on the T-LESS dataset in both the RGB and the RGB-D domain. We also evaluate on the LineMOD dataset, where we can compete with other synthetically trained approaches.

We further increase performance by correcting 3D orientation estimates to account for perspective errors when the object deviates from the image center, and we show extended results. Our code is available here¹.

Keywords 6D Object Detection · Pose Estimation · Domain Randomization · Autoencoder · Synthetic Data · Symmetries

1 Introduction

One of the most important components of modern computer vision systems for applications such as mobile robotic manipulation and augmented reality is a reliable and fast 6D object detection module. Although there are very encouraging recent results from Xiang et al. (2017); Wohlhart and Lepetit (2015); Vidal et al. (2018); Hinterstoisser et al. (2016); Tremblay et al. (2018), a general, easily applicable, robust and fast solution is not yet available. The reasons for this are manifold. First and foremost, current solutions are often not robust enough against typical challenges such as object occlusions, different kinds of background clutter, and dynamic changes of the environment. Second, existing methods often require certain object properties, such as enough textured surface structure or an asymmetric shape, to avoid confusions. Finally, current systems are not efficient in terms of run-time or in the amount and type of annotated training data they require.

Therefore, we propose a novel approach that directly addresses these issues. Concretely, our method operates on single RGB images, which significantly increases usability since no depth information is required. We note, though, that depth maps may optionally be incorporated to refine the estimation. As a first step, we build upon state-of-the-art 2D object detectors (Liu et al., 2016; Lin et al., 2018), which provide object bounding boxes and identifiers. On the resulting scene crops, we employ our novel 3D orientation estimation algorithm, which is based on a previously trained deep network architecture. While deep networks are also used in existing approaches, our approach differs in that we do not explicitly learn from 3D pose annotations during training. Instead, we implicitly learn representations from rendered 3D model views. This is accomplished by training a generalized version of the Denoising Autoencoder from Vincent et al. (2010), which we call 'Augmented Autoencoder' (AAE), using a novel Domain Randomization strategy. Our approach has several advantages. First, since the training is independent of concrete representations of object orientations within $SO(3)$ (e.g. quaternions), we can handle ambiguous poses caused by symmetric views, because we avoid one-to-many mappings from images to orientations. Second, we learn representations that specifically encode 3D orientations while achieving robustness against occlusion and cluttered backgrounds and generalizing to different environments and test sensors. Finally, the AAE does not require any real pose-annotated training data. Instead, it is trained to encode 3D model views in a self-supervised way, overcoming the need for a large pose-annotated dataset. A schematic overview of the approach, based on Sundermeyer et al. (2018), is shown in Fig. 1.

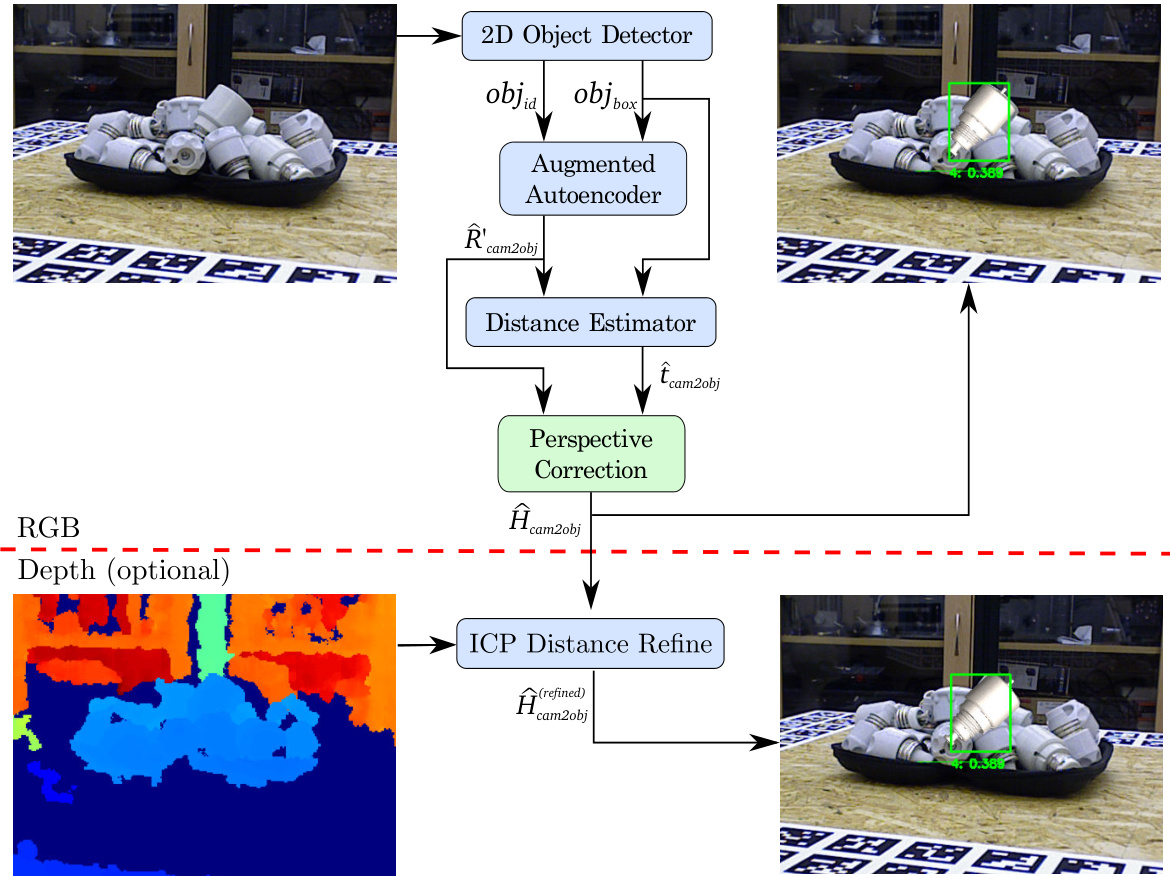

Fig. 1: Our full 6D Object Detection pipeline: after detecting an object (2D Object Detector), the object is cropped to a square and forwarded into the proposed Augmented Autoencoder. In the next step, the bounding box scale ratio at the estimated 3D orientation $\hat{R}'_{obj2cam}$ is used to compute the 3D translation $\hat{t}_{obj2cam}$. The resulting Euclidean transformation $\hat{H}'_{obj2cam}\in\mathcal{R}^{4\times4}$ already shows promising results, as presented in Sundermeyer et al. (2018). However, it still lacks accuracy for objects that are translated in the image plane towards the image borders. Therefore, the pipeline is extended by the Perspective Correction block, which addresses this problem and results in more accurate 6D pose estimates $\hat{H}_{obj2cam}$ for objects that are not located in the image center. Additionally, given depth data, the result can be further refined ($\hat{H}^{(refined)}_{obj2cam}$) by applying Iterative Closest Point post-processing (bottom).

2 Related Work

Depth-based methods (e.g. using Point Pair Features (PPF) from Vidal et al. (2018); Hinterstoisser et al. (2016)) have shown robust pose estimation performance on multiple datasets and won the SIXD challenge (Hodan, 2017; Hodan et al., 2018). However, they usually rely on the computationally expensive evaluation of many pose hypotheses and do not take any high-level features into account. Furthermore, existing depth sensors are often more sensitive to sunlight or specular object surfaces than RGB cameras.

Convolutional Neural Networks (CNNs) have revolutionized 2D object detection from RGB images (Ren et al., 2015; Liu et al., 2016; Lin et al., 2018). However, compared to 2D bounding box annotation, labeling real images with full 6D object poses takes orders of magnitude more effort and requires expert knowledge and a complex setup (Hodan et al., 2017).

Nevertheless, the majority of learning-based pose estimation methods, namely Tekin et al. (2017); Wohlhart and Lepetit (2015); Brachmann et al. (2016); Rad and Lepetit (2017); Xiang et al. (2017), use real labeled images that are only available in pose-annotated datasets.

Consequently, Kehl et al. (2017); Wohlhart and Lepetit (2015); Tremblay et al. (2018); Zakharov et al. (2019) have proposed to train on synthetic images rendered from a 3D model, which provides a rich data source with pose labels free of charge. However, naive training on synthetic data does not typically generalize to real test images. Therefore, a main challenge is to bridge the domain gap that separates simulated views from real camera recordings.

2.1 Simulation to Reality Transfer

There exist three major strategies to generalize from synthetic to real data:

2.1.1 Photo-Realistic Rendering

The works of Movshovitz-Attias et al. (2016); Su et al. (2015); Mitash et al. (2017); Richter et al. (2016) have shown that photo-realistic renderings of object views and backgrounds can, in some cases, improve generalization performance for tasks like object detection and viewpoint estimation. This approach is especially suitable for simple environments and performs well when jointly trained with a relatively small number of real annotated images. However, photo-realistic modeling is often imperfect and requires much effort. Recently, Hodan et al. (2019) have shown promising results for 2D object detection trained on physically-based renderings.

2.1.2 Domain Adaptation

Domain Adaptation (DA) (Csurka, 2017) refers to leveraging training data from a source domain for a target domain in which only a small portion of labeled data (supervised DA) or unlabeled data (unsupervised DA) is available. Generative Adversarial Networks (GANs) have been deployed for unsupervised DA by generating realistic images from synthetic ones to train classifiers (Shrivastava et al., 2017), 3D pose estimators (Bousmalis et al., 2017b) and grasping algorithms (Bousmalis et al., 2017a). While constituting a promising approach, GANs often yield fragile training results. Supervised DA can lower the need for real annotated data, but does not eliminate it.

2.1.3 Domain Randomization

Domain Randomization (DR) builds upon the hypothesis that a model trained on rendered views in a variety of semi-realistic settings (augmented with random lighting conditions, backgrounds, saturation, etc.) will also generalize to real images. Tobin et al. (2017) demonstrated the potential of the DR paradigm for 3D shape detection using CNNs. Hinterstoisser et al. (2017) showed that by training only the head network of Faster R-CNN (Ren et al., 2015) with randomized synthetic views of a textured 3D model, the detector also generalizes well to real images. It should be noted that their rendering is almost photo-realistic, as the textured 3D models are of very high quality. Kehl et al. (2017) pioneered an end-to-end CNN for 6D object detection, called 'SSD6D', that uses a moderate DR strategy to utilize synthetic training data. The authors render views of textured 3D object reconstructions at random poses on top of MS COCO background images (Lin et al., 2014) while varying brightness and contrast. This lets the network generalize to real images and enables 6D detection at 10 Hz. Like us, they rely on Iterative Closest Point (ICP) post-processing using depth data for accurate distance estimation. In contrast to SSD6D, however, we do not treat 3D orientation estimation as a classification task.

2.2 Training Pose Estimation with SO(3) targets

We describe the difficulties of training with fixed SO(3) parameterizations, which will motivate the learning of view-based representations.

2.2.1 Regression

Since rotations live in a continuous space, it seems natural to directly regress a fixed SO(3) parameterization such as quaternions. However, representational constraints and pose ambiguities can introduce convergence issues, as investigated by Saxena et al. (2009). In practice, direct regression approaches for full 3D object orientation estimation have not been very successful (Mahendran et al., 2017). Instead, Tremblay et al. (2018); Tekin et al. (2017); Rad and Lepetit (2017) regress local 2D-3D correspondences and then apply a Perspective-n-Point (PnP) algorithm to obtain the 6D pose. However, these approaches also cannot deal with pose ambiguities without additional measures (see Sec. 2.2.3).
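One well-known representational constraint of this kind is that unit quaternions double-cover SO(3). This example is ours and is not taken from Saxena et al. (2009). The quaternions $q$ and $-q$ describe the same rotation, yet as regression targets they are as far apart as two unit quaternions can be. The minimal NumPy sketch below assumes the $(w, x, y, z)$ component order.

```python
import numpy as np

def quat_to_matrix(q: np.ndarray) -> np.ndarray:
    """Rotation matrix for a quaternion q = (w, x, y, z); q is normalized first."""
    w, x, y, z = q / np.linalg.norm(q)
    return np.array([
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ])

q = np.array([0.9, 0.1, -0.3, 0.2])
q_unit = q / np.linalg.norm(q)
R_pos = quat_to_matrix(q)
R_neg = quat_to_matrix(-q)

# Every matrix entry is quadratic in q, so q and -q give the same rotation ...
same_rotation = np.allclose(R_pos, R_neg)
# ... while the two regression targets are a full distance of 2 apart in R^4.
target_gap = np.linalg.norm(q_unit - (-q_unit))
```

A regressor trained with a plain L2 loss on such targets is penalized for predicting $-q$ even when the pose is correct.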

2.2.2 Classification

Classification of 3D object orientations requires a discretization of SO(3). Even rather coarse intervals of $\sim5^{\circ}$ lead to over 50,000 possible classes. Since each class appears only sparsely in the training data, this hinders convergence. In SSD6D (Kehl et al., 2017), the 3D orientation is learned by separately classifying a discretized viewpoint and an in-plane rotation, thus reducing the complexity to $\mathcal{O}(n^{2})$. However, for non-canonical views, e.g. if an object is seen from above, a change of viewpoint can be nearly equivalent to a change of in-plane rotation, which yields ambiguous class combinations. In general, the relation between different orientations is ignored when performing one-hot classification.
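The class count above can be sanity-checked with a back-of-the-envelope estimate. This is a sketch under a crude assumption: each viewpoint bin covers roughly step × step steradians of the unit view sphere (area $4\pi$), and in-plane rotation is binned over $2\pi$. The function name is hypothetical.

```python
import math

def approx_so3_classes(step_deg: float) -> tuple[int, int]:
    """Rough number of viewpoint bins and in-plane bins for an angular step.

    Assumption: one viewpoint bin covers about step x step steradians of the
    unit sphere (total area 4*pi); in-plane rotation is binned over 2*pi.
    """
    step = math.radians(step_deg)
    n_viewpoints = round(4 * math.pi / step ** 2)
    n_inplane = round(2 * math.pi / step)
    return n_viewpoints, n_inplane

n_view, n_rot = approx_so3_classes(5.0)
joint_classes = n_view * n_rot       # one class per (viewpoint, in-plane) pair
separate_outputs = n_view + n_rot    # SSD6D-style: two separate classifiers
```

With a 5° step, this yields 1,650 viewpoints × 72 in-plane bins = 118,800 joint classes, consistent with "over 50,000". Two separate classifiers need only 1,650 + 72 = 1,722 outputs. That saving comes at the cost of the viewpoint/in-plane ambiguity described above.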

2.2.3 Symmetries

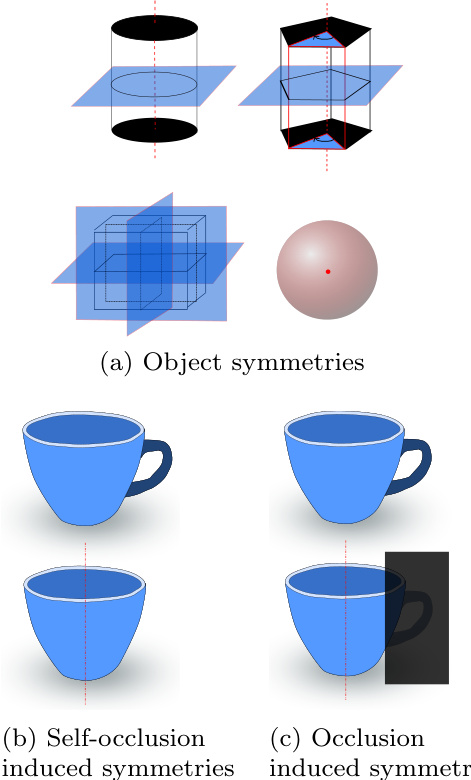

Symmetries are a severe issue when relying on fixed representations of 3D orientations, since they cause pose ambiguities (Fig. 2). If not manually addressed, identical training images can be assigned different orientation labels, which can significantly disturb the learning process. In order to cope with ambiguous objects, most approaches in the literature are manually adapted (Wohlhart and Lepetit, 2015; Hinterstoisser et al., 2012a; Kehl et al., 2017; Rad and Lepetit, 2017). The strategies range from ignoring one axis of rotation (Wohlhart and Lepetit, 2015; Hinterstoisser et al., 2012a), to adapting the discretization according to the object (Kehl et al., 2017), to training an extra CNN to predict symmetries (Rad and Lepetit, 2017). These are tedious, manual ways to filter out object symmetries (Fig. 2a) in advance, whereas ambiguities due to self-occlusions (Fig. 2b) and occlusions (Fig. 2c) are harder to address.

Symmetries affect not only regression and classification methods, but any learning-based algorithm that discriminates object views solely by fixed SO(3) representations.

2.3 Learning Representations of 3D orientations

We can also learn indirect pose representations that relate object views in a low-dimensional space. The descriptor learning can either be self-supervised by the object views themselves or still rely on fixed SO(3) representations.

2.3.1 Descriptor Learning

Wohlhart and Lepetit (2015) introduced a CNN-based descriptor learning approach using a triplet loss that minimizes/maximizes the Euclidean distance between similar/dissimilar object orientations. In addition, the distance between different objects is maximized. Although synthetic data is mixed in, the training also relies on pose-annotated sensor data. The approach is not immune to symmetries, since the descriptor is built using explicit 3D orientations. Thus, the loss can be dominated by symmetric object views that appear the same but have opposite orientations, which can produce incorrect average pose predictions.
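To make the symmetry problem concrete, here is a generic hinge-style triplet loss on descriptor vectors. It is an assumption for illustration, not the exact formulation of Wohlhart and Lepetit (2015). The margin value and the function name are hypothetical.

```python
import numpy as np

def triplet_hinge_loss(anchor, positive, negative, margin=0.01):
    """Generic hinge triplet loss on descriptors (not the exact published form).

    Pulls the anchor towards the descriptor of a similar orientation (positive)
    and pushes it away from a dissimilar orientation or object (negative).
    """
    anchor, positive, negative = map(np.asarray, (anchor, positive, negative))
    d_pos = np.sum((anchor - positive) ** 2)   # squared Euclidean distance
    d_neg = np.sum((anchor - negative) ** 2)
    return float(max(0.0, margin + d_pos - d_neg))
```

Consider a symmetric object whose "negative" view looks identical to the anchor. An encoder that sees the same image produces the same descriptor, so `d_neg` is 0 and the loss can never reach zero. This is the failure mode described above.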

Fig. 2: Causes of pose ambiguities

Balntas et al. (2017) extended this work by enforcing proportionality between descriptor and pose distances. They acknowledge the problem of object symmetries by weighting the pose distance loss with the depth difference of the object at the considered poses. This heuristic increases the accuracy on symmetric objects with respect to Wohlhart and Lepetit (2015).

Our work is also based on learning descriptors, but in contrast we train our Augmented Autoencoders (AAEs) such that the learning process itself is independent of any fixed SO(3) representation. The loss is based solely on the appearance of the reconstructed object views, and thus symmetry ambiguities are handled inherently. Moreover, unlike Balntas et al. (2017); Wohlhart and Lepetit (2015), we abstain from using real labeled data during training and instead train in a completely self-supervised manner. This means that 3D orientations are assigned to the descriptors only after training.

Kehl et al. (2016) train an Autoencoder architecture on random RGB-D scene patches from the LineMOD dataset (Hinterstoisser et al., 2011). At test time, descriptors from scene and object patches are compared to find the 6D pose. Since the approach requires the evaluation of many patches, it takes about 670 ms per prediction. Furthermore, using local patches means ignoring holistic relations between object features, which are crucial when little texture is present. Instead, we train on holistic object views and explicitly learn domain invariance.

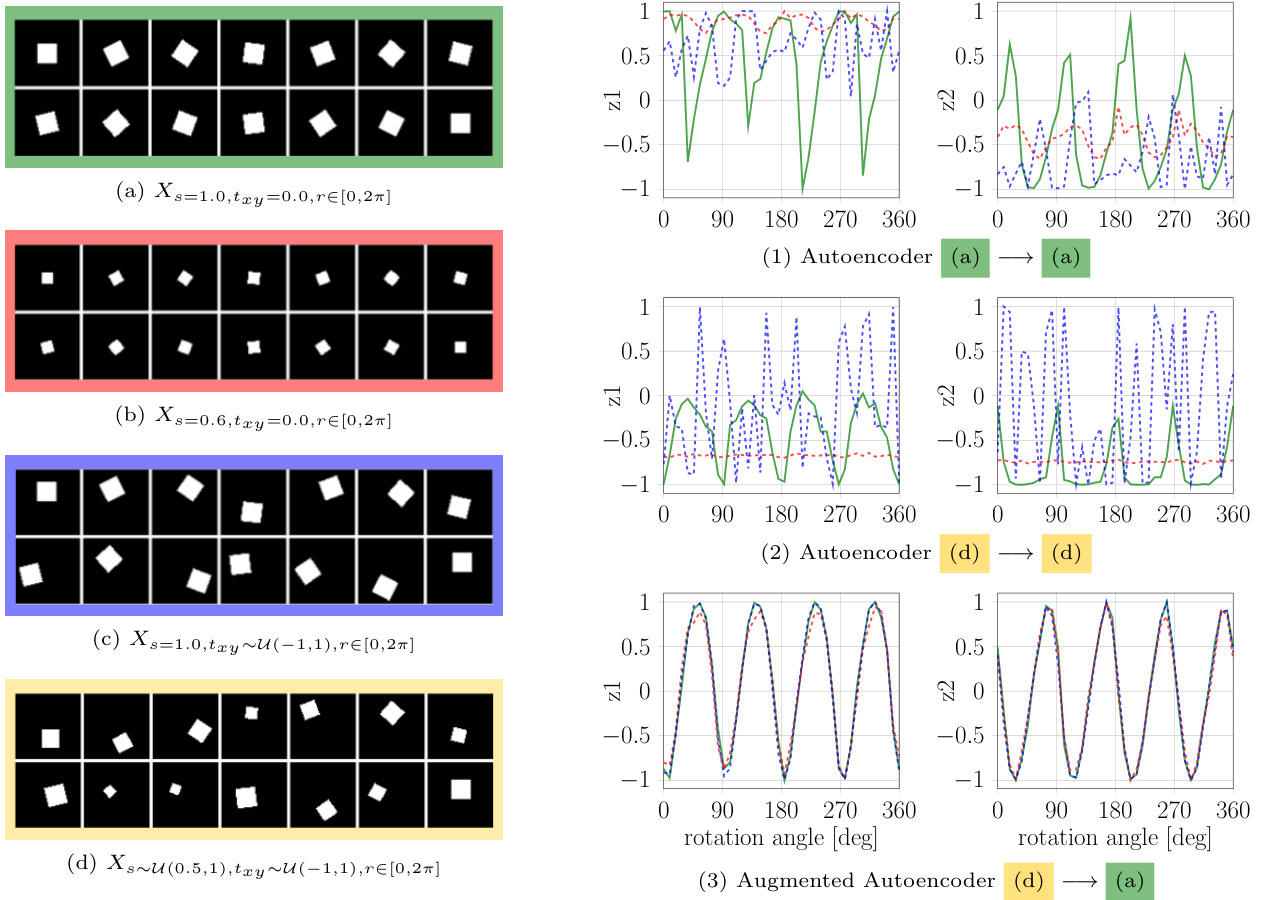

Fig. 3: Experiment on the dSprites dataset of Matthey et al. (2017). Left: $64\times64$ squares from four distributions (a, b, c and d), distinguished by scale ($s$) and translation ($t_{xy}$), that are used for training and testing. Right: normalized latent dimensions $z_{1}$ and $z_{2}$ for all rotations ($r$) of the distribution (a), (b) or (c) after training ordinary Autoencoders (AEs) (1), (2) and an AAE (3) to reconstruct squares of the same orientation.

3 Method

In the following, we mainly focus on the novel 3D orientation estimation technique based on the AAE.

3.1 Autoencoders

The original AE, introduced by Rumelhart et al. (1985), is a dimensionality reduction technique for high-dimensional data such as images, audio or depth. It consists of an encoder $\varPhi$ and a decoder $\varPsi$, both arbitrary learnable function approximators, which are usually neural networks. The training objective is to reconstruct the input $x\in\mathcal{R}^{\mathcal{D}}$ after passing it through a low-dimensional bottleneck, referred to as the latent representation $z\in\mathcal{R}^{n}$ with $n\ll\mathcal{D}$:

$$
\hat{x}=(\varPsi\circ\varPhi)(x)=\varPsi(z) \tag{1}
$$

The per-sample loss is simply a sum over the pixel-wise L2 distances:

$$
\ell_{2}=\sum_{i\in\mathcal{D}}\lVert x_{i}-\hat{x}_{i}\rVert_{2} \tag{2}
$$
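The following minimal NumPy sketch illustrates eq. (1) and eq. (2). A toy linear encoder and decoder with a tanh bottleneck stand in for the CNNs of Fig. 5, and all names and sizes are illustrative assumptions. The loss follows eq. (2) literally: for each pixel $i$, it takes the L2 norm of the RGB difference and then sums over all pixels.

```python
import numpy as np

rng = np.random.default_rng(0)
H, W, C, n = 8, 8, 3, 16          # toy image size and latent size (n << D)
D = H * W * C

# Toy linear encoder Phi and decoder Psi (stand-ins for the CNNs of Fig. 5).
W_enc = rng.normal(scale=0.05, size=(n, D))
W_dec = rng.normal(scale=0.05, size=(D, n))

def encode(x: np.ndarray) -> np.ndarray:
    """z = Phi(x): map an (H, W, C) image to an n-dimensional latent code."""
    return np.tanh(W_enc @ x.reshape(-1))

def decode(z: np.ndarray) -> np.ndarray:
    """x_hat = Psi(z): map a latent code back to an (H, W, C) image."""
    return (W_dec @ z).reshape(H, W, C)

def l2_loss(x: np.ndarray, x_hat: np.ndarray) -> float:
    """Eq. (2): per-pixel L2 norm over the color channels, summed over pixels."""
    return float(np.linalg.norm(x - x_hat, axis=-1).sum())

x = rng.uniform(size=(H, W, C))
z = encode(x)
loss = l2_loss(x, decode(z))   # eq. (1) followed by eq. (2)
```

Training would adjust `W_enc` and `W_dec` to minimize `loss` over many images. That optimization step is omitted here.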

The resulting latent space can, for example, be used for unsupervised clustering.

Denoising Autoencoders, introduced by Vincent et al. (2010), have a modified training procedure. Here, artificial random noise is applied to the input images $x\in\mathcal{R}^{\mathcal{D}}$ while the reconstruction target stays clean. The trained model can be used to reconstruct denoised test images. But how is the latent representation affected?

Hypothesis 1: The Denoising AE produces latent representations that are invariant to noise, because this invariance facilitates the reconstruction of denoised images.

We will demonstrate that this training strategy enforces invariance not only to noise but also to a variety of other input augmentations. Ultimately, this allows us to bridge the domain gap between simulated and real data.

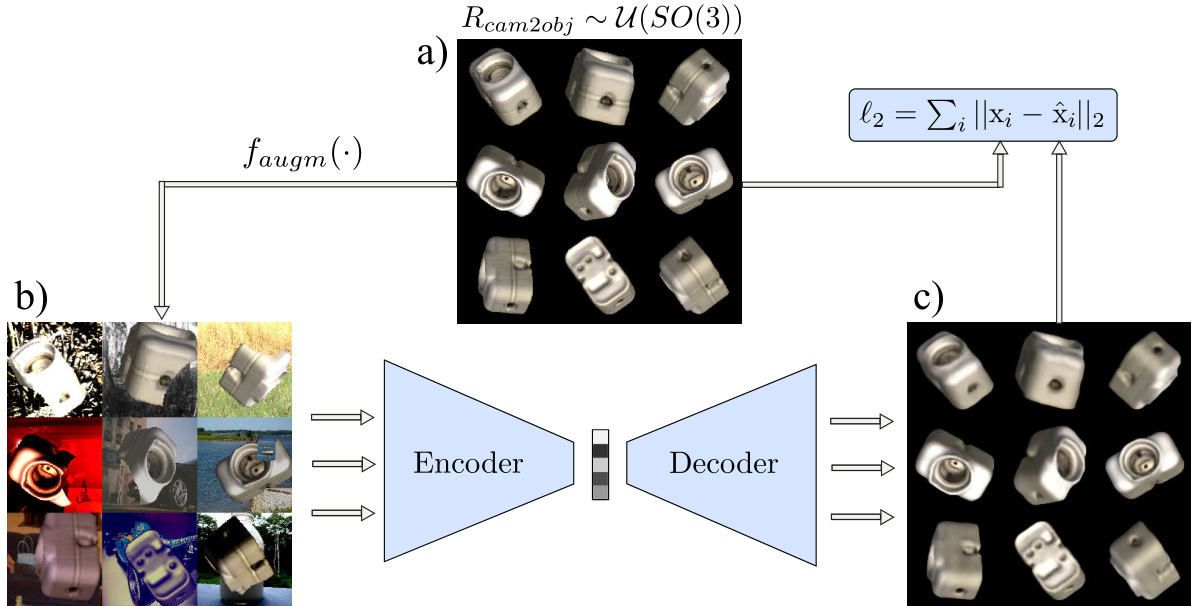

Fig. 4: Training process for the AAE; a) reconstruction target batch $\pmb{x}$ of uniformly sampled SO(3) object views; b) geometric and color augmented input; c) reconstruction $\hat{\pmb{x}}$ after 40000 iterations

3.2 Augmented Autoencoder

The motivation behind the AAE is to control what the latent representation encodes and which properties are ignored. We apply random augmentations $f_{augm}(\cdot)$ to the input images $x\in\mathcal{R}^{\mathcal{D}}$, and the encoding should become invariant to these augmentations. The reconstruction target and the loss in eq. (2) remain unchanged, but eq. (1) becomes:

$$
\hat{x}=(\varPsi\circ\varPhi\circ f_{augm})(x)=(\varPsi\circ\varPhi)(x^{\prime})=\varPsi(z^{\prime}) \tag{3}
$$
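Eq. (3) changes only what is fed into the encoder, not what the output is compared against. The minimal sketch below builds one (input, target) training pair. It assumes a toy `f_augm` made of a small shift, an intensity scaling and additive noise, which is a stand-in for the full augmentation set. Note that `np.roll` wraps pixels around the border, unlike a real translation.

```python
import numpy as np

def f_augm(x: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Toy stand-in for f_augm on an (H, W, C) image with values in [0, 1]."""
    dy, dx = rng.integers(-2, 3, size=2)
    x_aug = np.roll(x, shift=(int(dy), int(dx)), axis=(0, 1))  # small shift (wraps)
    x_aug = x_aug * rng.uniform(0.6, 1.4)                       # intensity scaling
    x_aug = x_aug + rng.normal(0.0, 0.05, size=x.shape)         # DAE-style noise
    return np.clip(x_aug, 0.0, 1.0)

def aae_training_pair(x: np.ndarray, rng: np.random.Generator):
    """(network input, reconstruction target) for one sample, following eq. (3)."""
    return f_augm(x, rng), x   # only the input is augmented; the target stays clean
```

During training, the reconstruction loss of eq. (2) is computed between the network output for the augmented input and the clean target. This pairing is what pushes the encoding to ignore the augmented factors.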

To demonstrate that Hypothesis 1 holds for geometric transformations, we learn latent representations of binary images depicting a 2D square at different scales, in-plane translations and rotations. Our goal is to encode only the in-plane rotations $r\in[0,2\pi]$ in a two-dimensional latent space $z\in\mathcal{R}^{2}$, independent of scale or translation. Fig. 3 depicts the results after training a CNN-based AE architecture similar to the model in Fig. 5. The AEs trained on reconstructing squares at fixed scale and translation (1) or at random scale and translation (2) do not clearly encode rotation alone, but are also sensitive to other latent factors. In contrast, the encoding of the AAE (3) becomes invariant to translation and scale, such that all squares with coinciding orientation are mapped to the same code. Furthermore, the latent representation is much smoother, and the two latent dimensions imitate a shifted sine and cosine function, respectively, with frequency $f=\frac{4}{2\pi}$. The reason is that the square has four-fold rotational symmetry, i.e. after rotating by $\frac{\pi}{2}$ the square appears the same. Representing the orientation by the appearance of an object rather than by a fixed parameterization is valuable for avoiding ambiguities due to symmetries when learning 3D object orientations.
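An idealized version of the learned square code shows why frequency $f=\frac{4}{2\pi}$ removes the symmetry ambiguity. This sketch assumes a perfect code $z(r)=(\cos 4r, \sin 4r)$ and ignores the phase shift and scaling of the codes learned in Fig. 3.

```python
import numpy as np

def ideal_square_code(r: float) -> np.ndarray:
    """Idealized 2D code of a square rotated by r: cos/sin with frequency 4/(2*pi)."""
    return np.array([np.cos(4.0 * r), np.sin(4.0 * r)])

r = 0.3
code_r = ideal_square_code(r)
code_quarter_turn = ideal_square_code(r + np.pi / 2)   # looks identical to r
code_eighth_turn = ideal_square_code(r + np.pi / 4)    # looks different from r
```

Rotations that differ by $\frac{\pi}{2}$ produce the same image and therefore the same code. A fixed angle label $r$ would instead assign them different targets.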

3.3 Learning 3D Orientation from Synthetic Object Views

Our toy problem showed that we can explicitly learn representations of object in-plane rotations using a geometric augmentation technique. Applying the same geometric input augmentations, we can encode the whole SO(3) space of views from a 3D object model (CAD or 3D reconstruction) while being robust against inaccurate object detections. However, the encoder would still be unable to relate image crops from real RGB sensors because (1) the 3D model and the real object differ, (2) simulated and real lighting conditions differ, and (3) the network cannot distinguish the object from background clutter and foreground occlusions. Instead of trying to imitate every detail of specific real sensor recordings in simulation, we propose a Domain Randomization (DR) technique within the AAE framework to make the encodings invariant to insignificant environment and sensor variations. The goal is that the trained encoder treats the differences from real camera images as just another irrelevant variation. Therefore, while keeping the reconstruction targets clean, we randomly apply additional augmentations to the input training views.

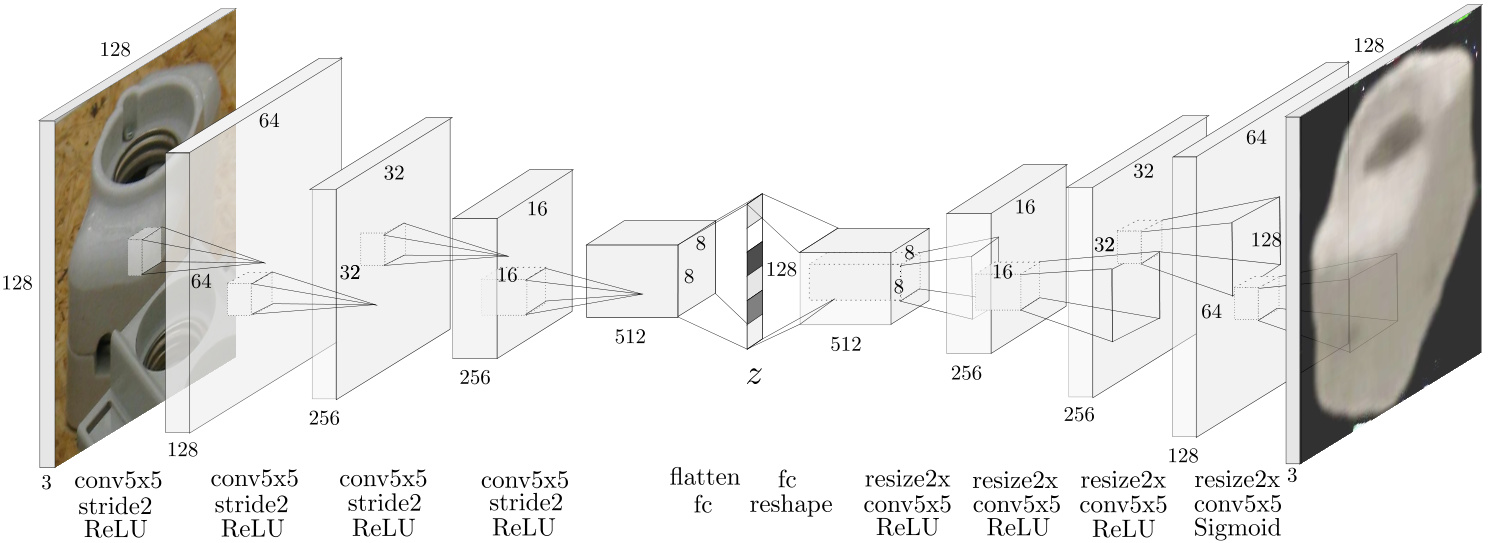

Fig. 5: Autoencoder CNN architecture with occluded test input; "resize2x" denotes nearest-neighbor upsampling

Table 1: Augmentation parameters of the AAE. Scale and translation are relative to the image shape; occlusion is relative to the object mask.

| Color (50% chance, 30% per channel) | Parameter | Light (random position) & geometric | Parameter |
|---|---|---|---|
| add | U(-0.1, 0.1) | ambient | 0.4 |
| contrast | U(0.4, 2.3) | diffuse | U(0.7, 0.9) |
| multiply | U(0.6, 1.4) | specular | U(0.2, 0.4) |
| invert | | scale | U(0.8, 1.2) |
| Gaussian blur | σ ~ U(0.0, 1.2) | translation | U(-0.15, 0.15) |
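The color column of Table 1 can be read as a randomized augmentation pipeline. The sketch below is our interpretation, not the authors' implementation. It assumes that each operation fires independently with probability 0.5, and that with probability 0.3 its parameter is drawn separately for each channel. It also assumes that contrast is applied around mid-gray (0.5). Gaussian blur is omitted to keep the sketch free of a SciPy dependency.

```python
import numpy as np

def _draw(rng: np.random.Generator, low: float, high: float, channels: int):
    """One value shared by all channels or, with 30% chance, one per channel."""
    size = channels if rng.random() < 0.3 else 1
    return rng.uniform(low, high, size=size)

def color_augment(img: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Randomized color augmentation of an (H, W, C) float image in [0, 1].

    Each operation fires independently with probability 0.5 (our reading of
    Table 1); Gaussian blur is left out of this sketch.
    """
    out = img.astype(np.float64)            # work on a copy
    c = out.shape[-1]
    if rng.random() < 0.5:                   # add: U(-0.1, 0.1)
        out = out + _draw(rng, -0.1, 0.1, c)
    if rng.random() < 0.5:                   # contrast: U(0.4, 2.3) around 0.5
        out = (out - 0.5) * _draw(rng, 0.4, 2.3, c) + 0.5
    if rng.random() < 0.5:                   # multiply: U(0.6, 1.4)
        out = out * _draw(rng, 0.6, 1.4, c)
    if rng.random() < 0.5:                   # invert
        out = 1.0 - out
    return np.clip(out, 0.0, 1.0)
```

The drawn values have shape `(1,)` or `(C,)`, so NumPy broadcasting applies them either to all channels at once or channel by channel.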

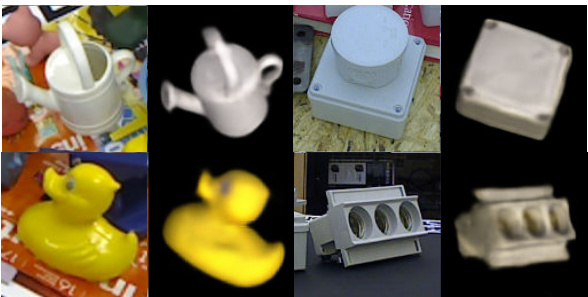

Fig. 6: AAE decoder reconstruction of LineMOD (left) and T-LESS (right) scene crops

3.4 Network Architecture and Training Details

The convolutional Autoencoder architecture used in our experiments is depicted in Fig. 5. We use a bootstrapped pixel-wise L2 loss, first introduced by Wu et al. (2016): only the pixels with the largest reconstruction errors contribute to the loss. As a result, finer details are reconstructed, and the training does not converge to local minima such as reconstructing black images for all views. In our experiments, we choose a bootstrap factor of $k=4$ per image, meaning that $\frac{1}{4}$ of all pixels contribute to the loss. Using OpenGL, we render 20000 views of each object at uniformly random 3D orientations and at a constant distance of 700 mm along the camera axis. The resulting images are cropped to a square using the longer side of the bounding box and resized (nearest neighbor) to $128\times128\times3$, as shown in Fig. 4. All geometric and color input augmentations, except the rendering with random lighting, are applied online during training at uniform random strength; the parameters are listed in Tab. 1. We use the Adam optimizer (Kingma and Ba, 2014) with a learning rate of $2\times10^{-4}$, Xavier initialization (Glorot and Bengio, 2010), a batch size of 64 and 40000 iterations, which takes $\sim4$ hours on a single NVIDIA GeForce GTX 1080.
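The bootstrapped loss keeps only the worst-reconstructed fraction of each image. The minimal NumPy sketch below has two assumptions. First, errors are squared and taken per array element (one value per color channel) after flattening each image. Second, the per-image sums are averaged over the batch. The source does not pin down either detail.

```python
import numpy as np

def bootstrapped_l2(x: np.ndarray, x_hat: np.ndarray, k: int = 4) -> float:
    """Bootstrapped squared-L2 reconstruction loss, averaged over the batch.

    x, x_hat: arrays of shape (batch, H, W, C). For each image, only the
    largest 1/k of the squared errors (per array element, an assumption)
    contribute to the loss.
    """
    err = ((x - x_hat) ** 2).reshape(x.shape[0], -1)   # (batch, H*W*C)
    n_keep = max(1, err.shape[1] // k)                  # e.g. 1/4 of entries for k=4
    # np.partition moves the n_keep largest values into the last n_keep columns
    worst = np.partition(err, -n_keep, axis=1)[:, -n_keep:]
    return float(worst.sum(axis=1).mean())
```

With `k=1` this reduces to the plain summed squared error. Larger `k` concentrates the gradient on the hardest pixels, which is what prevents the trivial "black image" solution.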

In detail, the following augmentations are randomly applied to the input training views: (1) rendering with random light positions and randomized diffuse and specular reflection (simple Phong model (Phong) in OpenGL), (2) inserting random background images from the Pascal VOC dataset (Everingham et al., 2012), (3) varying image contrast, brightness, Gaussian blur and color distortions, and (4) applying occlusions using random object masks or black squares. Fig. 4 depicts an exemplary training process for synthetic views of object 5 from T-LESS (Hodan et al., 2017).

3.5 Codebook Creation and Test Procedure

After training, the AAE is able to extract a 3D object from real scene crops of many different camera sensors (Fig. 6). The clarity and orientation of the decoder reconstruction are an indicator of the encoding quality. To determine 3D object orientations from test scene crops, we create a codebook (Fig. 7, top).
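The codebook pairs each discrete rotation with the code of its rendered view. The sketch below only enumerates the rotations, under stated assumptions. A Fibonacci sphere stands in for the "2562 equidistant viewpoints". (2562 = 10·4⁴ + 2 is also the vertex count of an icosahedron subdivided four times, a common way to obtain such viewpoints.) The look-at convention is our own choice, and the 36 in-plane rotations are assumed to be spaced at 10°. Each rotation would then be rendered and encoded by hypothetical `render` and `encoder` functions, not shown, to obtain the code $z_i$ stored next to it.

```python
import numpy as np

def fibonacci_sphere(n: int) -> np.ndarray:
    """n roughly evenly spaced unit vectors (stand-in for equidistant viewpoints)."""
    i = np.arange(n) + 0.5
    z = 1.0 - 2.0 * i / n
    r = np.sqrt(1.0 - z ** 2)
    phi = i * np.pi * (3.0 - np.sqrt(5.0))          # golden-angle increments
    return np.stack([r * np.cos(phi), r * np.sin(phi), z], axis=1)

def look_at_rotation(view_dir: np.ndarray) -> np.ndarray:
    """Rows are the camera x, y, z axes in object coordinates.

    The camera z-axis points from the viewpoint towards the object at the origin.
    """
    z_axis = -view_dir / np.linalg.norm(view_dir)
    up = np.array([0.0, 0.0, 1.0])
    if abs(np.dot(up, z_axis)) > 0.99:               # avoid a degenerate cross product
        up = np.array([0.0, 1.0, 0.0])
    x_axis = np.cross(up, z_axis)
    x_axis /= np.linalg.norm(x_axis)
    y_axis = np.cross(z_axis, x_axis)                # right-handed: x cross y = z
    return np.stack([x_axis, y_axis, z_axis])

def inplane(theta: float) -> np.ndarray:
    """Rotation by theta about the camera's optical (z) axis."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

def codebook_rotations(n_views: int = 2562, n_inplane: int = 36) -> np.ndarray:
    """All (viewpoint, in-plane) rotations, shape (n_views * n_inplane, 3, 3)."""
    thetas = np.linspace(0.0, 2.0 * np.pi, n_inplane, endpoint=False)
    base = np.array([look_at_rotation(v) for v in fibonacci_sphere(n_views)])
    spins = np.array([inplane(t) for t in thetas])
    # R = spin @ base for every pair; views form the outer index
    rots = np.einsum('kij,vjl->vkil', spins, base)
    return rots.reshape(-1, 3, 3)
```

With the default arguments, this yields 2562 × 36 = 92232 rotations, the codebook size used in the experiments.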

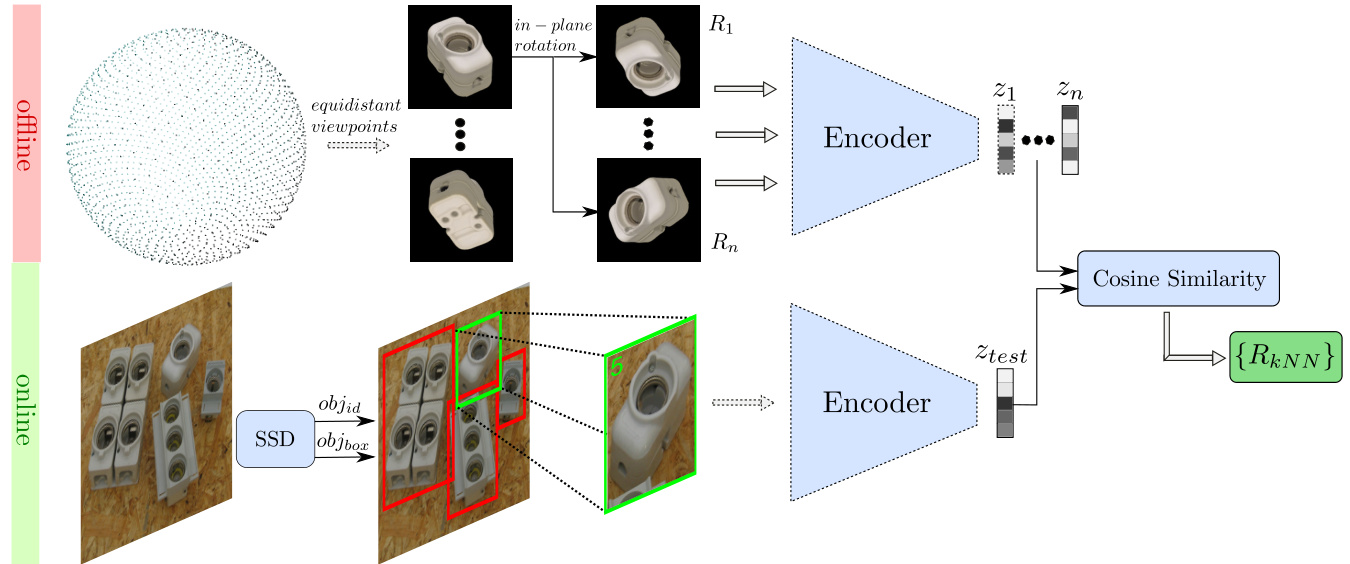

Fig. 7: Top: creating a codebook from the encodings of discrete synthetic object views; bottom: object detection and 3D orientation estimation using the nearest neighbor(s) with highest cosine similarity from the codebook

图 7: 上: 从离散合成物体视图的编码创建码本; 下: 使用码本中余弦相似度最高的最近邻进行物体检测和3D朝向估计

At test time, the considered object(s) are first detected in an RGB scene. The image is cropped to a square using the longer side of the bounding box multiplied by a padding factor of 1.2 and resized to match the encoder input size. The padding accounts for imprecise bounding boxes. After encoding, we compute the cosine similarity between the test code $z_{test}\in\mathbb{R}^{128}$ and all codes $z_{i}\in\mathbb{R}^{128}$ from the codebook:

测试时,首先在 RGB 场景中检测目标物体。图像以边界框长边乘以 1.2 的填充因子进行方形裁剪,并缩放至编码器的输入尺寸。该填充用于补偿边界框的不精确性。编码后,我们计算测试编码 $z_{test}\in\mathbb{R}^{128}$ 与码本中所有编码 $z_{i}\in\mathbb{R}^{128}$ 的余弦相似度:

$$
\cos_{i}=\frac{z_{i}^{\top}z_{test}}{\lVert z_{i}\rVert\,\lVert z_{test}\rVert}
$$

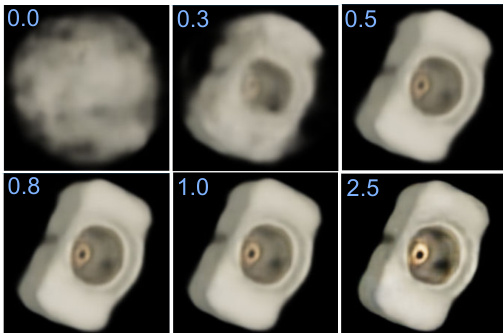

The highest similarities are determined in a k-nearest-neighbor (kNN) search, and the corresponding rotation matrices $\{R_{kNN}\}$ from the codebook are returned as estimates of the 3D object orientation. For the quantitative evaluation we use $k=1$; however, the further nearest neighbors can yield valuable information on ambiguous views and could, for example, be used in particle-filter-based tracking. We use cosine similarity because (1) it can be computed very efficiently on a single GPU, even for large codebooks; in our experiments we have 2562 equidistant viewpoints $\times$ 36 in-plane rotations $=92232$ entries in total. (2) We observed that, presumably due to the circular nature of rotations, scaling a latent test code does not change the object orientation of the decoder reconstruction (Fig. 8).

通过 k 近邻 (kNN) 搜索确定最高相似度,并返回码本中相应的旋转矩阵 $\{R_{kNN}\}$ 作为 3D 物体朝向的估计值。定量评估时我们采用 $k=1$,但其余近邻能为歧义视角提供有价值的信息,例如可用于基于粒子滤波的跟踪。选用余弦相似度的原因是:(1) 即使对于大型码本(实验中为 2562 个等距视点 $\times$ 36 个平面内旋转 $=92232$ 个条目),也能在单块 GPU 上高效计算;(2) 我们观察到,可能由于旋转的循环特性,缩放潜在测试编码不会改变解码器重建的物体朝向(图 8)。
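Below is a minimal NumPy sketch of the codebook lookup. Codes are L2-normalized so that a single matrix-vector product yields all cosine similarities, and the top-k entries give the rotation estimates. Names such as `knn_orientations` and the random placeholder codebook are hypothetical. The usage example scales a stored code by 3.7 and still retrieves the same entry. This mirrors observation (2) above: cosine similarity ignores the norm of the test code.

```python
import numpy as np


def normalize_rows(z: np.ndarray) -> np.ndarray:
    """Scale each row (or a single vector) to unit L2 norm."""
    return z / np.linalg.norm(z, axis=-1, keepdims=True)


def knn_orientations(z_test, codebook_z, codebook_R, k=1):
    """Return the k codebook rotations whose codes are most cosine-similar to z_test.

    z_test: (D,) latent code of the test crop.
    codebook_z: (N, D) latent codes of the synthetic views.
    codebook_R: (N, 3, 3) rotation matrices of those views.
    """
    # In practice the normalized codebook would be computed once and cached.
    cos = normalize_rows(codebook_z) @ normalize_rows(z_test)  # (N,) similarities
    idx = np.argsort(-cos)[:k]  # indices of the k most similar codes
    return codebook_R[idx], cos[idx], idx


rng = np.random.default_rng(0)
codebook_z = rng.normal(size=(1000, 128)).astype(np.float32)
codebook_R = np.tile(np.eye(3), (1000, 1, 1))  # placeholder rotations
R_est, sims, idx = knn_orientations(3.7 * codebook_z[42], codebook_z, codebook_R, k=5)
print(idx[0], round(float(sims[0]), 4))  # 42 1.0
```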

Fig. 8: AAE decoder reconstruction of a test code $z_{test}\in\mathbb{R}^{128}$ scaled by a factor $s\in[0,2.5]$

图 8: 测试编码 $z_{test}\in\mathbb{R}^{128}$ 经因子 $s\in[0,2.5]$ 缩放后的 AAE (Augmented Autoencoder) 解码器重建结果

Table 2: Augmentation parameters for the object detectors; the top five are applied in random order, the bottom part describes Phong lighting from random light positions

表 2: 目标检测器的增强参数,前五项按随机顺序应用;底部描述随机光源位置的冯氏光照

| Augmentation | Probability (per channel) | SIXD train | Rendered 3D models |
|---|---|---|---|
| Add | 0.5 (0.15) | U(-0.08, 0.08) | U(-0.1, 0.1) |
| Contrast normalization | 0.5 (0.15) | U(0.5, 2.2) | U(0.5, 2.2) |
| Multiply | 0.5 (0.25) | U(0.6, 1.4) | U(0.5, 1.5) |
| Gaussian blur | 0.2 | σ ~ U(0.5, 1.0) | σ = 0.4 |
| Gaussian noise | 0.1 (0.1) | σ = 0.04 | |
| Ambient | 1.0 | | 0.4 |
| Diffuse | 1.0 | | U(0.7, 0.9) |
| Specular | 1.0 | | U(0.2, 0.4) |

3.6 Extending to 6D Object Detection

3.6 扩展到6D物体检测

3.6.1 Training the 2D Object Detector.

3.6.1 训练2D物体检测器

We fine-tune the 2D object detectors using the object views on black background that are provided in the training datasets of LineMOD and T-LESS. In LineMOD, we additionally render domain-randomized views of the provided 3D models and freeze the backbone as in Hinterstoisser et al. (2017). Multiple object views are sequentially copied into an empty scene at random translation, scale and in-plane rotation, and the bounding box annotations are adapted accordingly. If an object view is more than 40% occluded, we re-sample it. Then, as for the AAE, the black background is replaced with Pascal VOC images. The randomization schemes and parameters can be found in Table 2.

In T-LESS, we train SSD (Liu et al., 2016) with a VGG16 backbone and RetinaNet (Lin et al., 2018) with a ResNet50 backbone, which is slower but more accurate; on LineMOD we only train RetinaNet. For T-LESS we generate 60000 training samples from the provided training dataset, and for LineMOD we generate 60000 samples from the training dataset plus 60000 samples from 3D model renderings with randomized lighting conditions (see Table 2). RetinaNet achieves 0.73 mAP@0.5 IoU on T-LESS and 0.62 mAP@0.5 IoU on LineMOD.

On Occluded LineMOD, the detectors trained on the simplistic renderings failed to achieve good detection performance. However, recent work by Hodan et al. (2019) quantitatively investigated the training of 2D detectors on synthetic data and reached decent detection performance on Occluded LineMOD by fine-tuning Faster R-CNN on photo-realistic synthetic images, showing the feasibility of a purely synthetic pipeline.

我们使用LineMOD和T-LESS训练数据集中提供的黑色背景物体视图对2D目标检测器进行微调。在LineMOD中,我们还额外渲染了所提供3D模型的域随机化视图,并像Hinterstoisser等人(2017)那样冻结骨干网络。多个物体视图以随机平移、缩放和平面内旋转的方式依次复制到空场景中,边界框标注也相应调整。若物体视图被遮挡超过40%,则重新采样。随后与AAE方法类似,将黑色背景替换为Pascal VOC图像。随机化方案和参数详见表2。

在T-LESS数据集上,我们训练了基于VGG16骨干网络的SSD(Liu等人,2016)和基于ResNet50骨干网络(速度较慢但精度更高)的RetinaNet(Lin等人,2018),而在LineMOD上仅训练RetinaNet。对于T-LESS,我们从提供的训练数据集中生成60000个训练样本;对于LineMOD,则从训练数据集生成60000个样本,外加60000个采用随机光照条件渲染的3D模型样本(见表2)。RetinaNet在T-LESS上达到0.73mAP@0.5IoU,在LineMOD上达到0.62mAP@0.5IoU。

在 Occluded LineMOD 数据集上,基于简单渲染训练的检测器未能取得良好性能。但 Hodan 等人 (2019) 的最新研究定量分析了在合成数据上训练 2D 检测器的方法,他们通过在逼真的合成图像上微调 Faster R-CNN,在 Occluded LineMOD 上取得了不错的检测性能,证明了纯合成流程的可行性。
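The copy-paste procedure for generating detector training scenes can be sketched in NumPy as below. This is an illustrative reading of the text, not the authors' code. `compose_scene` is hypothetical, and random scale and in-plane rotation are omitted for brevity. The 40% rule is interpreted here as rejecting any placement that would hide more than 40% of an already pasted object. Boxes cover the full pasted view, so they stay amodal under occlusion. The optional `background` argument stands in for the Pascal VOC image.

```python
import numpy as np


def compose_scene(views, masks, scene_hw=(480, 640), background=None,
                  max_occlusion=0.4, max_tries=20, rng=None):
    """Paste object views at random positions and return (image, boxes).

    views: list of (h, w, 3) uint8 object images on black background.
    masks: list of (h, w) boolean foreground masks of those views.
    A placement is re-sampled if it would hide more than `max_occlusion` of any
    previously pasted object; views without a valid placement are skipped.
    boxes: amodal (x_min, y_min, x_max, y_max) boxes of the full object views.
    """
    rng = np.random.default_rng() if rng is None else rng
    H, W = scene_hw
    image = np.zeros((H, W, 3), dtype=np.uint8)
    labels = np.full((H, W), -1, dtype=int)  # index of the visible object per pixel
    areas, boxes = [], []
    for view, mask in zip(views, masks):
        h, w = mask.shape
        for _ in range(max_tries):
            y0 = int(rng.integers(0, H - h + 1))
            x0 = int(rng.integers(0, W - w + 1))
            trial = labels.copy()
            trial[y0:y0 + h, x0:x0 + w][mask] = len(boxes)
            visible = [np.count_nonzero(trial == j) / areas[j] for j in range(len(boxes))]
            if all(v >= 1.0 - max_occlusion for v in visible):
                break
        else:
            continue  # no acceptable placement found for this view
        labels = trial
        image[y0:y0 + h, x0:x0 + w][mask] = view[mask]
        ys, xs = np.nonzero(mask)
        boxes.append((int(x0 + xs.min()), int(y0 + ys.min()),
                      int(x0 + xs.max()) + 1, int(y0 + ys.max()) + 1))
        areas.append(np.count_nonzero(mask))
    if background is not None:  # e.g. a background image resized to (H, W, 3)
        empty = labels < 0
        image[empty] = background[empty]
    return image, boxes
```

In a scene exactly as large as one view, a second identical view would hide the first completely, so it is skipped and only one box is returned.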

3.6.2 Projective Distance Estimation

3.6.2 投影距离估计

We estimate the full 3D translation $t_{real}$ from the camera to the object center, similar to Kehl et al. (2017). To this end, we save the 2D bounding box for each synthetic object view in the codebook and compute its diagonal length $\lVert bb_{syn,i}\rVert$. At test time, we compute the ratio between the detected bounding box diagonal $\lVert bb_{real}\rVert$ and the diagonal $\lVert bb_{syn,\mathrm{argmax}(\cos_{i})}\rVert$ of the matched codebook entry, i.e. of a view at similar orientation. The pinhole camera model yields the distance estimate $\hat{t}_{real,z}$

我们估算从相机到物体中心的完整 3D 平移 $t_{real}$,类似于 Kehl 等人 (2017) 的方法。为此,我们在码本中保存每个合成物体视图的 2D 边界框,并计算其对角线长度 $\lVert bb_{syn,i}\rVert$。测试时,我们计算检测到的边界框对角线 $\lVert bb_{real}\rVert$ 与匹配码本条目(即朝向相近的视图)的对角线 $\lVert bb_{syn,\mathrm{argmax}(\cos_{i})}\rVert$ 之比。根据针孔相机模型,可得距离估计值 $\hat{t}_{real,z}$:

$$
\hat{t}_{real,z}=t_{syn,z}\times\frac{\lVert bb_{syn,\mathrm{argmax}(\cos_{i})}\rVert}{\lVert bb_{real}\rVert}\times\frac{f_{real}}{f_{syn}}
$$

with the synthetic rendering distance $t_{syn,z}$ and the focal lengths $f_{real}$, $f_{syn}$ of the real sensor and the synthetic views. It follows that

其中 $t_{syn,z}$ 为合成渲染距离,$f_{real}$、$f_{syn}$ 分别为真实传感器和合成视图的焦距。由此可得:

$$
\begin{aligned}
\Delta\hat{t} &= \hat{t}_{real,z}\,K_{real}^{-1}\,bb_{real,c}-t_{syn,z}\,K_{syn}^{-1}\,bb_{syn,c}\\
\hat{t}_{real} &= t_{syn}+\Delta\hat{t}
\end{aligned}
$$

where $\Delta\hat{t}$ is the estimated vector from the synthetic to the real object center, $K_{real}, K_{syn}$ are the camera matrices, $bb_{real,c}, bb_{syn,c}$ are the bounding box centers in homogeneous coordinates, and $\hat{t}_{real}$ and $t_{syn}=(0,0,t_{syn,z})^{\top}$ are the translation vectors from the camera to the object centers. In contrast to Kehl et al. (2017), we can predict the 3D translation for different test intrinsics.

其中 $\Delta\hat{t}$ 是从合成物体中心到真实物体中心的估计向量,$K_{real},K_{syn}$ 是相机矩阵,$bb_{real,c},bb_{syn,c}$ 是齐次坐标下的边界框中心,$\hat{t}_{real}$ 与 $t_{syn}=(0,0,t_{syn,z})^{\top}$ 是从相机到物体中心的平移向量。与 Kehl 等人 (2017) 的方法不同,我们能够针对不同的测试相机内参预测 3D 平移。
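The two steps (scale ratio of the box diagonals, then back-projection of the box centers) combine into a short function. The sketch below assumes boxes in `(x_min, y_min, x_max, y_max)` pixel format and square pixels, so that `K[0, 0]` can serve as the focal length. `estimate_translation` is a hypothetical name. The example places an object at a known position and checks that the translation is recovered.

```python
import numpy as np


def estimate_translation(bb_real, bb_syn, K_real, K_syn, t_syn_z):
    """Estimate the camera-to-object translation from a detected 2D box.

    bb_real: detected box (x_min, y_min, x_max, y_max) in the test image.
    bb_syn:  box stored with the matched codebook view, same format.
    K_real, K_syn: 3x3 camera matrices of the test sensor and the renderer.
    t_syn_z: rendering distance of the codebook views (e.g. 700 mm).
    """
    bb_real = np.asarray(bb_real, dtype=float)
    bb_syn = np.asarray(bb_syn, dtype=float)
    diag_real = np.linalg.norm(bb_real[2:] - bb_real[:2])
    diag_syn = np.linalg.norm(bb_syn[2:] - bb_syn[:2])
    f_real, f_syn = K_real[0, 0], K_syn[0, 0]  # assumes f_x == f_y
    # Pinhole model: the box diagonal scales with f / z.
    t_real_z = t_syn_z * diag_syn / diag_real * f_real / f_syn
    # Box centers in homogeneous pixel coordinates.
    c_real = np.append((bb_real[:2] + bb_real[2:]) / 2.0, 1.0)
    c_syn = np.append((bb_syn[:2] + bb_syn[2:]) / 2.0, 1.0)
    # Back-project both centers and take the difference (Delta t).
    delta_t = t_real_z * np.linalg.solve(K_real, c_real) - t_syn_z * np.linalg.solve(K_syn, c_syn)
    t_syn = np.array([0.0, 0.0, t_syn_z])
    return t_syn + delta_t


K = np.array([[500.0, 0.0, 320.0], [0.0, 500.0, 240.0], [0.0, 0.0, 1.0]])
bb_syn = (270, 190, 370, 290)  # 100 px box centered on the principal point at 700 mm
x, y, z = 50.0, -30.0, 1400.0  # true object position (mm)
u, v = 500.0 * x / z + 320.0, 500.0 * y / z + 240.0
bb_real = (u - 25.0, v - 25.0, u + 25.0, v + 25.0)  # box shrinks by 700 / 1400
print(estimate_translation(bb_real, bb_syn, K, K, 700.0))  # approximately [50, -30, 1400]
```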

3.6.3 Perspective Correction

3.6.3 透视校正

While the codebook is created by encoding centered object views, the test image crops typically do not originate from the image center. Naturally, the appearance of the object view changes when the object is translated in the image plane at constant object orientation. This causes a noticeable error in the rotation estimate from the codebook, which grows towards the image borders. However, this error can be corrected by determining the object rotation that approximately preserves the appearance of the object when translating it to our estimate $\hat{t}_{real}$.

虽然码本是通过编码居中的物体视图创建的,但测试图像裁剪通常并非来自图像中心。在物体朝向不变的情况下于图像平面内平移物体,其视图外观自然会发生变化。这会使基于码本的旋转估计产生明显误差,且越靠近图像边缘误差越大。不过,可以通过确定一个旋转来校正该误差:该旋转能使物体平移至我们估计的 $\hat{t}_{real}$ 时大致保持外观不变。

$$
\begin{pmatrix}\alpha_{x}\\ \alpha_{y}\end{pmatrix}=\begin{pmatrix}-\arctan\left(\hat{t}_{real,y}/\hat{t}_{real,z}\right)\\ \arctan\left(\hat{t}_{real,x}\Big/\sqrt{\hat{t}_{real,z}^{2}+\hat{t}_{real,y}^{2}}\right)\end{pmatrix}
$$

$$
\hat{R}_{obj2cam}=R_{y}(\alpha_{y})\,R_{x}(\alpha_{x})\,\hat{R}_{obj2cam}^{\prime}
$$

where $\alpha_{x},\alpha_{y}$ describe the angles around the camera axes and $R_{y}(\alpha_{y})R_{x}(\alpha_{x})$ the corresponding rotation matrices that correct the initial rotation estimate $\hat{R}_{obj2cam}^{\prime}$ from object to camera. The perspective corrections give a notable boost in accuracy, as reported in Table 7. If strong perspective distortions are expected at test time, the training images $x^{\prime}$ could also be recorded at random distances instead of a constant distance. However, in the benchmarks perspective distortions are minimal, and consequently random online image-plane scaling of $x^{\prime}$ is sufficient.

其中 $\alpha_{x},\alpha_{y}$ 为绕相机坐标轴的旋转角,$R_{y}(\alpha_{y})R_{x}(\alpha_{x})$ 为相应的旋转矩阵,用于校正从物体到相机的初始旋转估计 $\hat{R}_{obj2cam}^{\prime}$。如表 7 所示,透视校正显著提升了精度。若测试时预期存在强烈的透视畸变,训练图像 $x^{\prime}$ 也可在随机距离而非固定距离下采集。但在基准测试中透视畸变极小,因此对 $x^{\prime}$ 进行随机在线图像平面缩放即可满足需求。
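The correction equations translate directly into code. The sketch below assumes the standard right-handed rotation matrices for $R_x$ and $R_y$ and a translation estimate with positive $z$. `correct_perspective` is a hypothetical helper, not the authors' implementation. It simply applies $R_{y}(\alpha_{y})R_{x}(\alpha_{x})$ to the codebook rotation.

```python
import numpy as np


def rot_x(a: float) -> np.ndarray:
    """Right-handed rotation about the camera x-axis."""
    c, s = np.cos(a), np.sin(a)
    return np.array([[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]])


def rot_y(a: float) -> np.ndarray:
    """Right-handed rotation about the camera y-axis."""
    c, s = np.cos(a), np.sin(a)
    return np.array([[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]])


def correct_perspective(R_init: np.ndarray, t_real) -> np.ndarray:
    """Return R_y(alpha_y) R_x(alpha_x) R'_obj2cam for the estimated translation t_real."""
    tx, ty, tz = t_real
    alpha_x = -np.arctan(ty / tz)
    alpha_y = np.arctan(tx / np.sqrt(tz ** 2 + ty ** 2))
    return rot_y(alpha_y) @ rot_x(alpha_x) @ R_init


R = correct_perspective(np.eye(3), [200.0, -150.0, 900.0])
print(np.round(R, 3))
```

For an object on the optical axis both angles vanish and the codebook rotation is returned unchanged. For an object shifted only along $x$, the correction reduces to a rotation about the $y$-axis by $\arctan(\hat{t}_{real,x}/\hat{t}_{real,z})$.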

3.6.4 ICP Refinement

3.6.4 ICP (Iterative Closest Point) 精配准

Optionally, the estimate is refined on depth data using a point-to-plane ICP approach with adaptive thresholding of correspondences based on Chen and Medioni (1992) and Zhang (1994), taking $\sim320$ ms on average. The refinement is first applied along the vector pointing from the camera to the object, the direction in which most RGB-based pose estimation errors occur, and then on the full 6D pose.

可选地,该估计值会在深度数据上通过点对面 (point-to-plane) ICP 方法进行优化,并基于 Chen 和 Medioni (1992) 与 Zhang (1994) 的方法对对应关系进行自适应阈值筛选,平均耗时约 320 ms。优化过程首先沿相机指向物体的向量方向进行(该方向是基于 RGB 的姿态估计误差的主要来源),随后再应用于完整的 6D 姿态。
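Point-to-plane ICP repeatedly linearizes the rotation and solves a small least-squares problem. The sketch below shows a single update for fixed correspondences. It also includes a variant restricted to translation along one direction, which mirrors the first refinement stage described above (for example along $\hat{t}_{real}$). Correspondence search, the adaptive thresholding of Zhang (1994) and the iteration loop are omitted. All function names are hypothetical.

```python
import numpy as np


def _rodrigues(w: np.ndarray) -> np.ndarray:
    """Rotation matrix for the rotation vector w (axis times angle)."""
    theta = np.linalg.norm(w)
    if theta < 1e-12:
        return np.eye(3)
    k = w / theta
    K = np.array([[0.0, -k[2], k[1]], [k[2], 0.0, -k[0]], [-k[1], k[0], 0.0]])
    return np.eye(3) + np.sin(theta) * K + (1.0 - np.cos(theta)) * (K @ K)


def point_to_plane_step(src, dst, normals):
    """One linearized point-to-plane update for fixed correspondences.

    Minimizes sum_i ((R @ src_i + t - dst_i) . n_i)^2 using R ~ I + [w]_x.
    src, dst, normals: (N, 3) arrays; normals are the surface normals at dst.
    """
    A = np.hstack([np.cross(src, normals), normals])  # rows [p_i x n_i, n_i]
    b = np.einsum("ij,ij->i", dst - src, normals)     # (q_i - p_i) . n_i
    x, *_ = np.linalg.lstsq(A, b, rcond=None)
    return _rodrigues(x[:3]), x[3:]


def translation_along(src, dst, normals, direction):
    """Point-to-plane least squares restricted to a translation along `direction`."""
    d = np.asarray(direction, dtype=float)
    d = d / np.linalg.norm(d)
    a = normals @ d                                   # sensitivity of each residual to d
    b = np.einsum("ij,ij->i", dst - src, normals)
    return (a @ b) / (a @ a) * d


rng = np.random.default_rng(1)
src = rng.normal(size=(200, 3))
normals = rng.normal(size=(200, 3))
normals /= np.linalg.norm(normals, axis=1, keepdims=True)
t_true = np.array([0.0, 0.0, 0.05])  # pure offset along the viewing direction
dst = src + t_true
print(translation_along(src, dst, normals, direction=[0.0, 0.0, 1.0]))  # ~[0, 0, 0.05]
```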

Table 3: Inference time of the RGB pipeline using SSD on CPUs or GPU (~24 ms in total)

表 3: 使用 SSD 的 RGB 流程在 CPU 或 GPU 上的推理时间(总计约 24 ms)

| Step | 4 CPUs | GPU |
|---|---|---|
| SSD | | ~17 ms |
| Encoder | ~100 ms | ~5 ms |
| Cosine similarity | 2.5 ms | 1.3 ms |
| Nearest neighbor | 0.3 ms | 3.2 ms |
| Projective distance | 0.4 ms | |

Table 4: Single object pose estimation runtime w/o refinement

表 4: 单目标姿态估计运行时间 (无优化)

| Method | fps |
|---|---|
| Vidal et al. (2018) | 0.2 |
| Brachmann et al. (2016) | 2 |
| Kehl et al. (2016) | 2 |
| Rad and Lepetit (2017) | 4 |
| Kehl et al. (2017) | 12 |
| OURS | 13 (RetinaNet), 42 (SSD) |
| Tekin et al. (2017) | 50 |

3.6.5 Inference Time

3.6.5 推理时间

The Single Shot MultiBox Detector (SSD) with a VGG16 base and 31 classes plus the AAE (Fig. 5) with a codebook size of $92232\times128$ yield the average inference times depicted in Table 3. We conclude that the RGB-based pipeline is real-time capable at ${\sim}42\,\mathrm{Hz}$ on an Nvidia GTX 1080. This enables augmented reality and robotic applications and leaves room for tracking algorithms. Multiple encoders (15 MB) and corresponding codebooks (45 MB each) fit into the GPU memory, making multi-object pose estimation feasible.

以 VGG16 为基础网络、支持 31 个类别的单次多框检测器 (SSD) 与码本尺寸为 $92232\times128$ 的增强型自编码器 (AAE)(图 5)的平均推理时间如表 3 所示。实验表明,基于 RGB 的流程在 Nvidia GTX 1080 显卡上可实现 ${\sim}42\,\mathrm{Hz}$ 的实时处理,这为增强现实和机器人应用提供了可能,并为跟踪算法预留了计算余量。多个编码器(15 MB)及对应码本(每个 45 MB)可同时载入 GPU 显存,使得多目标姿态估计成为可能。
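The ~24 ms total and the ~42 Hz figure follow from Table 3 if each step runs on whichever device is faster. That is our reading of the table, not something the text states. The snippet below redoes the arithmetic. It also checks that a $92232\times128$ codebook stored as 32-bit floats (an assumption) occupies about 45 MiB, in line with the 45 MB per codebook quoted in the text.

```python
# Per-step inference times from Table 3 in ms as (4 CPUs, GPU); None = not reported.
timings_ms = {
    "SSD": (None, 17.0),
    "Encoder": (100.0, 5.0),
    "Cosine similarity": (2.5, 1.3),
    "Nearest neighbor": (0.3, 3.2),
    "Projective distance": (0.4, None),
}

# Assumption: each step runs on the faster of the two devices.
total_ms = sum(min(t for t in pair if t is not None) for pair in timings_ms.values())
print(f"{total_ms:.1f} ms -> {1000.0 / total_ms:.1f} Hz")  # 24.0 ms -> 41.7 Hz

# Codebook memory: entries x 128 latent dimensions, assuming float32 storage.
n_entries = 2562 * 36  # equidistant viewpoints x in-plane rotations
codebook_bytes = n_entries * 128 * 4
print(n_entries, f"{codebook_bytes / 2**20:.1f} MiB")  # 92232 45.0 MiB
```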

4 Evaluation

4 评估

We evaluate the AAE and the whole 6D detection pipeline on the T-LESS (Hodan et al., 2017) and LineMOD (Hinterstoisser et al., 2011) datasets.

我们在 T-LESS (Hodan et al., 2017) 和 LineMOD (Hinterstoisser et al., 2011) 数据集上评估了 AAE 和整个 6D 检测流程。

4.1 Test Conditions

4.1 测试条件

Only a few RGB-based pose estimation approaches (e.g. Kehl et al. (2017); Ulrich et al. (2009)) rely solely on 3D model information. Most methods, such as Wohlhart and Lepetit (2015); Balntas et al. (2017); Brachmann et al. (2016), make use of real pose-annotated data and often even train and test on the same scenes (e.g. at slightly different viewpoints, as in the official LineMOD benchmark). It is common practice to ignore in-plane rotations or to only consider object poses that appear in the dataset (Rad and Lepetit, 2017; Wohlhart and Lepetit, 2015), which also limits applicability. Symmetric object views are often treated individually (Rad and Lepetit, 2017; Balntas et al., 2017) or ignored (Wohlhart and Lepetit, 2015). The SIXD challenge (Hodan, 2017) is an attempt to make fair comparisons between 6D localization algorithms by prohibiting the use of test scene pixels. We follow these strict evaluation guidelines, but treat the harder problem of 6D detection, where it is unknown which of the considered objects are present in the scene. This is especially difficult in the T-LESS dataset since the objects are very similar. We train the AAEs on the reconstructed 3D models, except for objects 19-23, where we train on the CAD models because the pins are missing in the reconstructed plugs.

基于RGB的姿态估计方法中,仅有少数(如Kehl等人(2017)、Ulrich等人(2009))仅依赖3D模型信息。大多数方法(如Wohlhart和Lepetit(2015)、Balntas等人(2017)、Brachmann等人(2016))会利用真实标注的姿态数据,甚至常在相同场景下进行训练和测试(例如LineMOD官方基准中稍有不同的视角)。常见做法是忽略平面内旋转,或仅考虑数据集中出现的物体姿态(Rad和Lepetit(2017)、Wohlhart和Lepetit(2015)),这也限制了方法的适用性。对称物体视角通常被单独处理(Rad和Lepetit(2017)、Balntas等人(2017))或忽略(Wohlhart和Lepetit(2015))。SIXD挑战赛(Hodan(2017))试图通过禁止使用测试场景像素,实现对6D定位算法的公平比较。我们遵循这些严格的评估准则,但处理更困难的6D检测问题——即场景中存在哪些待检测物体是未知的。这在T-LESS数据集中尤为困难,因为物体极其相似。我们基于重建的3D模型训练AAE,但19-23号物体例外(因其重建插头缺失插针而改用CAD模型训练)。

We noticed that the geometry of some 3D reconstructions in T-LESS is slightly inaccurate, which degrades the RGB-based distance estimation (Sec. 3.6.2) because the synthetic bounding box diagonals are wrong. Therefore, in a second training run we train only on the 30 CAD models.

我们注意到,T-LESS中的部分3D重建几何结构存在轻微误差,这会严重影响基于RGB的距离估计(见第3.6.2节),因为合成边界框对角线数据不准确。因此,在第二轮训练中,我们仅使用30个CAD模型进行训练。

4.2 Metrics

4.2 指标

Hodan et al. (2016) introduced the Visible Surface Discrepancy ($err_{vsd}$), an ambiguity-invariant pose error function that is determined by the distance between the estimated and ground truth visible object depth surfaces. As in the SIXD challenge, we report the recall of correct 6D object poses at $err_{vsd}<0.3$ with tolerance $\tau=20\,\mathrm{mm}$ and $>10\%$ object visibility. Although the Average Distance of Model Points (ADD) metric introduced by Hinterstoisser et al. (2012b) cannot handle pose ambiguities, we also report it for the LineMOD dataset, following the official protocol in Hinterstoisser et al. (2012b). For objects with symmetric views (eggbox, glue), Hinterstoisser et al. (2012b) adapt the metric by calculating the average distance to the closest model point. Manhardt et al. (2018) noticed inaccurate intrinsics and registration errors between the RGB and depth sensors in the LineMOD dataset. Thus, purely synthetic RGB-based approaches suffer from false pose rejections even when their estimates are visually correct. The focus of our experiments lies on the T-LESS dataset.

Hodan 等人 (2016) 提出了可见表面差异 ($err_{vsd}$),这是一种对位姿歧义不变的位姿误差函数,由估计与真实可见物体深度表面之间的距离决定。按照 SIXD 挑战赛的标准,我们报告在 $err_{vsd}<0.3$(容差 $\tau=20\,\mathrm{mm}$,物体可见度 $>10\%$)条件下正确 6D 物体位姿的召回率。虽然 Hinterstoisser 等人 (2012b) 提出的模型点平均距离 (ADD) 指标无法处理位姿歧义,但我们仍依据 Hinterstoisser 等人 (2012b) 的官方协议在 LineMOD 数据集上报告该指标。对于具有对称视图的物体(蛋盒、胶水瓶),Hinterstoisser 等人 (2012b) 通过计算到最近模型点的平均距离来调整该指标。Manhardt 等人 (2018) 发现 LineMOD 数据集中存在相机内参不准确以及 RGB 与深度传感器之间的配准误差,因此纯合成的基于 RGB 的方法即使在视觉上正确,也会出现位姿被误判为错误的情况。我们的实验重点在于 T-LESS 数据集。
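The ADD metric and its symmetric variant (closest model point) can be written in a few lines of NumPy. The acceptance threshold of 10% of the object diameter (`km=0.1`) is the value commonly used with this protocol. It is stated here as an assumption, since the text does not give it. The brute-force pairwise distance in `adds_error` is only practical for small point sets.

```python
import numpy as np


def add_error(pts, R_gt, t_gt, R_est, t_est):
    """ADD: mean distance between corresponding transformed model points."""
    p_gt = pts @ R_gt.T + t_gt
    p_est = pts @ R_est.T + t_est
    return float(np.mean(np.linalg.norm(p_gt - p_est, axis=1)))


def adds_error(pts, R_gt, t_gt, R_est, t_est):
    """Symmetric variant: mean distance to the closest transformed model point."""
    p_gt = pts @ R_gt.T + t_gt
    p_est = pts @ R_est.T + t_est
    d = np.linalg.norm(p_gt[:, None, :] - p_est[None, :, :], axis=2)  # (N, N) brute force
    return float(np.mean(d.min(axis=1)))


def is_correct(err, diameter, km=0.1):
    """Accept a pose if its error is below km times the object diameter (assumed km)."""
    return err < km * diameter


# A square is symmetric under 90-degree rotations about z.
square = np.array([[1, 1, 0], [-1, 1, 0], [-1, -1, 0], [1, -1, 0]], dtype=float)
Rz90 = np.array([[0.0, -1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 1.0]])
I, t0 = np.eye(3), np.zeros(3)
print(add_error(square, I, t0, Rz90, t0), adds_error(square, I, t0, Rz90, t0))  # 2.0 0.0
```

The example shows why the symmetric variant is needed: a rotation that is invisible for a symmetric object is penalized by ADD but not by the closest-point version.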

In our ablation studies we also report the $AUC_{vsd}$, which represents the area under the '$err_{vsd}$ vs. recall' curve:

在消融实验中,我们还报告了 $AUC_{vsd}$,它表示 '$err_{vsd}$ vs. recall' 曲线下的面积:

$$
AUC_{vsd}=\int_{0}^{1}\mathrm{recall}(err_{vsd})\,d\,err_{vsd}
$$
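Recall at threshold $e$ is the fraction of instances with $err_{vsd} < e$. The integral therefore has a simple closed form: each instance contributes the length of the interval $[\min(err_{vsd},1), 1]$, so $AUC_{vsd} = \frac{1}{N}\sum_{j}\bigl(1-\min(err_{vsd,j},1)\bigr)$. The sketch below computes both this value and a trapezoidal approximation over a threshold grid. Encoding missed detections as error 1.0 is an assumption of the sketch.

```python
import numpy as np


def auc_vsd(errors, n_steps=1001):
    """Area under the recall-vs-threshold curve for VSD thresholds in [0, 1].

    errors: per-instance VSD errors (missed detections encoded as 1.0).
    Returns (trapezoidal approximation, exact closed-form value).
    """
    errors = np.asarray(errors, dtype=float)
    thresholds = np.linspace(0.0, 1.0, n_steps)
    recall = (errors[None, :] < thresholds[:, None]).mean(axis=1)  # recall per threshold
    # Trapezoidal rule over the threshold grid.
    numeric = np.sum(0.5 * (recall[1:] + recall[:-1]) * np.diff(thresholds))
    # Exact value: each instance is recalled for all thresholds in (err, 1].
    closed_form = np.mean(1.0 - np.clip(errors, 0.0, 1.0))
    return float(numeric), float(closed_form)


approx, exact = auc_vsd([0.0, 0.5, 1.0, 2.0])
print(round(approx, 3), exact)  # 0.375 0.375
```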

Fig. 9: Testing object 5 on all 504 Kinect RGB views of scene 2 in T-LESS

图 9: 在T-LESS场景2的所有504个Kinect RGB视图上测试物体5

4.3 Ablation Studies

4.3 消融实验

To assess the AAE alone, in this subsection we only predict the 3D orientation of object 5 from the T-LESS dataset on Primesense and Kinect RGB scene crops. Table 5 shows the influence of different input augmentations. The effects of the different color augmentations are cumulative. For textureless objects, even the inversion of color channels seems to be beneficial, since it prevents overfitting to synthetic color information. Furthermore, training with the real object recordings provided in T-LESS, combined with random Pascal VOC backgrounds and augmentations, yields only slightly better performance than training with synthetic data. Fig. 9a depicts the effect of different latent space sizes on the 3D pose estimation accuracy. Performance starts to saturate at $dim=64$.

为了单独评估 AAE,在本小节中我们仅在 T-LESS 数据集的 Primesense 和 Kinect RGB 场景裁剪图像上预测物体 5 的三维朝向。表 5 展示了不同输入增强方式的影响。可以看出,不同颜色增强的效果是累积的。对于无纹理物体,甚至反转颜色通道似乎也有益处,因为它能防止对合成颜色信息的过拟合。此外,使用 T-LESS 提供的真实物体记录配合随机 Pascal VOC 背景及增强进行训练,其性能仅略优于使用合成数据的训练。图 9a 描绘了不同潜在空间维度对三维姿态估计精度的影响。性能在 $dim=64$ 时开始趋于饱和。

4.4 Discussion of 6D Object Detection results

4.4 6D物体检测结果讨论

Our RGB-only 6D object detection pipeline consists of 2D detection, 3D orientation estimation, projective distance estimation and perspective error correction. Although the results are visually appealing, reaching the performance of state-of-the-art depth-based methods also requires refining our estimates with a depth-based ICP. Table 6 presents our 6D detection evaluation on all scenes of the T-LESS dataset, which contains a large number of pose ambiguities. Our pipeline outperforms all 15 reported T-LESS results on the 2018 BOP benchmark from Hodan et al. (2018) in a fraction of the runtime. Table 6 shows an extract of competing methods. Our RGB-only results can compete with the RGB-D learning-based approaches of Brachmann et al. (2016) and Kehl et al. (2016). The previous state-of-the-art approaches of Vidal et al. (2018) and Drost et al. (2010) perform a time-consuming refinement search through multiple pose hypotheses, while we only perform ICP on a single pose hypothesis. That being said, the codebook is well suited to generate multiple hypotheses using $k>1$ nearest neighbors. The right part of Table 6 shows results with ground truth bounding boxes, yielding an upper bound on the pose estimation performance.

我们的纯RGB 6D物体检测流程包含2D检测、3D朝向估计、投影距离估计和透视误差校正。虽然视觉效果出色,但为了达到基于深度的最先进方法性能,我们仍需使用基于深度的ICP(迭代最近点)算法优化估计结果。表6展示了我们在T-LESS数据集所有场景上的6D检测评估,该数据集存在大量位姿歧义。我们的流程在Hodan等人(2018)提出的2018年BOP基准测试中,以更短运行时间超越了全部15个已报告的T-LESS结果。表6展示了部分竞争方法的对比数据。我们的纯RGB结果可与Brachmann等人(2016)和Kehl等人(2016)基于RGB-D的学习方法相媲美。Vidal等人(2018)和Drost等人(2010)提出的先前最优方法需要耗费时间对多个位姿假设进行精细化搜索,而我们仅对单个位姿假设执行ICP。值得一提的是,该码本非常适合使用$k>1$最近邻生成多个假设。表6右侧展示了使用真实标注边界框的结果,这给出了位姿估计性能的上限。

Table 6: T-LESS: Object recall for $err_{vsd}<0.3$ on all Primesense test scenes (SIXD/BOP benchmark from Hodan et al. (2018)). RGB$^{\dagger}$ denotes training with 3D reconstructions, except objects 19-23 → CAD models; RGB$^{\ddagger}$ denotes training on untextured CAD models only

表 6: T-LESS: 所有 Primesense 测试场景中 $err_{vsd}<0.3$ 的物体召回率(来自 Hodan 等人 (2018) 的 SIXD/BOP 基准)。RGB$^{\dagger}$ 表示使用 3D 重建进行训练(物体 19-23 除外,使用 CAD 模型);RGB$^{\ddagger}$ 表示仅使用无纹理 CAD 模型进行训练

| Object | AAE | AAE | AAE | AAE | Brachmann et al. (2016) | Kehl et al. (2016) | Vidal et al. (2018) | Drost et al. (2010) | AAE w/ GT 2D BBs |
|---|---|---|---|---|---|---|---|---|---|
| 2D detector | SSD | RetinaNet | RetinaNet | RetinaNet | | | | | GT |
| Data | RGB$^{\dagger}$ | RGB$^{\dagger}$ | RGB$^{\ddagger}$ | RGB$^{\dagger}$ + Depth (ICP) | RGB-D | RGB-D + ICP | Depth + ICP | Depth + edge | RGB$^{\dagger}$ |
| 1 | 5.65 | 9.48 | 12.67 | 67.95 | 8 | 7 | 43 | 53 | 12.67 |
| 2 | 5.46 | 13.24 | 16.01 | 70.62 | 10 | 10 | 47 | 44 | 11.47 |
| 3 | 7.05 | 12.78 | 22.84 | 78.39 | 21 | 18 | 69 | 61 | 13.32 |
| 4 | 4.61 | 6.66 | 6.70 | 57.00 | 4 | 24 | 63 | 67 | 12.88 |
| 5 | 36.45 | 36.19 | 38.93 | 77.18 | 46 | 23 | 69 | 71 | 67.37 |
| 6 | 23.15 | 20.64 | 28.26 | 72.75 | 19 | 10 | 67 | 73 | 54.21 |
| 7 | 15.97 | 17.41 | 26.56 | 83.39 | 52 | 0 | 77 | 75 | 38.10 |
| 8 | 10.86 | 21.72 | 18.01 | 78.08 | 22 | 2 | 79 | 89 | 24.83 |
| 9 | 19.59 | 39.98 | 33.36 | 88.64 | 12 | 11 | 90 | 92 | 49.06 |
| 10 | 10.47 | 13.37 | 33.15 | 84.47 | 7 | 17 | 68 | 72 | 15.67 |
| 11 | 4.35 | 7.78 | 17.94 | 56.01 | 3 | 5 | 69 | 64 | 16.64 |
| 12 | 7.80 | 9.54 | 18.38 | 63.23 | 3 | 1 | 82 | 81 | 33.57 |
| 13 | 3.30 | 4.56 | 16.20 | 43.55 | 0 | 0 | 56 | 53 | 15.29 |
| 14 | 2.85 | 5.36 | 10.58 | 25.58 | 0 | 9 | 47 | 46 | 50.14 |
| 15 | 7.90 | 27.11 | 40.50 | 69.81 | 0 | 12 | 52 | 55 | 52.01 |
| 16 | 13.06 | 22.04 | 35.67 | 84.55 | 5 | 56 | 81 | 85 | 36.71 |
| 17 | 41.70 | 66.33 | 50.47 | 74.29 | 3 | 52 | 83 | 88 | 81.44 |
| 18 | 47.17 | 14.91 | 33.63 | 83.12 | 54 | 22 | 80 | 78 | 55.48 |
| 19 | 15.95 | 23.03 | 23.03 | 58.13 | 38 | 35 | 55 | 55 | 53.07 |
| 20 | 2.17 | 5.35 | 5.35 | 26.73 | 1 | 5 | 47 | 47 | 38.97 |
| 21 | 19.77 | 19.82 | 19.82 | 53.48 | 39 | 26 | 63 | 55 | 53.45 |
| 22 | 11.01 | 20.25 | 20.25 | 60.49 | 19 | 27 | 70 | 56 | 49.95 |
| 23 | 7.98 | 19.15 | 19.15 | 62.69 | 61 | 71 | 85 | 84 | 36.74 |
| 24 | 4.74 | 4.54 | 27.94 | 62.99 | 1 | 36 | 70 | 59 | 11.75 |
| 25 | 21.91 | 19.07 | 51.01 | 73.33 | 16 | 28 | 48 | 47 | 37.73 |
| 26 | 10.04 | 12.92 | 33.00 | 67.00 | 27 | 51 | 55 | 69 | 29.82 |
| 27 | 7.42 | 22.37 | 33.61 | 82.16 | 17 | 34 | 60 | 61 | 23.30 |
| 28 | 21.78 | 24.00 | 30.88 | 83.51 | 13 | 54 | 69 | 80 | 43.97 |
| 29 | 15.33 | 27.66 | 35.57 | 74.45 | 6 | 86 | 65 | 84 | 57.82 |
| 30 | 34.63 | 30.53 | 44.33 | 93.65 | 5 | 69 | 84 | 89 | 72.81 |
| Mean | 14.67 | 19.26 | 26.79 | 68.57 | 17.84 | 24.60 | 66.51 | 67.50 | |
| Time (s) | 0.024 | | | | | | | | |

Table 7: Effect of Perspective Corrections on T-LESS

表 7: 透视校正对 T-LESS 的影响

| Method | RGB$^{\dagger}$ |
|---|---|
| w/o correction | 18.35 |
| w/ correction | 19.26 (+0.91) |

The results in Table 6 show that our domain randomization strategy allows generalization from 3D reconstructions as well as from untextured CAD models, as long as the considered objects are not significantly textured. Instead of a performance drop, we observe an increased $err_{vsd}<0.3$ recall when training on the CAD models. Their more accurate geometry results in correct bounding box diagonals and thus a better projective distance estimation in the RGB domain.

表 6 中的结果表明,只要目标物体没有明显的纹理特征,我们的领域随机化策略就能从 3D 重建和无纹理 CAD 模型中实现泛化。由于模型几何精度提升带来了正确的边界框对角线计算,从而在 RGB 域中获得更优的投影距离估计,我们不仅未观察到性能下降,反而报告了 $err_{vsd}<0.3$ 召回率的提升。

In Table 8 we also compare our pipeline against state-of-the-art methods on the LineMOD dataset. Here, our synthetically trained pipeline does not reach the performance of approaches that use real pose annotated training data.

在表8中,我们还将我们的流程与LineMOD数据集上的最先进方法进行了比较。在这里,我们通过合成数据训练的流程未能达到使用真实位姿标注训练数据的方法的性能。

There are multiple issues: (1) as described in Sec. 4.1, the real training and test sets are strongly correlated, so approaches using the real training set can overfit to it; (2) the models provided in LineMOD are of rather low quality, which affects both the detection and the pose estimation performance of synthetically trained approaches; (3) the advantage of not suffering from pose ambiguities matters little in LineMOD, where most object views are free of pose ambiguities; (4) we train and test on poses from the whole SO(3), as opposed to only the limited range in which the test poses lie. SSD6D also trains only on synthetic views of the 3D models, and we outperform it by a large margin in the RGB-only domain before ICP refinement.

存在多个问题:(1) 如第4.1节所述,真实训练集与测试集高度相关,使用真实训练集的方法容易对其过拟合;(2) LineMOD提供的模型质量较差,这同时影响了基于合成数据训练方法的检测和姿态估计性能;(3) 在大多数物体视角不存在姿态歧义的LineMOD数据集上,不受姿态歧义影响的优势并不明显;(4) 我们在整个SO(3)空间进行训练和测试,而非局限于测试姿态所在的有限范围。SSD6D同样仅使用3D模型的合成视图进行训练,而在ICP精修前的纯RGB领域,我们的方法大幅优于他们。

4.5 Failure Cases

4.5 失败案例

Figure 11 shows qualitative failure cases, mostly stemming from missed detections and strong occlusions. A weak point is the dependence on the bounding box size at test time to predict the object distance. Specifically, under severe occlusions the predicted bounding box tends to shrink such that it does not encompass the occluded parts of the detected object, even though the detector is trained to do so. If it is known in advance that depth data will be available, methods that estimate the distance directly from depth might be better suited. Furthermore, AAEs for strongly textured objects should not be trained without rendering the texture, since otherwise the texture might not be distinguishable from shape at test time. The sim2real transfer on strongly reflective objects such as satellites can be challenging and might require physically based rendering. Some objects, like long, thin pens, can fail because their tight object crops at training and test time appear very close from some views and very far from others, which hinders the learning of proper pose representations. As the object size is unknown at test time, we cannot simply crop a constantly sized area.

图 11 展示了定性的失败案例,主要源于漏检和严重遮挡。一个薄弱环节是测试时依赖边界框尺寸来预测物体距离。具体而言,在严重遮挡下,预测的边界框往往会收缩,即使检测器经过相应训练也无法覆盖被检测物体的被遮挡部分。若事先确定可以使用深度数据,直接基于深度估计距离的其他方法可能更为适合。此外,对于纹理丰富的物体,训练 AAE 时必须渲染纹理,否则测试时可能无法区分纹理与形状。对卫星等强反光物体而言,仿真到现实的迁移具有挑战性,可能需要基于物理的渲染。像细长钢笔这类物体可能失败,因为训练和测试时的紧凑物体裁剪在某些视角下显得极近,在另一些视角下却显得极远,从而阻碍了正确位姿表征的学习。由于测试时物体尺寸未知,我们无法简单地裁剪固定大小的区域。

Table 8: LineMOD: Object recall (ADD metric of Hinterstoisser et al. (2012b)) of methods that use different amounts of training and test data; results taken from Tekin et al. (2017)

表 8: LineMOD: 使用不同训练与测试数据量的方法的物体召回率(Hinterstoisser 等人 (2012b) 的 ADD 指标),结果取自 Tekin 等人 (2017)

Fig. 10: Rotation and translation error histograms on all T-LESS test scenes with our RGB-based (left columns) and ICP-refined (right columns) 6D Object Detection

图 10: 基于RGB (左列) 和ICP优化后 (右列) 的6D物体检测在所有T-LESS测试场景中的旋转和平移误差直方图

4.6 Rotation and Translation Histograms

4.6 旋转和平移直方图

To investigate the effect of ICP and to obtain an intuition about the pose errors, we plot the rotation and translation error histograms of two T-LESS objects (Fig. 10). The view-dependent symmetry axes of both objects are visible in the rotation error histograms. We also observe that the translation error is strongly reduced by the depth-based ICP, while the rotation estimates from the AAE are hardly refined. Especially when objects are partly occluded, the bounding boxes can become inaccurate and the projective distance estimation (Sec. 3.6.2) fails to produce very accurate distance predictions. Still, our global and fast 6D object detection provides sufficient accuracy for an iterative local refinement method to converge reliably.

为研究 ICP(迭代最近点)的效果并直观理解位姿误差,我们绘制了两个 T-LESS 物体的旋转和平移误差直方图(图 10)。从旋转误差直方图中可以看出两个物体随视角变化的对称轴。我们还观察到,基于深度的 ICP 显著降低了平移误差,而 AAE 的旋转估计几乎没有被进一步优化。尤其是当物体被部分遮挡时,边界框可能不准确,投影距离估计(第 3.6.2 节)因此无法给出非常精确的距离预测。尽管如此,我们全局且快速的 6D 物体检测仍能为迭代式局部优化方法提供足够的精度,使其可靠收敛。

Fig. 11: Failure cases; Blue: True poses; Green: Predictions; (a) Failed detections due to occlusions and object ambiguity, (b) failed AAE predictions of Glue (middle) and Eggbox (right) due to strong occlusion, (c) inaccurate predictions due to occlusion

图 11: 失败案例;蓝色:真实位姿;绿色:预测结果;(a) 因遮挡和物体歧义导致的检测失败,(b) 因严重遮挡导致Glue(中)和Eggbox(右)的AAE预测失败,(c) 因遮挡导致的不准确预测

Fig. 12: MobileNet SSD and AAEs on T-LESS objects, demonstrated live at ECCV 2018 on a Jetson TX2

图 12: 在 ECCV 2018 上于 Jetson TX2 现场演示的 MobileNet SSD 与 AAE 在 T-LESS 物体上的运行效果

4.7 Demonstration on Embedded Hardware

4.7 嵌入式硬件演示

The presented AAEs were also ported onto an Nvidia Jetson TX2 board, together with a small-footprint MobileNet from Howard et al. (2017) for the bounding box detection. A webcam was connected, and this setup was demonstrated live at ECCV 2018, both in the demo session and during the oral presentation. For this demo we acquired several of the T-LESS objects. As can be seen in Figure 12, the lighting conditions were dramatically different from those in the T-LESS test sequences, which validates the robustness and applicability of our approach outside lab conditions. No ICP was used, so the depth errors resulting from the scaling errors of the MobileNet bounding boxes were not corrected. However, since small errors along the depth direction are less perceptible for humans, our approach could be interesting for augmented reality applications. The detection, pose estimation and visualization of the three test objects ran at over 13 Hz.

所提出的AAE还被移植到了Nvidia Jetson TX2开发板上,并搭配了Howard等人(2017)提出的轻量级MobileNet进行边界框检测。我们连接了网络摄像头,并在ECCV 2018的演示环节和口头报告期间进行了现场展示。为了这次演示,我们获取了多个T-LESS物体。如图12所示,光照条件与T-LESS数据集测试序列中的情况截然不同,这验证了我们方法在实验室环境之外的鲁棒性和适用性。由于未使用ICP(迭代最近点)算法,因此未校正MobileNet尺度误差导致的深度误差。但由于人类对深度方向的小误差感知较弱,我们的方法在增强现实应用中可能具有优势。三个测试物体的检测、姿态估计和可视化运行频率超过13Hz。

5 Conclusion

5 结论

We have proposed a new self-supervised training strategy for autoencoder architectures that enables robust 3D object orientation estimation on various RGB sensors while training only on synthetic views of a 3D model. By demanding that the autoencoder revert geometric and color input augmentations, we learn representations that (1) specifically encode 3D object orientations, (2) are invariant to a significant domain gap between synthetic and real RGB images, and (3) inherently account for pose ambiguities from symmetric object views. Around this approach, we created a real-time (42 fps), RGB-based pipeline for 6D object detection, which is especially suitable when pose-annotated RGB sensor data is not available.

我们提出了一种针对自编码器 (Autoencoder) 架构的新型自监督训练策略,该策略仅需在3D模型的合成视图上进行训练,即可在各种RGB传感器上实现鲁棒的3D物体朝向估计。通过要求自编码器还原几何和颜色输入增强,我们学习到的表征能够:(1) 专门编码3D物体朝向,(2) 对合成与真实RGB图像之间的显著域差距具有不变性,(3) 本质性地处理对称物体视图带来的姿态歧义。基于该方法,我们构建了一个实时 (42 fps) 的RGB 6D物体检测流程,特别适用于缺乏姿态标注RGB传感器数据的场景。