RegNet: Self-Regulated Network for Image Classification


Jing Xu, Yu Pan, Xinglin Pan, Steven Hoi, Fellow, IEEE, Zhang Yi, Fellow, IEEE, and Zenglin Xu$^{*}$

Abstract—The ResNet and its variants have achieved remarkable success in various computer vision tasks. Despite its success in letting gradients flow through building blocks, the simple shortcut connection mechanism limits the ability to re-explore new, potentially complementary features because of its additive function. To address this issue, we propose to introduce a regulator module as a memory mechanism to extract complementary features, which are then fed to the ResNet. In particular, the regulator module is composed of convolutional RNNs (e.g., Convolutional LSTMs or Convolutional GRUs), which have been shown to be good at extracting spatio-temporal information. We name the new regulated networks RegNet. The regulator module can be easily implemented and appended to any ResNet architecture. We also apply the regulator module to improve the Squeeze-and-Excitation ResNet to show the generalization ability of our method. Experimental results on three image classification datasets demonstrate the promising performance of the proposed architecture compared with the standard ResNet, SE-ResNet, and other state-of-the-art architectures.

Index Terms—Residual Networks, Convolutional Recurrent Neural Networks, Convolutional Neural Networks

I. INTRODUCTION


Convolutional neural networks (CNNs) have achieved many breakthroughs in a number of computer vision tasks [1]. Since AlexNet [2] won the ImageNet competition in 2012, various new architectures have been proposed, including VGGNet [3], GoogLeNet [4], ResNet [5], DenseNet [6], and the more recent NASNet [7].

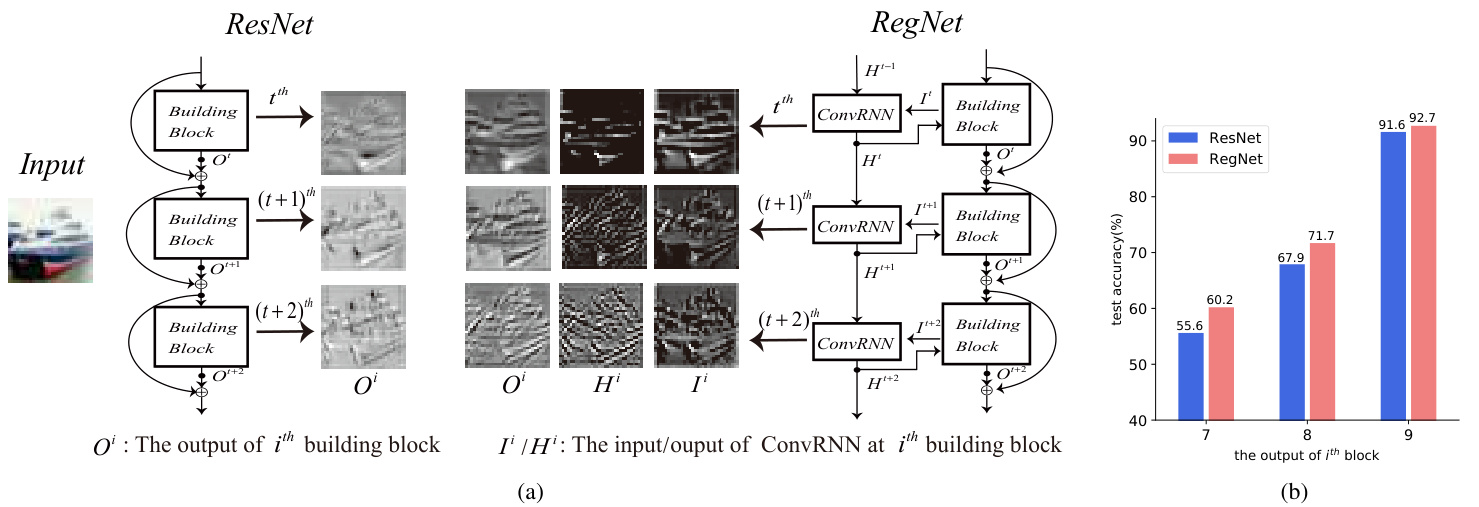

Among these deep architectures, ResNet and its variants [8]–[11] have attracted significant attention with outstanding performance in both low-level and high-level vision tasks. The remarkable success of ResNets is mainly due to the shortcut connection mechanism, which makes it possible to train deeper networks: gradients can flow directly through building blocks, and the vanishing gradient problem is avoided to some extent. However, the shortcut connection mechanism makes each block focus on learning its own residual output, so that communication of information across blocks is largely ignored and some reusable information learned in previous blocks tends to be forgotten in later blocks. To illustrate this point, we visualize the output (residual) feature maps learned by consecutive blocks in ResNet in Fig. 1(a). It can be seen that, due to the summation operation among blocks, the adjacent outputs $O^{t}$, $O^{t+1}$ and $O^{t+2}$ look very similar to each other, which indicates that little new information is learned through consecutive blocks.

A potential solution to the above problems is to capture the spatio-temporal dependency between building blocks while constraining the growth in the number of parameters. To this end, we introduce a new regulator mechanism in parallel to the shortcuts in ResNets for controlling the necessary memory information passed to the next building block. In detail, we adopt Convolutional RNNs ("ConvRNNs") [12] as the regulator to encode the spatio-temporal memory. We name the new architecture RNN-Regulated Residual Networks, or "RegNet" for short. As shown in Fig. 1(a), at the $i$-th building block, a recurrent unit in the convolutional RNN takes the feature from the current building block as its input (denoted by $I^{i}$) and encodes both the input and the serial information to generate the hidden state (denoted by $H^{i}$). The hidden state is concatenated with the input for reuse in the next convolution operation (leading to the output feature $O^{i}$) and is also passed to the next recurrent unit. To better understand the role of the regulator, we visualize the feature maps in Fig. 1(a). We can see that the $H^{i}$ generated by the ConvRNN complements the input features $I^{i}$. After applying convolution to the concatenated features of $H^{i}$ and $I^{i}$, the proposed model obtains more meaningful features $O^{i}$, with richer edge information, than ResNet does. To quantitatively evaluate the information contained in the feature maps, we test their classification ability on test data (by adding an average pooling layer and the last fully connected layer to the $O^{i}$ of the last three blocks). As shown in Fig. 1(b), the new architecture achieves higher prediction accuracy, which indicates the effectiveness of the ConvRNN regulator.

Thanks to the parallel structure of the regulator module, the RNN-based regulator is easy to implement and can be applied to other ResNet-based structures, such as SE-ResNet [11], Wide ResNet [8], Inception-ResNet [9], ResNeXt [10], Dual Path Network (DPN) [13], and so on. Without loss of generality, as another instance to demonstrate the effectiveness of the proposed regulator, we also apply the ConvRNN module to improve the Squeeze-and-Excitation ResNet (abbreviated as "SE-RegNet").

For evaluation, we apply our model to the task of image classification on three highly competitive benchmark datasets, including CIFAR-10, CIFAR-100, and ImageNet. In comparison with the ResNet and SE-ResNet, our experimental results have demonstrated that the proposed architecture can significantly improve the classification accuracy on all the datasets. We further show that the regulator can reduce the required depth of ResNets while reaching the same level of accuracy.


Fig. 1. (a): Visualization of feature maps in the ResNet [5] and RegNet. We visualize the output feature maps $O^{i}$ of the $i$-th building blocks, $i\in\{t,t+1,t+2\}$. In RegNets, $I^{i}$ denotes the input feature maps and $H^{i}$ denotes the hidden states generated by the ConvRNN at step $i$. By applying convolution operations over the concatenation of $I^{i}$ with $H^{i}$, we get the regulated outputs (denoted by $O^{i}$) of the $i$-th building block. (b): The prediction on test data based on the output feature maps of consecutive building blocks. At test time, we add an average pooling layer and the last fully connected layer to the outputs of the last three building blocks ($i\in\{7,8,9\}$) in ResNet-20 and RegNet-20 to get the classification results. It can be seen that the output of each block aided with the memory information results in higher classification accuracy.

II. RELATED WORK


Deep neural networks have achieved empirical breakthroughs in machine learning. However, training networks of sufficient depth is a very tricky problem. The shortcut connection has been proposed to address the difficulty in optimization to some extent [5], [14]. Via the shortcut, information can flow across layers without attenuation. A pioneering work is the Highway Network [14], which implements the shortcut connections with a gating mechanism. In addition, the ResNet [5] explicitly requires building blocks to fit a residual mapping, which is assumed to be easier to optimize.

Owing to the strong capabilities of ResNets in vision tasks, a number of variants have been proposed, including WRN [8], Inception-ResNet [9], ResNeXt [10], WResNet [15], and so on. ResNet and ResNet-based models have achieved impressive, record-breaking performance in many challenging tasks. In object detection, 50- and 101-layered ResNets are commonly used as basic feature extractors in many models, such as Faster R-CNN [16], RetinaNet [17], and Mask R-CNN [18]. The most recent models for image super-resolution, such as SRResNet [19], EDSR and MDSR [20], are all based on ResNets with small modifications. Meanwhile, in [21], the ResNet is used to remove rain streaks and obtains state-of-the-art performance.

Despite their success in many applications, ResNets still suffer from the depth issue [22]. DenseNet [6] concatenates the input features with the output features using a densely connected path in order to encourage the network to reuse all of the feature maps of previous layers. However, not all feature maps need to be reused in later layers, and consequently the densely connected network also introduces some redundancy with extra computational cost. More recently, Dual Path Network [13] and Mixed Link Network [23] offer trade-offs between ResNets and DenseNets. In addition, some module-based architectures have been proposed to improve the performance of the original ResNet. SENet [11] proposes a lightweight module to obtain channel-wise attention over intermediate feature maps. CBAM [24] and BAM [25] design modules to infer attention maps along both the channel and spatial dimensions. Despite their success, those modules regulate the intermediate feature maps based on attention information learned from the intermediate features themselves, so making full use of the historical spatio-temporal information of previous features remains an open problem.

On the other hand, convolutional RNNs (abbreviated as ConvRNNs), such as ConvLSTM [12] and ConvGRU [26], have been used to capture spatio-temporal information in a number of applications, such as rain removal [27], video super-resolution [28], video compression [29], and video object detection and segmentation [30], [31]. Most of those works embed ConvRNNs into models to capture the dependency information in a sequence of images. In order to regulate the information flow of ResNet, we propose to leverage ConvRNNs as a separate module that extracts spatio-temporal information complementary to the original feature maps of ResNets.

III. OUR MODEL


In this section, we first revisit the background of ResNets and two advanced ConvRNNs: ConvLSTM and ConvGRU. Then we present the proposed RegNet architectures.

A. ResNet


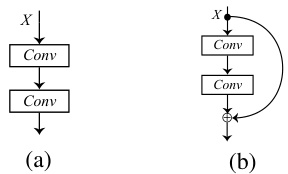

The degradation problem, which makes a traditional network hard to converge, is exposed when the architecture goes deeper. ResNet [5] mitigates this problem to some extent. The basic unit of ResNet is the building block, which fits a residual mapping, as shown in Fig. 2(b), instead of directly fitting the original underlying mapping, as shown in Fig. 2(a). The deep residual network obtained by stacking building blocks has achieved excellent performance in image classification, which demonstrates the competence of the residual mapping.

Fig. 2. 2(a) shows the original underlying mapping while 2(b) shows the residual mapping in ResNet [5].


B. ConvRNN and its Variants


RNN and its classical variants LSTM and GRU have achieved great success in the field of sequence processing. To tackle spatio-temporal problems, we adopt the basic ConvRNN and its variants ConvLSTM and ConvGRU, which are obtained from the vanilla RNNs by replacing their fully-connected operators with convolutional operators. Furthermore, to reduce the computational overhead, we carefully design the convolutional operation in ConvRNNs. In our implementation, the ConvRNN can be formulated as

where $X^{t}$ is the input 3D feature map, $H^{t-1}$ is the hidden state obtained from the earlier output of the ConvRNN, and $H^{t}$ is the output 3D feature map at this step. Both the number of input channels of $X^{t}$ and the number of output channels of $H^{t}$ in the ConvRNN are $N$.
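
Written out, one form consistent with these definitions is the following. It is a sketch rather than the exact equation: the $\tanh$ nonlinearity, the channel-wise concatenation $[\cdot,\cdot]$, and the bias $\mathbf{b}_{h}$ are assumptions borrowed from the vanilla RNN, and the left and right superscripts on $\mathbf{W}_{h}$ mark $2N$ input channels and $N$ output channels.

$$
H^{t} = \tanh\left({}^{2N}\mathbf{W}_{h}^{N} * \left[X^{t}, H^{t-1}\right] + \mathbf{b}_{h}\right)
$$

Concatenating $X^{t}$ and $H^{t-1}$, each with $N$ channels, gives the $2N$-channel input that the convolution maps back to $N$ channels.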

Additionally, ${}^{2N}\mathbf{W}^{N} * \mathbf{X}$ denotes a convolution operation between weights $\mathbf{W}$ and input $\mathbf{X}$ with $2N$ input channels and $N$ output channels. To make the ConvRNN more efficient, inspired by [30], [32], given input $\mathbf{X}$ with $2N$ channels, we conduct the convolution operation in 2 steps:

Directly applying the original convolutions with $3\times3$ kernels suffers from high computational complexity. As detailed in Table I, the new modification reduces the required computation by a factor of $18N/11$ with comparable results. Similarly, all the convolutions in ConvGRU and ConvLSTM are replaced with the light-weight modification.
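
The $18N/11$ factor can be reproduced with a short count of weights per convolution. The sketch below assumes, since the two steps are not spelled out here, that step 1 is a grouped $1\times1$ convolution with $N$ groups (2 input channels to 1 output channel per group) and step 2 is a depthwise $3\times3$ convolution over the resulting $N$ channels. Biases are ignored, and the function names are illustrative.

```python
from fractions import Fraction


def standard_conv_weights(n: int, k: int = 3) -> int:
    """Weights of a dense k x k convolution mapping 2N channels to N channels."""
    return k * k * (2 * n) * n


def light_conv_weights(n: int, k: int = 3) -> int:
    """Weights of the assumed two-step replacement (an assumption, see lead-in)."""
    pointwise = 2 * n      # step 1: grouped 1x1 conv, N groups, 2 inputs -> 1 output each
    depthwise = k * k * n  # step 2: depthwise k x k conv on N channels
    return pointwise + depthwise


def reduction_factor(n: int, k: int = 3) -> Fraction:
    """Ratio of dense to light-weight cost; equals 18N/11 for k = 3."""
    return Fraction(standard_conv_weights(n, k), light_conv_weights(n, k))


if __name__ == "__main__":
    for n in (16, 32, 64):  # channel widths of conv_1, conv_2, conv_3
        print(n, standard_conv_weights(n), light_conv_weights(n), float(reduction_factor(n)))
```

Because every weight is applied once per output position, the same ratio holds for multiply-accumulate operations, so under these assumptions the saving grows linearly with the channel width $N$.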

C. RNN-Regulated ResNet


To deal with the CIFAR-10/100 datasets and the ImageNet dataset, [5] proposed two kinds of ResNet building blocks: the non-bottleneck building block and the bottleneck building block. Based on those, by applying ConvRNNs as regulators, we obtain the RNN-Regulated ResNet building module and the bottleneck RNN-Regulated ResNet building module, respectively.

TABLE I PERFORMANCE OF REGNET-20 WITH CONVGRU AS REGULATORS ON CIFAR-10. WE COMPARE THE TEST ERROR RATES BETWEEN TRADITIONAL $3{\times}3$ KERNELS AND OUR NEW MODIFICATION.


| Kernel type | Error rate (%) | Params | FLOPs |
|---|---|---|---|
| 3×3 | 7.35 | +330K | +346M |
| Ours | 7.42 | +44K | +15M |

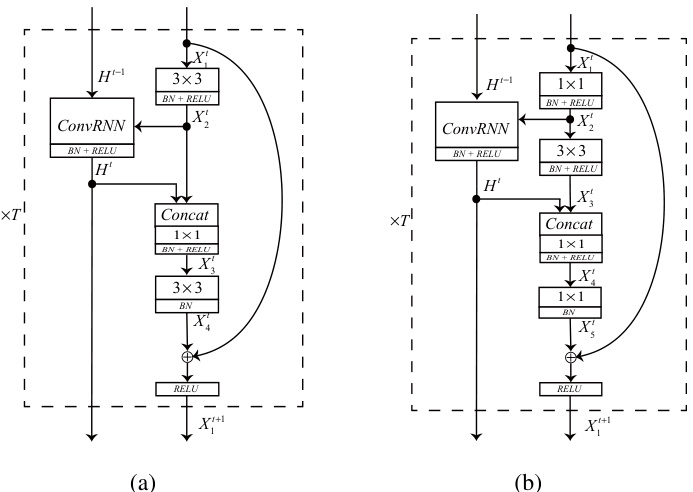

Fig. 3. The RegNet module is shown in 3(a). The bottleneck RegNet block is shown in 3(b). The $T$ denotes the number of building blocks as well as the total time steps of ConvRNN.


- RNN-Regulated ResNet Module (RegNet module): The RegNet module is illustrated in Fig. 3(a). Here, we use ConvLSTM for the exposition. $H^{t-1}$ denotes the earlier output of the ConvLSTM, and $H^{t}$ is the output of the ConvLSTM at the $t$-th module. $X_{i}^{t}$ denotes the $i$-th feature map at the $t$-th module.

The $t$-th RegNet (ConvLSTM) module can be expressed as

where $\mathbf{W}_{ij}^{t}$ denotes the convolutional kernel that maps feature map $\mathbf{X}_{i}^{t}$ to $\mathbf{X}_{j}^{t}$, and $\mathbf{b}_{ij}^{t}$ denotes the corresponding bias. Both $\mathbf{W}_{12}^{t}$ and $\mathbf{W}_{34}^{t}$ are $3\times3$ convolutional kernels. $\mathbf{W}_{23}^{t}$ is a $1\times1$ kernel. $\mathrm{BN}(\cdot)$ indicates batch normalization. $\mathrm{Concat}[\cdot]$ refers to the concatenation operation.

Notice that in Eq. (2) the input feature $\mathbf{X}_{2}^{t}$ and the previous output of the ConvLSTM, $\mathbf{H}^{t-1}$, are the inputs of the ConvLSTM in the $t$-th module. According to these inputs, the ConvLSTM automatically decides whether the information in the memory cell will be propagated to the output hidden feature map $\mathbf{H}^{t}$.
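
The data flow described above can be sketched as follows. This is a reading of the text rather than the exact equations: the kernel sizes and the ConvLSTM inputs follow the definitions given, while the placement of $\mathrm{ReLU}$ and $\mathrm{BN}$, the final shortcut addition, and the omission of the ConvLSTM memory cell from the notation are assumptions modeled on the standard ResNet building block.

$$
\begin{aligned}
\mathbf{X}_{2}^{t} &= \mathrm{ReLU}\big(\mathrm{BN}(\mathbf{W}_{12}^{t} * \mathbf{X}_{1}^{t} + \mathbf{b}_{12}^{t})\big),\\
\mathbf{H}^{t} &= \mathrm{ConvLSTM}\big(\mathbf{X}_{2}^{t}, \mathbf{H}^{t-1}\big),\\
\mathbf{X}_{3}^{t} &= \mathrm{ReLU}\big(\mathrm{BN}(\mathbf{W}_{23}^{t} * \mathrm{Concat}[\mathbf{X}_{2}^{t}, \mathbf{H}^{t}] + \mathbf{b}_{23}^{t})\big),\\
\mathbf{X}_{4}^{t} &= \mathrm{BN}\big(\mathbf{W}_{34}^{t} * \mathbf{X}_{3}^{t} + \mathbf{b}_{34}^{t}\big),\\
\mathbf{X}_{1}^{t+1} &= \mathrm{ReLU}\big(\mathbf{X}_{1}^{t} + \mathbf{X}_{4}^{t}\big).
\end{aligned}
$$

In this reading, the $1\times1$ kernel $\mathbf{W}_{23}^{t}$ is the only addition to the residual path: it fuses the $2N$ concatenated channels back to $N$ before the second $3\times3$ convolution.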

- Bottleneck RNN-Regulated ResNet Module (bottleneck RegNet module): The bottleneck RegNet module, based on the bottleneck ResNet building block, is shown in Fig. 3(b). The bottleneck building block was introduced in [5] for dealing with large images. Based on that, the $t$-th bottleneck RegNet module can be expressed as

TABLE II ARCHITECTURES FOR CIFAR-10/100 DATASETS. BY SETTING $n\in\{3,5,9\}$, WE CAN GET THE 20-, 32-, AND 56-LAYERED REGNET.

| name | output size | (6n+2)-layered RegNet |
|---|---|---|
| conv_0 | 32×32 | 3×3, 16 |
| conv_1 | 32×32 | ConvRNN1 + [3×3, 16; 3×3, 16] × n |
| conv_2 | 16×16 | ConvRNN2 + [3×3, 32; 3×3, 32] × n |
| conv_3 | 8×8 | ConvRNN3 + [3×3, 64; 3×3, 64] × n |
| | 1×1 | AP, FC, softmax |
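
The layer count in Table II can be checked with a few lines of arithmetic. The sketch below assumes the usual ResNet convention of counting only the stem convolution, the two 3×3 convolutions of each building module, and the final fully connected layer, so the ConvRNNs and fusion kernels are not counted. The function names are illustrative.

```python
def regnet_depth(n: int) -> int:
    """Depth of the (6n+2)-layered network: stem conv + 3 stages x n modules x 2 convs + FC."""
    stem = 1
    stages = 3 * n * 2
    fc = 1
    return stem + stages + fc


def convrnn_time_steps(n: int) -> dict:
    """Each stage has one ConvRNN that is unrolled once per building module."""
    return {"ConvRNN1": n, "ConvRNN2": n, "ConvRNN3": n}


if __name__ == "__main__":
    for n in (3, 5, 9):
        print(n, regnet_depth(n), convrnn_time_steps(n))
```

Under this formula, depths 20, 32, and 56 correspond to n = 3, 5, and 9, while n = 7 would give 44 layers.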

TABLE III CLASSIFICATION ERROR RATES ON THE CIFAR-10/100. BEST RESULTS ARE MARKED IN BOLD.


| Model | C10 | C100 |
|---|---|---|
| ResNet-20 [5] | 8.38 | 31.72 |
| RegNet-20 (ConvRNN) | 7.60 | 30.03 |
| RegNet-20 (ConvGRU) | 7.42 | **29.69** |
| RegNet-20 (ConvLSTM) | **7.28** | 29.81 |
| SE-ResNet-20 | 8.02 | 31.14 |
| SE-RegNet-20 (ConvRNN) | 7.55 | 29.63 |
| SE-RegNet-20 (ConvGRU) | 7.25 | 29.08 |
| SE-RegNet-20 (ConvLSTM) | **6.98** | **29.02** |

In the bottleneck RegNet module, $\mathbf{W}_{12}^{t}$ and $\mathbf{W}_{45}^{t}$ are the two $1\times1$ kernels, and $\mathbf{W}_{23}^{t}$ is the $3\times3$ bottleneck kernel. $\mathbf{W}_{34}^{t}$ is a $1\times1$ kernel for fusing features in our model.

IV. EXPERIMENTS


In this section, we evaluate the effectiveness of the proposed ConvRNN regulator on three benchmark datasets: CIFAR-10, CIFAR-100, and ImageNet. We run the algorithms in PyTorch. The small-scale models for CIFAR are trained on a single NVIDIA 1080 Ti GPU, and the large-scale models for ImageNet are trained on 4 NVIDIA 1080 Ti GPUs.

A. Experiments on CIFAR


The CIFAR datasets [34] consist of RGB images with $32\times32$ pixels. Each dataset contains 50k training images and 10k testing images. The images in CIFAR-10 and CIFAR-100 are drawn from 10 and 100 classes, respectively. We train on the training dataset and evaluate on the test dataset.

By applying ConvRNNs to ResNet and SE-ResNet, we get the RegNet and SE-RegNet models, respectively. Here, we use the 20-layered RegNet and SE-RegNet to show the wide applicability of our method. The SE-RegNet building module in Fig. 3(a) is used for the CIFAR datasets. The structural details of SE-RegNet are shown in Table II. The inputs of the network are $32\times32$ images. In each conv_$i$ layer, $i\in\{1,2,3\}$, there are $n$ RegNet building modules stacked sequentially and connected by a ConvRNN. In summary, there are 3 ConvRNNs in our architecture, and each ConvRNN acts on $n$ RegNet building modules. The reduction ratio $r$ in the SE block is 8.

In this experiment, we use SGD with a momentum of 0.9 and a weight decay of 1e-4. We train with a batch size of 64 for 150 epochs. The initial learning rate is 0.1 and is divided by 10 at epoch 80. Data augmentation in [35] is used in training. The results of SE-ResNet on CIFAR are based on our implementation, since the results were not reported in [11].
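
The step schedule above can be sketched in plain Python. The helper names are illustrative, and the code assumes zero-based epoch indices with the drop taking effect from epoch 80 onward, a detail the text does not specify.

```python
import math


def step_lr(epoch: int, base_lr: float = 0.1, milestones: tuple = (80,), gamma: float = 0.1) -> float:
    """Learning rate at a given epoch: base_lr scaled by gamma once per milestone reached."""
    drops = sum(1 for m in milestones if epoch >= m)
    return base_lr * gamma ** drops


def steps_per_epoch(num_images: int = 50_000, batch_size: int = 64) -> int:
    """Number of SGD updates per epoch, keeping the last partial batch."""
    return math.ceil(num_images / batch_size)


if __name__ == "__main__":
    for epoch in (0, 79, 80, 149):
        print(epoch, step_lr(epoch))
    print(steps_per_epoch())
```

With 50k training images and a batch size of 64, this gives 782 updates per epoch, assuming the final partial batch is kept.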

- Results on CIFAR: The classification errors on the CIFAR-10/100 test sets are shown in Table III. With the same number of layers, both RegNet and SE-RegNet outperform the original models by a significant margin. Compared with ResNet-20, our RegNet-20 with ConvLSTM decreases the error rate by $1.10$ percentage points on CIFAR-10 and $1.91$ percentage points on CIFAR-100. Similarly, compared with SE-ResNet-20, our SE-RegNet-20 with ConvLSTM decreases the error rate by $1.04$ percentage points on CIFAR-10 and $2.12$ percentage points on CIFAR-100. Using ConvGRU as the regulator reaches the same level of accuracy as ConvLSTM. Because the vanilla ConvRNN lacks a gating mechanism, it performs slightly worse but still improves markedly on the baseline model.

- Parameters Analysis: For a fair comparison, we evaluate our model with the number of model parameters as the reference. Table IV lists the test error rates of the 20-, 32-, and 56-layered ResNets and their respective RegNet counterparts on CIFAR-10/100. With minimal additional parameters, our RegNets with both ConvGRU and ConvLSTM surpass the ResNet by a large margin. Our 20-layered RegNet with 0.04M extra parameters even outperforms the 32-layered ResNet on both CIFAR-10 and CIFAR-100: the 20-layered RegNet (ConvLSTM), with 0.32M parameters, reaches a $7.28\%$ error rate on CIFAR-10, surpassing the 32-layered ResNet, which has 0.47M parameters and a $7.54\%$ error rate. Fig. 4 compares the parameter efficiency of RegNet and ResNet and shows that our RegNets are more parameter-efficient than simply stacking layers in the vanilla ResNet. On both CIFAR-10 and CIFAR-100, our RegNets (GRU) achieve performance comparable to ResNet-56 with nearly half the parameters.

- Positions of Feature Reuse: In this subsection, we perform an ablation experiment to analyze the effect of the position of feature reuse, that is, in which layer the ConvRNN contributes most to the final result. Previous studies [36] show that features in earlier layers are more general, while features in later layers are more specific. As shown in Table II, the conv_1, conv_2, and conv_3 layers are separated by down-sampling operations, so the features in conv_1 are more low-level and those in conv_3 are more specific to classification. The classification results are shown in Table V.

TABLE IV TEST ERROR RATES ON CIFAR-10/100. WE USE CONVGRU AND CONVLSTM AS REGULATORS OF RESNET. THE PARAMETER INCREASE OF EACH ARCHITECTURE IS GIVEN IN PARENTHESES AFTER THE ERROR RATE.

| Layers | C10: ResNet | C10: +ConvGRU | C10: +ConvLSTM | C100: ResNet | C100: +ConvGRU | C100: +ConvLSTM |
|---|---|---|---|---|---|---|
| 20 | 8.38 | 7.42 (+0.04M) | 7.28 (+0.04M) | 31.72 | 29.69 (+0.04M) | 29.81 (+0.04M) |
| 32 | 7.54 | 6.60 (+0.06M) | 6.88 (+0.07M) | 29.86 | 27.42 (+0.07M) | 28.11 (+0.07M) |
| 56 | 6.78 | 6.39 (+0.11M) | 6.45 (+0.12M) | 28.14 | 27.02 (+0.11M) | 27.26 (+0.12M) |
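
The comparisons drawn from Table IV can be reproduced directly from its entries. The snippet below is a small sketch that copies the error rates from the table; the dictionary layout and function names are illustrative.

```python
# Test error rates (%) from Table IV: depth -> dataset -> (ResNet, +ConvGRU, +ConvLSTM)
TABLE_IV = {
    20: {"C10": (8.38, 7.42, 7.28), "C100": (31.72, 29.69, 29.81)},
    32: {"C10": (7.54, 6.60, 6.88), "C100": (29.86, 27.42, 28.11)},
    56: {"C10": (6.78, 6.39, 6.45), "C100": (28.14, 27.02, 27.26)},
}


def gains(depth: int, dataset: str) -> tuple:
    """Error reduction in percentage points for (+ConvGRU, +ConvLSTM) at a fixed depth."""
    base, gru, lstm = TABLE_IV[depth][dataset]
    return round(base - gru, 2), round(base - lstm, 2)


def shallower_regnet_beats(deep: int, shallow: int, dataset: str) -> bool:
    """True if both regulated nets at depth `shallow` beat the plain ResNet at depth `deep`."""
    base_deep = TABLE_IV[deep][dataset][0]
    _, gru, lstm = TABLE_IV[shallow][dataset]
    return gru < base_deep and lstm < base_deep


if __name__ == "__main__":
    for depth in TABLE_IV:
        for dataset in ("C10", "C100"):
            print(depth, dataset, gains(depth, dataset))
    print(shallower_regnet_beats(32, 20, "C10"), shallower_regnet_beats(32, 20, "C100"))
```

On these numbers, both 20-layered RegNets have lower error than the 32-layered ResNet on CIFAR-10 and CIFAR-100.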

Fig. 4. Comparison of parameter efficiency on CIFAR-10 between RegNet and ResNet [5]. In both 4(a) and 4(b), the curves of our RegNet are always below those of ResNet [5], which shows that, with the same number of parameters, our models have stronger expressive ability.

TABLE VI SINGLE-CROP VALIDATION ERROR RATES ON IMAGENET AND COMPLEXITY COMPARISONS. BOTH RESNET AND REGNET ARE 50-LAYER. RESNET* DENOTES THE RESULT REPRODUCED BY OURSELVES.

| Model | top-1 err. | top-5 err. | Params | FLOPs |
|---|---|---|---|---|
| ResNet [5] | 24.7 | 7.8 | 26.6M | 4.14G |
| ResNet* | 24.81 | 7.78 | 26.6M | 4.14G |
| RegNet | 23.43 (-1.38) | 6.93 (-0.85) | 31.3M | 5.12G |

TABLE V TEST ERROR RATES ON CIFAR-10/100. WE USE CONVGRU AND CONVLSTM AS REGULATORS OF RESNET. WE LIST THE NUMBER OF PARAMETERS OF EACH ARCHITECTURE. IN EACH OF OUR $\mathrm{RegNet}_{(i)}$ MODELS, ONLY ONE CONVRNN IS APPLIED, IN LAYER CONV_$i$, $i\in\{1,2,3\}$.

| Model | C-10 err. | C-10 Params | C-100 err. | C-100 Params |
|---|---|---|---|---|
| ResNet [5] | 8.38 | 0.273M | 31.72 | 0.278M |
| RegNet(1)(GRU) | 7.52 | 0.279M | 30.40 | 0.285M |
| RegNet(2)(GRU) | 7.48 | 0.285M | 30.34 | 0.291M |
| RegNet(3)(GRU) | 7.49 | 0.306M | 30.30 | 0.312M |
| RegNet(1)(LSTM) | 7.56 | 0.281M | 30.23 | 0.286M |
| RegNet(2)(LSTM) | 7.49 | 0.290M | 30.28 | 0.296M |
| RegNet(3)(LSTM) | 7.52 | 0.325M | 29.92 | 0.331M |

TABLE VII SINGLE-CROP ERROR RATES ON THE IMAGENET VALIDATION SET FOR STATE-OF-THE-ART MODELS. RESNET-50* DENOTES THE RESULT RE-IMPLEMENTED IN OUR EXPERIMENTS.

| Model | top-1 | top-5 | Params (M) | FLOPs (G) |
|---|---|---|---|---|
| WRN-18 (widen=2.0) [8] | 25.58 | 8.06 | 45.6 | 6.70 |
| DenseNet-169 [6] | 23.80 | 6.85 | 28.9 | 7.7 |
| SE-ResNet-50 [11] | 23.29 | 6.62 | 26.7 | 4.14 |
| ResNet-50 [5] | 24.7 | 7.8 | | |
| ResNet-50* | 24.81 | 7.78 | 26.6 | 4.14 |
| ResNet-101 [5] | 23.6 | 7.1 | 44.5 | 7.51 |
| RegNet-50 | 23.43 | 6.93 | 31.3 | 5.12 |

In each model, only one ConvRNN is applied. We name the models $\mathrm{RegNet}_{(i)}$, $i\in\{1,2,3\}$, which denotes applying a ConvRNN only in layer conv_$i$ and keeping the original ResNet structure in the other layers. For a fair comparison, we evaluate the models with the number of model parameters as the reference. The results show that using ConvRNNs in a lower layer (conv_1) is more parameter-efficient than in a higher layer (conv_3): with a smaller parameter increase, the lower layers bring nearly the same improvement in accuracy as the higher layers. Compared with ResNet, our $\mathrm{RegNet}_{(1)}$(GRU) decreases the test error from $8.38\%$ to $7.52\%$ ($-0.86\%$) on CIFAR-10 with 0.006M additional parameters and from $31.72\%$ to $30.40\%$ ($-1.32\%$) on CIFAR-100 with 0.007M additional parameters. This significant improvement with minimal additional parameters further supports the effectiveness of the proposed method. The concatenation operation in our model fuses features together to explore new features [13], which is more important for the general features in lower layers.
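
The parameter-efficiency argument can be made concrete by dividing the error reduction by the number of added parameters. The sketch below uses the ConvGRU rows of Table V; the metric itself (percentage points of error removed per million added parameters) is an illustrative choice, not one defined in the paper.

```python
# Baseline and ConvGRU rows of Table V: (C10 err %, C10 params M, C100 err %, C100 params M)
BASELINE = (8.38, 0.273, 31.72, 0.278)
REGNET_GRU = {
    1: (7.52, 0.279, 30.40, 0.285),
    2: (7.48, 0.285, 30.34, 0.291),
    3: (7.49, 0.306, 30.30, 0.312),
}


def gain_per_million_params(stage: int, dataset: str = "C10") -> float:
    """Error reduction (percentage points) per million parameters added at one stage."""
    offset = 0 if dataset == "C10" else 2
    err, params = REGNET_GRU[stage][offset], REGNET_GRU[stage][offset + 1]
    base_err, base_params = BASELINE[offset], BASELINE[offset + 1]
    return (base_err - err) / (params - base_params)


if __name__ == "__main__":
    for dataset in ("C10", "C100"):
        print(dataset, [round(gain_per_million_params(s, dataset), 1) for s in (1, 2, 3)])
```

On both datasets the ratio decreases from conv_1 to conv_3: the absolute gains are similar, but the ConvRNN in the wider, later stage costs several times more parameters.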

B. Experiments on ImageNet

We evaluate our model on the ImageNet 2012 dataset [3], which consists of 1.28 million training images and 50k validation images from 1000 classes. Following previous work, we report top-1 and top-5 classification errors on the validation set. Due to our limited GPU resources, and without loss of generality, we run experiments with ResNets and RegNets only.

The bottleneck RegNet building modules are applied to ImageNet. We use 4 ConvRNNs in RegNet-50. The $\mathrm{ConvRNN}_{i}$, $i \in \{1,2,3,4\}$, control $\{3,4,6,3\}$ bottleneck RegNet modules, respectively. In this experiment, we use SGD with a momentum of 0.9 and a weight decay of 1e-4. We train with a batch size of 128 for 90 epochs. The initial learning rate is 0.06 and is divided by 10 at epochs 50 and 70. The input to the network is a $224\times224$ image, randomly cropped from the resized original image or its horizontal flip. The data augmentation in [27] is used in training. We evaluate our model on a $224\times224$ center crop.
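The step schedule described above can be written down explicitly. The following is a minimal sketch under stated assumptions: the function name `step_lr` is hypothetical, epochs are assumed to be 0-indexed, and each drop is assumed to take effect from the milestone epoch onward (the text does not say whether the boundary is inclusive).

```python
def step_lr(epoch, base_lr=0.06, milestones=(50, 70), factor=0.1):
    """Learning rate for a given epoch under step decay.

    Assumptions (not stated in the paper): epochs are 0-indexed and the
    rate is reduced starting at the milestone epoch itself.
    """
    drops = sum(1 for m in milestones if epoch >= m)
    return base_lr * factor ** drops


# Hyperparameters quoted in the text, gathered in one place.
config = {
    "optimizer": "SGD",
    "momentum": 0.9,
    "weight_decay": 1e-4,
    "batch_size": 128,
    "epochs": 90,
}

for epoch in (0, 49, 50, 69, 70, config["epochs"] - 1):
    print(f"epoch {epoch:2d}: lr = {step_lr(epoch):.4f}")
```

In a framework such as PyTorch, the same schedule would typically be expressed with a multi-step scheduler rather than a hand-written function; the plain-Python form is used here only to keep the example self-contained.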

We evaluate the efficiency of the baseline ResNet-50 and its RegNet counterpart. The comparison is based on the computational overhead. As shown in Table VI, with 4.7M additional parameters, RegNet outperforms the baseline model by $1.38\%$ in top-1 accuracy and $0.85\%$ in top-5 accuracy.
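These gains can be reproduced from the ResNet-50* and RegNet-50 rows of the ImageNet comparison table. The sketch below assumes that the four numeric columns are top-1 error (%), top-5 error (%), parameters (M), and FLOPs (G), and that ResNet-50* is the baseline referred to here; the dictionary keys and the relative-overhead figures are illustrative and not reported in the paper.

```python
# Rows copied from the ImageNet comparison table (column meaning assumed).
resnet50 = {"top1_err": 24.81, "top5_err": 7.78, "params_m": 26.6, "gflops": 4.14}
regnet50 = {"top1_err": 23.43, "top5_err": 6.93, "params_m": 31.3, "gflops": 5.12}

# Signed difference RegNet-50 minus ResNet-50*, rounded to table precision.
delta = {key: round(regnet50[key] - resnet50[key], 2) for key in resnet50}

# Relative overhead with respect to the baseline.
param_overhead = delta["params_m"] / resnet50["params_m"]
flops_overhead = delta["gflops"] / resnet50["gflops"]

print(f"top-1 error change: {delta['top1_err']:+.2f} points")
print(f"top-5 error change: {delta['top5_err']:+.2f} points")
print(f"extra parameters:   {delta['params_m']:+.1f}M ({param_overhead:.1%})")
print(f"extra FLOPs:        {delta['gflops']:+.2f}G ({flops_overhead:.1%})")
```

A drop in error is the same quantity as a gain in accuracy, so the negative error changes computed here correspond to the accuracy improvements quoted in the text.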

Table VII shows the error rates of some state-of-the-art models on the ImageNet validation set. Our RegNet-50, with 31.3M parameters and 5.12G FLOPs, not only surpasses the baseline ResNet-50 but also outperforms ResNet-101, which has 44.6M parameters and 7.9G FLOPs. Since the proposed regulator module is essentially a beneficial complement to the shortcut mechanism in ResNets, it can easily be applied to other ResNet-based models, such as SE-ResNet, WRN-18 [8], ResNeXt [10], and Dual Path Network (DPN) [13]. Due to limited computational resources, we leave the implementation of the regulator module in these ResNet extensions as future work.

V. CONCLUSIONS

In this paper, we proposed a regulator module based on Convolutional RNNs that extracts complementary features to improve the representation power of ResNets. Experimental results on three image classification datasets have demonstrated the promising performance of the proposed architecture in comparison with standard ResNets, Squeeze-and-Excitation ResNets, and other state-of-the-art architectures.

In the future, we intend to further improve the efficiency of the proposed architecture and to apply the regulator module to other ResNet-based architectures [8]–[10] to increase their capacity. Besides, we will further explore RegNets for other challenging tasks, such as object detection [16], [17], image super-resolution [19], [20], and so on.

ACKNOWLEDGMENT

This work was partially supported by the National Key Research and Development Program of China (No. 2018AAA0100204).
