Gender Classification from Iris Texture Images Using a New Set of Binary Statistical Image Features.

基于一组新的二元统计图像特征的虹膜纹理图像性别分类

Juan Tapia and Claudia Arellano

Universidad Tecnológica de Chile - INACAP

j-tapiaf@inacap.cl

A pre-print version of the paper accepted at the 12th IAPR International Conference on Biometrics.

Juan Tapia 和 Claudia Arellano

智利技术大学 - INACAP

j-tapiaf@inacap.cl

本文为被第12届IAPR国际生物识别会议接受的预印本版本。

Abstract

摘要

Soft biometric information such as gender can contribute to many applications, such as identification and security. This paper explores the use of the Binary Statistical Image Features (BSIF) algorithm for classifying gender from iris texture images captured with NIR sensors. It uses the same pipeline as iris recognition systems, consisting of iris segmentation, normalisation and then classification. Experiments show that applying BSIF is not straightforward, since it can create artificial textures that cause misclassification. To overcome this limitation, a new set of filters was trained from eye images, and filters of different sizes with padding bands were tested on a subject-disjoint database. A Modified-BSIF (MBSIF) method was implemented. It achieved better gender classification results (94.6% and 91.33% for the left and right eye respectively). These results are competitive with the state of the art in gender classification. As an additional contribution, a novel gender-labelled database was created, and it will be available upon request.

性别等软生物特征信息可以应用于身份识别和安全等许多领域。本文探讨了使用二元统计特征 (BSIF) 算法对近红外 (NIR) 传感器捕获的虹膜纹理图像进行性别分类的方法。该方法采用了与虹膜识别系统相同的流程,包括虹膜分割、归一化和分类。实验表明,直接应用 BSIF 算法并不理想,因为它可能会产生人工纹理,导致分类错误。为了克服这一限制,本文从眼部图像中训练了一组新的滤波器,并在一个不重叠的数据库上测试了不同尺寸的带填充带的滤波器。本文实现了一种改进的 BSIF 方法 (MBSIF),该方法在性别分类上取得了更好的结果 (左眼和右眼分别为 94.6% 和 91.33%)。这些结果与性别分类领域的最新技术具有竞争力。此外,本文还创建了一个新的性别标注数据库,该数据库将根据请求提供。

1. Introduction

1. 引言

Whenever people log onto computers, access an ATM, pass through airport security, use credit cards, or enter high-security areas, their identities need to be verified [5, 6]. There is tremendous interest in reliable and secure identification methods. One active research area within this field is gender classification. Algorithms for automatic gender classification have several applications. They can be used for database binning and retrieval, for intelligent user interfaces or for visual surveillance. They can also be used to provide demographic information to improve social services, to facilitate payment methods and for marketing applications in general.

每当人们登录计算机、访问 ATM、通过机场安检、使用信用卡或进入高安全区域时,都需要验证他们的身份 [5, 6]。人们对可靠且安全的身份识别方法有着极大的兴趣。性别分类是这一领域的一个活跃研究方向。自动性别分类算法有多种应用。它们可以用于数据库分类和检索、智能用户界面或视觉监控。它们还可以用于提供人口统计信息以改善社会服务、促进支付方式以及一般的营销应用。

Gender classification based on iris images is promising despite the challenging image analysis problems it presents [20, 36, 30]. The human iris is the annular region between the pupil and the white sclera. The iris has an extraordinary structure and includes many interlacing minute features such as freckles, coronas, stripes, furrows, crypts and so on. These visible features, generally called the texture of the iris, are unique to each individual [1, 10, 11]. Research has also shown that the iris is essentially stable throughout a person's life. Furthermore, since the iris is externally visible, iris-based biometric systems can be non-invasive to their users [10, 11], which is important for practical applications. All these properties (i.e., uniqueness, stability and non-invasiveness) make gender classification from iris images suitable and attractive as a complement for achieving highly reliable personal identification.

基于虹膜图像的性别分类在图像分析方面面临挑战,但仍具有前景 [20, 36, 30]。人类虹膜是瞳孔和白色巩膜之间的环形部分。虹膜具有独特的结构,包含许多交错的微小特征,如斑点、日冕、条纹、沟槽、隐窝等。这些可见特征通常被称为虹膜纹理,对每个个体都是独一无二的 [1, 10, 11]。研究还表明,虹膜在人的一生中基本上是稳定的。此外,由于虹膜是外部可见的,基于虹膜的生物识别系统可以对其用户无创 [10, 11],这对于实际应用非常重要。所有这些特性(即唯一性、稳定性和无创性)使得性别分类作为实现高度可靠个人识别的补充方法既合适又具有吸引力。

In this work, a gender classification method is proposed. It uses normalised iris texture information that is encoded using MBSIF. The outline of this paper is as follows: Section 2 reviews the state of the art in gender classification methods and describes the BSIF algorithm used in this work. Section 3 describes the pipeline of this work and the challenges faced when implementing the MBSIF algorithm. The experimental set-up and the results of gender classification using several classifiers and MBSIF implementation settings are shown in Section 4. Finally, the conclusions are presented in Section 5.

在本研究中,提出了一种性别分类方法。该方法使用了通过MBSIF编码的归一化虹膜纹理信息。本文的结构如下:第2节回顾了性别分类方法的最新技术,并描述了本研究中使用的BSIF算法。第3节描述了本研究的流程以及在实现MBSIF算法时面临的挑战。第4节展示了使用多种分类器和MBSIF实现设置的性别分类实验设置和结果。最后,第5节给出了结论。

2. Related work

2. 相关工作

2.1. Gender Classification

2.1. 性别分类

Human faces provide important visual information for gender classification [6, 37]. Most work done to date on gender classification has involved the analysis of facial images and used different pattern analysis to increase the accuracy of classification [14, 2, 13, 30].

人脸为性别分类提供了重要的视觉信息 [6, 37]。迄今为止,大多数关于性别分类的工作都涉及面部图像的分析,并使用不同的模式分析来提高分类的准确性 [14, 2, 13, 30]。

Previous work on gender classification from iris images has focused on handcrafted feature extraction methods using normalised NIR iris images [22, 36, 20, 3, 16, 9, 33]. Some research has utilised uniform patterns or combined uniform patterns with non-uniform patterns to improve performance [38, 27]. A small number of methods have used Deep Learning on Soft-biometrics such as gender with periocular NIR images [18, 34, 28].

以往关于从虹膜图像中进行性别分类的研究主要集中在使用归一化近红外(NIR)虹膜图像的手工特征提取方法 [22, 36, 20, 3, 16, 9, 33]。一些研究利用均匀模式或将均匀模式与非均匀模式结合以提高性能 [38, 27]。少数方法在软生物特征(如性别)上使用了深度学习,并结合了周围近红外图像 [18, 34, 28]。

Tapia et al. [31] classified gender directly from the same binary iris-code that is used for recognition. They found that relevant information for predicting gender is distributed across the iris, rather than localised in particular concentric bands. Therefore, selected features representing a subset of the iris region can achieve better results than the whole iris. They reported 89% correct gender prediction by fusing the best features of the iris-code from the left and right eyes.

Tapia 等人 [31] 直接从用于识别的相同二进制虹膜代码中分类性别。他们发现,预测性别的相关信息分布在虹膜上,而不是集中在特定的同心带中。因此,选择代表虹膜区域子集的特征比使用整个虹膜时能取得更好的结果。他们报告称,通过融合左右眼虹膜代码的最佳特征,性别预测的正确率达到了 $89%$。

Bobeldyk et al. [4] explored the gender-prediction accuracy associated with four different regions of NIR iris images: the extended ocular region, the iris-excluded ocular region, the iris-only region, and the normalised iris-only region. They also used a BSIF texture operator to extract features from these four regions. The ocular region gave the best performance at 85.7%, while the normalised (unwrapped) images gave the worst performance, with a decrease of almost 20% relative to the ocular region. A summary of gender classification work is presented in Table 1.

Bobeldyk 等人 [4] 探索了与近红外 (NIR) 虹膜图像中四个不同区域相关的性别预测准确性:扩展的眼部区域、排除虹膜的眼部区域、仅虹膜区域以及归一化的仅虹膜区域。他们还使用了 BSIF 纹理算子从这四个区域中提取特征。眼部区域表现最佳,准确率为 $85.7%$,而归一化或展开的图像表现最差,性能比眼部区域下降了近 $20%$。性别分类工作的总结见表 1。

Table 1. Summary of gender classification methods using eye images. NS: Number of Subjects, I: Iris Images, P: Periocular Images, Th: Thermal, CP: Cellphone Images.

表 1: 使用眼部图像的性别分类方法总结。NS: 受试者数量, I: 虹膜图像, P: 眼周图像, Th: 热成像, CP: 手机图像。

| Paper | I/P | Source | NS | Type | Accuracy (%) |
|---|---|---|---|---|---|
| V. Thomas et al. [36] | I | Iris | N/A | NIR | 75.00 |
| S. Lagree et al. [20] | I | Iris | 300 | NIR | 62.17 |
| A. Bansal et al. [3] | I | Iris | 200 | NIR | 83.60 |
| J. Tapia et al. [35] | I | Iris | 1,500 | NIR | 91.00 |
| M. Fairhurst et al. [9] | I | Iris | 200 | NIR | 89.74 |
| J. Tapia et al. [31] | I | Iris | 1,500 | NIR | 89.00 |
| D. Bobeldyk et al. [4] | I/P | Iris | 1,083 | NIR | 85.70 |
| Kuehlkamp et al. [18] | I/P | Iris | 1,500 | NIR | 80.00 |
| J. Tapia [33] | I/P | Iris | 1,500 | NIR | 79.33 |
| J. Tapia et al. [34] | I | Iris | 1,500 | NIR | 83.00 |
| J. Merkow et al. [21] | P | Face | 936 | VIS | 80.00 |
| C. Chen et al. [8] | P | Face | 1,003 | NIR/Th | 93.59 |
| Castrillon-Santana et al. [7] | P | Face | 1,500 | VIS | 92.46 |
| Rattani et al. [26] | P | Iris | 550 | VIS/CP | 91.60 |
| J. Tapia et al. [32] | P | Iris | 120/120 | NIR/VIS | 90.00 |

2.2. Binary Statistical Image Feature (BSIF)

2.2. 二值统计图像特征 (Binary Statistical Image Feature, BSIF)

BSIF [16] is a local descriptor constructed by binarising the responses to linear filters. In contrast to previous binary descriptors, the filters are learned from thirteen natural images using independent component analysis (ICA). The code value of each pixel is considered a local descriptor of the image intensity pattern in the pixel's surroundings. The value of each element (i.e., bit) in the binary code string is computed by binarising the response of a linear filter with a threshold of zero. Each bit is associated with a different filter, and the length of the bit string determines the number of filters used. The set of filters is learned from a training set of natural image patches by maximising the statistical independence of the filter responses [15] (see Figure 1). The filter responses are computed as follows. Given an image patch $X$ of size $l\times l$ pixels and a linear filter $W_{i}$ of the same size, the filter response $s_{i}$ is obtained by:

$$s_{i}=\sum_{u,v}W_{i}(u,v)X(u,v)=w_{i}^{T}x$$

BSIF [16] 是一种通过二值化线性滤波器响应构建的局部描述符。与之前的二值描述符不同,这些滤波器通过独立成分分析 (ICA) 从十三张自然图像中学习得到。像素的编码值被视为像素周围图像强度模式的局部描述符。二进制代码字符串中每个元素(即比特)的值通过将线性滤波器的响应以零阈值二值化计算得到。每个比特与不同的滤波器相关联,比特串的长度决定了使用的滤波器数量。滤波器组通过最大化滤波器响应的统计独立性从自然图像块的训练集中学习得到 [15](见图 1)。线性滤波器学习的参数细节如下:给定一个大小为 $l\times l$ 像素的图像块 $X$ 和一个相同大小的线性滤波器 $W_{i}$,滤波器响应 $s_{i}$ 通过以下方式获得:

Here, vector notation is introduced in the last step: the vectors $w_{i}$ and $x$ contain the pixels of $W_{i}$ and $X$. The binarised feature $b_{i}$ is obtained by setting $b_{i}=1$ if $s_{i}>0$ and $b_{i}=0$ otherwise. Given $n$ linear filters $W_{i}$, we may stack them into a matrix $W$ of size $n\times l^{2}$ and compute all responses at once, i.e. $s=Wx$. We obtain the bit string $b$ by binarising each element $s_{i}$ of $s$ as above. Thus, given the linear feature detectors $W_{i}$, computation of the bit string $b$ is straightforward. It is also clear that the bit strings for all image patches of size $l\times l$ surrounding each pixel of an image can be computed conveniently by $n$ convolutions.

在后续阶段引入了向量表示法,例如向量 $w$ 和 $x$ 分别包含 $W_{i}$ 和 $X$ 的像素。二值化特征 $b_{i}$ 通过以下方式获得:如果 $s_{i}>0$ ,则设置 $b_{i}=1$ ,否则 $b_{i}=0$ 。给定 $n$ 个线性滤波器 $W_{i}$ ,我们可以将它们堆叠成一个大小为 $n\times l^{2}$ 的矩阵 $W$ ,并一次性计算所有响应,即 $s=W x$ 。我们通过如上所述对 $s$ 的每个元素 $s_{i}$ 进行二值化来获得比特串 $b$ 。因此,给定线性特征检测器 $W_{i}$ ,计算比特串 $b$ 是直接的。此外,显然可以通过 $n$ 次卷积方便地计算图像中每个像素周围大小为 $l\times l$ 的所有图像块的比特串。
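The response and binarisation step described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the function name `bsif_bits` is hypothetical, and the filters here are random stand-ins rather than filters learned with ICA.

```python
import numpy as np

def bsif_bits(patch, filters):
    """Return the n-bit BSIF string for one l x l patch.

    patch:   array of shape (l, l)
    filters: array of shape (n, l, l), one linear filter per bit
    """
    n = filters.shape[0]
    W = filters.reshape(n, -1)        # stack filters into an n x l^2 matrix
    x = patch.reshape(-1)             # flatten the patch into a vector of length l^2
    s = W @ x                         # all filter responses at once: s = W x
    return (s > 0).astype(np.uint8)   # b_i = 1 if s_i > 0, else 0

# Illustrative data only: random filters, NOT ICA-learned BSIF filters.
rng = np.random.default_rng(0)
l, n = 7, 8
filters = rng.standard_normal((n, l, l))
patch = rng.standard_normal((l, l))
bits = bsif_bits(patch, filters)
```

Stacking the filters into one matrix means a single matrix-vector product yields every bit of the code for that patch.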

The final image is obtained by:

$$\mathrm{CodeIm}\leftarrow\mathrm{CodeIm}+(Cr>0)\cdot2^{i},\qquad i=1,\dots,n$$

最终图像通过以下方式获得:

Here, CodeIm is an accumulative image and $Cr$ is the convolution between the $i$-th filter and the image, which is binarised and then multiplied by the power of two for that bit. For instance, if we use 9 bits, we compute CodeIm for $2^{1}$, then for $2^{2}$, and so on up to $2^{9}$. The final image is the sum of the 9 resulting images.

其中,CodeIm 是累积图像,$Cr$ 是滤波器与图像之间的卷积,随后进行二值化并乘以位数。例如,如果我们使用 9 位,那么我们首先计算 $2^{1}$ 的 CodeIm,然后是 $2^{2}$,直到 $2^{9}$。最终图像将是每个 CodeIm 的 9 张图像的总和。
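The accumulation of the code image can be sketched as follows. This is a hedged illustration with several assumptions: the function name `bsif_code_image` is hypothetical, the filters are applied as a sliding-window correlation over an already padded image, and bit $i$ is weighted by $2^{i-1}$ (weights $2^{0}$ to $2^{n-1}$) so that the codes fall in the range $0$ to $2^{n}-1$, which differs by a constant factor of two from the $2^{1}$ to $2^{n}$ weighting in the text.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def bsif_code_image(image, filters):
    """Accumulate binarised filter responses into an integer code image.

    image:   2-D array, assumed to be padded beforehand
    filters: array of shape (n, l, l)
    Returns an array of shape (H - l + 1, W - l + 1) with values in [0, 2**n - 1].
    """
    n, l, _ = filters.shape
    # Every l x l patch of the image: shape (H - l + 1, W - l + 1, l, l).
    windows = sliding_window_view(image, (l, l))
    code = np.zeros(windows.shape[:2], dtype=np.int64)
    for i in range(n):
        # Response of filter i at every position (the "Cr" image).
        cr = np.einsum("hwuv,uv->hw", windows, filters[i])
        # Binarise at zero and add the bit with weight 2**i (assumed weighting).
        code += (cr > 0).astype(np.int64) << i
    return code

# Illustrative data only: random image and random filters.
rng = np.random.default_rng(1)
image = rng.standard_normal((20, 30))
filters = rng.standard_normal((5, 7, 7))
code = bsif_code_image(image, filters)
```

Each pass through the loop corresponds to one filter and one bit, so $n$ filters cost $n$ filtering passes over the image.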

BSIF has been used in several applications, including biometrics from iris images [17, 12, 24]. In this work, a gender classification algorithm using normalised NIR iris images is proposed. It uses a pipeline similar to that of iris recognition systems. The iris is segmented and occlusions are masked. BSIF can be sensitive to image boundaries and to the occlusion mask, which can create artificial texture that may mislead gender classification.

BSIF 已被用于多种应用,包括基于虹膜图像的生物识别 [17, 12, 24]。本文提出了一种使用归一化近红外 (NIR) 虹膜图像的性别分类算法。它采用了与虹膜识别系统类似的流程。虹膜被分割,遮挡部分被掩码。BSIF 对图像边界和遮挡掩码可能敏感,这些可能会产生误导性别分类结果的人工纹理。

This paper explores a new set of filters (see Figure 1) trained from thirteen eye images instead of the natural images used in the traditional approach. The influence of the filter size, the padding (boundaries) and the number of bits used when implementing the MBSIF algorithm is also explored.

本文探讨了一组新的滤波器(见图 1),这些滤波器是从 13 张眼睛图像中训练出来的,而不是传统方法中使用的自然图像。同时还探讨了滤波器大小、填充(边界)以及在实现 MBSIF 算法时使用的比特数的影响。

3. Gender classification using BSIF

3. 使用 BSIF 进行性别分类

This paper proposes the use of the same pipeline that is used for iris recognition systems. The input image is segmented in a pre-processing step. The iris region is then transformed to a polar space and encoded using MBSIF. Finally, gender classification is performed using a new database and several classifiers (Section 3.3).

本文提出使用与虹膜识别系统相同的流程。输入图像在预处理步骤中进行分割。然后,虹膜区域被转换到极坐标空间,并使用 MBSIF 进行编码。最后,使用一个新的数据库和多个分类器进行性别分类(第 3.3 节)。

3.1. Iris Segmentation and Normalisation

3.1. 虹膜分割与归一化

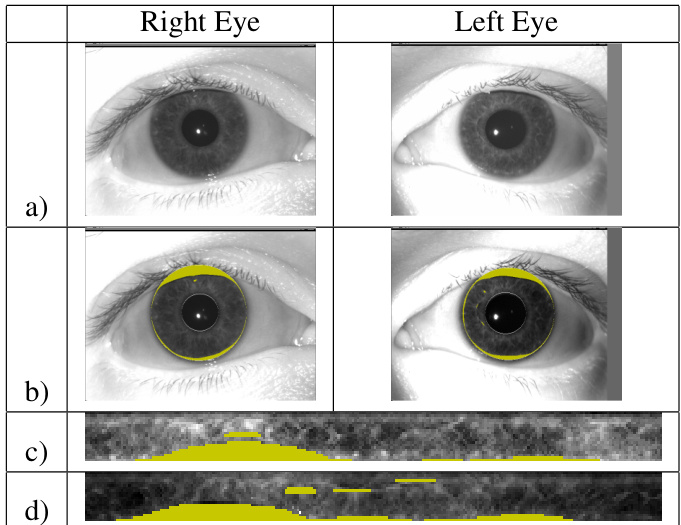

The iris is detected from the input image using the commercial software Osiris [23]. A segmentation mask occludes the eyelids, eyelashes and specular reflection portions of the iris image, which are not useful for gender classification. It is important to note that iris images of different persons, or even the left and right iris images of a given person, may not present exactly the same mask and imaging conditions (see Figure 2). Illumination by LEDs during capture may come from either side of the sensor, so specular highlights may be present in different places in the image. Eyelid and head position may also affect segmentation.

使用商业软件 Osiris [23] 从输入图像中检测虹膜。分割掩码遮挡了虹膜图像中无助于性别分类的眼睑、睫毛和镜面反射部分。需要注意的是,不同人的虹膜图像,甚至同一个人的左右眼虹膜图像,可能不会呈现完全相同的掩码和成像条件(见图 2)。在捕捉过程中,LED 的照明可能来自传感器的任一侧,镜面高光可能出现在图像的不同位置。眼睑和头部位置也可能影响分割。

Figure 1. Left: example of patches extracted from natural images for traditional BSIF. Right: example of patches extracted from eye images for Modified BSIF.

图 1: 左图,传统 BSIF 从自然图像中提取的补丁示例。右图,改进的 BSIF 从眼部图像中提取的补丁示例。

The segmented iris is normalised, or unwrapped, with radial $(r)$ and angular $(\theta)$ resolutions that determine the size of the rectangular iris image. The size of the normalised iris can significantly influence the iris recognition rate. In this work, a rectangular image of $20\,(r)\times240\,(\theta)$ pixels, created using Osiris software [23] with automatic segmentation, is used for all experiments.

分割后的虹膜通过径向 $(r)$ 和角度 $(\theta)$ 分辨率进行归一化或展开,这些分辨率决定了矩形虹膜图像的大小。归一化虹膜的大小会显著影响虹膜识别率。在本研究中,使用 Osiris 软件 [23] 自动分割生成的 $20(r)\mathbf{x}~240(\theta)$ 矩形图像用于所有实验。

Figure 2. Two original images from right and left eye (a). Segmented and masked images with eyelid and eyelash detection using Osiris (b). Images (c) and (d) are normalised images from the right and left eye both with the mask in yellow.

图 2: 来自右眼和左眼的两张原始图像 (a)。使用 Osiris 进行眼睑和睫毛检测后的分割和掩码图像 (b)。图像 (c) 和 (d) 是来自右眼和左眼的归一化图像,均带有黄色掩码。

3.2. BSIF filters application

3.2. BSIF 滤波器应用

The BSIF filters are convolved with each normalised masked image. Each filter represents a different pattern. The final image combines the binarised response images of all filters, each weighted by a power of two, into an $n$-bit code. The best filter size is one that represents the correct size of the mask with the lowest number of bits. If the filter is smaller than the mask, then artificial texture information will be created and the resulting image will not represent its original information well. On the other hand, if the mask of the iris is larger than the filter, a flat area will be obtained and the filter will need to be adjusted by reducing its size. Since the size of the normalised iris image is $20\times240$, special care needs to be taken to minimise boundary effects and their influence on filter size. A common approach to dealing with border effects is to pad the original image with extra rows and columns based on the filter size.

BSIF 滤波器计算与每个归一化掩码图像的卷积。每个滤波器代表不同的模式。最终图像是所有先前图像通过 $2^{n}$ 位二值化的结果。最佳滤波器大小是能够以最低位数正确表示掩码大小的滤波器。如果滤波器小于掩码,则会创建人工纹理信息,生成的图像将无法很好地表示其原始信息。另一方面,如果虹膜的掩码大于滤波器,则会获得平坦区域,滤波器需要通过减小其大小进行调整。由于归一化虹膜图像的大小为 $20\times240$ ,因此需要特别注意以最小化边界效应及其对滤波器大小的影响。处理边界效应的常见方法是根据滤波器大小在原始图像周围添加额外的行和列。

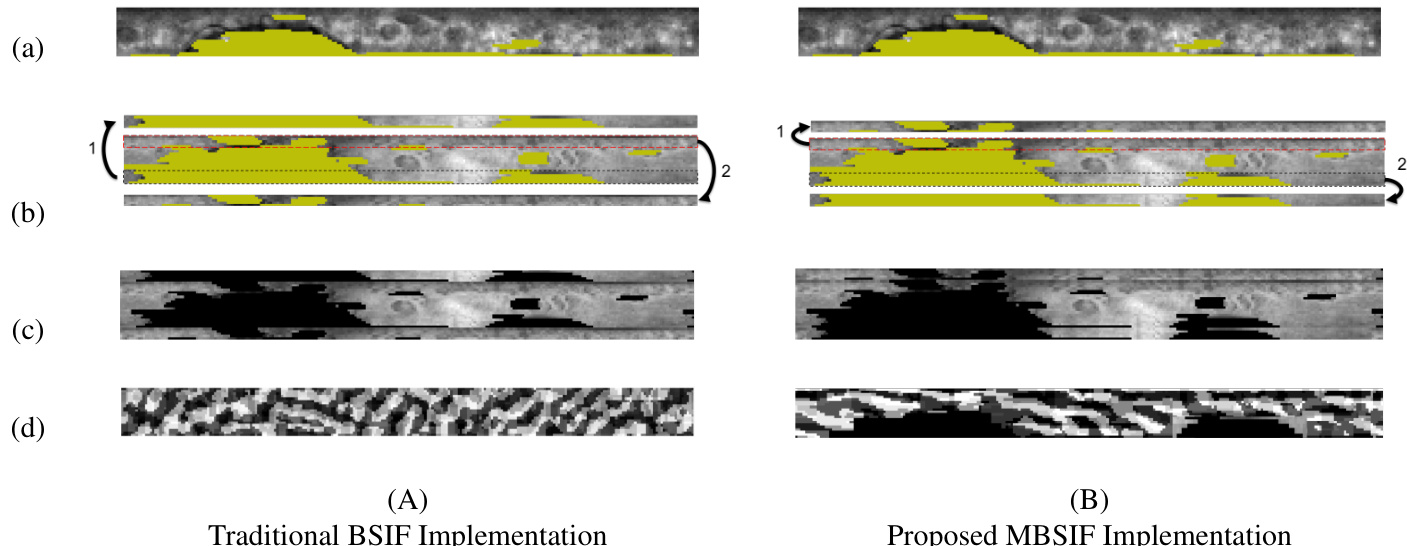

The traditional implementation of BSIF increases the size of the image and wraps the filter around it. Unfortunately, this implementation directly affects the results of the binarised iris image. Figure 3 (A) shows an example where this implementation is used. The first row (a) shows the normalised iris image obtained directly from Osiris software [23]. The second row (b) shows the extra rows added through the wrapping process. A $5\times240$ pixel band is added to the top and bottom of the original image. Additional bands of $5\times20$ pixels are added to the vertical sides of the image (left and right). Note that the horizontal band added to the top of the image represents the bottom of the original image (mask area), and the horizontal band added to the bottom of the image represents the top of the original image (Figure 3, column (A), row (b)). This implementation directly affects the resulting binarised image, since the added boundary creates artificial texture, as can be seen in the resulting images in Figure 3, column (A), row (d).

传统 BSIF 的实现会增加图像的尺寸并将滤波器环绕在图像周围。不幸的是,这种实现方式会直接影响二值化虹膜图像的结果。图 3 (A) 展示了使用这种实现方式的示例。第一行 (a) 显示了直接从 Osiris 软件 [23] 中获取的归一化虹膜图像。第二行 (b) 展示了通过环绕过程添加的额外行。在原始图像的顶部和底部添加了一个 $5\times240$ 像素的带。在图像的垂直侧(左侧和右侧)添加了 $5\times20$ 像素的额外带。请注意,添加到图像顶部的水平带代表原始图像的底部(掩码区域),而添加到图像底部的水平带代表原始图像的顶部(图 3,列 (A),行 (b))。这种实现方式会直接影响生成的二值化图像,因为添加的边界会创建人工纹理,如图 3 列 (A) 行 (d) 中的结果图像所示。

An alternative way to deal with border effects is to pad the original image with zeros (or another constant value), to reflect the image at the borders, or to replicate the first and last row/column as many times as needed.

处理边界效应的另一种方法是用零(或常数值)填充原始图像,在边界处反射图像或根据需要多次复制第一行/列和最后一行/列。

Figure 3. Column (A) shows an example of the traditional BSIF implementation. (a) corresponds to the input normalised iris image with the mask information in yellow. (b) illustrates the padding, where bands 1 and 2 are wrapped around the image, and (c) shows the resulting image after applying BSIF filters (11x11 pixels and 9 bits). A similar example is shown in column (B). In this case, two bands of pixels (1 and 2) are replicated at the top and bottom of the image. The resulting image in (d) replicates the mask of the input image without adding extra artificial texture.

图 3: 列 (A) 展示了传统 BSIF 实现的示例。(a) 对应输入归一化的虹膜图像,黄色部分为掩码信息。(b) 展示了在图像上实现的填充,其中波段 1 和 2 被包裹在图像上,(c) 是应用 BSIF 滤波器 (11x11 像素和 9 位) 后的结果图像。列 (B) 展示了一个类似的示例。在这种情况下,图像顶部和底部复制了两个像素波段 (1 和 2)。(d) 中的结果图像复制了输入图像的掩码,没有添加额外的人工纹理。

To overcome the boundary effect of the traditional BSIF implementation, a portion of the image is replicated in both directions (top and bottom). Figure 3, column (B), shows the details of this implementation and its effect on binarised images. As can be seen in column (B), row (d), the resulting binarised image follows the pattern of the input masked image. Therefore, no extra information is artificially added to the iris image. This approach should better represent the information contained in the input images. A new set of filters was obtained by using a novel set of eye images (instead of natural ones). These images were used to extract patches and to train our modified version of the algorithm (MBSIF). In the experimental section, several filter sizes are tested and compared. Two approaches are implemented: MBSIF and MBSIF histogram.

为了克服传统BSIF实现的边界效应,图像的一部分在两个方向(顶部和底部)上进行了复制。图3的(B)列展示了这种实现的细节及其对二值化图像的影响。如(B)列(d)行所示,生成的二值化图像遵循输入掩码图像的模式。因此,没有人为地向虹膜图像添加额外的信息。这种方法应该能更好地表示输入图像中包含的信息。通过使用一组新的眼睛图像(而不是自然图像)获得了一组新的滤波器。这些图像用于提取图像块并训练我们修改后的算法版本(MBSIF)。在实验部分,测试并比较了几种滤波器尺寸。实现了两种方法,MBSIF和MBSIF直方图。
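The difference between the two padding strategies can be illustrated with `numpy.pad`. This is a sketch under stated assumptions: the helper names are hypothetical, `mode="wrap"` stands in for the wrap-around padding of column (A), and `mode="edge"` (repeating the border rows and columns) is only one possible reading of the replication used in column (B); the exact replication scheme is not specified in the text.

```python
import numpy as np

def pad_wrap(image, band):
    """Wrap-around padding: the band above the image is copied from its bottom rows."""
    return np.pad(image, band, mode="wrap")

def pad_replicate(image, band):
    """Replication padding: border rows/columns are repeated (assumed reading of (B))."""
    return np.pad(image, band, mode="edge")

# A synthetic 20 x 240 "normalised iris" whose values identify their position.
iris = np.arange(20 * 240, dtype=float).reshape(20, 240)
band = 5  # half-width of an 11 x 11 filter

wrapped = pad_wrap(iris, band)
replicated = pad_replicate(iris, band)
```

With wrapping, the rows placed above the image come from its bottom, which is where the eyelid mask usually sits, so mask pixels end up next to unmasked texture. With replication, the padded rows only repeat what is already at that border.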

3.3. Gender classification

3.3. 性别分类

Several classification algorithms are used to predict gender from iris texture images: AdaBoost M1, LogitBoost, GentleBoost, RobustBoost, LPBoost, TotalBoost and RUSBoost. Additionally, a Random Forest classifier with 500 trees and the Gini index, and a LIBSVM classifier with a Gaussian (RBF) kernel, were used. A comparison of the results obtained with these classifiers is shown in Section 4.

使用了几种分类算法来测试从虹膜纹理图像中提取的性别信息。这些算法包括:Adaboost M1、LogitBoost、Gentle Boost、Robust Boost、LP-Boost、TotalBoost 和 RusBoost。此外,还使用了包含 500 棵树的随机森林分类器、基尼指数以及带有高斯核 (RBF) 的 LIBSVM 分类器。第 4 节展示了这些分类器结果的比较。

3.4. Databases

3.4. 数据库

GFI-UND: The GFI-UND database used in this paper contains images taken with an LG 4000 sensor. This is the same dataset used in [31]. The LG 4000 uses near-infrared illumination and acquires 480x640, 8-bit/pixel images. Examples of iris images are shown in Figure 2. The GFI-UND iris database was used to train and test a gender classifier.

GFI-UND:本文使用的 GFI-UND 数据库包含使用 LG 4000 传感器拍摄的图像。该数据集与 [31] 中使用的相同。LG 4000 使用近红外照明并获取 480x640、8 位/像素的图像。虹膜图像的示例如图 2 所示。GFI-UND 虹膜数据库用于训练和测试性别分类器。

For each subject (750 males and 750 females, for a total of 3,000 images), one left eye image was selected at random from the set of left eye images, and one right eye image was selected at random from the set of right eye images. A training portion of the dataset was created by randomly selecting 80% of the males and 80% of the females. A 5-fold cross-validation on this training set is used to select parameters for each classifier. Once the parameter selection was finalised, a classifier was trained on the full training portion (80% of the data), and a single evaluation was made on the remaining 20% held out for testing. Experiments are conducted separately for the left iris and the right iris. The masks were set to zero in all images. To the authors' understanding, the GFI-UND database [31] is the only dataset created exclusively for gender classification from iris images. It is a person-disjoint set with 1,500 different subjects.

对于每个受试者(750名男性和750名女性,共3,000张图像),从左眼图像集中随机选择一张左眼图像,从右眼图像集中随机选择一张右眼图像。通过随机选择80%的男性和80%的女性,创建了数据集的训练部分。在该训练集上进行5折交叉验证,以选择每个分类器的参数。一旦参数选择完成,就在80%的训练数据上训练分类器,并在20%的测试数据上进行一次评估。实验分别对左眼虹膜和右眼虹膜进行。所有图像中的掩码都设置为零。据作者了解,GFI-UND数据库 [31] 是唯一一个专门为虹膜图像性别分类创建的数据集。它是一个包含1,500名不同受试者的人体不相交集。
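The 80/20 split by gender described above can be sketched as follows. This is an illustrative reconstruction, not the authors' code: the function name, the seed and the synthetic subject identifiers are assumptions. Splitting at the level of subjects keeps the training and test portions person-disjoint.

```python
import numpy as np

def stratified_subject_split(subject_ids, genders, train_fraction=0.8, seed=0):
    """Split subjects into train/test, taking the same fraction of each gender."""
    rng = np.random.default_rng(seed)
    subject_ids = np.asarray(subject_ids)
    genders = np.asarray(genders)
    train, test = [], []
    for g in np.unique(genders):
        ids = rng.permutation(subject_ids[genders == g])  # shuffle subjects of one gender
        cut = int(round(train_fraction * len(ids)))
        train.extend(ids[:cut])
        test.extend(ids[cut:])
    return np.array(train), np.array(test)

# Synthetic identifiers standing in for the 750 male and 750 female subjects.
subject_ids = np.arange(1500)
genders = np.array(["M"] * 750 + ["F"] * 750)
train_ids, test_ids = stratified_subject_split(subject_ids, genders)
```

With 750 subjects per gender this gives 600 training and 150 test subjects for each gender, so each eye is evaluated on 300 test images.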

UNAB-Val: As an additional contribution, a new gender-labelled database was created. This is a person-disjoint dataset that was captured using an iCAM TD-100 NIR sensor. The iCAM TD-100 uses near-infrared illumination and acquires 480x640, 8-bit/pixel images. This set of iris images was obtained over 5 sessions with 66 female and 70 male subjects. Each subject has 5 images per eye. In total, 660 female images and 700 male images were captured. The database will continue to grow, since the capturing process is still active at the time of writing. This database will be available upon request. Additional datasets were requested but unfortunately were not available [19, 29, 25].

UNAB-Val:作为额外的贡献,创建了一个新的性别标注数据库。这是一个使用 iCAM TD-100 近红外传感器捕获的人员不相交数据集。iCAM TD-100 使用近红外照明并获取 480x640、8 位/像素的图像。这组虹膜图像是在 5 个会话中从 66 名女性和 70 名男性受试者中获得的。每个受试者每只眼睛有 5 张图像。总共捕获了 660 张女性图像和 700 张男性图像。由于截至撰写本文时捕获过程仍在进行中,该数据库将持续增加。该数据库将根据请求提供。还请求了其他数据集,但不幸的是无法获得 [19, 29, 25]。

4. Experiments and results

4. 实验与结果

Several experiments were performed to test the use of MBSIF for gender classification. Figure 4 shows gender classification results when using the left and right eye datasets (from the GFI-UND database) and 10 different classifiers. In this experiment, the BSIF algorithm was implemented using the standard padding shown in Figure 3 (A). The best classifiers for both eyes are AdaBoost and SVM. Several filter sizes (from $5\times5$ up to $13\times13$) and numbers of bits (from 5 to 12) were used. The best results are shown in Figure 4. They were achieved with a filter size of $13\times13$ and 8 bits for the left eye images, and a filter size of $13\times13$ and 7 bits for the right eye. The maximum classification rate obtained with this implementation (BSIF) was 65% and 67% for the left and right eye respectively.

为了测试 MBSIF 在性别分类中的应用,进行了多项实验。图 4 展示了使用左右眼数据集(来自 GFI-UND 数据库)和 10 种不同分类器时的性别分类结果。在本实验中,BSIF 算法使用了如图 3 (A) 所示的标准填充方式。左右眼的最佳分类器分别是 Adaboost 和 SVM。实验中使用了多种滤波器尺寸(从 $5~\times5$ 到 $13\times13$)以及 5 到 12 位的位数。最佳结果如图 4 所示,这些结果是在左眼图像使用 $13\times13$ 的滤波器尺寸和 8 位,右眼图像使用 $13\times13$ 的滤波器尺寸和 7 位时获得的。使用该实现(BSIF)获得的最大分类率分别为左眼 $65%$ 和右眼 $67%$。

Figure 4. Classification rates for the left and right eye when using several classifiers and the standard BSIF implementation.

图 4: 使用几种分类器和标准 BSIF 实现时左右眼的分类率。

To find the best classification rate with our proposed MBSIF algorithm, several filter sizes (5x5, 7x7, 9x9, 11x11, 13x13, 15x15 and 17x17) with different numbers of bits (from 5 up to 12) were tested. The number of bits represents the number of filters used in the convolution. Experiments were performed using the entire image (all the filter sizes and 5 to 12 bits) and using the normalised histogram of the images (see Figure 5). One advantage of using the normalised histogram is that the feature vector of each image is smaller: its length depends only on the number of bits. For instance, when using 5 bits the resulting vector has 32 bins, when using 6 bits it has 64 bins, and so on. Figure 5 shows results for the left and right eye images using our proposed implementation of BSIF for both cases: when using the entire image and when using the histogram. For left eye images, the best result (94.33%) was obtained with the 11x11 filter and 6 bits. This represents 144 correct identifications out of 150 male images and 140 correct identifications out of 150 female images. A slightly better result was achieved when using the MBSIF histogram (94.66%). In this case, the best result was obtained with an 11x11 filter and 10 bits (1024 bins). For right eye images, the best result when using the proposed MBSIF implementation was 91.66%, obtained with an 11x11 filter and 10 bits (2,048 bins). Gender classification results were slightly better when using the MBSIF histogram (92.00%). In this case, the results represent 140 out of 150 male images and 136 out of 150 female images.

为了找到我们提出的 MBSIF 算法的最佳分类率,我们测试了多种滤波器大小(5x5、7x7、9x9、11x11、13x13、15x15 和 17x17)以及不同的比特数(从 5 比特到 12 比特)。比特数表示卷积中使用的滤波器数量。实验使用了整张图像(所有滤波器大小和 5-12 比特)以及图像的归一化直方图(见图 5)。使用归一化直方图的一个优势是每张图像的向量尺寸更小。它仅取决于比特数。例如,当使用 5 比特时,生成的向量有 32 个 bin,而使用 6 比特时,生成的向量有 64 个 bin,以此类推。图 5 展示了使用我们提出的 BSIF 实现时,左眼和右眼图像在使用整张图像和使用直方图两种情况下的结果。对于左眼图像,使用 11x11 滤波器和 6 比特时获得了最佳结果 $(94.33%)$。这表示在 150 张男性图像中有 144 张正确识别,在 150 张女性图像中有 140 张正确识别。当使用 MBSIF 直方图时,结果略有提升 $(94.66%)$。在这种情况下,使用 11x11 滤波器和 10 比特(1024 个 bin)获得了最佳结果。对于右眼图像,使用我们提出的 MBSIF 实现时,最佳结果为 $91.66%$,使用 11x11 滤波器和 10 比特(2048 个 bin)获得。当使用 MBSIF 直方图时,性别分类结果略好 $(92.00%)$。在这种情况下,结果表示在 150 张男性图像中有 140 张正确识别,在 150 张女性图像中有 136 张正确识别。
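The histogram representation mentioned above can be sketched as follows: an image of $n$-bit codes is reduced to a vector with $2^{n}$ bins. The function name `bsif_histogram` is hypothetical, and the sketch assumes integer codes in the range $0$ to $2^{n}-1$.

```python
import numpy as np

def bsif_histogram(code_image, n_bits):
    """Normalised histogram of an n-bit code image, with 2**n_bits bins."""
    counts = np.bincount(code_image.ravel(), minlength=2 ** n_bits).astype(float)
    total = counts.sum()
    return counts / total if total > 0 else counts

# Illustrative data only: random 5-bit codes on a 20 x 240 grid.
rng = np.random.default_rng(2)
codes = rng.integers(0, 2 ** 5, size=(20, 240))
features = bsif_histogram(codes, n_bits=5)
```

The length of the feature vector depends only on the number of bits (32 bins for 5 bits, 64 for 6 bits), not on the image size, which is the advantage noted in the text.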

A summary of the best results obtained from the experiments is shown in Table 2. The best gender classification rates were achieved when the boundaries of the normalised iris texture were replicated (Figure 3(B)) instead of wrapped around (Figure 3(A)). The algorithm was trained using the GFI-UND database and tested using the GFI-UND-Val and UNAB-Val datasets. The difference between the two datasets was only 4% on average.

实验中获得的最佳结果总结如表 2 所示。当标准化虹膜纹理的边界被复制时(图 3(B)),而不是环绕时(图 3(A)),获得了最佳性别分类率。该算法使用 GFI-UND 数据库进行训练,并使用 GFI-UND-Val 和 UNAB-Val 数据集进行测试。两个数据集的平均差异仅为 $4%$。

Table 2. Summary of gender classification rates using BSIF, MBSIF and MBSIF histogram. FS: Filter Size, NB: Number of bits.

表 2. 使用 BSIF、MBSIF 和 MBSIF 直方图的性别分类率总结。FS: 滤波器大小,NB: 位数。

| Method | FS | NB | Database | Left eye (%) | Right eye (%) |
|---|---|---|---|---|---|
| BSIF (A) | 11×11 | 12 | GFI-UND | 61.67 | 67.00 |
| MBSIF (B) | 11×11 | 6 | GFI-UND | 94.33 | 91.66 |
| MBSIF-H (B) | 11×11 | 10 | GFI-UND | 94.66 | 92.00 |

5. Conclusions

5. 结论

BSIF filters can extract and encode general patterns present in traditional images such as faces or periocular images, but when they are applied to normalised iris images with masks, artificial textures are produced. These artificial textures can affect gender classification rates. The experiments in this paper show that special care needs to be taken at the boundaries when using BSIF filters.

BSIF滤波器可以提取和编码传统图像(如面部或眼周图像)中的一般模式,但当应用于带有掩码的归一化虹膜图像时,会产生人工纹理。这些人工纹理可能会影响性别分类的准确率。本文通过实验表明,在处理BSIF滤波器时,需要特别注意边界问题。

The patterns detected by the traditional BSIF method do not represent the texture of the iris well. Traditional BSIF uses thirteen natural images to create the filter patches. The filters created from eye images were more suitable for capturing the texture inside the iris. This also allows the gender classification rate to be improved.

传统 BSIF 方法检测到的模式并不能很好地表示虹膜的纹理。传统 BSIF 使用十三张自然图像来创建滤波器补丁。从眼部图像创建的滤波器更适合捕捉虹膜内部的纹理。这也使得性别分类率得以提高。

The traditional BSIF setting increases the image size by wrapping the image values. This implementation has an impact on gender classification rates when using masked normalised iris images. Under this setting, gender classification rates of only 61% and 67% were achieved for left and right eye images respectively. To overcome the boundary effect of the traditional BSIF implementation, a portion of the image is replicated in both directions (top and bottom). This implementation improved the gender classification results considerably, up to 94% and 92% for left and right eye images respectively. The best results were achieved when the MBSIF histogram was used. There are clear computational advantages to predicting gender from the normalised image rather than computing another, different texture representation. This method can easily be included in the same pipeline as recognition systems. The use of the normalised iris can reduce computational cost thanks to the small size of the image. This is particularly important when large amounts of data need to be processed, such as for gender classification in highly populated countries (e.g., India, China). Experiments were validated using two databases and several classifiers. The gender classification results obtained were competitive with the state of the art. As an additional contribution, a new gender-labelled database was created and will be available to other researchers upon request.

传统BSIF设置通过环绕图像值来增加图像大小。这种实现在使用掩码归一化虹膜图像时对性别分类率有影响。在此设置下,左右眼图像的性别分类率分别仅为$61%$和$67%$。为了克服传统BSIF实现的边界效应,图像的一部分在两个方向(顶部和底部)被复制。这种实现显著提高了性别分类结果,左右眼图像分别达到$94%$和$92%$。使用MBSIF直方图时获得了最佳结果。从归一化图像中预测性别而不是计算另一种不同的纹理表示具有明显的计算优势。这种方法可以轻松地集成到识别系统的同一流程中。由于图像尺寸较小,使用归一化虹膜可以降低计算成本。这在需要处理大量数据时尤为重要,例如在人口众多的国家(如印度、中国)进行性别分类。实验使用两个数据库和多个分类器进行了验证。获得的性别分类结果与现有技术水平相当。作为额外的贡献,创建了一个新的性别标记数据库,并将应要求提供给其他研究人员。

Figure 5. Gender classification results using MBSIF and BSIF histogram for left and right eye images with different filter sizes and numbers of bits from 5 up to 12.

图 5: 使用不同滤波器大小和位数(从 5 到 12)时,左右眼图像的 MBSIF 和 BSIF 直方图的性别分类结果。

References

参考文献

6. Acknowledgments

6. 致谢

This research was partially funded by FONDECYT INICIACION 11170189 and Universidad Andres Bello, DCI.

本研究部分由FONDECYT INICIACION 11170189和Universidad Andres Bello, DCI资助。