MaTVLM: Hybrid Mamba-Transformer for Efficient Vision-Language Modeling


Yingyue Li1, Bencheng Liao2,1, Wenyu Liu1, Xinggang Wang1,✉

1 School of EIC, Huazhong University of Science & Technology

2 Institute of Artificial Intelligence, Huazhong University of Science & Technology

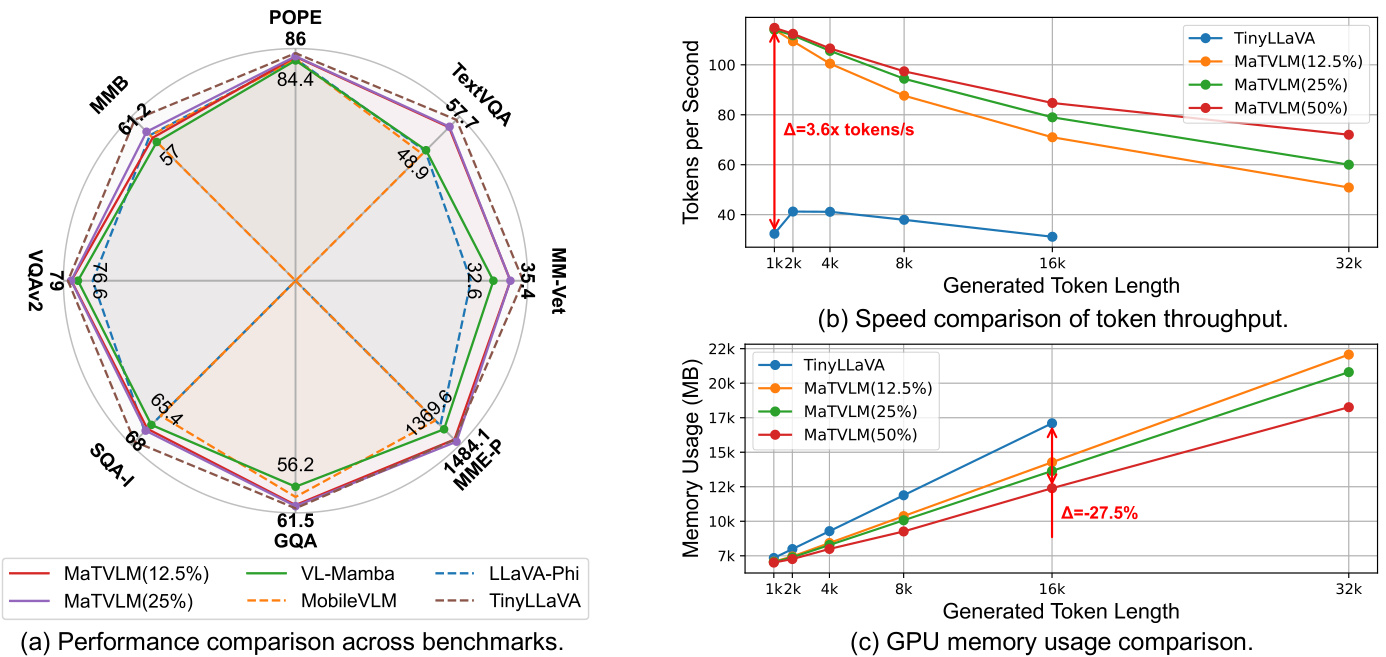

Figure 1. Comprehensive comparison of our MaTVLM. (a) Performance comparison across multiple benchmarks. Our MaTVLM achieves competitive results with the teacher model TinyLLaVA, surpassing existing VLMs with similar parameter scales as well as Mamba-based VLMs. (b) Token throughput comparison: tokens generated per second for different token lengths. Our MaTVLM achieves a $3.6\times$ speedup compared to the teacher model TinyLLaVA. (c) GPU memory usage comparison: memory usage during inference for different token lengths, showing a $27.5\%$ reduction for our MaTVLM over TinyLLaVA.

Abstract


With the advancement of RNN models with linear complexity, the quadratic complexity challenge of transformers has the potential to be overcome. Notably, the emerging Mamba-2 has demonstrated competitive performance, bridging the gap between RNN models and transformers. However, due to sequential processing and vanishing gradients, RNN models struggle to capture long-range dependencies, limiting contextual understanding. This results in slow convergence, high resource demands, and poor performance on downstream understanding and complex reasoning tasks. In this work, we present a hybrid model, MaTVLM, obtained by substituting a portion of the transformer decoder layers in a pre-trained VLM with Mamba-2 layers. Leveraging the inherent relationship between attention and Mamba-2, we initialize Mamba-2 with the corresponding attention weights to accelerate convergence. Subsequently, we employ a single-stage distillation process, using the pre-trained VLM as the teacher model to transfer knowledge to the MaTVLM, further enhancing convergence speed and performance. Furthermore, we investigate the impact of different distillation losses within our training framework. We evaluate the MaTVLM on multiple benchmarks, demonstrating competitive performance against the teacher model and existing VLMs while surpassing both Mamba-based VLMs and models of comparable parameter scales. Remarkably, the MaTVLM achieves up to $3.6\times$ faster inference than the teacher model while reducing GPU memory consumption by $27.5\%$, all without compromising performance. Code and models are released.

1. Introduction


Large vision-language models (VLMs) have rapidly advanced in recent years [4, 11, 12, 28, 38, 39, 49, 63]. VLMs are predominantly built on the transformer architecture. However, because the cost of transformers grows quadratically with sequence length, VLMs are computationally intensive for both training and inference. Recently, several RNN models [17, 19, 23, 45, 56] have emerged as potential alternatives to transformers, offering linear scaling with respect to sequence length. Notably, Mamba [17, 23] has shown exceptional performance in long-range sequence tasks, surpassing transformers in computational efficiency.

Several studies [29, 42, 62] have explored integrating the Mamba architecture into VLMs by replacing transformer-based large language models (LLMs) with Mamba-based LLMs. These works have demonstrated competitive performance while achieving significant gains in inference speed. However, these approaches have several limitations: (1) Mamba employs sequential processing, which limits its ability to capture global context compared to transformers, thereby restricting these VLMs' performance in complex reasoning and problem-solving tasks [52, 55]; (2) the sequential nature of Mamba results in inefficient gradient propagation during long-sequence training, leading to slow convergence when training VLMs from scratch. As a result, the high computational cost and the large amount of training data required become significant bottlenecks for these VLMs; (3) the current training scheme for these VLMs is complex, requiring multi-stage training to achieve optimal performance. This process is both time-consuming and computationally expensive, making it difficult to scale Mamba-based VLMs for broader applications.

To address the aforementioned issues, we propose a novel Mamba-Transformer Vision-Language Model (MaTVLM) that integrates Mamba-2 and transformer components, striking a balance between computational efficiency and overall performance. First, attention and Mamba are inherently connected: removing the softmax from attention transforms it into a linear RNN, revealing its structural similarity to Mamba. We analyze this relationship in detail in Sec. 3.2. Furthermore, studies applying Mamba to large language models (LLMs) [47, 48] have demonstrated that hybrid models containing Mamba layers outperform both pure Mamba-based and pure transformer-based models on certain tasks. Motivated by this connection and these empirical findings, we view combining Mamba with transformer components as a promising direction that trades off reasoning capability against computational efficiency. Specifically, we adopt TinyLLaVA [63] as the base VLM and replace a portion of its transformer decoder layers with Mamba decoder layers while keeping the rest of the model unchanged.

To minimize the training cost of the MaTVLM while maximizing its performance, we propose to distill knowledge from the pre-trained base VLM. First, we initialize Mamba-2 with the corresponding attention weights as described in Sec. 3.2, which is important for accelerating the convergence of the Mamba-2 layers. Moreover, during distillation training, we employ both probability distribution and layer-wise distillation losses to guide the learning process, making only Mamba-2 layers trainable while keeping transformer layers fixed. Notably, unlike most VLMs that require complex multi-stage training, our approach involves a single-stage distillation process.

Despite the simplified training approach, our model performs well across multiple benchmarks, as illustrated in Fig. 1. It achieves competitive results compared to the teacher model, TinyLLaVA, and outperforms Mamba-based VLMs as well as other transformer-based VLMs with similar parameter scales. The efficiency of our model is further shown by a $3.6\times$ speedup and a $27.5\%$ reduction in memory usage, which are practical advantages in real-world applications. These results underscore the effectiveness of our approach and provide a promising avenue for future model development and optimization.

In summary, this paper makes three significant contributions:


• We propose a new hybrid VLM architecture, MaTVLM, that effectively integrates Mamba-2 and transformer components, balancing computational efficiency with high performance.

• We propose a novel single-stage knowledge distillation approach for Mamba-Transformer hybrid VLMs. By leveraging pre-trained knowledge, our method accelerates convergence, enhances model performance, and strengthens visual-linguistic understanding.

• We demonstrate that our approach achieves a $3.6\times$ faster inference speed and a $27.5\%$ reduction in memory usage while maintaining performance competitive with the base VLM. Moreover, it outperforms Mamba-based VLMs and existing VLMs with similar parameter scales across multiple benchmarks.

2. Related Work


2.1. Efficient VLMs


In recent years, efficient and lightweight VLMs have advanced significantly. Several academic-oriented VLMs, such as TinyLLaVA-3.1B [63], MobileVLM-3B [14], and LLaVA-Phi [65], have been developed to improve efficiency. Meanwhile, commercially oriented models like Qwen2.5-VL-3B [5], InternVL2.5-2B [10], and others achieve remarkable performance by leveraging large-scale datasets with high-resolution images and long-context text.

Our work prioritizes efficiency and resource constraints over large-scale, commercially oriented training. Unlike previous approaches, by integrating Mamba-2 [17], our method achieves competitive performance while significantly reducing computational demands, making it well-suited for deployment in resource-limited environments.

2.2. Structured State Space Models


Structured state space models (S4) [17, 23, 24, 26, 41, 44] scale linearly with sequence length. Mamba [23] introduces selective SSMs, while Mamba-2 [17] refines this by linking SSMs to attention variants, achieving a $2{-}8\times$ speedup and performance comparable to transformers. Mamba-based VLMs [29, 42, 62, 66] primarily replace the transformer-based large language model (LLM) entirely with the pre-trained Mamba-2 language model, achieving both competitive performance and enhanced computational efficiency.

Our work integrates Mamba and transformer layers within VLMs, combining their strengths rather than entirely replacing transformers with Mamba-2. By adopting a hybrid approach and introducing a single-stage distillation strategy, we enhance model expressiveness and achieve superior performance over previous Mamba-based VLMs while maintaining computational efficiency for practical deployment.

2.3. Hybrid Mamba and Transformer


Recent works, such as Mamba In Llama [48] and MOHAWK [6], demonstrate the effectiveness of hybrid Mamba-Transformer architectures in LLMs, achieving notable improvements in efficiency and performance. Additionally, Mamba Vision [27] extends this hybrid approach to vision models, introducing a Mamba-Transformer-based backbone that excels in image classification and other vision-related tasks, showcasing the potential of integrating SSMs with transformers.


Unlike previous studies on LLMs or vision backbones, our work extends the hybrid Mamba-Transformer to VLMs and designs a concise architecture with an efficient single-stage distillation strategy, enhancing convergence, reducing inference time, and lowering memory consumption for practical deployment.

2.4. Knowledge Distillation


More recently, knowledge distillation for LLMs has gained attention [2, 25, 32, 50, 53], while studies on VLM distillation remain limited [20, 51, 54]. DistillVLM [20] uses MSE loss to align attention and feature maps, MAD [51] aligns visual and text tokens, and LLAVADI [54] highlights the importance of joint token and logit alignment.


Building on these advancements, we integrate knowledge distillation into a hybrid Mamba-Transformer framework with a single-stage distillation strategy to transfer knowledge from a transformer-based teacher model. This improves convergence, enhances performance, and reduces computational costs for efficient VLM deployment.


3. Method


Large vision-language models (VLMs) process longer sequences than LLMs, resulting in slower training and inference. As previously mentioned, the Mamba-2 architecture scales linearly with sequence length and is significantly more efficient than the transformer. To leverage these advantages, we propose a hybrid VLM architecture, MaTVLM, that integrates Mamba-2 and transformer components, aiming to balance computational efficiency with performance.

3.1. Mamba Preliminaries


Mamba [23] is mainly built upon the structured state-space sequence models (S4) as in Eq. 1, which are a recent development in sequence modeling for deep learning, with strong connections to RNNs, CNNs, and classical state space models.


Mamba introduces selective state space models (Selective SSMs), shown in Eq. 2. Unlike the standard linear time-invariant (LTI) formulation in Eq. 1, a selective SSM can selectively focus on or ignore inputs at each timestep. It has been shown to surpass LTI SSMs on information-rich tasks such as language processing, especially as the state size $N$ grows, which allows it to hold more information.
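The displayed forms of Eq. 1 and Eq. 2 are not reproduced in this text. As a reference point only, and assuming the discrete-time notation commonly used for SSMs in the Mamba-2 paper [17] (the symbols $h_t$, $A$, $B$, $C$ follow that convention and may differ from the authors' exact typesetting), the two recurrences can be written as:

```latex
% Assumed form of Eq. 1: LTI SSM, parameters shared across all timesteps
\begin{align}
h_t &= A\,h_{t-1} + B\,x_t, & y_t &= C^{\top} h_t
\end{align}
% Assumed form of Eq. 2: selective SSM, parameters depend on the input at step t
\begin{align}
h_t &= A_t\,h_{t-1} + B_t\,x_t, & y_t &= C_t^{\top} h_t
\end{align}
```

The only difference between the two is the subscript $t$ on the parameters, which is what lets the selective variant keep or discard information depending on the current input.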

Mamba-2 [17] advances Mamba's selective SSMs by introducing the state-space duality (SSD) framework, which establishes a theoretical link between SSMs and various attention mechanisms through different decompositions of structured semi-separable matrices. Leveraging this framework, Mamba-2 achieves $2{-}8\times$ faster computation while maintaining competitive performance with transformers.

3.2. Hybrid Attention with Mamba for VLMs


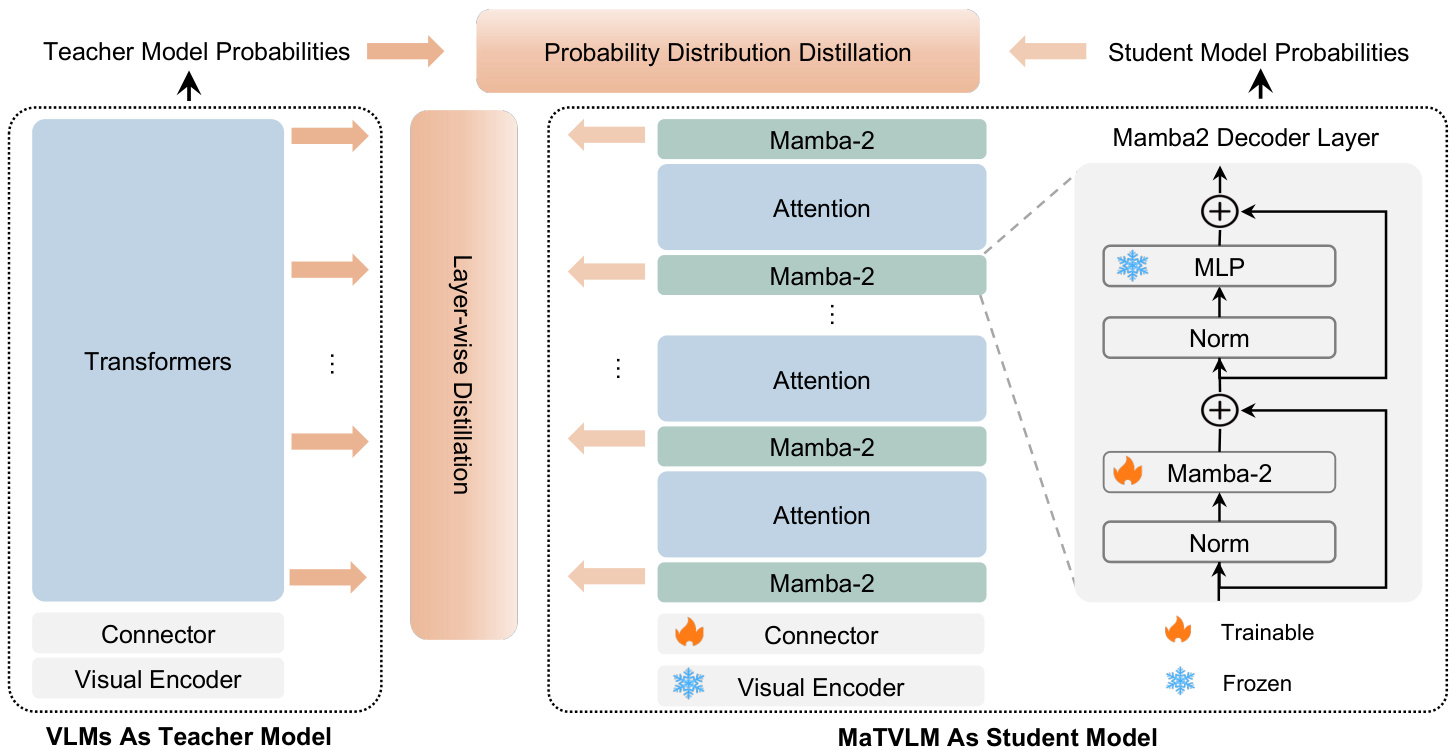

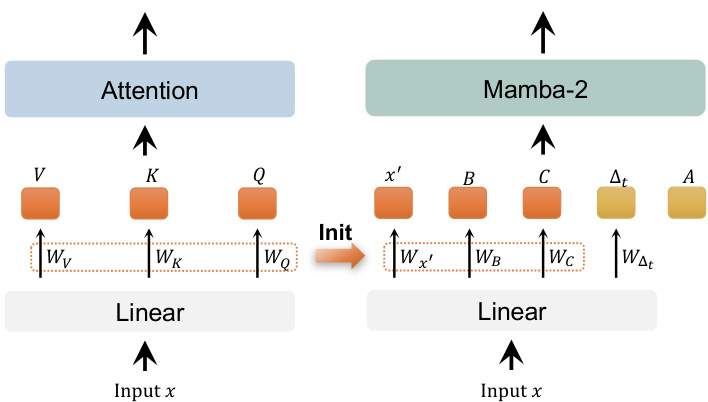

As shown in Fig. 2, the MaTVLM is built upon a pre-trained VLM comprising a vision encoder, a connector, and a language model. The language model originally consists of transformer decoder layers, some of which are replaced with Mamba-2 decoder layers in our model. This replacement swaps only the attention module for Mamba-2 and leaves the other components of the layer unchanged. Given the configured proportion of Mamba-2 decoder layers (e.g., $12.5\%$, $25\%$), we distribute them at equal intervals. Because Mamba-2 shares certain connections with attention, some of its weights can be initialized from the original transformer layers, as detailed below.
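The equal-interval placement can be sketched as follows. This is a minimal illustration, not the authors' code: the function name `mamba_layer_indices` is hypothetical, the depth of 32 layers is used only as an example, and the choice of which layer inside each interval gets replaced (here the last one) is an assumption the text does not specify.

```python
def mamba_layer_indices(num_layers: int, ratio: float) -> list[int]:
    """Return equally spaced decoder-layer indices to convert to Mamba-2.

    Assumption: the decoder is split into equal groups and the last layer
    of each group is replaced; the source only says "equal intervals".
    """
    num_mamba = round(num_layers * ratio)
    if num_mamba <= 0:
        return []
    stride = num_layers / num_mamba
    return [int((i + 1) * stride) - 1 for i in range(num_mamba)]


# Example with a hypothetical 32-layer decoder
for ratio in (0.125, 0.25, 0.5):
    print(ratio, mamba_layer_indices(32, ratio))
```

Any other offset within the interval (first layer, middle layer) would satisfy the same "equal intervals" description.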

Figure 2. The proposed MaTVLM integrates both Mamba-2 and transformer components. The model consists of a vision encoder, a connector, and a language model, the same as the base VLM. The language model is composed of both transformer decoder layers and Mamba-2 decoder layers, where Mamba-2 replaces only the attention in transformer layers, while the other components remain unchanged. The model is trained using a knowledge distillation approach, incorporating probability distribution and layer-wise distillation losses. During the distillation training, only the Mamba-2 layers and the connector are trainable, while the transformer layers remain fixed.

Formally, for a token $x_{t}$ in the input sequence $x = [x_{1}, x_{2}, \ldots, x_{n}]$, attention in a transformer decoder layer is defined as:
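The displayed Eq. 3 is missing from this text. A standard form of causal scaled dot-product attention that is consistent with the symbols defined right after it ($d$, $W_{Q}$, $W_{K}$, $W_{V}$) is given below; the row-vector convention and the names $Q_t$, $K_i$, $V_i$, $y_t$ are assumptions:

```latex
% Assumed form of Eq. 3 (row-vector convention, causal masking)
\begin{align}
Q_t &= x_t W_Q, \qquad K_i = x_i W_K, \qquad V_i = x_i W_V, \\
y_t &= \sum_{i=1}^{t}
       \frac{\exp\!\left(Q_t K_i^{\top} / \sqrt{d}\right)}
            {\sum_{j=1}^{t} \exp\!\left(Q_t K_j^{\top} / \sqrt{d}\right)}\, V_i
\end{align}
```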

where $d$ is the dimension of the input embedding, and $W_{Q}$ , $W_{K}$ , and $W_{V}$ are learnable weights.


When the softmax operation in Eq. 3 is removed, the attention becomes:
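The displayed Eq. 4 is not reproduced here. Under the assumption that Eq. 3 is causal scaled dot-product attention with per-token projections $Q_t = x_t W_Q$, $K_i = x_i W_K$, $V_i = x_i W_V$ (row-vector convention, names assumed), dropping the softmax leaves a plain weighted sum in which $Q_t$ can be factored out:

```latex
% Assumed form of Eq. 4: softmax-free causal attention
\begin{align}
y_t = \frac{1}{\sqrt{d}} \sum_{i=1}^{t} \left(Q_t K_i^{\top}\right) V_i
    = \frac{1}{\sqrt{d}}\, Q_t \sum_{i=1}^{t} K_i^{\top} V_i
\end{align}
```

The second equality is what makes a recurrent form possible: the running sum of $K_i^{\top} V_i$ does not depend on the query.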

The above results can be reformulated in the form of a linear RNN as follows:

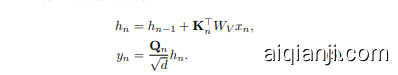

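The equivalence between softmax-free causal attention and a linear RNN can be checked numerically. The sketch below assumes the recurrence $h_t = h_{t-1} + K_t^{\top} V_t$, $y_t = Q_t h_t / \sqrt{d}$ as the form of Eq. 5 (the displayed equation is missing from this text); function names are illustrative.

```python
import numpy as np


def causal_linear_attention(Q, K, V):
    """Softmax-free causal attention: y_t = (1/sqrt(d)) * sum_{i<=t} (Q_t . K_i) V_i."""
    d = Q.shape[1]
    scores = np.tril(Q @ K.T) / np.sqrt(d)  # causal mask, no softmax
    return scores @ V


def linear_rnn(Q, K, V):
    """Same output computed as a recurrence over a (d x d_v) state."""
    n, d = Q.shape
    h = np.zeros((d, V.shape[1]))
    out = np.empty_like(V)
    for t in range(n):
        h = h + np.outer(K[t], V[t])    # h_t = h_{t-1} + K_t^T V_t
        out[t] = Q[t] @ h / np.sqrt(d)  # y_t = Q_t h_t / sqrt(d)
    return out


rng = np.random.default_rng(0)
Q, K, V = (rng.standard_normal((6, 4)) for _ in range(3))
assert np.allclose(causal_linear_attention(Q, K, V), linear_rnn(Q, K, V))
```

The recurrent version carries a fixed-size state instead of attending over all previous tokens, which is the property the hybrid design relies on for long sequences.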

Comparing Eq. 5 with Eq. 2, we can observe the following mapping relationships between them:

Consequently, we initialize the aforementioned weights of the Mamba-2 layers with the corresponding weights from the transformer layers, as shown in Fig. 3, while the remaining weights are initialized randomly. Apart from the Mamba-2 layers, all other weights remain identical to those of the original transformer.
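The initialization can be illustrated with a small sketch that follows the correspondence stated in the Fig. 3 caption ($x \leftarrow V$, $B \leftarrow K$, $C \leftarrow Q$). All dictionary keys are hypothetical and do not reflect the parameter layout of any particular Mamba-2 implementation, which may fuse these projections into a single matrix; the random initializers and shapes for the remaining parameters are placeholders, not the authors' choices.

```python
import numpy as np


def init_mamba2_from_attention(attn: dict, rng: np.random.Generator) -> dict:
    """Copy the V/K/Q projection weights into the x/B/C projections.

    `attn` holds hypothetical keys "W_Q", "W_K", "W_V" of equal shape.
    """
    d_out, d_in = attn["W_V"].shape
    return {
        "W_x": attn["W_V"].copy(),  # x <- V
        "W_B": attn["W_K"].copy(),  # B <- K
        "W_C": attn["W_Q"].copy(),  # C <- Q
        # Remaining parameters (Delta_t, A) start from random values.
        # Shapes and scales here are placeholders for illustration only.
        "W_dt": rng.standard_normal((d_out, d_in)) * 0.02,
        "A": rng.standard_normal(d_out) * 0.02,
    }


rng = np.random.default_rng(0)
attn = {k: rng.standard_normal((8, 8)) for k in ("W_Q", "W_K", "W_V")}
mamba = init_mamba2_from_attention(attn, rng)
assert np.array_equal(mamba["W_B"], attn["W_K"])
```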

3.3. Knowledge Distilling Transformers into Hybrid Models


To further enhance the performance of the MaTVLM, we propose a knowledge distillation method that transfers knowledge from transformer layers to Mamba-2 layers. We use a pre-trained VLM as the teacher model and our MaTVLM as the student model. We will introduce the distillation strategies in the following.


Figure 3. We initialize certain weights of Mamba-2 from attention based on their correspondence. Specifically, the linear weights of $x,B,C$ in Mamba-2 are initialized from the linear weights of $V,K,Q$ in the attention mechanism. The remaining parameters, including $\Delta_{t}$ and $A$ , are initialized randomly.


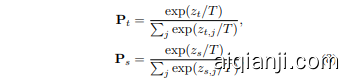

Probability Distribution Distillation. First, we minimize the distance between the output probability distributions of the teacher and student models, which are computed from the logits that each model produces before the softmax function. This approach is widely adopted in knowledge distillation, as aligning the output distributions allows the student model to gain a more nuanced understanding from the teacher model's predictions. To achieve this, we use the Kullback-Leibler (KL) divergence with a temperature scaling factor as the loss function. The temperature factor adjusts the smoothness of the probability distributions, allowing the student model to capture finer details from the softened distribution of the teacher model. The loss function is defined as follows:

The softened probabilities $P_{t}(i)$ and $P_{s}(i)$ are calculated by applying a temperature-scaled softmax function to the logits of the teacher and student models, respectively:


where $T$ is the temperature scaling factor (a higher temperature produces softer distributions), $z_{t}$ is the logit (pre-softmax output) from the teacher model, and $\hat{z}_{s}$ is the corresponding logit from the student model.
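A minimal NumPy sketch of this loss is given below. It assumes the common formulation $\mathrm{KL}(P_t \,\|\, P_s)$ averaged over positions and multiplied by $T^{2}$; the $T^{2}$ factor and the averaging are conventions from the distillation literature, not something this text confirms, and the function names are illustrative.

```python
import numpy as np


def log_softmax_T(logits, T):
    """Temperature-scaled log-softmax over the last axis (numerically stable)."""
    z = logits / T
    z = z - z.max(axis=-1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))


def kd_kl_loss(teacher_logits, student_logits, T=1.0):
    """KL(P_t || P_s) between softened distributions, averaged over positions."""
    log_p_t = log_softmax_T(teacher_logits, T)
    log_p_s = log_softmax_T(student_logits, T)
    kl = np.sum(np.exp(log_p_t) * (log_p_t - log_p_s), axis=-1)
    return (T ** 2) * kl.mean()  # T^2 scaling is an assumed convention


rng = np.random.default_rng(0)
z_t = rng.standard_normal((5, 10))  # 5 positions, vocabulary of 10
z_s = rng.standard_normal((5, 10))
print(kd_kl_loss(z_t, z_s, T=2.0))
```

Identical teacher and student logits give a loss of zero, and any mismatch gives a positive value.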

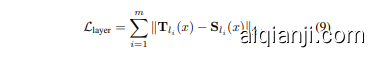

Layer-wise Distillation. Moreover, to ensure that each Mamba layer in the student model aligns with its corresponding layer in the teacher model, we adopt a layer-wise distillation strategy. Specifically, this approach minimizes the L2 norm of the difference between the outputs of the Mamba layers in the student model and the corresponding transformer layers in the teacher model when both are given the same input. These inputs are generated by the previous layer of the teacher model, ensuring consistency and continuity of context. By aligning intermediate feature representations, the student model can more effectively replicate the hierarchical feature extraction process of the teacher model, thereby enhancing its overall performance. Let the positions of the Mamba layers in the student model be $l=[l_{1},l_{2},\ldots,l_{m}]$. The corresponding loss function for this alignment is defined as:

where $\mathbf{T}_{l_{i}}(x)$ and $\mathbf{S}_{l_{i}}(x)$ represent the outputs of the teacher model and the student model at layer $l_{i}$, respectively.
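The layer-wise objective can be sketched as below. The sketch assumes an unsquared L2 norm summed over the Mamba positions (whether the authors square or average it is not stated here) and represents layers as plain callables; `teacher_inputs[l]` stands for the teacher's hidden state entering layer `l`, and all names are hypothetical.

```python
import numpy as np


def layerwise_loss(teacher_layers, student_layers, teacher_inputs, mamba_positions):
    """Sum of L2 distances between teacher and student layer outputs.

    Both layers at position l receive the same input, taken from the
    teacher's forward pass (output of the teacher's previous layer).
    """
    loss = 0.0
    for l in mamba_positions:
        x = teacher_inputs[l]
        diff = teacher_layers[l](x) - student_layers[l](x)
        loss += float(np.linalg.norm(diff))
    return loss


# Toy example: one replaced layer at position 1
x = np.ones((3, 2))
teacher = {1: lambda h: 2.0 * h}
student = {1: lambda h: 1.0 * h}
print(layerwise_loss(teacher, student, {1: x}, [1]))
```

Feeding the student layer the teacher's hidden state, rather than the student's own, keeps each layer's target independent of errors accumulated in earlier student layers.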

Sequence Prediction Loss. Finally, in addition to the distillation losses mentioned above, we also calculate the cross-entropy loss between the output sequence prediction of the student model and the ground truth. This loss guides the student model to learn the correct sequence prediction, which is crucial for the model to perform well on downstream tasks. The loss function is defined as:

where $y$ is the ground truth sequence, and $\hat{y}_{s}$ is the predicted sequence from the student model.

Single-Stage Distillation Training. To fully harness the complementary strengths of the proposed distillation methods, we integrate the probability distribution loss, the layer-wise distillation loss, and the sequence prediction loss into a unified framework for single-stage distillation training. During training, we set their respective weights as follows:

where $\alpha,\beta,\gamma$ are hyperparameters that control the relative importance of each loss component.
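The displayed loss equations of this section are missing from this text. The block below collects forms that are consistent with the surrounding definitions. The loss names $\mathcal{L}_{\text{prob}}$, $\mathcal{L}_{\text{layer}}$, $\mathcal{L}_{\text{ce}}$, the $T^{2}$ factor, and the assignment of $\alpha$, $\beta$, $\gamma$ to the three terms in the order they are introduced are assumptions; the assignment agrees with Sec. 4.1, where $\gamma = 0$ corresponds to omitting the sequence prediction loss.

```latex
\begin{align}
% Softened distributions (teacher logits z_t, student logits \hat{z}_s)
P_t(i) &= \frac{\exp\!\left(z_t(i)/T\right)}{\sum_j \exp\!\left(z_t(j)/T\right)}, \qquad
P_s(i) = \frac{\exp\!\left(\hat{z}_s(i)/T\right)}{\sum_j \exp\!\left(\hat{z}_s(j)/T\right)} \\
% Probability distribution distillation (T^2 scaling assumed)
\mathcal{L}_{\text{prob}} &= T^{2} \sum_i P_t(i)\,\log\frac{P_t(i)}{P_s(i)} \\
% Layer-wise distillation over the Mamba positions l_1, ..., l_m
\mathcal{L}_{\text{layer}} &= \sum_{i=1}^{m}
  \left\lVert \mathbf{T}_{l_i}(x) - \mathbf{S}_{l_i}(x) \right\rVert_2 \\
% Sequence prediction (cross-entropy) loss
\mathcal{L}_{\text{ce}} &= -\sum_i y(i)\,\log \hat{y}_s(i) \\
% Combined single-stage objective
\mathcal{L} &= \alpha\,\mathcal{L}_{\text{prob}}
             + \beta\,\mathcal{L}_{\text{layer}}
             + \gamma\,\mathcal{L}_{\text{ce}}
\end{align}
```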

We will conduct a series of experiments to thoroughly investigate the individual contributions and interactions of these three loss functions. By analyzing their effects in isolation and in combination, we aim to gain deeper insights into how each loss function influences the student model’s learning process, the quality of intermediate representations, and the accuracy of final predictions. This will help us understand the specific role of each loss in enhancing the overall performance of the model and ensure that the chosen loss functions are effectively contributing to the optimization process.


This single-stage framework combines two distillation objectives and one prediction task, so that gradients from all loss components flow through the student model in the same training pass. The unified loss function not only accelerates convergence during training but also ensures that the student model benefits from both hierarchical feature distillation and global prediction alignment. Furthermore, the proposed method is flexible and can be adapted to various neural architectures and tasks by adjusting the weights of the loss components according to task-specific requirements. By integrating these two distillation objectives and the prediction task into a single framework, the student model, MaTVLM, achieves significant performance improvements while maintaining computational efficiency, making this approach highly applicable in real-world scenarios.

4. Experiments


4.1. Implementation Details


We select TinyLLaVA-Phi-2-SigLIP-3.1B [63] as the teacher vision-language model for our experiments. The model's vision encoder is the SigLIP model [60], pre-trained on the WebLi dataset [9] at a resolution of $384\times384$, comprising 400 million parameters. The language model component is Phi-2 [31], featuring 2.7 billion parameters. In the MaTVLM, we replace $12.5\%$, $25\%$ and $50\%$ of the transformer decoder layers in the teacher model with Mamba-2 layers, ensuring an even distribution. As shown in Fig. 2, the only trainable parameters of the student model are those of the Mamba-2 layers and the connector.

During training, the loss function hyperparameters are set to $\alpha=\beta=1.0$ and $\gamma=0$, indicating that the probability distribution loss and layer-wise distillation loss are assigned equal weights, while the sequence prediction loss is omitted. We use a batch size of 64 and optimize the model using the AdamW optimizer with a weight decay of 0.01 and momentum parameters $\beta_{1}=0.9$ and $\beta_{2}=0.95$. The learning rate is set to $2\times10^{-4}$ and follows a warmup-stable-decay schedule, with the warmup and decay phases each spanning $10\%$ of the total training steps. Following the TinyLLaVA configuration, we adopt the ShareGPT4V [8] SFT dataset for training. This dataset replaces 23K image-text pairs related to the image captioning task in the LLaVA-Mix-665K [38] dataset with an equivalent set of high-quality image-text pairs generated by GPT-4V [1], improving data quality.
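The learning-rate schedule described above can be sketched as a simple function of the step index. The peak rate and the two 10% fractions come from the text; the linear shape of the warmup and decay ramps, the decay toward zero, and the function name `wsd_lr` are assumptions, since the text does not specify them.

```python
def wsd_lr(step: int, total_steps: int, peak_lr: float = 2e-4,
           warmup_frac: float = 0.1, decay_frac: float = 0.1) -> float:
    """Warmup-stable-decay learning rate for a 0-indexed step.

    Assumption: linear warmup to peak_lr, constant plateau, then linear
    decay toward zero over the final decay_frac of training.
    """
    warmup_steps = int(total_steps * warmup_frac)
    decay_steps = int(total_steps * decay_frac)
    decay_start = total_steps - decay_steps
    if step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    if step >= decay_start:
        return peak_lr * (total_steps - step) / decay_steps
    return peak_lr


# Learning rate at a few points of a hypothetical 1000-step run
for s in (0, 99, 500, 950, 999):
    print(s, wsd_lr(s, 1000))
```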

4.2. Main Results


Performance Comparison. Tab. 1 compares the performance of VLMs across multiple benchmarks, including MME [21], MMBench [61], TextVQA [43], GQA [30], MM-Vet [58], ScienceQA [40], POPE [36], MMMU [59] and VQAv2 [22]. The table presents representative VLMs with diverse architectures [4, 7, 15, 33–35, 38, 57, 64]. For comparison, we specifically highlight models with parameter scales similar to that of the MaTVLM [14, 37, 54, 63, 65] and those incorporating Mamba [29, 42, 62]. First, compared to the teacher model TinyLLaVA [63], the MaTVLM achieves a 17.6-point improvement on MME. Across all benchmarks, the performance drop remains within 2.6 points, except for MMBench and ScienceQA, where it remains within 4.9 points, demonstrating the model's competitive performance. Built upon transformer-based large language models (LLMs) [3, 13, 46], the MaTVLM performs comparably on most of the benchmarks, while achieving gains on POPE and MMMU, with performance improvements of 0.2 and 1.6 points, respectively. Furthermore, compared to VLMs with similar-scale parameters, our MaTVLM outperforms them across nearly all benchmarks, with notable improvements of 87.7 points on MME and 7.0 points on TextVQA. Finally, compared to VLMs incorporating Mamba, our MaTVLM achieves the best performance on most benchmarks, ranking second on TextVQA with only a 0.2-point difference and trailing Cobra [62] and ML-Mamba [29] on POPE. In summary, these results show that our MaTVLM delivers robust and competitive performance across a diverse set of benchmarks, underscoring its effectiveness and strong potential for practical applications.

Inference Speed Comparison. We evaluate the inference speed of our MaTVLM against the teacher model TinyLLaVA on an NVIDIA GeForce RTX 3090. As shown in Fig. 1 (b), under the same generated token length setting, the MaTVLM achieves up to $3.6\times$ faster inference than TinyLLaVA with FlashAttention-2 [16, 18]. The inference time gap between the two models widens as the generated token length increases. Moreover, a higher hybrid ratio of Mamba-2 layers leads to further improvements in inference speed. This demonstrates the efficiency of our MaTVLM at inference time, making it more suitable for real-world applications.

Memory Usage Comparison. We further compare the GPU memory usage of our MaTVLM with that of TinyLLaVA on an NVIDIA GeForce RTX 3090. As illustrated in Fig. 1 (c), our MaTVLM has a substantially lower memory footprint than TinyLLaVA, with a peak reduction of $27.5\%$ at a token length of 16,384. Notably, TinyLLaVA encounters an out-of-memory error at a token length of 32,768, whereas our MaTVLM continues to run without issue. This reduction in memory usage is attributed to the MaTVLM's hybrid architecture, which balances computational efficiency and performance, making it more suitable for deployment on resource-constrained devices.
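One plausible contributor to this gap is the key-value cache that attention layers keep during generation, which grows linearly with token length, whereas a Mamba-2 layer carries a fixed-size state. The sketch below estimates only that cache under stated assumptions: a 32-layer decoder with hidden size 2560 (Phi-2-like values, assumed here), standard multi-head attention, fp16 storage, and a negligible Mamba-2 state. It is an illustration of the scaling behavior, not a reproduction of the measured $27.5\%$ figure, which also includes weights and activations.

```python
def kv_cache_bytes(num_attn_layers, seq_len, hidden_size, bytes_per_value=2):
    """Bytes needed to cache keys and values for one sequence.

    Each attention layer stores two tensors (K and V) of shape
    [seq_len, hidden_size]; fp16 uses 2 bytes per value.
    """
    return 2 * num_attn_layers * seq_len * hidden_size * bytes_per_value


# Assumed, Phi-2-like dimensions (not taken from the paper).
NUM_LAYERS = 32
HIDDEN_SIZE = 2560
SEQ_LEN = 16_384

# All layers are attention layers (teacher-like configuration).
full_cache = kv_cache_bytes(NUM_LAYERS, SEQ_LEN, HIDDEN_SIZE)

# 25% of the layers replaced by Mamba-2, so only 24 layers keep a KV cache.
hybrid_cache = kv_cache_bytes(NUM_LAYERS * 3 // 4, SEQ_LEN, HIDDEN_SIZE)

print(f"full attention KV cache: {full_cache / 2**30:.2f} GiB")
print(f"25% hybrid KV cache:     {hybrid_cache / 2**30:.2f} GiB")
print(f"cache saved:             {1 - hybrid_cache / full_cache:.0%}")
```

Under these assumptions the cache shrinks in proportion to the number of attention layers removed, and the absolute saving grows with token length, which is consistent with the widening gap in Fig. 1 (c).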

| Method | LLM | MME-P | MMB | VQA-T | GQA | MM-Vet | SQA-I | POPE | MMMU | VQAv2 |
|---|---|---|---|---|---|---|---|---|---|---|
| BLIP-2 [35] | Vicuna-13B [13] | 1293.8 | - | 42.5 | 41.0 | 22.4 | 61 | 85.3 | - | 41 |
| MiniGPT-4 [64] | Vicuna-7B [13] | 581.7 | 23.0 | - | 32.2 | - | - | - | - | - |
| InstructBLIP [15] | Vicuna-7B [13] | - | 36.0 | 50.1 | 49.2 | 26.2 | 60.5 | - | - | - |
| InstructBLIP [15] | Vicuna-13B [13] | 1212.8 | - | 50.7 | 49.5 | 25.6 | 63.1 | 78.9 | - | - |
| Shikra [7] | Vicuna-13B [13] | - | 58.8 | - | - | - | - | - | - | 77.4 |
| Otter [34] | LLaMA-7B [46] | 1292.3 | 48.3 | - | - | 24.6 | - | - | 32.2 | - |
| mPLUG-Owl [57] | LLaMA-7B [46] | 967.3 | 49.4 | - | - | - | - | - | - | - |
| IDEFICS-9B [33] | LLaMA-7B [46] | - | 48.2 | 25.9 | 38.4 | - | - | - | - | 50.9 |
| Qwen-VL [4] | Qwen-7B [3] | - | 38.2 | 63.8 | 59.3 | - | 67.1 | - | - | 78.8 |
| Qwen-VL-Chat [4] | Qwen-7B [3] | 1487.5 | 60.6 | 61.5 | 57.5 | - | 68.2 | - | 35.9 | 78.2 |
| LLaVA-1.5 [38] | Vicuna-7B [13] | 1510.7 | 64.3 | 58.2 | 62.0 | 30.5 | 66.8 | 85.9 | - | 78.5 |
| LLaVA-1.5 [38] | Vicuna-13B [13] | 1531.3 | 67.7 | 61.3 | 63.3 | 35.4 | 71.6 | 85.9 | 36.4 | 80 |
| Teacher-TinyLLaVA [63] | Phi2-2.7B [31] | 1466.4 | 66.1 | 60.3 | 62.1 | 37.5 | 73.0 | 87.2 | 38.4 | 80.1 |
| Similar-scale LLMs and Mamba-based models | | | | | | | | | | |
| LLaVA-Phi [65] | Phi2-2.7B [31] | 1335.1 | 59.8 | 48.6 | - | 28.9 | 68.4 | 85.0 | - | 71.4 |
| MoE-LLaVA-2.7Bx4 [37] | Phi2-2.7B [31] | 1396.4 | 65.5 | 50.2 | 61.1 | 31.1 | 68.7 | 85.0 | - | 77.1 |
| MobileVLM 3B [14] | MobileLLaMA 2.7B [14] | 1288.9 | 59.6 | 47.5 | 59.0 | - | 61.0 | 84.9 | - | - |
| LLaVADI [54] | MobileLLaMA 2.7B [14] | 1376.1 | 62.5 | 50.7 | 61.4 | - | 64.1 | 86.7 | - | - |
| Cobra [62] | Mamba 2.8B [23] | - | - | 57.9 | - | - | - | 88.2 | - | 76.9 |
| VL-Mamba [42] | Mamba 2.8B [23] | 1369.6 | 57.0 | 48.9 | 56.2 | 32.6 | 65.4 | 84.4 | - | 76.6 |
| ML-Mamba [29] | Mamba-2 2.7B [17] | - | - | 52.2 | 60.7 | - | - | 88.3 | - | 75.3 |
| MaTVLM Hybrid-Mamba-12.5% | Phi2-2.7B Hybrid Mamba-2 | 1464.9 | 58.7 | 57.5 | 61.2 | 35.4 | 67.2 | 85.8 | 38.0 | 78.9 |
| MaTVLM Hybrid-Mamba-25% | Phi2-2.7B Hybrid Mamba-2 | 1484.1 | 61.2 | 57.7 | 61.5 | 35.4 | 68.0 | 86.0 | 37.3 | 79.0 |

Table 1. Performance comparison of VLMs with our MaTVLM across multiple benchmarks. We use a gray background to indicate larger LLM-based VLMs and a blue background to highlight the baseline model, TinyLLaVA [63]. Additionally, we emphasize models with similar parameter scales to our MaTVLM, as well as VLMs incorporating Mamba. The best performance for each benchmark column is marked in bold, while the second-best is underlined.

Table 2. Performance comparison of the MaTVLM under different Mamba-2 hybridization ratios. The best performance for each benchmark column is marked in bold, while the second-best is underlined.

| Method | MME-P | MMB | VQA-T | GQA | MM-Vet | SQA-I | POPE | MMMU | VQAv2 | AVG |
|---|---|---|---|---|---|---|---|---|---|---|
| MaTVLM Hybrid-Mamba-12.5% | 1464.9 | 58.7 | 57.5 | 61.2 | 35.4 | 67.2 | 85.8 | 38.0 | 78.9 | 61.8 |
| MaTVLM Hybrid-Mamba-25% | 1484.1 | 61.2 | 57.7 | 61.5 | 35.4 | 68.0 | 86.0 | 37.3 | 79.0 | 62.3 |
| MaTVLM Hybrid-Mamba-50% | 1427.8 | 59.4 | 54.3 | 60.8 | 30.5 | 64.9 | 85.8 | 34.8 | 78.6 | 60.1 |

4.3. Ablation Study

Mamba-2 Hybridization Ratio. We examine the impact of the Mamba-2 hybridization ratio by varying the proportion of Mamba-2 layers ($12.5\%$, $25\%$, and $50\%$) and evaluating performance across the nine multi-modal benchmarks. As shown in Tab. 2, the $25\%$ ratio achieves the highest average score, surpassing the $50\%$ ratio by 2.2 points, which indicates that too many Mamba-2 layers may weaken global dependency modeling. The $12.5\%$ ratio achieves the best score on MMMU and ties for the best on MM-Vet, but falls slightly behind overall, scoring 0.5 points lower on average than the $25\%$ ratio. This finding highlights the importance of balancing Mamba-2 and transformer layers to optimize performance across diverse tasks: a higher Mamba-2 ratio ($50\%$) may hinder the model's ability to capture long-range dependencies, while a lower ratio ($12.5\%$) retains the advantages of the transformer but may not fully leverage the benefits of Mamba-2.
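The AVG column is not defined explicitly in this section. As a hedged sanity check, the sketch below reproduces the reported averages for the three ratios under one assumption that is ours, not the paper's: MME-P is divided by 20 (mapping its roughly 0 to 2000 range onto 0 to 100) before taking the plain mean over all nine benchmark columns. The names `TABLE_2` and `average_score` are hypothetical helpers for illustration.

```python
# Rows copied from Tab. 2, in column order:
# MME-P, MMB, TextVQA, GQA, MM-Vet, SQA-I, POPE, MMMU, VQAv2
TABLE_2 = {
    "Hybrid-Mamba-12.5%": [1464.9, 58.7, 57.5, 61.2, 35.4, 67.2, 85.8, 38.0, 78.9],
    "Hybrid-Mamba-25%": [1484.1, 61.2, 57.7, 61.5, 35.4, 68.0, 86.0, 37.3, 79.0],
    "Hybrid-Mamba-50%": [1427.8, 59.4, 54.3, 60.8, 30.5, 64.9, 85.8, 34.8, 78.6],
}


def average_score(row, mme_scale=20.0):
    """Mean over all benchmarks after rescaling MME-P (assumed normalization)."""
    mme_p, *rest = row
    scores = [mme_p / mme_scale, *rest]
    return sum(scores) / len(scores)


averages = {name: round(average_score(row), 1) for name, row in TABLE_2.items()}
for name, avg in averages.items():
    print(f"{name}: {avg}")
```

With this normalization the computed values match the AVG column of Tab. 2, and the 2.2-point and 0.5-point gaps quoted above follow directly from them.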

Table 3. Effect of different Mamba-2 layer positions. The best performance for each benchmark column is marked in bold, while the second-best is underlined.

| Mamba-2 layer position | MME-P | MMB | VQA-T | GQA | MM-Vet | SQA-I | POPE | MMMU | VQAv2 | AVG |
|---|---|---|---|---|---|---|---|---|---|---|
| Evenly distributed | 1484.1 | 61.2 | 57.7 | 61.5 | 35.4 | 68.0 | 86.0 | 37.3 | 79.0 | 62.3 |
| All at the beginning | 1406.5 | 60.8 | 56.1 | 61.2 | 32.9 | 64.3 | 86.1 | 33.0 | 78.8 | 60.4 |
| All in the middle | 1392.9 | 54.0 | 53.0 | 60.0 | 32.4 | 63.3 | 85.0 | 34.9 | 77.9 | 58.9 |

Table 4. Comparison of different distillation loss functions. The best performance for each benchmark column is marked in bold, while the second-best is underlined.

| Distillation loss | MME-P | MMB | VQA-T | GQA | MM-Vet | SQA-I | POPE | MMMU | VQAv2 | AVG |
|---|---|---|---|---|---|---|---|---|---|---|
| $L_{ce}$ | 1284.0 | 56.4 | 45.3 | 57.5 | 29.0 | 59.6 | 86.0 | 28.0 | 74.8 | 55.6 |
| $L_{layer}$ | 1430.7 | 61.9 | 55.7 | 60.7 | 33.2 | 66.9 | 84.7 | 33.0 | 78.8 | 60.7 |
| $L_{prob}$ | 1413.4 | 61.5 | 56.8 | 61.0 | 33.3 | 67.0 | 85.7 | 37.7 | 78.8 | 61.4 |
| $L_{prob} + L_{layer}$ | 1484.1 | 61.2 | 57.7 | 61.5 | 35.4 | 68.0 | 86.0 | 37.3 | 79.0 | 62.3 |
| $L_{prob} + L_{layer} + L_{ce}$ | 1449.8 | 60.9 | 57.0 | 61.0 | 32.6 | 67.6 | 85.8 | 35.4 | 79.1 | 61.3 |

Mamba-2 Hybridization Layer Position. We further investigate the impact of Mamba-2 layer placement on performance. Specifically, we replace the transformer decoder layers with Mamba-2 layers in four configurations: all at the beginning, all in the middle, all at the end, and evenly distributed. Notably, the all-at-the-end configuration fails to enable effective distillation and produces incoherent responses, so it is omitted from Tab. 3. As shown in Tab. 3, the evenly distributed configuration achieves the best score on every benchmark except POPE, where the all-at-the-beginning configuration is 0.1 points higher, and it surpasses the all-at-the-beginning and all-in-the-middle configurations by 1.9 and 3.4 points on the average score, respectively. These results highlight the importance of evenly integrating Mamba-2 layers throughout the model to optimize performance.
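To make the four placements concrete, the following minimal sketch computes which decoder-layer indices would be replaced for a given depth and hybridization ratio. The function name `mamba_layer_indices`, the 32-layer depth, and the choice to start the evenly distributed pattern at layer 0 are all assumptions for illustration; the exact offsets used in the experiments are not specified in this section.

```python
def mamba_layer_indices(num_layers, ratio, placement):
    """Return the (0-based) decoder layers to replace with Mamba-2 layers.

    Hypothetical helper: `placement` is one of "beginning", "middle",
    "end", or "even".
    """
    k = round(num_layers * ratio)  # number of layers to replace
    if not 0 < k <= num_layers:
        raise ValueError("ratio must select between 1 and num_layers layers")

    if placement == "beginning":
        return list(range(k))
    if placement == "end":
        return list(range(num_layers - k, num_layers))
    if placement == "middle":
        start = (num_layers - k) // 2
        return list(range(start, start + k))
    if placement == "even":
        # Spread the k replaced layers uniformly, starting at layer 0.
        return [i * num_layers // k for i in range(k)]
    raise ValueError(f"unknown placement: {placement!r}")


# Example: an assumed 32-layer decoder with 25% of layers replaced.
for placement in ("even", "beginning", "middle", "end"):
    print(placement, mamba_layer_indices(32, 0.25, placement))
```

In the evenly distributed case every Mamba-2 layer is followed by several attention layers, whereas the other placements stack all Mamba-2 layers contiguously.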

Distillation Loss. As mentioned in Sec. 3.2, we employ three distillation losses: the probability distribution loss $L_{prob}$, the layer-wise distillation loss $L_{layer}$, and the sequence prediction loss $L_{ce}$. To investigate the impact of each loss on the performance of our MaTVLM, we conduct an ablation study, as shown in Tab. 4. We first use the three losses individually. The results show that $L_{prob}$ significantly improves performance over $L_{ce}$, with a 5.8-point increase in average score. Combining $L_{prob}$ with $L_{layer}$ yields the highest average score, 0.9 points higher than using $L_{prob}$ alone. This indicates that both probability distribution alignment and layer-wise feature matching contribute positively to knowledge transfer. However, when $L_{ce}$ is added alongside $L_{prob}$ and $L_{layer}$, we observe a 1.0-point drop, suggesting that direct supervision from $L_{ce}$ may interfere with the distillation process.
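The combinations ablated in Tab. 4 can be sketched as a weighted sum of the three terms. The exact definitions are given in Sec. 3.2 and are not repeated here, so the forms below are assumptions chosen as common defaults: a KL divergence between teacher and student token distributions for $L_{prob}$, a mean squared error between layer outputs for $L_{layer}$, and a standard cross-entropy for $L_{ce}$. All function names and the weights are hypothetical, and plain Python lists stand in for tensors.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def prob_loss(teacher_logits, student_logits):
    """Assumed form of L_prob: KL(teacher || student) over the vocabulary."""
    p, q = softmax(teacher_logits), softmax(student_logits)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def layer_loss(teacher_hidden, student_hidden):
    """Assumed form of L_layer: mean squared error between layer outputs."""
    diffs = [(t - s) ** 2 for t, s in zip(teacher_hidden, student_hidden)]
    return sum(diffs) / len(diffs)


def ce_loss(student_logits, target_index):
    """L_ce: negative log-likelihood of the ground-truth token."""
    return -math.log(softmax(student_logits)[target_index])


def total_loss(teacher_logits, student_logits, teacher_hidden, student_hidden,
               target_index, w_prob=1.0, w_layer=1.0, w_ce=0.0):
    """Weighted sum; w_ce=0 corresponds to the L_prob + L_layer setting."""
    return (w_prob * prob_loss(teacher_logits, student_logits)
            + w_layer * layer_loss(teacher_hidden, student_hidden)
            + w_ce * ce_loss(student_logits, target_index))


# Toy example with a 3-token vocabulary and a 2-dimensional hidden state.
loss = total_loss([2.0, 0.5, -1.0], [1.5, 0.7, -0.5],
                  [0.2, -0.1], [0.1, 0.0], target_index=0)
print(f"L_prob + L_layer = {loss:.4f}")
```

Setting `w_ce` to a positive value gives the three-term variant from the last row of Tab. 4, and zeroing the other weights recovers the single-loss rows.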

5. Limitations

Despite its advantages, MaTVLM has several limitations. While initializing Mamba-2 with pre-trained attention weights aids convergence, this initialization may not fully exploit the implicit state representations of Mamba-2. This could be improved through tailored initialization strategies, such as gradient matching or an additional pre-training phase. Additionally, due to the limited GPU resources available in this study, we have not explored the performance of our model at larger scales. With access to more computational resources, future work can systematically investigate optimal Mamba-2 integration ratios and conduct hybrid experiments on larger VLMs to evaluate scalability and performance. Addressing these challenges will further improve the efficiency and applicability of hybrid architectures in large-scale VLMs.

6. Conclusion

We propose a hybrid model, MaTVLM, that enhances a pretrained vision-language model (VLM) by replacing a portion of its transformer decoder layers with Mamba-2 layers. This design leverages the efficiency of RNN-inspired architectures while preserving the expressiveness of transformers. By initializing Mamba-2 layers with attention weights and employing a single-stage distillation process, we improve both convergence speed and overall performance. Notably, our model is trained using only four NVIDIA GeForce RTX 3090 GPUs, demonstrating its computational efficiency. Extensive evaluations show that our approach not only achieves competitive accuracy but also significantly enhances inference speed and reduces GPU memory consumption. These results highlight the potential of hybrid architectures to strike a balance between efficiency and expressiveness. Furthermore, the efficiency of our model in both fine-tuning and inference makes it a cost-effective and scalable solution for deploying large-scale VLMs. This approach enables more practical, resource-efficient deployment of VLMs in real-world applications, reducing computational costs while maintaining high performance.
