1 AgiBot World Colosseo: A Large-scale Manipulation Platform for Scalable and Intelligent Embodied Systems

1 AgiBot World Colosseo: 一个用于可扩展和智能具身系统的大规模操作平台

Team AgiBot-World* Project website: https : / /agibot-world.com/ Code: https://github.com/Open Drive Lab/AgiBot-World

Team AgiBot-World* 项目网站: https://agibot-world.com/ 代码: https://github.com/OpenDriveLab/AgiBot-World

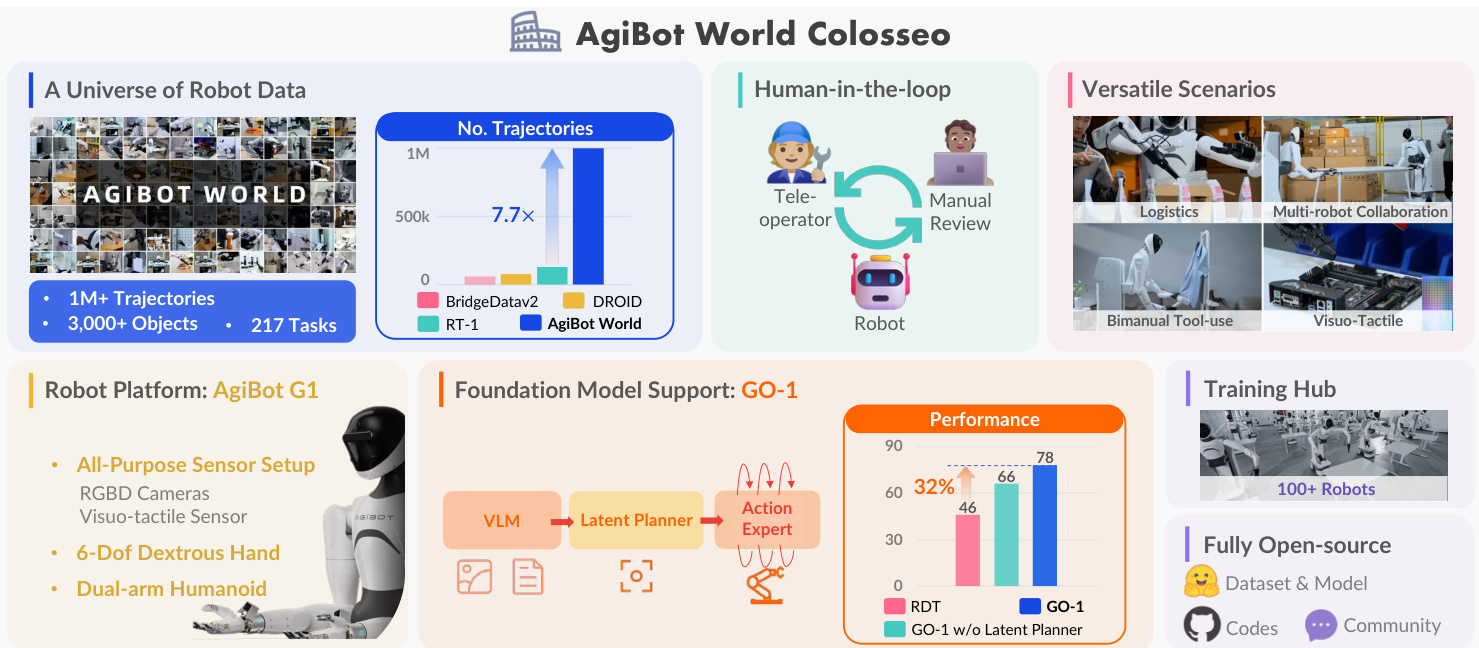

Fig. 1: Introducing AgiBot World Colosseo, an open-sourced large-scale manipulation platform comprising data, models, benchmarks and ecosystem. AgiBot World stands out for its unparalleled scale and diversity compared to prior counterparts. A suite of 100 dual-arm humanoid robots is deployed. We further propose a generalist policy (GO-1) with the latent action planner. It is trained across diverse data corpus with a scalable performance of $32%$ gain compared to prior arts.

图 1: 介绍 AgiBot World Colosseo,一个开源的、包含数据、模型、基准测试和生态系统的大规模操作平台。与之前的同类平台相比,AgiBot World 以其无与伦比的规模和多样性脱颖而出。我们部署了 100 台双臂人形机器人。我们进一步提出了一种通用策略 (GO-1),并配备了潜在动作规划器。该策略在多样化的数据语料库上进行训练,与现有技术相比,性能提升了 $32%$。

Abstract We explore how scalable robot data can address real-world challenges for generalized robotic manipulation. Introducing AgiBot World, a large-scale platform comprising over 1 million trajectories across 217 tasks in five deployment scenarios, we achieve an order-of-magnitude increase in data scale compared to existing datasets.Accelerated by a standardized collection pipeline with human-in-the-loop verification, AgiBot World guarantees high-quality and diverse data distribution. It is extensible from grippers to dexterous hands and visuo-tactile sensors for fine-grained skill acquisition. Building on top of data, we introduce Genie Operator-1 (GO-1), a novel generalist policy that leverages latent action representations to maximize data utilization, demonstrating predictable performance scaling with increased data volume. Policies pre-trained on our dataset achieve an average performance improvement of $30%$ over those trained on Open X-Embodiment, both in in-domain and out-of-distribution scenarios. GO-1 exhibits exceptional capability in real-world dexterous and long-horizon tasks, achieving over $60%$ success rate on complex tasks and outperforming prior RDT approach by $32%$ . By open-sourcing the dataset, tools, and models, we aim to democratize access to large-scale, high-quality robot data, advancing the pursuit of scalable and general-purpose intelligence.

摘要 我们探讨了可扩展的机器人数据如何应对通用机器人操作中的现实世界挑战。通过引入AgiBot World,一个包含五个部署场景中217个任务的超过100万条轨迹的大规模平台,我们实现了数据规模的数量级增长,相较于现有数据集。通过标准化收集流程和人在环验证的加速,AgiBot World保证了高质量和多样化的数据分布。它可以从夹爪扩展到灵巧手和视觉触觉传感器,以获取细粒度技能。基于数据,我们引入了Genie Operator-1 (GO-1),一种新颖的通用策略,利用潜在动作表示最大化数据利用率,展示了随着数据量增加的可预测性能扩展。在我们的数据集上预训练的策略在域内和域外场景中,相比在Open X-Embodiment上训练的策略,平均性能提升了30%。GO-1在现实世界的灵巧和长期任务中表现出色,在复杂任务上成功率超过60%,比之前的RDT方法高出32%。通过开源数据集、工具和模型,我们旨在普及大规模、高质量机器人数据的访问,推动可扩展和通用智能的追求。

1. INTRODUCTION

1. 引言

Manipulation is a cornerstone task in robotics, enabling the agent to interact with and adapt to the physical world. While significant progress has been made in general-purpose foundational models for natural language processing [1] and computer vision [2], robotics lags behind due to the difficulty of (high-quality) data collection. In the controlled lab setting, simple tasks such as pick-and-place have been well studied [3], [4]. Yet for the open-set real-world setting, tasks spanning from fine-grained object interaction, mobile manipulation to collaborative tasks, remains a formidable challenge [5]. These tasks require not only physical dexterity but also the ability to generalize across diverse environment and scenarios, a merit beyond the reach of current robotic systems. The widely accepted reason is the lack of highquality data——unlike images and text, which are abundant and standardized, robotic datasets suffer from fragmented clips due to heterogeneous hardware and un standardized collection procedure, leading to low-quality and inconsistent outcome. In this work we ask, how could we resolve the real-world complexity effectively by scaling up real-world robot data?

操控是机器人技术中的一项核心任务,使智能体能够与物理世界互动并适应物理世界。尽管在自然语言处理 [1] 和计算机视觉 [2] 的通用基础模型方面取得了显著进展,但由于(高质量)数据收集的困难,机器人技术仍然落后。在受控的实验室环境中,诸如拾取和放置等简单任务已经得到了充分研究 [3], [4]。然而,在开放的现实世界环境中,从细粒度物体交互、移动操控到协作任务,仍然是一个巨大的挑战 [5]。这些任务不仅需要物理上的灵巧性,还需要在不同环境和场景中泛化的能力,这是当前机器人系统无法达到的优势。广泛接受的原因是缺乏高质量数据——与图像和文本不同,图像和文本丰富且标准化,而机器人数据集由于硬件异构和收集程序不标准化而呈现出碎片化的片段,导致数据质量低且不一致。在这项工作中,我们提出,如何通过扩展现实世界机器人数据来有效解决现实世界的复杂性?

Recent efforts, such as Open X-Embodiment (OXE) [6], have addressed by aggregating and standardizing existing datasets. Despite advancements on large-scale crossembodiment learning, the resulting policy is constrained within naive, short-horizon tasks and can weakly generalize to out-of-domain scenarios [4]. DROID [7] collected expert data through crowd-sourcing from diverse real-life scenes. The absence of data quality assurance (with human feedback) and the reliance on a constrained hardware setup (i.e., featuring fixed, single-arm robots), limit its real-world applicability and broader effectiveness. More recently, Lin et al. [8] explored scaling laws governing general iz ability across intracategory objects and environments, albeit limited to a few simple, single-step tasks. These efforts represent a notable advancement toward developing generalist policies, moving beyond the traditional focus on single-task learning within narrow domains [9], [3]. Nevertheless, existing robot learning datasets remain constrained by their reliance on short-horizon tasks in highly controlled laboratory environments, failing to adequately capture the complexity and diversity inherent in real-world manipulation tasks. To achieve generalpurpose robotic intelligence, it is essential to develop datasets that scale in size and diversity while capturing real-world variability, supported by general-purpose humanoid robots for robust skill acquisition, a standardized data collection pipeline with assured quality, and carefully curated tasks reflecting real-world challenges.

最近的努力,如 Open X-Embodiment (OXE) [6],通过聚合和标准化现有数据集来解决这一问题。尽管在大规模跨具身学习方面取得了进展,但生成的策略仍局限于简单、短期的任务,并且在域外场景中的泛化能力较弱 [4]。DROID [7] 通过众包从多样化的现实场景中收集了专家数据。缺乏数据质量保证(通过人类反馈)以及对受限硬件设置(即固定的单臂机器人)的依赖,限制了其在现实世界中的适用性和广泛有效性。最近,Lin 等人 [8] 探索了跨类别对象和环境的泛化能力的扩展规律,尽管仅限于一些简单的单步任务。这些努力代表了在开发通用策略方面的显著进展,超越了传统上对狭窄领域内单任务学习的关注 [9], [3]。然而,现有的机器人学习数据集仍然受到在高度控制的实验室环境中依赖短期任务的限制,未能充分捕捉现实世界操作任务中固有的复杂性和多样性。为了实现通用机器人智能,必须开发在规模和多样性上扩展的数据集,同时捕捉现实世界的变异性,并得到通用人形机器人的支持以实现稳健的技能获取,标准化的数据收集管道以确保质量,以及精心策划的任务以反映现实世界的挑战。

As depicted in Fig. 1, we introduce AgiBot World Colosseo, a full-stack large-scale robot learning platform curated for advancing bimanual manipulation in scalable and intelligent embodied systems. A full-scale 4000- square-meter facility is constructed to represent five major domains-domestic, retail, industrial, restaurant, and office environment—all dedicated to high-fidelity data collection in authentic everyday scenarios. With over 1 million trajectories collected from 100 real robots, AgiBot World offers unprecedent ed diversity and complexity. It spans over 100 realworld scenarios, addressing challenging tasks such as finegrained manipulation, tool usage, and multi-robot synergistic collaboration. Unlike prior datasets, AgiBot World dataset collection is carried out with a fully standardized pipeline, ensuring high data quality and s cal ability, while incorporating human-in-the-loop verification to guarantee reliability. Our hardware setup includes mobile base humanoid robots with whole-body control, dexterous hands, and visuo-tactile sensors, enabling rich, multimodal data collection. Each episode is meticulously designed, featuring multiple camera views, depth information, camera calibration, and language annotations for both the overall task and each individual sub-steps. This well-rounded hardware setup, combined with various long-horizon, real-world tasks, opens new avenues for developing next-generation generalist policies and fosters diverse future research in robotics.

如图 1 所示,我们介绍了 AgiBot World Colosseo,这是一个全栈大规模机器人学习平台,旨在推进可扩展和智能的具身系统中的双手操作。我们建造了一个 4000 平方米的设施,代表五个主要领域——家庭、零售、工业、餐厅和办公环境——所有这些都致力于在真实的日常场景中进行高保真数据收集。AgiBot World 从 100 个真实机器人中收集了超过 100 万条轨迹,提供了前所未有的多样性和复杂性。它涵盖了 100 多个现实世界场景,解决了诸如精细操作、工具使用和多机器人协同合作等具有挑战性的任务。与之前的数据集不同,AgiBot World 数据集收集是通过完全标准化的流程进行的,确保了高质量的数据和可扩展性,同时结合了人在环验证以保证可靠性。我们的硬件设置包括具有全身控制的移动基座人形机器人、灵巧的手和视觉触觉传感器,能够进行丰富的多模态数据收集。每个情节都经过精心设计,具有多个摄像机视角、深度信息、摄像机校准以及整体任务和每个子步骤的语言注释。这种全面的硬件设置,结合各种长期、现实世界的任务,为开发下一代通用策略开辟了新途径,并促进了机器人领域的多样化未来研究。

Our experimental results highlight the transformative potential of the AgiBot World dataset. Policies pre-trained on our dataset achieve an average success rate improvement Oof $30%$ compared to those trained on the prior large-scale robot dataset OXE [6]. Notably, even when utilizing only a fraction of our dataset—equivalent to $1/10$ of thedata volume in hours compared to OXE—the general iz ability of pretrained policies is elevated by $18%$ . These findings underscore the dataset's efficacy in bridging the gap between controlled laboratory environments and real-world robotic applications. Following our dataset, to address the limitations of previous robot foundation models that heavily rely on indomain robot datasets, we present Genie Operator-1 (GO1), a novel generalist policy that utilizes latent action represent at ions to enable learning from heterogeneous data and efficiently bridges general-purpose vision-language models (VLMs) with robotic sequential decision-making. Through unified pre-training on web-scale data, spanning human videos to our high-quality robot dataset, GO-1 achieves superior generalization and dexterity, outperforming prior generalist policies such as RDT [10] and our variant without latent action planner. Moreover, we demonstrate that GO1's performance exhibits robust s cal ability with increasing dataset size, underscoring its potential for sustained advancement as larger datasets become available.

我们的实验结果突显了AgiBot World数据集的变革潜力。与在先前的大规模机器人数据集OXE [6]上训练的模型相比,在我们的数据集上预训练的模型平均成功率提高了30%。值得注意的是,即使仅使用我们数据集的一小部分——相当于OXE数据量的1/10——预训练模型的泛化能力也提升了18%。这些发现强调了该数据集在弥合受控实验室环境与现实世界机器人应用之间差距方面的有效性。在我们的数据集之后,为了解决以往机器人基础模型严重依赖领域内机器人数据集的局限性,我们提出了Genie Operator-1 (GO1),这是一种新颖的通用策略,利用潜在动作表示来从异构数据中学习,并有效地将通用视觉语言模型 (VLMs) 与机器人序列决策相结合。通过对网络规模数据的统一预训练,涵盖从人类视频到我们高质量机器人数据集的范围,GO-1实现了卓越的泛化能力和灵活性,超越了之前的通用策略,如RDT [10]以及我们不带潜在动作规划器的变体。此外,我们展示了GO1的性能随着数据集规模的增加而表现出强大的扩展能力,突显了其在更大数据集可用时持续进步的潜力。

Beyond its immediate impact, AgiBot World lays a strong foundation for future research in robotic manipulation. By open-sourcing the dataset, toolchain, and pre-trained models, we aim to foster community-wide innovation, enabling researchers to explore more authentic and diverse applications from household assistant to industrial automation. AgiBot World is more than yet another dataset; it is a step toward scalable, general-purpose robotic intelligence, empowering robots to tackle the complexities of the real world.

除了其直接影响外,AgiBot World 为未来的机器人操作研究奠定了坚实的基础。通过开源数据集、工具链和预训练模型,我们旨在促进社区范围内的创新,使研究人员能够探索从家庭助理到工业自动化的更真实和多样化的应用。AgiBot World 不仅仅是一个数据集;它是迈向可扩展、通用机器人智能的一步,赋予机器人应对现实世界复杂性的能力。

Contribution. 1) We construct AgiBot World dataset, a multifarious robot learning dataset accompanied by opensource tools to advance research on policy learning at scale. As a pioneering initiative, AgiBot World employs an inclusive optimized pipeline, from scene configuration, task design, data collection, to human-in-the-loop verification, which ensures unparalleled data quality. 2) We propose GO1, a robot foundation policy using latent action representations to unlock web-scale pre-training on heterogeneous data. Empowered by AgiBot World dataset, it outperforms prior generalist policies in generalization and dexterity, with performance scaling predictably with dataset size.

贡献。1) 我们构建了 AgiBot World 数据集,这是一个多样化的机器人学习数据集,并附带开源工具,以推动大规模策略学习的研究。作为一项开创性举措,AgiBot World 采用了包容性优化的流程,从场景配置、任务设计、数据收集到人机交互验证,确保了无与伦比的数据质量。2) 我们提出了 GO1,这是一种使用潜在动作表示的机器人基础策略,能够在异构数据上进行网络规模的预训练。借助 AgiBot World 数据集,它在泛化性和灵活性方面优于之前的通用策略,并且性能随数据集大小的增加而可预测地提升。

Limitation. All evaluations are conducted in real-world scenarios. We are currently developing the simulation environment, aligning with the real-world setup and aiming to reflect real-world policy deployment outcome. It would thereby facilitate fast and reproducible evaluation.

限制。所有评估均在现实场景中进行。我们目前正在开发模拟环境,与现实设置保持一致,旨在反映现实世界的策略部署结果。这将有助于快速且可重复的评估。

11. RELATED WORK

11. 相关工作

Data scaling in robotics. Robot learning datasets from automated scripts or human tele operation have enabled policy learning, with early efforts like RoboTurk [19] and BridgeData [12] offering small-scale datasets with 2.1k and $7.2\mathrm{k}$ trajectories, respectively. Larger datasets, such as RT1 [14] ( $130\mathrm{k\Omega}$ trajectories), expand scopes yet remain limited to few environments and skills. Open X-Embodiment [6] aggregates various datasets into a unified format, growing to more than 2.4 million trajectories, as a consequence it suffers from significant variability in embodiment s, observation perspectives, and inconsistent data quality, limiting its overall effectiveness. More recently, DROID [7] moves towards scaling up scenes for greater diversity by crowdsourcing demonstrations yet falls short in data scale and quality control. Prior datasets above generally face limitations in data scale, task practicality, and scenario naturalness, compounded by inadequate quality assurance and hardware restrictions, which impedes generalist policy training. As shown in Tab. I, our dataset addresses these gap adequately. We build a data collection facility spanning five scenarios to reconstruct real-world diversity and authenticity. With over 1 million trajectories gathered by skilled tele operators through rigorous verification protocols, AgiBot World utilizes humanoid robots equipped with visuo-tactile sensors and dexterous hands to enable multimodal demonstrations, setting it apart from previous efforts. Unlike Pumacay et al. [20], which serves as a simulation benchmark for evaluating generalization, what we propose is a full-stack platform with data, models, benchmarks, and ecosystem.

机器人领域的数据扩展。通过自动化脚本或人类远程操作生成的机器人学习数据集已经支持了策略学习,早期的努力如RoboTurk [19] 和 BridgeData [12] 提供了小规模的数据集,分别包含2.1k和 $7.2\mathrm{k}$ 条轨迹。更大的数据集,如RT1 [14] ( $130\mathrm{k\Omega}$ 条轨迹),虽然扩展了范围,但仍局限于少数环境和技能。Open X-Embodiment [6] 将各种数据集整合为统一格式,增长到超过240万条轨迹,但由于其在不同实体、观察视角和数据质量上的显著差异,整体效果受限。最近,DROID [7] 通过众包演示向扩展场景多样性迈进,但在数据规模和质量控制方面仍有不足。上述数据集普遍面临数据规模、任务实用性和场景自然性的限制,加之质量保证不足和硬件限制,阻碍了通用策略的训练。如表 I 所示,我们的数据集充分解决了这些差距。我们构建了一个跨越五个场景的数据收集设施,以重建现实世界的多样性和真实性。通过严格的验证协议,由熟练的远程操作员收集了超过100万条轨迹,AgiBot World 利用配备视觉触觉传感器和灵巧手的仿人机器人实现多模态演示,使其与以往的努力区分开来。与Pumacay等人 [20] 作为评估泛化能力的模拟基准不同,我们提出的是一个包含数据、模型、基准和生态系统的全栈平台。

TABLE I: Comparison to existing datasets. AgiBot World features the largest number of trajectories to date. We replicate real-world environment at a 1:1 scale for the industrial and retail scenarios, which are barely present before. Extensive human annotations are offered, including item, scene, skill (sub-task segmented), and task-level annotations. Notably, to expand data applicability and potential, we include imperfect data (i.e., failure recovery data with annotated error states) and tasks with dexterous hands. To ensure data quality, we adopt a human-in-the-loop philosophy: the policy learning is performed on collected demonstrations. The deployment results are adopted as feedback to improve the collection protocol.

表 1: 与现有数据集的比较。AgiBot World 拥有迄今为止最多的轨迹数据。我们以 1:1 的比例复制了工业和零售场景的真实环境,这些场景在之前几乎没有出现过。提供了广泛的人工标注,包括物品、场景、技能(子任务分割)和任务级别的标注。值得注意的是,为了扩展数据的适用性和潜力,我们包含了不完美的数据(即带有标注错误状态的失败恢复数据)和需要灵巧手的任务。为了确保数据质量,我们采用了人在环中的理念:策略学习是在收集的演示数据上进行的。部署结果被用作反馈来改进收集协议。

| 数据集 | 轨迹数 | 技能数 | 场景数 | 详细标注 | 相机校准 | 手臂类型 | 灵巧手 | 失败恢复 | 人在环中 | 收集方式 |

|---|---|---|---|---|---|---|---|---|---|---|

| RoboNet [11] | 162k | n/a | 10 | 单臂 | × | × | 脚本化 | |||

| BridgeData [12] | 7.2k | 4 | 12 | 单臂 | 人工遥控 | |||||

| BC-Z [13] | 26k | 3 | 1 | 单臂 | × | 人工遥控 | ||||

| RT-1 [14] | 130k | 8 | 2 | 单臂 | × | × | 人工遥控 | |||

| RH20T [15] | 13k | 33 | 7 | 单臂 | × | 人工遥控 | ||||

| RoboSet [16] | 98.5k | 6 | 11 | 单臂 | × | × | 30%人工/70%脚本化 | |||

| BridgeData aV2[17] | 60.1k | 13 | 24 | × | 单臂 | × | × | 85%人工/15%脚本化 | ||

| DROID [7] | 76k | 86 | 564 | 单臂 | × | 人工遥控 | ||||

| RoboMIND [18] | 55k | 36 | n/a | × | 单臂+双臂 | 人工遥控 | ||||

| Open X-Embodiment [6] | 1.4M | 217 | 311 | (×) | 单臂+双臂 | 数据集聚合 | ||||

| AgiBotWorld Dataset | 1M+ | 87 | 106 | 双臂 | 人工遥控 |

Policy learning at scale. Robotic foundation models often co-evolve with the development of dataset scale, equipping robots with escalating general-purpose capabilities through diverse, large-scale training. Several prior arts use web-scale video only to facilitate policy learning given the limited scale of action-labeled robot datasets [21], [22], [23]. Another line of work lies in the use of large, end-to-end models trained on robot trajectories with robotics data scaling up [4], [24], [14], [25]. For instance, RDT [10] employs Diffusion Transformers, initially pre-trained on heterogeneous multirobot datasets and fine-tuned on over 6k dual-arm trajectories, showcasing the benefits of pre-training on diverse sources. $\pi_{0}$ [26] uses a pre-trained VLM backbone and a flow-based action expert, advancing dexterous manipulation for complex tasks like laundry. LAPA [27] introduces the use of latent actions as pre-training targets; however, its latent planning capability is not preserved for downstream tasks. Building on a variety of innovative ideas from recent research, we advance the field by transferring web-scale knowledge to robotic control through the adaptation of vision-language models (VLMs) with latent actions, leveraging both human videos and robot data for scalable training. Our work demonstrates how the integration of a latent action planner enhances long-horizon task execution and enables more efficient policy learning, significantly improving upon existing generalist policies.

大规模策略学习。机器人基础模型通常与数据集规模的发展共同演进,通过多样化、大规模的训练,赋予机器人不断提升的通用能力。鉴于动作标注的机器人数据集规模有限,一些先前的研究仅使用网络规模的视频来促进策略学习 [21], [22], [23]。另一类研究则侧重于使用大规模、端到端的模型,这些模型在机器人轨迹上进行训练,随着机器人数据的扩展而发展 [4], [24], [14], [25]。例如,RDT [10] 采用了 Diffusion Transformers,最初在异构的多机器人数据集上进行预训练,并在超过 6k 的双臂轨迹上进行微调,展示了在多样化数据源上进行预训练的优势。$\pi_{0}$ [26] 使用了预训练的 VLM 骨干网络和基于流的动作专家,提升了复杂任务(如洗衣)中的灵巧操作能力。LAPA [27] 引入了潜在动作作为预训练目标;然而,其潜在规划能力并未保留用于下游任务。基于近期研究中的各种创新思想,我们通过将网络规模的知识转移到机器人控制中,利用视觉语言模型 (VLMs) 和潜在动作,结合人类视频和机器人数据进行可扩展训练,推动了该领域的发展。我们的工作展示了潜在动作规划器的集成如何增强长期任务执行能力,并实现更高效的策略学习,显著改进了现有的通用策略。

III. AGIBOT WORLD: PLATFORM AND DATA

III. AGIBOT 世界:平台与数据

AgiBot World is a full-stack and open-source embodied intelligence ecosystem. Based on the hardware platform developed by us, AgiBot G1, we construct AgiBot World— an open-source robot manipulation dataset collected by more than 100 homogeneous robots, providing high-quality data for challenging tasks spanning a wide spectrum of real-life scenarios. The latest version contains 1,001,552 trajectories, with a total duration of 2976.4 hours, covering 217 specific tasks, 87 skills, and 106 scenes. We go beyond basic tabletop tasks such as pick-and-place in lab environments; instead, concentrate on real-world scenarios involving dual-arm manipulation, dexterous hands, and collaborative tasks. AgiBot World aims to provide an inclusive benchmark to drive the future development of advanced and robust algorithms.

AgiBot World 是一个全栈开源的具身智能生态系统。基于我们开发的硬件平台 AgiBot G1,我们构建了 AgiBot World——一个由 100 多台同构机器人收集的开源机器人操作数据集,为涵盖广泛现实生活场景的具有挑战性的任务提供高质量数据。最新版本包含 1,001,552 条轨迹,总时长为 2976.4 小时,涵盖 217 个具体任务、87 项技能和 106 个场景。我们超越了实验室环境中的基本桌面任务,如拾取和放置;而是专注于涉及双臂操作、灵巧手和协作任务的现实场景。AgiBot World 旨在提供一个包容性的基准,以推动先进且鲁棒的算法的未来发展。

We plan to release all resources to enable the community build upon AgiBot World. The dataset is available under the CC BY-NC-SA 4.o license, along with the model checkpoints and code.

我们计划发布所有资源,以便社区能够在AgiBot World的基础上进行构建。数据集、模型检查点和代码均可在CC BY-NC-SA 4.0许可下获取。

A.Hardware:A Versatile Humanoid Robot

A. 硬件:多功能人形机器人

The hardware platform is the cornerstone of AgiBot World, determining the lower limit of its quality. The standar diz ation of hardware is also the key to streamlining distributed data collection and ensuring reproducible results. We meticulously develop a novel hardware platform for AgiBot World, distinguished by visuo-tactile sensors, durable 6-DoF dexterous hands with humanoid configuration.

硬件平台是AgiBot World的基石,决定了其质量的下限。硬件的标准化也是简化分布式数据收集和确保结果可重复性的关键。我们精心开发了一种新颖的硬件平台,其特点在于视觉触觉传感器、耐用的人形配置6自由度灵巧手。

As illustrated in Fig. 1, our robotic platform features dual 7-DoF arms, a mobile chassis, and an adjustable waist. The end effectors are modular, allowing for the use of either a standard gripper or a 6-DoF dexterous hand, depending on task requirements. For tasks necessitating tactile feedback, a gripper equipped with visuo-tactile sensors is utilized. The robot is outfitted with eight cameras: an RGB-D camera and three fisheye cameras for the front view, RGB-D or fisheye cameras mounted on each end-effector, and two fisheye cameras positioned at the rear. Image observations and proprio ce pti ve states, including joint and end-effector positions, are recorded at a control frequency of $30~\mathrm{Hz}$

如图 1 所示,我们的机器人平台配备了双 7 自由度手臂、一个移动底盘和一个可调节腰部。末端执行器是模块化的,可以根据任务需求使用标准夹持器或 6 自由度灵巧手。对于需要触觉反馈的任务,使用配备视觉触觉传感器的夹持器。机器人配备了八个摄像头:一个 RGB-D 摄像头和三个用于前视的鱼眼摄像头,每个末端执行器上安装的 RGB-D 或鱼眼摄像头,以及位于后部的两个鱼眼摄像头。图像观测和本体感知状态(包括关节和末端执行器位置)以 $30~\mathrm{Hz}$ 的控制频率记录。

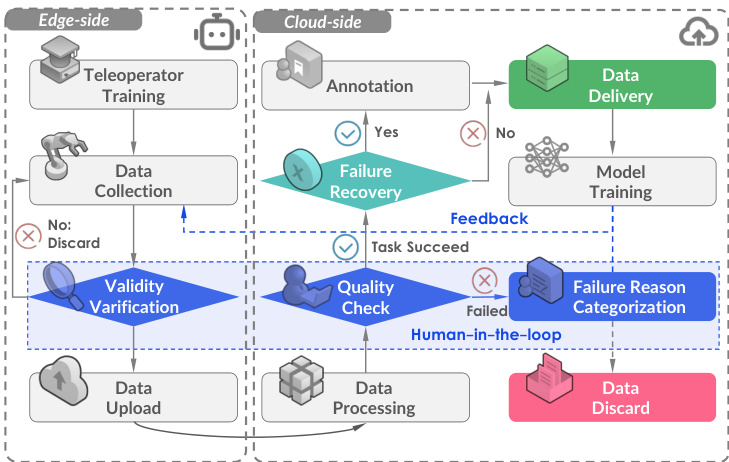

Fig. 2: Data collection pipeline. We embrace a human-inthe-loop framework to ensure high quality, enriched with detailed annotations and error recovery behaviors. Human feedback plays a critical role not only in post-collection review but also in actively guiding the data collection process, which is largely overlooked in prior efforts.

图 2: 数据收集流程。我们采用人机协作框架以确保高质量,并辅以详细的注释和错误恢复行为。人类反馈不仅在收集后的审查中起着关键作用,还在积极引导数据收集过程中发挥重要作用,这一点在之前的工作中大多被忽视。

We employ two tele operation systems: VR headset control and whole-body motion capture control. The VR controller maps the hand gesture to the end-effector translation and rotation, which is subsequently converted to joint angles through inverse kinematics. The thumb sticks and buttons on the controller enable robot base and body movement, while the trigger buttons control end-effector actuation. However, the VR controller restricts the dexterous hand to only a few predefined gestures. To extensively unlock our robot's capabilities, we adapt a motion capture system which records the data of human joints, including the fingers, and maps them to robot posture, enabling more nuanced control, including individual finger movements, torso pose, and head orientation. This system provides posture fexibility and execution precision that are required in achieving more complex manipulation tasks.

我们采用两种远程操作系统:VR头戴设备控制和全身动作捕捉控制。VR控制器将手势映射到末端执行器的平移和旋转,随后通过逆运动学转换为关节角度。控制器上的拇指摇杆和按钮用于控制机器人底座和身体的移动,而触发按钮则控制末端执行器的动作。然而,VR控制器限制了灵巧手只能执行少数预定义的手势。为了充分发挥我们机器人的能力,我们采用了动作捕捉系统,该系统记录包括手指在内的人体关节数据,并将其映射到机器人姿态,从而实现更精细的控制,包括单个手指的移动、躯干姿势和头部方向。该系统提供了实现更复杂操作任务所需的姿态灵活性和执行精度。

B. Data Collection: Protocol and Quality

B. 数据收集:协议与质量

The data collection session, as shown in Fig. 2, can be broadly divided into three phases. (1) Before formally commencing data collection, we first conduct preliminary data acquisition to validate the feasibility of each task and establish corresponding collection standards. (2) After feasibility validation and review of the collection standards, skilled tele operators arrange the initial scene and formally begin data collection according to the established standards. All data undergoes an initial validity verification locally, such as verifying the absence of missing frames. Once the data is confirmed to be complete, it is uploaded to the cloud for the next phase. (3) During post-processing, the data annotators will verify whether each episode meets the collection standards established in phase 1 and provide language annotations.

数据收集会话,如图 2 所示,可以大致分为三个阶段。(1) 在正式启动数据收集之前,我们首先进行初步数据采集,以验证每个任务的可行性并建立相应的收集标准。(2) 在可行性验证和收集标准审查之后,熟练的远程操作员安排初始场景,并根据既定标准正式开始数据收集。所有数据在本地进行初步有效性验证,例如验证是否存在缺失帧。一旦数据被确认为完整,它将被上传到云端进行下一阶段。(3) 在后处理过程中,数据标注员将验证每个片段是否符合第一阶段建立的收集标准,并提供语言注释。

Failure recovery. During data collection, tele operators may occasionally commit errors, such as inadvertently dropping objects while manipulating the robotic arms. However, they are often able to recover from these errors and successfully complete the task without requiring a full re config uration of the setup. Rather than discarding such trajectories, we retain them and manually annotate each with corresponding failure reasons and timestamps. These trajectories, referred to as failure recovery data, constitute approximately one percent of the dataset. We consider them invaluable for achieving policy alignment [28] and failure reflection [29], essential for advancing the next generation of robot foundation models.

故障恢复。在数据收集过程中,远程操作员可能会偶尔犯错,例如在操作机械臂时无意中掉落物体。然而,他们通常能够从这些错误中恢复并成功完成任务,而无需完全重新配置设置。我们不会丢弃这些轨迹,而是保留它们,并手动为每个轨迹标注相应的故障原因和时间戳。这些轨迹被称为故障恢复数据,约占数据集的百分之一。我们认为这些数据对于实现策略对齐 [28] 和故障反思 [29] 至关重要,这对于推动下一代机器人基础模型的发展至关重要。

Human-in-the-loop. Concurrent with feedback collection from data annotators, we adopt a human-in-the-loop approach to assess and refine data quality. This process involves an iterative cycle of collecting a small set of demonstrations, training a policy, and deploying the resulting policy to evaluate data availability. Based on the policy's performance, we iterative ly refine the data collection pipeline to address identified gaps or inefficiencies. For instance, during real-world deployment, the model exhibits prolonged pauses at the onset of actions, aligning with data annotator feedback highlighting inconsistent transitions and excessive idle time in the collected data. In response, we revise the data collection protocols and introduce a post-processing step to eliminate idle frames, thereby enhancing the dataset's overall utility for policy learning. This feedback-driven methodology ensures continuous improvement in data quality.

人机协作。在从数据标注员收集反馈的同时,我们采用人机协作的方法来评估和改进数据质量。这一过程包括一个迭代循环:收集一小部分演示数据,训练策略,并部署生成的策略以评估数据的可用性。根据策略的表现,我们迭代地改进数据收集流程,以解决发现的差距或低效问题。例如,在实际部署中,模型在动作开始时表现出长时间的停顿,这与数据标注员反馈中指出的收集数据中不一致的转换和过多的空闲时间一致。为此,我们修订了数据收集协议,并引入了一个后处理步骤来消除空闲帧,从而提高了数据集在策略学习中的整体效用。这种反馈驱动的方法确保了数据质量的持续改进。

C. Dataset Statistics and Analysis: Beyond Scale

C. 数据集统计与分析:超越规模

AgiBot World is developed through a large-scale data collection facility, which spans over 4,0o0 square meters. This extensive environment contains over 3,oo0 unique objects in a variety of scenes, meticulously designed to reflect realworld settings. The dataset covers a wide range of scenarios and scene setups, ensuring both scale and diversity in the pursuit of general iz able robot policy.

AgiBot World 是通过一个占地超过 4,000 平方米的大规模数据收集设施开发的。这个广阔的环境包含超过 3,000 个独特物体,分布在各种场景中,精心设计以反映现实世界的设置。该数据集涵盖了广泛的场景和场景设置,确保在追求通用机器人策略时兼具规模和多样性。

Reconstructing the diversity of the real world. Key statistics of our dataset are presented in Fig. 3. AgiBot World provides extensive coverage across five key domains: domestic, retail, industrial, restaurant, and office environments. Within each domain, we further define specific scene categories. For instance, the domestic domain includes detailed environments such as bedrooms, kitchens, living rooms, and balconies, while the retail domain features distinct areas like shelving units and fresh produce sections. Our dataset also features over 3,000 distinct objects, systematically categorized across various scenes. These objects span a wide range of everyday items, including food, furniture, clothing, electronic devices, and more. The distribution of object categories, as illustrated in Fig. 3(a), highlights the relative frequency of different object types within each scene.

重建现实世界的多样性。我们的数据集的关键统计数据如图 3 所示。AgiBot World 在五个关键领域提供了广泛的覆盖:家庭、零售、工业、餐厅和办公环境。在每个领域中,我们进一步定义了具体的场景类别。例如,家庭领域包括卧室、厨房、客厅和阳台等详细环境,而零售领域则包括货架和生鲜区等不同区域。我们的数据集还包含超过 3,000 种不同的物体,这些物体在各种场景中进行了系统分类。这些物体涵盖了广泛的日常物品,包括食品、家具、服装、电子设备等。如图 3(a) 所示,物体类别的分布突出了每个场景中不同物体类型的相对频率。

Fig. 3: Dataset Statistics. a) AgiBot World dataset covers the vast majority of robotic application scenarios, as well as a wide range of interactive objects. b) Our dataset features long-horizon tasks, with the majority of trajectories ranging from 30s to 60s. In contrast, widely used datasets, such as DROID, primarily consist of trajectories ranging from 5s to 20s, while OXE v1.0 predominantly contains trajectories within 5s. c) AgiBot World dataset focuses on valuable atomic skills, spanning a wide spectrum of skills, each supported by a minimum of 100 trajectories (red dashed line above).

图 3: 数据集统计。a) AgiBot World 数据集涵盖了绝大多数机器人应用场景,以及广泛的交互对象。b) 我们的数据集以长时任务为特色,大多数轨迹的时长在 30 秒到 60 秒之间。相比之下,广泛使用的数据集(如 DROID)主要由 5 秒到 20 秒的轨迹组成,而 OXE v1.0 主要包含 5 秒以内的轨迹。c) AgiBot World 数据集专注于有价值的原子技能,涵盖了广泛的技能范围,每个技能至少有 100 条轨迹支持(上方的红色虚线)。

Long-horizon manipulation. A distinguishing feature of the AgiBot World dataset is its emphasis on long-horizon manipulation. As shown in Fig. 3(b), prior datasets predominantly focus on tasks involving single atomic skills, with most trajectories lasting no more than 5 seconds. In contrast, AgiBot World is built upon continuous and complete tasks composed by multiple atomic skills, like “make a coffee". Trajectories in our dataset typically span approximately 30 seconds, some of which last over 2 minutes. We also provide key-frame and instruction annotation for each sub-step to facilitate policy learning in such challenging scenarios.

长时程操作。AgiBot World 数据集的一个显著特点是其强调长时程操作。如图 3(b) 所示,先前的数据集主要关注涉及单一原子技能的任务,大多数轨迹持续时间不超过 5 秒。相比之下,AgiBot World 建立在由多个原子技能组成的连续且完整的任务基础上,例如“制作咖啡”。我们数据集中的轨迹通常持续约 30 秒,有些甚至超过 2 分钟。我们还为每个子步骤提供了关键帧和指令注释,以促进在这种具有挑战性的场景中进行策略学习。

Comprehensive skill coverage. In terms of task design, while generic atomic skills, such as “pick-and-place", dominate the majority of tasks, we have intentionally incorporated tasks that emphasize less frequently used but highly valuable skills, such as “chop" and “plug" (as shown in Fig. 3(c)). This ensures that our dataset adequately represents a broad spectrum of skills, providing sufficient data for each to support robust policy learning.

全面的技能覆盖。在任务设计方面,虽然通用的原子技能(如“抓取和放置”)占据了大多数任务,但我们有意加入了强调较少使用但非常有价值的技能的任务,例如“切菜”和“插入”(如图 3(c) 所示)。这确保了我们的数据集能够充分代表广泛的技能范围,为每种技能提供足够的数据以支持稳健的策略学习。

IV. AGIBOT WORLD: MODEL

IV. AGIBOT WORLD: 模型

To effectively utilize our high-quality AgiBot World dataset and enhance the policy's general iz ability, we propose a hierarchical Vision-Language-Latent-Action (ViLLA) framework with three training stages, as depicted in Fig. 4. Compared to Vision-Language-Action (VLA) model where action is vision-language conditioned, the ViLLA model predicts latent action tokens, conditioned on the generation of subsequent robot control actions.

为了有效利用我们高质量的 AgiBot World 数据集并增强策略的泛化能力,我们提出了一个分层的视觉-语言-潜在动作 (ViLLA) 框架,包含三个训练阶段,如图 4 所示。与动作由视觉-语言条件化的视觉-语言-动作 (VLA) 模型相比,ViLLA 模型预测潜在动作 Token,条件化于后续机器人控制动作的生成。

In Stage 1, we project consecutive images into a latent action space by training an encoder-decoder latent action model (LAM) on internet-scale heterogeneous data. This allows the latent action to serve as an intermediate representation, bridging the gap between general image-text inputs and robotic actions. In Stage 2, these latent actions act as pseudolabels for the latent planner, facilitating embodiment-agnostic long-horizon planning and leveraging the general iz ability of the pre-trained VLM. Finally, in Stage 3, we introduce the action expert and jointly train it with the latent planner to support the learning of dexterous manipulation.

在第一阶段,我们通过在互联网规模的异构数据上训练编码器-解码器潜在动作模型(LAM),将连续图像投影到潜在动作空间中。这使得潜在动作能够作为中间表示,弥合通用图像-文本输入与机器人动作之间的差距。在第二阶段,这些潜在动作作为潜在规划器的伪标签,促进与具体实现无关的长时程规划,并利用预训练视觉语言模型(VLM)的泛化能力。最后,在第三阶段,我们引入动作专家,并与潜在规划器联合训练,以支持灵巧操作的学习。

A.Latent Action Model

A. 潜在动作模型

Despite considerable advancements in gathering diverse robot demonstrations, the volume of action-labeled robot data remains limited relative to web-scale datasets. To broaden the data pool by incorporating internet-scale human videos lacking action labels and cross-embodiment robot data, we employ latent actions [30] in Stage 1 to model the inverse dynamics of consecutive frames. This approach enables the transfer of real-world dynamics from heterogeneous data sources into universal manipulation knowledge.

尽管在收集多样化的机器人演示方面取得了显著进展,但与网络规模的数据集相比,带有动作标签的机器人数据量仍然有限。为了通过整合缺乏动作标签的互联网规模人类视频和跨体现机器人数据来扩大数据池,我们在第一阶段采用潜在动作 [30] 来建模连续帧的逆动力学。这种方法能够将来自异构数据源的真实世界动力学转化为通用的操作知识。

To extract latent actions from video frames ${I_{t},I_{t+H}}$ , the latent action model is constructed around an inverse dynamics model-based encoder $\mathbf{I}(z_{t}|I_{t},I_{t+H})$ and a forward dynamics model-based decoder $\mathbf{F}(I_{t+H}|I_{t},z_{t})$ . The encoder employs a spatial-temporal transformer [31] with casual temporal masks following Bruce et al. [30], while the decoder is a spatial transformer that takes the initial frame and disc ret i zed latent action tokens z = [,..,2- as input, with $k$ set to 4. The latent action tokens are quantized using a VQ-VAE objective [32], with a codebook of size $|C|$

为了从视频帧 ${I_{t},I_{t+H}}$ 中提取潜在动作,潜在动作模型围绕基于逆动力学模型的编码器 $\mathbf{I}(z_{t}|I_{t},I_{t+H})$ 和基于前向动力学模型的解码器 $\mathbf{F}(I_{t+H}|I_{t},z_{t})$ 构建。编码器采用了带有因果时间掩码的时空 Transformer [31],遵循 Bruce 等人的方法 [30],而解码器是一个空间 Transformer,它以初始帧和离散化的潜在动作 Token $z = [,..,2-$ 作为输入,其中 $k$ 设置为 4。潜在动作 Token 使用 VQ-VAE 目标 [32] 进行量化,码本大小为 $|C|$。

B.Latent Planner

B. 潜在规划器

With the aim of establishing a solid foundation for scene and object understanding and general reasoning ability, the ViLLA model harnesses a VLM pre-trained on web-scale vision-language data and incorporates a latent planner for embodiment-agnostic planning within the latent action space. We use InternVL2.5-2B [33] as the VLM backbone due to its strong transfer learning capabilities. The two-billion parameter scale has proven effective for robotic tasks in our preliminary experiments, as well as in prior studies [10], [26]. Multiview image observations are first encoded using InternViT before being projected into the language space. The latent planner consists of 24 transformer layers, which enable layer-by-layer conditioning from the VLM backbone with full bidirectional attention.

为了为场景和物体理解以及通用推理能力奠定坚实基础,ViLLA 模型利用了在网页规模视觉语言数据上预训练的 VLM (Vision-Language Model),并在潜在动作空间中集成了一个潜在规划器,以实现与具体实现无关的规划。我们使用 InternVL2.5-2B [33] 作为 VLM 的骨干网络,因为它具有强大的迁移学习能力。在我们的初步实验以及之前的研究 [10], [26] 中,两亿参数的规模已被证明对机器人任务有效。多视角图像观察首先使用 InternViT 进行编码,然后投影到语言空间中。潜在规划器由 24 层 Transformer 组成,这些层能够通过全双向注意力机制从 VLM 骨干网络逐层进行条件化。

Fig. 4: We propose GO-1, a generalist policy featuring general reasoning and long-horizon planning capabilities. The latent action model (LAM) learns universal action representations from web-scale video data (i.e., human videos from Ego4D), and quantizes them into discrete latent action tokens. The latent planner conducts temporal reasoning through latent action prediction, bridging the gap between image-text inputs and robot actions generated by the action expert.

图 4: 我们提出了 GO-1,这是一种具备通用推理和长时规划能力的通用策略。潜在动作模型 (LAM) 从网络规模的视频数据(即来自 Ego4D 的人类视频)中学习通用动作表示,并将其量化为离散的潜在动作 Token。潜在规划器通过潜在动作预测进行时间推理,弥合了图像-文本输入与动作专家生成的机器人动作之间的差距。

Specifically, given multiview input images $\left(I_{t}^{h},I_{t}^{l},I_{t}^{r}\right)$ (typically from the head, left wrist, and right wrist) at timestep $t$ , along with a language instruction $l$ describing the ongoing task, the latent planner predicts latent action tokens: $\bar{\mathbf{P}}\left(z_{t}|I_{t}^{h},I_{t}^{l},I_{t}^{r},l\right)$ , with supervision produced by the LAM encoder based on the head view: $z_{t}:=\mathbf{I}(I_{t}^{h},I_{t+H}^{h})$ .Since the latent action space is orders of magnitude smaller than the disc ret i zed low-level actions used in OpenVLA [4], this approach also facilitates the efficient adaptation of generalpurpose VLMs into robot policies.

具体来说,给定时间步 $t$ 的多视角输入图像 $\left(I_{t}^{h},I_{t}^{l},I_{t}^{r}\right)$ (通常来自头部、左手腕和右手腕),以及描述当前任务的语言指令 $l$,潜在规划器预测潜在动作 Token:$\bar{\mathbf{P}}\left(z_{t}|I_{t}^{h},I_{t}^{l},I_{t}^{r},l\right)$,其监督由基于头部视角的 LAM 编码器生成:$z_{t}:=\mathbf{I}(I_{t}^{h},I_{t+H}^{h})$。由于潜在动作空间比 OpenVLA [4] 中使用的离散化低级动作小几个数量级,这种方法也有助于将通用 VLM 高效适应为机器人策略。

C. Action Expert

C. 行动专家

To achieve high-frequency and dexterous manipulation, Stage 3 integrates an action expert that utilizes a diffusion objective to model the continuous distribution of low-level actions [34]. Although the action expert shares the same architectural framework as the latent planner, their objectives diverge: the latent planner generates disc ret i zed latent action tokens through masked language modeling, while the action expert regresses low-level actions via an iterative denoising process. Both expert modules are conditioned hierarchically on preceding modules, including the action expert itself, ensuring coherent integration and information flow within the dual-expert system.

为了实现高频和灵巧的操作,第三阶段集成了一个动作专家,该专家利用扩散目标来建模低级动作的连续分布 [34]。尽管动作专家与潜在规划器共享相同的架构框架,但它们的目标不同:潜在规划器通过掩码语言建模生成离散的潜在动作 Token,而动作专家则通过迭代去噪过程回归低级动作。这两个专家模块都在层次上依赖于前面的模块,包括动作专家本身,确保双专家系统内的连贯集成和信息流。

The action expert decodes low-level action chunks, denoted by $A_{t}=[a_{t},a_{t+1},...,a_{t+H}]$ with $H=30$ ,using prop rio ce pti ve state $p_{t}$ Oover an interval of $H$ timesteps: $\mathbf{A}\left(A_{t}|I_{t}^{h},I_{t}^{l},I_{t}^{r},p_{t},l\right)$ . During inference, the VLM, latent planner, and action expert are synergistic ally combined within the generalist policy GO-1, which initially predicts $k$ latent action tokens and subsequently conditions the denoising process to produce the final control signals.

动作专家解码低级动作块,表示为 $A_{t}=[a_{t},a_{t+1},...,a_{t+H}]$,其中 $H=30$,使用本体感知状态 $p_{t}$ 在 $H$ 个时间步长内进行解码:$\mathbf{A}\left(A_{t}|I_{t}^{h},I_{t}^{l},I_{t}^{r},p_{t},l\right)$。在推理过程中,视觉语言模型 (VLM)、潜在规划器和动作专家在通用策略 GO-1 中协同工作,首先预测 $k$ 个潜在动作 Token,然后通过去噪过程生成最终的控制信号。

V. EXPERIMENT AND ANALYSIS

五、实验与分析

We evaluate the real-world performance of policies pretrained on different data sources including the AgiBot World dataset, demonstrating the effectiveness credited from the GO-1 model in policy learning.

我们评估了在不同数据源(包括AgiBot World数据集)上预训练策略的实际表现,证明了GO-1模型在策略学习中的有效性。

A. Experiment Setup

A. 实验设置

1) Evaluation Tasks

1) 评估任务

Here we choose a comprehensive set of tasks that span various dimensions of policy capabilities from AgiBot World for evaluation, including tool-usage (Wipe Table), deformable objects manipulation (Fold Shorts), human-robot interaction (Handover Bottle), language-following (Restock Bev- erage), etc. Moreover, we design 2 unseen scenarios for each task, covering position generalization, visual distract or s, and language generalization, delivering thorough generalization evaluations for policies. The evaluated tasks, also partially shown in Fig. 5, are: 1) “Restock Bag": Pick up the snack from the cart and place it on the supermarket shelf; 2) “Table Bussing": Clear tabletop debris into the trash can; 3) “Pour Water': Grasp the kettle handle, lift the kettle and pour water into the cup; 4) “Restock Beverage": Pick up the bottled beverage from the cart and place it on the supermarket shelf; 5)“Fold Shorts": Fold the shorts laid flat on the table in half twice; 6) "Wipe Table": Clean water spills using the sponge. Scoring rubrics. The evaluation metric employs a normalized score, computed as the average across 10 rollouts per task, scenario, and method. Each episode scores 1.0 for full success, with fractional scores for partial success, enabling a nuanced performance assessment.

我们选择了一组全面的任务,这些任务涵盖了来自AgiBot世界的策略能力的多个维度进行评估,包括工具使用(擦桌子)、可变形物体操作(折叠短裤)、人机交互(递送瓶子)、语言跟随(补货饮料)等。此外,我们为每个任务设计了2个未见过的场景,涵盖位置泛化、视觉干扰和语言泛化,为策略提供了全面的泛化评估。评估的任务(部分如图5所示)包括:1)“补货零食”:从购物车中拿起零食并将其放在超市货架上;2)“清理桌面”:将桌面上的垃圾清理到垃圾桶中;3)“倒水”:抓住水壶把手,提起水壶并将水倒入杯中;4)“补货饮料”:从购物车中拿起瓶装饮料并将其放在超市货架上;5)“折叠短裤”:将平放在桌子上的短裤对折两次;6)“擦桌子”:使用海绵清理洒出的水。

评分标准。评估指标采用标准化分数,计算为每个任务、场景和方法在10次运行中的平均值。每次运行在完全成功时得分为1.0,部分成功时得分为分数,从而实现细致的性能评估。

Fig. 5: Is GO-1 a more powerful robot generalist policy? We evaluate GO-1 against previous generalist policy RDT-1B and our baseline without the latent planner, with all policies pre-trained on AgiBot World beta. Across all tasks and comparisons, GO-1 outperforms baselines by a large margin. The incorporation of latent planner boosts performance on complex tasks such as “Fold Shorts"’ and improves general iz ability in task “Restock Beverage" in great extent.

图 5: GO-1 是一个更强大的机器人通用策略吗?我们将 GO-1 与之前的通用策略 RDT-1B 以及没有潜在规划器的基线进行了比较,所有策略都在 AgiBot World beta 上进行了预训练。在所有任务和比较中,GO-1 都大幅超越了基线。潜在规划器的加入显著提升了复杂任务(如“折叠短裤”)的性能,并在“补充饮料”任务中大幅提高了通用能力。

Fig. 6: Does AgiBot World dataset improve policy performance and general iz ability? Policies pre-trained on our dataset outperform those trained on OXE in both seen (0.77 v.s. 0.47) and out-of-distribution scenarios $(0.67~\nu.s.~0.38)$

图 6: AgiBot World 数据集是否提升了策略性能和泛化能力?在我们的数据集上预训练的策略在已知场景 (0.77 对比 0.47) 和分布外场景 $(0.67~\nu.s.~0.38)$ 中均优于在 OXE 上训练的策略。

2) Implementation Details

2) 实现细节

The AgiBot World alpha represents the partial subset of our dataset, constituting approximately $14%$ of the fullversion, AgiBot World beta (a.k.a. last row in Tab. I). Following the completion of the third-stage pre-training, the pre-trained GO-1 exhibits basic competency in task completion. Unless otherwise specified, we further enhance the model by fine-tuning it using high-quality, task-specific demonstrations, enabling adaptation to new tasks for evaluation. For GO-1, fine-tuning is conducted with a learning rate of 2e-5, a batch size of 768, and 30,000 optimization steps.

AgiBot World alpha 是我们数据集的部分子集,约占完整版本 AgiBot World beta (即表 I 中的最后一行) 的 $14%$。在完成第三阶段预训练后,预训练的 GO-1 展现出基本的任务完成能力。除非另有说明,我们通过使用高质量、任务特定的演示对模型进行微调,使其能够适应新任务以进行评估。对于 GO-1,微调时的学习率为 2e-5,批量大小为 768,优化步数为 30,000。

B. Does AgiBot World boost policy learning at scale?

B. AgiBot World 是否促进了大规模策略学习?

We choose the open-source RDT [10] model to study how much the AgiBot World dataset can help policy learning. The task completion scores for three tasks are detailed in Fig. 6. Models pre-trained on the AgiBot World dataset demonstrate a significant improvement in the “Table Bussing” task, nearly tripling performance. On average, the completion score increases by 0.30 and 0.29 for in-distribution and out-of-distribution setups, respectively. Notably, the AgiBot World a lpha dataset, despite having a significantly smaller data volume than OXE (e.g., 236h compared to ${\sim}2000\mathrm{h})$ achieves a higher success rate, underscoring the exceptional data quality of our dataset.

我们选择开源的 RDT [10] 模型来研究 AgiBot World 数据集对策略学习的帮助程度。三个任务的任务完成分数详见图 6。在 AgiBot World 数据集上预训练的模型在“Table Bussing”任务中表现出显著提升,性能几乎提高了三倍。平均而言,在分布内和分布外设置下,完成分数分别提高了 0.30 和 0.29。值得注意的是,AgiBot World alpha 数据集尽管数据量明显小于 OXE(例如,236 小时相比 ${\sim}2000\mathrm{h}$),但取得了更高的成功率,这凸显了我们数据集的数据质量之优异。

Fig. 7: Further analysis on: a) how model performance scales with data size, and b) the impact of filtering undesirable data through manual review on policy learning.

图 7: 进一步分析:a) 模型性能如何随数据规模扩展,以及 b) 通过人工审查过滤不良数据对策略学习的影响。

C. Is GO-1 a more capable generalist policy?

C. GO-1 是否是一个更强大的通用策略?

We evaluate GO-1 on five tasks of varying complexity, categorized by their visual richness and task horizon. The results, as shown in Fig. 5, are averaged over 30 trials per task, with 10 trials conducted in a seen setup and 20 trials under variations or distractions. GO-1 significantly outperforms RDT, particularly in tasks such as “Pour Water", which demands robustness to object positions, and “Restock Beverage", which requires visual robustness and instructionfollowing capabilities. The inclusion of the latent planner in the ViLLA model further improves performance, resulting in an average improvement of 0.12 task completion score.

我们在五个不同复杂度的任务上评估了GO-1,这些任务根据其视觉丰富度和任务范围进行了分类。结果如图5所示,每个任务进行了30次试验的平均值,其中10次试验在已知设置中进行,20次试验在变化或干扰条件下进行。GO-1显著优于RDT,特别是在“倒水”任务中,该任务要求对物体位置的鲁棒性,以及在“补充饮料”任务中,该任务需要视觉鲁棒性和指令跟随能力。ViLLA模型中包含的潜在规划器进一步提高了性能,任务完成分数平均提高了0.12。

D. Does GO-1's ability scale with data size?

D. GO-1 的能力是否随数据规模扩展?

To investigate whether a power-law scaling relationship exists between the size of pre-training data and policy capability, we conduct an analysis using $10%$ subsets of the alpha, $100%$ alpha, and beta dataset, where the number of training trajectories are ranged from $9.2\mathrm{k}$ to1M.We evaluate the out-of-the-box performance of resulting policies on four seen tasks in pre-training. As shown in Fig. 7(a), the policy's performance exhibits a predictable power-law scaling relationship with the number of trajectories, supported by a Pearson correlation coefficient of $r=0.97$

为了探究预训练数据规模与策略能力之间是否存在幂律缩放关系,我们使用 alpha 数据集的 $10%$ 子集、$100%$ 的 alpha 数据集以及 beta 数据集进行分析,其中训练轨迹的数量范围从 $9.2\mathrm{k}$ 到 1M。我们在预训练中的四个已知任务上评估了生成策略的开箱即用性能。如图 7(a) 所示,策略性能与轨迹数量呈现出可预测的幂律缩放关系,Pearson 相关系数为 $r=0.97$。

E. How does data quality impact policy learning?

E. 数据质量如何影响策略学习?

We explore the impact of quality checks introduced in our human-in-the-loop data collection on policy learning. Specifically, we provide an ablation study by fine-tuning an RDT model pre-trained on the alpha dataset using both verified (528 trajectories) and unverified (482 trajectories) data from the “"Wipe Table” task. As shown in Fig. 7(b), being larger in quantity does not necessarily translate to improved performance, while a smaller set of human-verified data yields a 0.18 boost in the completion score, underscoring the importance of high-quality data for policy learning.

我们探讨了在人机交互数据收集中引入的质量检查对策略学习的影响。具体来说,我们通过使用“擦桌子”任务中的已验证数据(528条轨迹)和未验证数据(482条轨迹)对在alpha数据集上预训练的RDT模型进行微调,提供了一个消融研究。如图7(b)所示,数据量更大并不一定意味着性能提升,而较小规模的人工验证数据使完成分数提高了0.18,这突显了高质量数据对策略学习的重要性。

VI. CONCLUSION

VI. 结论

We introduce AgiBot World, an open-source ecosystem aimed at democratizing access to large-scale, high-quality robot learning datasets. It is complete with toolchains and foundation models to advance embodied general intelligence through community collaboration. Our dataset distinguishes itself through unparalleled scale, diversity, and quality, underpinned by carefully crafted tasks. Policy learning evaluations confirm AgiBot World's value in enhancing performance and general iz ability. To further explore its impact, we develop GO-1, a generalist policy utilizing latent actions for webscale pre-training. GO-1 excels in real-world complex tasks, outperforming existing generalist policies and demonstrating scalable performance with increased data volume. We invite the broader community to collaborate in fostering an ecosystem and maximizing the potential of our extensive dataset.

我们推出了 AgiBot World,这是一个旨在普及大规模、高质量机器人学习数据集的开源生态系统。它配备了工具链和基础模型,通过社区合作推动具身通用智能的发展。我们的数据集以其无与伦比的规模、多样性和质量脱颖而出,这些特点得益于精心设计的任务。策略学习评估证实了 AgiBot World 在提升性能和泛化能力方面的价值。为了进一步探索其影响,我们开发了 GO-1,这是一种利用潜在动作进行网络规模预训练的通用策略。GO-1 在现实世界的复杂任务中表现出色,超越了现有的通用策略,并展示了随着数据量增加而扩展的性能。我们邀请更广泛的社区合作,共同培育这一生态系统,并最大化我们广泛数据集的潜力。

REFERENCES

参考文献

[34] Q. Bu, H. Li, L. Chen, J. Cai, J. Zeng, H. Cui, M. Yao, and Y. Qiao, "Towards synergistic, generalized, and efficient dual-system for robotic manipulation”arXiv preprint arXiv:2410.08001,2024.6

[34] Q. Bu, H. Li, L. Chen, J. Cai, J. Zeng, H. Cui, M. Yao, 和 Y. Qiao, "Towards synergistic, generalized, and efficient dual-system for robotic manipulation" arXiv 预印本 arXiv:2410.08001, 2024.6

ACKNOWLEDGEMENT

致谢

CHANGE LOG

更新日志

Jan 2025: agibot-world alpha version release · A sub-split (around $10%$ )of the dataset, containing 92,214 trajectories.

2025年1月:agibot-world alpha版本发布 · 数据集的子集(约10%),包含92,214条轨迹。

We thank Remi Cadene and the LeRobot community for their support and collaboration. In addition, we are grateful to Shu Jiang, Chengshi Shi, Shenyuan Gao, Yixuan Pan, Yi Xin and Peng Gao for their fruitful discussions. We also extend our gratitude to the open-source community for the insightful feedback and engagement throughout this project.

我们感谢 Remi Cadene 和 LeRobot 社区的支持与合作。此外,我们感谢 Shu Jiang、Chengshi Shi、Shenyuan Gao、Yixuan Pan、Yi Xin 和 Peng Gao 的富有成效的讨论。我们还要感谢开源社区在整个项目中提供的深刻反馈和积极参与。

CONTRIBUTIONS

贡献

Core Contributors

核心贡献者

Algorithm

算法

March 2025: full data release and technical report · Initial submission to arXiv and reserach blog · This version includes the complete manuscript with all sections: introduction, methodology, results, discussion, and appendix.

2025年3月:完整数据发布与技术报告 · 首次提交至arXiv和研究博客 · 此版本包含完整的手稿,涵盖所有部分:引言、方法论、结果、讨论和附录。

HOW TO CITE

如何引用

. Github repository

Github 仓库

@misc{contributors 2024 agi bot world repo, title $=$ {AgiBot World Colosseo}, author $=$ {AgiBot World Colosseo contributors}, how published={\url{https://github.com/ Open Drive Lab/AgiBot-World}}, year $=$ {2024}

@misc{contributors 2024 agi bot world repo, title $=$ {AgiBot World Colosseo}, author $=$ {AgiBot World Colosseo contributors}, how published={\url{https://github.com/ Open Drive Lab/AgiBot-World}}, year $=$ {2024}

Product & Ecosystem

产品与生态系统

System architecture design, project management, community engagement Chengyue Zhao, Shukai Yang, Huijie Wang, Yongjian Shen, Jialu Li, Jiaqi Zhao, Jianchao Zhu, Jiaqi Shan

系统架构设计、项目管理、社区参与

陈悦赵、舒凯杨、惠杰王、永健沈、佳璐李、佳琪赵、建超朱、佳琪单

Manuscript Preparation

稿件准备

Manuscript outline, writing, and revising Jisong Cai, Chonghao Sima

稿件大纲、撰写与修订 Jisong Cai, Chonghao Sima

Data Curation

数据管理

Data collection, quality check Cheng Ruan, Jia Zeng, Lei Yang

数据收集、质量检查 阮成、曾佳、杨磊

Hardware & Software Development

硬件与软件开发

Hardware design, embedded software development Yuehan Niu, Cheng Jing, Mingkang Shi, Chi Zhang, Qinglin Zhang, Cunbiao Yang, Wenhao Wang, Xuan Hu

硬件设计、嵌入式软件开发 牛月涵、景程、施明康、张驰、张青林、杨存彪、王文浩、胡轩

Project Co-lead and Advising

项目联合负责人与指导

· In the technical report, we list the authors in alphabetical order by their last names.

· 在技术报告中,我们按作者的姓氏字母顺序列出作者。

@article{contributors 2025 agi bot world, title $=$ {{AgiBot World Colosseo: A Large-scale Manipulation Platform for Scalable and Intelligent Embodied Systems}}, author $=$ {AgiBot-World-Contributors and Bu, Qingwen and Cai, Jisong and Chen, Li and Cui, Xiuqi and Ding, Yan and Feng, Siyuan and Gao, Shenyuan and He, Xindong and Huang, Xu and Jiang, Shu and Jiang, Yuxin and Jing, Cheng and Li, Hongyang and Li, Jialu and Liu, Chiming and Liu, Yi and Lu, Yuxiang and Luo, Jianlan and Luo, Ping and Mu, Yao and Niu, Yuehan and Pan, Yixuan and Pang, Jiangmiao and Qiao, Yu and Ren, Guanghui and Ruan, Cheng and Shan, Jiaqi and Shen, Yongjian and Shi, Chengshi and Shi, Mingkang and Shi, Modi and Sima, Chonghao and Song, Jianheng and Wang, Huijie and Wang, Wenhao and Wei, Dafeng and Xie, Chengen and Xu, Guo and Yan, Junchi and Yang, Cunbiao and Yang, Lei and Yang, Shukai and Yao, Maoqing and Zeng, Jia and Zhang, Chi and Zhang, Qinglin and Zhao, Bin and Zhao, Chengyue and Zhao, Jiaqi and Zhu, Jianchao}, journal $=$ {arXiv preprint arXiv:2503.06669}, year $=$ {2025}

@article{contributors 2025 agi bot world, title $=$ {{AgiBot World Colosseo: 大规模可扩展智能体系统的操作平台}}, author $=$ {AgiBot-World-Contributors and 卜庆文 and 蔡继松 and 陈力 and 崔秀琪 and 丁岩 and 冯思远 and 高申远 and 何新东 and 黄旭 and 江舒 and 姜雨欣 and 景程 and 李宏阳 and 李佳璐 and 刘驰明 and 刘毅 and 陆宇翔 and 罗建兰 and 罗平 and 穆尧 and 牛月涵 and 潘逸轩 and 庞江淼 and 乔宇 and 任光辉 and 阮成 and 单佳琪 and 沈永健 and 石成石 and 石明康 and 石默迪 and 司马崇浩 and 宋建恒 and 王慧杰 and 王文浩 and 魏大风 and 谢晨根 and 徐国 and 严俊驰 and 杨存彪 and 杨磊 and 杨书凯 and 姚茂清 and 曾佳 and 张驰 and 张庆林 and 赵斌 and 赵成岳 and 赵佳琪 and 朱建超}, journal $=$ {arXiv preprint arXiv:2503.06669}, year $=$ {2025}