By moving from information to action (think virtual coworkers able to complete complex workflows), the technology promises a new wave of productivity and innovation.

By Lareina Yee, Michael Chui, and Roger Roberts with Stephen Xu

Over the past couple of years, the world has marveled at the capabilities and possibilities unleashed by generative AI (gen AI). Foundation models such as large language models (LLMs) can perform impressive feats, extracting insights and generating content across numerous mediums, such as text, audio, images, and video. But the next stage of gen AI is likely to be more transformative.

We are beginning an evolution from knowledge-based, gen AI-powered tools (say, chatbots that answer questions and generate content) to gen AI-enabled "agents" that use foundation models to execute complex, multistep workflows across a digital world. In short, the technology is moving from thought to action.

Broadly speaking, "agentic" systems refer to digital systems that can independently interact in a dynamic world. While versions of these software systems have existed for years, the natural-language capabilities of gen AI unveil new possibilities, enabling systems that can plan their actions, use online tools to complete those tasks, collaborate with other agents and people, and learn to improve their performance. Gen AI agents eventually could act as skilled virtual coworkers, working with humans in a seamless and natural manner. A virtual assistant, for example, could plan and book a complex personalized travel itinerary, handling logistics across multiple travel platforms. Using everyday language, an engineer could describe a new software feature to a programmer agent, which would then code, test, iterate, and deploy the tool it helped create.

Agentic systems traditionally have been difficult to implement, requiring laborious, rule-based programming or highly specific training of machine-learning models. Gen AI changes that. When agentic systems are built using foundation models (which have been trained on extremely large and varied unstructured data sets) rather than predefined rules, they have the potential to adapt to different scenarios in the same way that LLMs can respond intelligibly to prompts on which they have not been explicitly trained. Furthermore, using natural language rather than programming code, a human user could direct a gen AI-enabled agent system to accomplish a complex workflow. A multiagent system could then interpret and organize this workflow into actionable tasks, assign work to specialized agents, execute these refined tasks using a digital ecosystem of tools, and collaborate with other agents and humans to iteratively improve the quality of its actions.

In this article, we explore the opportunities that the use of gen AI agents presents. Although the technology remains in its nascent phase and requires further technical development before it's ready for business deployment, it's quickly attracting attention. In the past year alone, Google, Microsoft, OpenAI, and others have invested in software libraries and frameworks to support agentic functionality. LLM-powered applications such as Microsoft Copilot, Amazon Q, and Google's upcoming Project Astra are shifting from being knowledge-based to becoming more action-based. Companies and research labs such as Adept, crewAI, and Imbue also are developing agent-based models and multiagent systems. Given the speed with which gen AI is developing, agents could become as commonplace as chatbots are today.

What value can agents bring to businesses?

The value that agents can unlock comes from their potential to automate a long tail of complex use cases characterized by highly variable inputs and outputs, use cases that have historically been difficult to address in a cost- or time-efficient manner. Something as simple as a business trip, for example, can involve numerous possible itineraries encompassing different airlines and flights, not to mention hotel rewards programs, restaurant reservations, and off-hours activities, all of which must be handled across different online platforms. While there have been efforts to automate parts of this process, much of it still must be done manually. This is in large part because the wide variation in potential inputs and outputs makes the process too complicated, costly, or time-intensive to automate.

Gen AI-enabled agents can ease the automation of complex and open-ended use cases in three important ways:

Agents can manage multiplicity. Many business use cases and processes are characterized by a linear workflow, with a clear beginning and series of steps that lead to a specific resolution or outcome. This relative simplicity makes them easily codified and automated in rule-based systems. But rule-based systems often exhibit "brittleness": they break down when faced with situations not contemplated by the designers of the explicit rules. Many workflows, by contrast, are far less predictable, marked by unexpected twists and turns and a range of possible outcomes; these workflows require special handling and nuanced judgment that make rule-based automation challenging. But gen AI agent systems, because they are based on foundation models, have the potential to handle a wide variety of less-likely situations for a given use case, adapting in real time to perform the specialized tasks required to bring a process to completion.

Agent systems can be directed with natural language. Currently, to automate a use case, it first must be broken down into a series of rules and steps that can be codified. These steps are typically translated into computer code and integrated into software systems, an often costly and laborious process that requires significant technical expertise. Because agentic systems use natural language as a form of instruction, even complex workflows can be encoded more quickly and easily. What's more, the process can potentially be done by nontechnical employees, rather than software engineers. This makes it easier to integrate subject matter expertise, grants wider access to gen AI and AI tools, and eases collaboration between technical and nontechnical teams.

Agents can work with existing software tools and platforms. In addition to analyzing and generating knowledge, agent systems can use tools and communicate across a broader digital ecosystem. For instance, an agent can be directed to work with software applications (such as plotting and charting tools), search the web for information, collect and compile human feedback, and even leverage additional foundation models. Digital-tool use is both a defining characteristic of agents (it's one way that they can act in the world) and a way in which their gen AI capabilities can uniquely be brought to bear. Foundation models can learn how to interface with tools, whether through natural language or other interfaces. Without foundation models, these capabilities would require extensive manual efforts to integrate systems (for example, using extract, transform, and load tools) or tedious manual efforts to collate outputs from different software systems.
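As a rough illustration of tool use, the sketch below assumes the foundation model emits a structured tool call (a JSON object naming a tool and its arguments) that the agent runtime parses and dispatches to a registered function. The model is stubbed out with a fixed string, and the tool names `search_web` and `plot_chart` are hypothetical placeholders, not any specific vendor's API.

```python
import json
from typing import Any, Callable, Dict

# Registry mapping tool names to plain Python callables.
# Both tools below are hypothetical stand-ins for real integrations.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(name: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Decorator that registers a function as a callable tool."""
    def register(fn: Callable[..., Any]) -> Callable[..., Any]:
        TOOLS[name] = fn
        return fn
    return register


@tool("search_web")
def search_web(query: str) -> str:
    # Placeholder: a real agent would call a search API here.
    return f"results for {query!r}"


@tool("plot_chart")
def plot_chart(values: list) -> str:
    # Placeholder: a real agent would call a charting library here.
    return f"chart with {len(values)} points"


def dispatch(model_output: str) -> Any:
    """Parse a JSON tool call emitted by the model and run the named tool."""
    call = json.loads(model_output)
    name, args = call["tool"], call.get("arguments", {})
    if name not in TOOLS:
        # Unknown tools are rejected rather than guessed at.
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**args)


# Stubbed model output; in practice this string would come from an LLM.
stub_output = '{"tool": "plot_chart", "arguments": {"values": [3, 1, 4]}}'
print(dispatch(stub_output))  # chart with 3 points
```

The design choice worth noting is that the model only proposes a call; the runtime decides whether the named tool exists and actually executes it, which also makes the runtime a natural place to enforce access controls.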

How gen AI-enabled agents could work

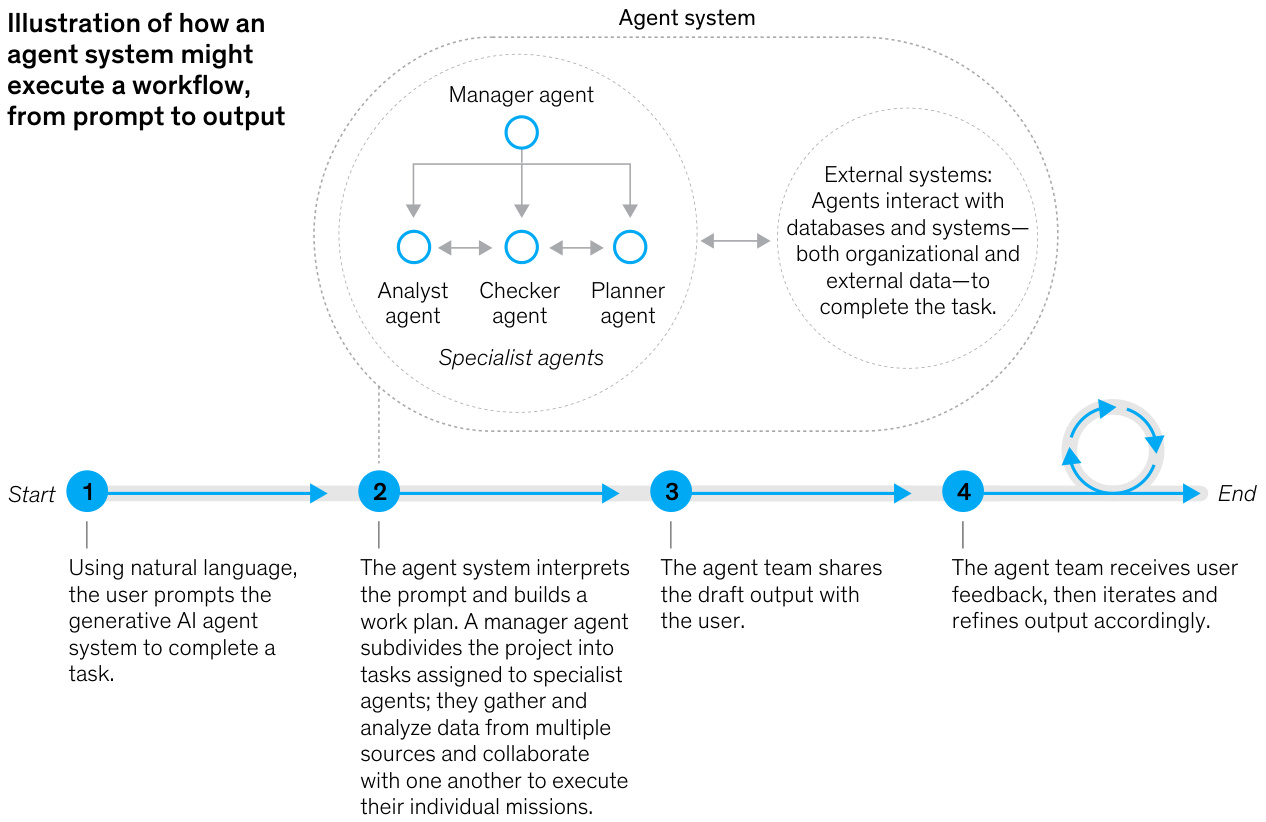

Agents can support high-complexity use cases across industries and business functions, particularly for workflows involving time-consuming tasks or requiring various specialized types of qualitative and quantitative analysis.Agents do this by recursively breaking down complex workflows and performing subtasks across specialized instructions and data sources to reach the desired goal. The process generally follows these four steps (Exhibit 1):

AI智能体 (AI Agent) 能够支持跨行业和业务职能的高复杂度用例,尤其适用于涉及耗时任务或需要各种专业定性和定量分析的工作流。其实现方式是通过递归分解复杂工作流,并基于专业指令和数据源执行子任务以达成目标。该过程通常遵循以下四个步骤 (图 1):

User provides instruction:A user interacts with the Al system by giving a natural-language prompt, much like one would instruct a trusted employee. The system identifies the intended use case, asking the user for additional clarification when required.

用户通过自然语言提示与AI系统交互,类似于指导可信员工的方式。系统会识别预期用例,并在需要时要求用户提供额外说明。

Agent system plans, allocates, and executes work: The agent system processes the prompt into a workflow, breaking it down into tasks and subtasks, which a manager subagent assigns to other specialized sub agents.These sub agents, equipped with necessary domain knowledge and tools, draw on prior"experiences" and codified domain expertise, coordinating with each other and using organizational data and systems to execute these assignments.

AI智能体系统规划、分配并执行工作:AI智能体系统将提示处理为工作流,将其分解为任务和子任务,由管理子智能体分配给其他专业子智能体。这些子智能体具备必要的领域知识和工具,借鉴先前的"经验"和系统化的领域专长,相互协调并利用组织数据和系统来执行这些任务。

Agent system iterative ly improves output: Throughout the process, the agent may request additional user input to ensure accuracy and relevance. The process may conclude with the agent providing final output to the user, iterating on any feedback shared by the user.

智能体系统迭代改进输出:在整个过程中,智能体可能会请求额外的用户输入以确保准确性和相关性。该过程可能以智能体向用户提供最终输出作为结束,并根据用户反馈进行迭代改进。

Agents enabled by generative Al soon could function as hyper efficient virtual coworkers.

生成式AI驱动的智能体即将成为高效虚拟同事

McKinsey & Company

Agent executes action: The agent executes any necessary actions in the world to fully complete the user-requested task.
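The four steps can be sketched as a small orchestration loop. This is a minimal, hypothetical Python sketch rather than any particular agent framework: `plan` stands in for an LLM-driven manager subagent, each specialist is an ordinary function, and the user's feedback is simulated by a callback that returns an empty string once the user is satisfied.

```python
from typing import Callable, Dict, List, Tuple

# Specialized subagents keyed by role. Each takes a task description and
# returns a draft result; real subagents would call a foundation model.
Specialist = Callable[[str], str]

SPECIALISTS: Dict[str, Specialist] = {
    "research": lambda task: f"findings on {task}",
    "writing": lambda task: f"draft of {task}",
}


def plan(instruction: str) -> List[Tuple[str, str]]:
    """Manager subagent (stubbed): split an instruction into (role, task) pairs."""
    return [("research", instruction), ("writing", instruction)]


def run_workflow(
    instruction: str,
    feedback: Callable[[str], str],  # returns "" when the user is satisfied
    max_rounds: int = 3,
) -> str:
    # Step 1: the user's natural-language instruction arrives.
    request = instruction
    output = ""
    for _ in range(max_rounds):
        # Step 2: plan, allocate subtasks to specialists, and execute them.
        results = [SPECIALISTS[role](task) for role, task in plan(request)]
        output = "; ".join(results)
        # Step 3: iterate on user feedback until none remains.
        note = feedback(output)
        if not note:
            break
        request = f"{instruction} ({note})"
    # Step 4: act on the final output (in this sketch, simply return it).
    return output


# Simulated user who asks for one revision, then approves.
replies = iter(["focus on Q3", ""])
print(run_workflow("sales summary", lambda _: next(replies)))
# findings on sales summary (focus on Q3); draft of sales summary (focus on Q3)
```

The `max_rounds` cap is a deliberate choice in this sketch: it bounds how long the agent can keep iterating if feedback never converges.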

Art of the possible: Three potential use cases

What do these kinds of systems mean for businesses? The following three hypothetical use cases offer a glimpse of what could be possible in the not-too-distant future.

Use case 1: Loan underwriting

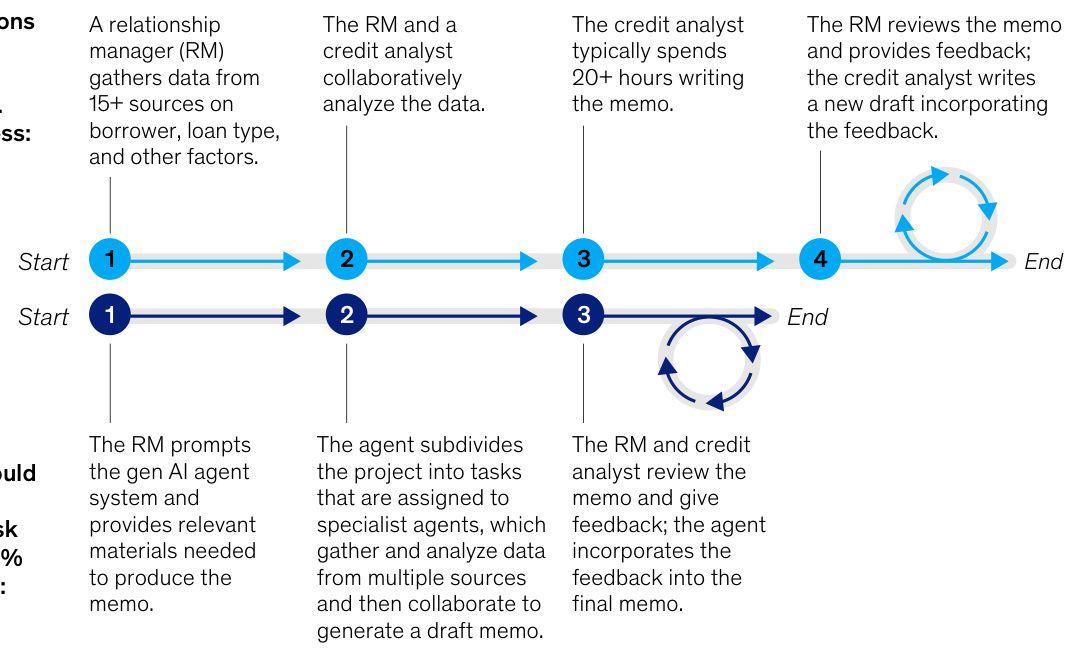

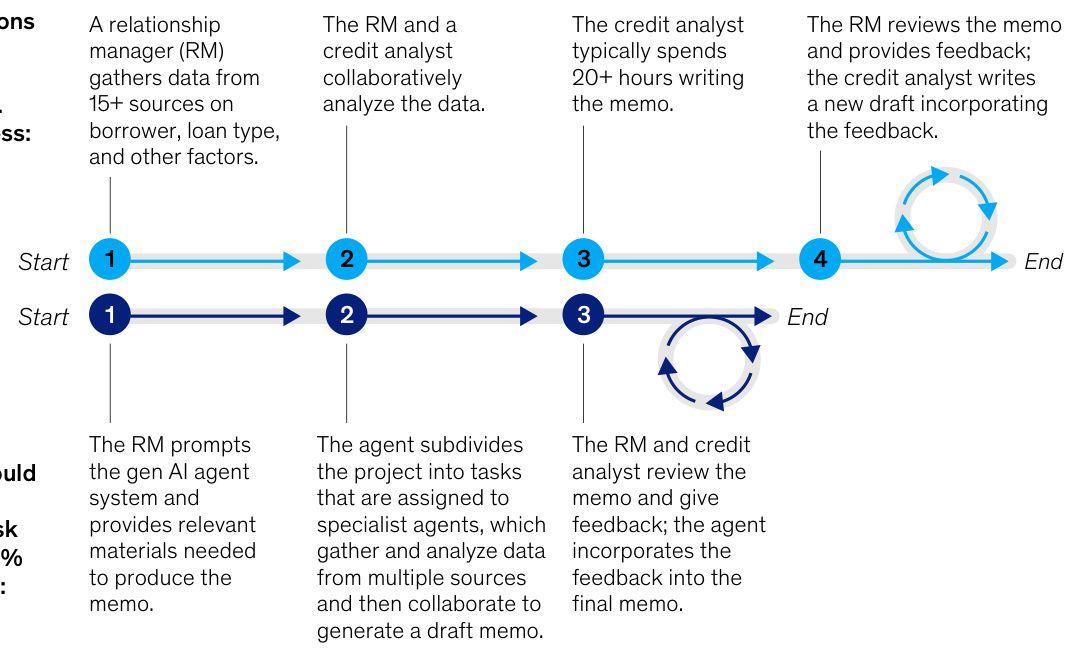

Financial institutions prepare credit-risk memos to assess the risks of extending credit or a loan to a borrower. The process involves compiling, analyzing, and reviewing various forms of information pertaining to the borrower, loan type, and other factors. Given the multiplicity of credit-risk scenarios and analyses required, this tends to be a time-consuming and highly collaborative effort, requiring a relationship manager to work with the borrower, stakeholders, and credit analysts to conduct specialized analyses, which are then submitted to a credit manager for review and additional expertise.

Potential agent-based solution: An agentic system, comprising multiple agents that each assume a specialized, task-based role, could potentially be designed to handle a wide range of credit-risk scenarios. A human user would initiate the process by using natural language to provide a high-level work plan of tasks with specific rules, standards, and conditions. Then this team of agents would break down the work into executable subtasks.

One agent, for example, could act as the relationship manager to handle communications between the borrower and the financial institution. An executor agent could compile the necessary documents and forward them to a financial analyst agent that would, say, examine debt from cash flow statements and calculate relevant financial ratios, which would then be reviewed by a critic agent to identify discrepancies and errors and provide feedback. This process of breakdown, analysis, refinement, and review would be repeated until the final credit memo is completed (Exhibit 2).
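The analyst-and-critic loop described above can be illustrated with a deliberately simplified sketch. The figures, the choice of ratios (debt-to-EBITDA and a debt service coverage ratio computed from operating cash flow), and the critic's checks are illustrative assumptions, not the authors' method or any institution's credit policy. To make the feedback loop visible, the stubbed analyst only computes the coverage ratio after the critic asks for it; in a real system both roles would be LLM-backed agents.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Ratios the (hypothetical) credit policy requires in every memo.
REQUIRED = ("debt_to_ebitda", "dscr")


@dataclass
class Memo:
    ratios: Dict[str, float] = field(default_factory=dict)
    issues: List[str] = field(default_factory=list)


def analyst(financials: Dict[str, float], memo: Memo) -> Memo:
    """Financial analyst agent (stubbed): compute ratios, addressing prior feedback."""
    ratios = {"debt_to_ebitda": financials["total_debt"] / financials["ebitda"]}
    # Only add the coverage ratio once the critic flags it, to show the loop.
    if any("dscr" in issue for issue in memo.issues):
        ratios["dscr"] = financials["operating_cash_flow"] / financials["debt_service"]
    return Memo(ratios=ratios)


def critic(memo: Memo) -> List[str]:
    """Critic agent (stubbed): flag missing or implausible ratios."""
    issues = [f"missing {name}" for name in REQUIRED if name not in memo.ratios]
    issues += [f"negative {name}" for name, value in memo.ratios.items() if value < 0]
    return issues


def draft_memo(financials: Dict[str, float], max_rounds: int = 5) -> Memo:
    """Alternate analysis and review until the critic has no remaining issues."""
    memo = Memo()
    for _ in range(max_rounds):
        memo = analyst(financials, memo)
        memo.issues = critic(memo)
        if not memo.issues:
            break
    return memo


financials = {"total_debt": 1200.0, "ebitda": 400.0,
              "operating_cash_flow": 250.0, "debt_service": 200.0}
print(draft_memo(financials).ratios)  # {'debt_to_ebitda': 3.0, 'dscr': 1.25}
```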

Unlike simpler gen AI architectures, agents can produce high-quality content, reducing review cycle times by 20 to 60 percent. Agents are also able to traverse multiple systems and make sense of data pulled from multiple sources. Finally, agents can show their work: credit analysts can quickly drill into any generated text or numbers, accessing the complete chain of tasks and the data sources used to produce the generated insights. This facilitates rapid verification of outputs.
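One way to let analysts drill into a generated number is to record, for every step, which agent and task produced it, which sources it drew on, and which earlier steps it depended on. The sketch below assumes a simple in-memory, append-only trace; the `TraceStep` and `Trace` names and the source labels are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class TraceStep:
    task: str
    agent: str
    sources: Tuple[str, ...]
    output: str


class Trace:
    """Append-only log linking each generated value to the steps behind it."""

    def __init__(self) -> None:
        self.steps: List[TraceStep] = []
        self.parents: Dict[int, Tuple[int, ...]] = {}

    def record(self, step: TraceStep, parents: Tuple[int, ...] = ()) -> int:
        """Store a step and the indices of the steps it depended on."""
        self.steps.append(step)
        idx = len(self.steps) - 1
        self.parents[idx] = parents
        return idx

    def lineage(self, idx: int) -> List[TraceStep]:
        """Return the full chain of steps (oldest first) behind step `idx`."""
        seen, stack = set(), [idx]
        while stack:
            i = stack.pop()
            if i in seen:
                continue
            seen.add(i)
            stack.extend(self.parents[i])
        # Indices grow over time, so sorting gives chronological order.
        return [self.steps[i] for i in sorted(seen)]


trace = Trace()
doc = trace.record(TraceStep("compile documents", "executor",
                             ("cash flow statement",), "statements bundle"))
ratio = trace.record(TraceStep("compute debt ratio", "analyst",
                               ("statements bundle",), "debt/EBITDA = 3.0"), parents=(doc,))
greeting = trace.record(TraceStep("draft greeting", "relationship manager", (), "Dear client"))
memo = trace.record(TraceStep("write memo", "writer", (), "Leverage is moderate"), parents=(ratio,))

for step in trace.lineage(memo):
    print(step.agent, "->", step.task)
# executor -> compile documents
# analyst -> compute debt ratio
# writer -> write memo
```

Note that the unrelated greeting step does not appear in the memo's lineage, which is what keeps the drill-down focused.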

Use case 2: Code documentation and modernization

Legacy software applications and systems at large enterprises often pose security risks and can slow the pace of business innovation. But modernizing these systems can be complex, costly, and time-intensive, requiring engineers to review and understand millions of lines of the older codebase, manually document its business logic, and then translate this logic to an updated codebase and integrate it with other systems.

Exhibit 2: Illustrative use case: credit-risk memos. Financial institutions often spend 1 to 4 weeks creating a credit-risk memo; generative AI (gen AI) agents could cut the time spent on creating credit-risk memos by 20 to 60 percent.

Potential agent-based solution: AI agents have the potential to significantly streamline this process. A specialized agent could be deployed as a legacy-software expert, analyzing old code and documenting and translating various code segments. Concurrently, a quality assurance agent could critique this documentation and produce test cases, helping the AI system to iteratively refine its output and ensure its accuracy and adherence to organizational standards. The repeatable nature of this process, meanwhile, could produce a flywheel effect, in which components of the agent framework are reused for other software migrations across the organization, significantly improving productivity and reducing the overall cost of software development.
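A quality assurance agent's test cases are most useful when they check that modernized code behaves like the legacy code it replaces. The sketch below assumes both versions can be called from Python; `legacy_interest`, `modern_interest`, and `naive_interest` are hypothetical routines invented for illustration, and the generated inputs play the role of agent-produced test cases. The naive translation shows a classic migration bug: Python's built-in `round()` rounds halves to even, whereas the legacy integer arithmetic rounds halves up.

```python
import random
from decimal import ROUND_HALF_UP, Decimal
from typing import Callable, List, Tuple

Case = Tuple[int, int]  # (amount in cents, rate in basis points)


def legacy_interest(cents: int, rate_bp: int) -> int:
    """Hypothetical legacy routine: integer arithmetic, rounds halves up."""
    return (cents * rate_bp + 5000) // 10000


def modern_interest(cents: int, rate_bp: int) -> int:
    """Hypothetical faithful translation using exact decimal arithmetic."""
    value = Decimal(cents) * Decimal(rate_bp) / Decimal(10000)
    return int(value.quantize(Decimal(1), rounding=ROUND_HALF_UP))


def naive_interest(cents: int, rate_bp: int) -> int:
    """Plausible but wrong translation: round() uses banker's rounding."""
    return round(cents * rate_bp / 10000)


def generate_cases(n: int, seed: int = 0) -> List[Case]:
    """Edge cases around the .5 boundary, plus reproducible random cases."""
    rng = random.Random(seed)
    cases = [(1, 5000), (3, 5000), (0, 0)]
    cases += [(rng.randint(0, 10**7), rng.randint(0, 2000)) for _ in range(n)]
    return cases


def find_mismatches(reference: Callable[[int, int], int],
                    candidate: Callable[[int, int], int],
                    cases: List[Case]) -> List[Tuple[Case, int, int]]:
    """Return (inputs, expected, actual) for every case where outputs differ."""
    return [(args, reference(*args), candidate(*args))
            for args in cases if reference(*args) != candidate(*args)]


cases = generate_cases(500)
print(find_mismatches(legacy_interest, modern_interest, cases))      # []
print(find_mismatches(legacy_interest, naive_interest, cases)[:1])   # [((1, 5000), 1, 0)]
```

A mismatch list like this is the kind of concrete feedback a QA agent could hand back to the translating agent for the next iteration.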

Use case 3: Online marketing campaign creation

Designing, launching, and running an online marketing campaign tends to involve an array of different software tools, applications, and platforms. And the workflow for an online marketing campaign is highly complex. Business objectives and market trends must be translated into creative campaign ideas. Written and visual material must be created and customized for different segments and geographies. Campaigns must be tested with user groups across various platforms. To accomplish these tasks, marketing teams often use different forms of software and must move outputs from one tool to another, which is often tedious and time-consuming.

Potential agent-based solution: Agents can help connect this digital marketing ecosystem. For example, a marketer could describe targeted users, initial ideas, intended channels, and other parameters in natural language. Then an agent system, with assistance from marketing professionals, would help develop, test, and iterate different campaign ideas. A digital marketing strategy agent could tap online surveys, analytics from customer relationship management solutions, and other market research platforms to gather insights, then use multimodal foundation models to craft strategies. Agents for content marketing, copywriting, and design could then build tailored content, which a human evaluator would review for brand alignment. These agents would collaborate to iterate and refine outputs and align toward an approach that optimizes the campaign's impact while minimizing brand risk.

How should business leaders prepare for the age of agents?

Although agent technology is quite nascent, increasing investments in these tools could result in agentic systems achieving notable milestones and being deployed at scale over the next few years. As such, it is not too soon for business leaders to learn more about agents and consider whether some of their core processes or business imperatives can be accelerated with agentic systems and capabilities. This understanding can inform future road map planning and scenario building and help leaders stay at the leading edge of innovation readiness. Once potential use cases have been identified, organizations can begin exploring the growing agent landscape, using APIs, toolkits, and libraries (for example, Microsoft AutoGen, Hugging Face, and LangChain) to start understanding what is relevant.

To prepare for the advent of agentic systems, organizations should consider these three factors, which will be key if such systems are to deliver on their potential:

Codification of relevant knowledge: Implementing complex use cases will likely require organizations to define and document business processes as codified workflows that are then used to train agents. Likewise, organizations might consider how they can capture subject matter expertise, which will be used to instruct agents in natural language, thus streamlining complex processes.

Strategic tech planning: Organizations will need to organize their data and IT systems to ensure that agent systems can interface effectively with existing infrastructure. That includes capturing user interactions for continuous feedback and creating the flexibility to integrate future technologies without disrupting existing operations.

Human-in-the-loop control mechanisms: As gen AI agents begin interacting with the real world, control mechanisms are essential to balance autonomy and risk (see sidebar, "Understanding the unique risks posed by agentic systems"). Humans must validate outputs for accuracy, compliance, and fairness; work with subject matter experts to maintain and scale agent systems; and create a learning flywheel for ongoing improvement. Organizations should start considering under what conditions and how such human-in-the-loop mechanisms should be deployed.

Understanding the unique risks posed by agentic systems

Large language models (LLMs), as we now know, are prone to mistakes and hallucinations. Because agent systems process sequences of LLM-derived outputs, a hallucination within one of these outputs could have cascading effects if protections are not in place. Additionally, because agent systems are designed to operate with autonomy, business leaders must consider additional oversight mechanisms and guardrails. While it is difficult to fully anticipate all the risks that will be introduced with agents, here are some that should be considered.
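Because each agent consumes the previous agent's output, one defensive pattern is to validate every intermediate result and stop the chain at the first failure instead of letting a bad value propagate. The sketch below assumes each step is paired with a validator; the two steps (a stand-in for an LLM extraction step and a fee calculation) and their checks are hypothetical examples.

```python
from typing import Any, Callable, List, Tuple

# (step name, transformation, validator for the transformation's output)
Step = Tuple[str, Callable[[Any], Any], Callable[[Any], bool]]


class StepRejected(Exception):
    """Raised when a step's output fails validation."""


def run_chain(steps: List[Step], value: Any) -> Any:
    """Run steps in order, halting as soon as any output fails its check."""
    for name, step, is_valid in steps:
        value = step(value)
        if not is_valid(value):
            # Stop here so an invalid output never reaches downstream steps.
            raise StepRejected(f"{name} produced invalid output: {value!r}")
    return value


steps: List[Step] = [
    # Stand-in for an LLM step that extracts an amount from free text.
    ("extract_amount", lambda text: float(text.split("$")[1]), lambda x: x > 0),
    # Deterministic follow-up step with a sanity ceiling on its result.
    ("apply_fee", lambda amount: round(amount * 1.02, 2), lambda x: x < 10_000),
]

print(run_chain(steps, "Invoice total: $250"))  # 255.0
```

Validators cannot catch every hallucination, but they turn a silent cascade into an explicit, logged failure that a human can review.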

Potentially harmful outputs

Large language models are not always accurate, sometimes providing incorrect information or performing actions with undesirable consequences. These risks are heightened as generative AI (gen AI) agents independently carry out tasks using digital tools and data in highly variable scenarios. For instance, an agent might approve a high-risk loan, leading to financial loss, or it might make an expensive nonrefundable purchase on a customer's behalf.

Mitigation strategy: Organizations should implement robust accountability measures, clearly defining the responsibilities of both agents and humans while ensuring that agent outputs can be explained and understood. This could be accomplished by developing frameworks to manage agent autonomy (for example, limiting agent actions based on use case complexity) and ensuring human oversight (for example, verifying agent outputs before execution and conducting regular audits of agent decisions). Additionally, transparency and traceability mechanisms can help users understand the agent's decision-making process and identify potentially fraught issues early.
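The autonomy framework described above can be approximated with a policy that lets an agent execute low-risk actions on its own but routes higher-risk ones to a human reviewer, logging every decision for later audits. The two risk tiers, the 500-unit threshold, and the `approve` callback are assumptions made for illustration; a real policy would weigh far more than the amount involved.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, List


class Risk(Enum):
    LOW = 1
    HIGH = 2


@dataclass
class Action:
    kind: str
    amount: float


def classify(action: Action, limit: float = 500.0) -> Risk:
    """Hypothetical policy: any action above `limit` counts as high risk."""
    return Risk.HIGH if action.amount > limit else Risk.LOW


def execute(action: Action, approve: Callable[[Action], bool],
            audit_log: List[str]) -> bool:
    """Run an action, asking a human first when the policy deems it high risk."""
    risk = classify(action)
    if risk is Risk.HIGH and not approve(action):
        audit_log.append(f"BLOCKED {action.kind} {action.amount:.2f}")
        return False
    who = "human-approved" if risk is Risk.HIGH else "auto"
    # A real system would perform the action here; this sketch only logs it.
    audit_log.append(f"EXECUTED {action.kind} {action.amount:.2f} ({who})")
    return True


def deny_all(action: Action) -> bool:
    """Stand-in for a human reviewer who rejects every request."""
    return False


log: List[str] = []
execute(Action("book_hotel", 180.0), deny_all, log)
execute(Action("nonrefundable_flight", 2400.0), deny_all, log)
print(log)
# ['EXECUTED book_hotel 180.00 (auto)', 'BLOCKED nonrefundable_flight 2400.00']
```

The audit log doubles as the raw material for the regular reviews of agent decisions that the mitigation strategy calls for.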

Misuse of tools

With their ability to access tools and data, agents could be dangerous if intentionally misused. Agents, for example, could be used to develop vulnerable code, create convincing phishing scams, or steal sensitive information.

Mitigation strategy: For potentially high-risk scenarios, organizations should build in guardrails (for example, access controls, limits on agent actions) and create closed environments for agents (for instance, limit the agent's access to certain tools and data sources). Additionally, organizations should apply real-time monitoring of agent activities with automated alerts for suspicious behavior. Regular audits and compliance checks can ensure that guardrails remain effective and relevant.
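Access controls, limits on agent actions, and real-time monitoring can all live in the layer that sits between agents and their tools. In this sketch, all agent names, tool names, and limits are hypothetical: each agent has an allowlist of tools and a per-session call budget, and any denied or excessive call is refused and raises an alert through a pluggable callback (here it simply appends to a list).

```python
from collections import Counter
from typing import Callable, Dict, List, Set


class ToolGateway:
    """Mediates every tool call: enforces allowlists and budgets, emits alerts."""

    def __init__(self, allowlist: Dict[str, Set[str]], max_calls: int,
                 alert: Callable[[str], None]) -> None:
        self.allowlist = allowlist
        self.max_calls = max_calls
        self.alert = alert
        self.calls: Counter = Counter()

    def permit(self, agent: str, tool: str) -> bool:
        """Return True if `agent` may call `tool` now; alert and refuse otherwise."""
        if tool not in self.allowlist.get(agent, set()):
            self.alert(f"{agent} attempted disallowed tool {tool}")
            return False
        self.calls[agent] += 1
        if self.calls[agent] > self.max_calls:
            self.alert(f"{agent} exceeded {self.max_calls} tool calls")
            return False
        return True


alerts: List[str] = []
gateway = ToolGateway(
    allowlist={"analyst": {"read_statements", "calculator"}},
    max_calls=2,
    alert=alerts.append,
)
print(gateway.permit("analyst", "calculator"))       # True
print(gateway.permit("analyst", "send_email"))       # False (not allowlisted)
print(gateway.permit("analyst", "read_statements"))  # True
print(gateway.permit("analyst", "calculator"))       # False (budget exhausted)
print(alerts)
# ['analyst attempted disallowed tool send_email', 'analyst exceeded 2 tool calls']
```

Agents missing from the allowlist get no tools at all, so the default is closed rather than open.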

Insufficient or excessive human-agent trust

Just as in relationships with human coworkers, interactions between humans and AI agents are based on trust. If users lack faith in agentic systems, they might scale back the human-agent interactions and information sharing that agentic systems require to learn and improve. Conversely, as agents become more adept at emulating humanlike behavior, some users could place too much trust in them, ascribing to them human-level understanding and judgment. This can lead to users uncritically accepting recommendations or giving agents too much autonomy without sufficient oversight.

Mitigation strategy: Organizations can manage these issues by prioritizing the transparency of agent decision making, ensuring that users are trained in the responsible use of agents, and establishing a human-in-the-loop process to manage agent behavior. Human oversight of agent processes is key to ensuring that users maintain a balanced perspective, critically evaluate agent performance, and retain final authority and accountability for agent actions. Furthermore, agent performance should be evaluated by tying agents' activities to concrete outcomes (for example, customer satisfaction and successful ticket completion rates).
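Tying agent activity to concrete outcomes can start with something as simple as aggregating ticket results per agent. The record fields and the 1-to-5 satisfaction scale below are hypothetical; a real deployment would pull them from its ticketing or customer relationship management system.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List, Optional


@dataclass
class Ticket:
    agent: str
    resolved: bool
    csat: Optional[int] = None  # customer satisfaction score (1-5), if surveyed


def scorecard(tickets: List[Ticket]) -> Dict[str, Dict[str, float]]:
    """Per-agent completion rate and average satisfaction over surveyed tickets."""
    by_agent: Dict[str, List[Ticket]] = {}
    for ticket in tickets:
        by_agent.setdefault(ticket.agent, []).append(ticket)
    report: Dict[str, Dict[str, float]] = {}
    for agent, items in by_agent.items():
        scores = [t.csat for t in items if t.csat is not None]
        report[agent] = {
            "completion_rate": sum(t.resolved for t in items) / len(items),
            # NaN signals "no survey data" rather than a misleading zero.
            "avg_csat": round(mean(scores), 2) if scores else float("nan"),
        }
    return report


tickets = [
    Ticket("billing_agent", True, 5),
    Ticket("billing_agent", True, 4),
    Ticket("billing_agent", False, 2),
    Ticket("billing_agent", True),
]
print(scorecard(tickets))
# {'billing_agent': {'completion_rate': 0.75, 'avg_csat': 3.67}}
```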

In addition to addressing these potential risks, organizations should consider the broader issues raised by gen AI agents:

Value alignment: Because agents are akin to coworkers, their actions should embody organizational values. What values should agents embody in their decisions? How can agents be regularly evaluated and trained to align with those values?

Anthropomorphism: As agents increasingly have humanlike capabilities, users could develop an overreliance on them or mistakenly believe that AI assistants are fully aligned with their own interests and values. To what extent should humanlike characteristics be incorporated into the design of agents? What processes can be created to enable real-time detection of potential harms in human-agent interactions?

McKinsey's most recent "State of AI" survey found that more than 72 percent of companies surveyed are deploying AI solutions, with a growing interest in gen AI. Given that activity, it would not be surprising to see companies begin to incorporate frontier technologies such as agents into their planning processes and future AI road maps. Agent-driven automation remains an exciting proposition, with the potential to revolutionize whole industries, bringing a new speed of action to work.

That said, the technology is still in its early stages, and much development is required before its full capabilities can be realized. The increased complexity and autonomy of these systems pose a host of challenges and risks. And if deploying AI agents is akin to adding new workers to the team, then, just like their human team members, agents will require considerable testing, training, and coaching before they can be trusted to operate independently. But even in these earliest of days, it's not hard to envision the expansive opportunities this new generation of virtual colleagues could unleash.

We are celebrating the 60th birthday of the McKinsey Quarterly with a yearlong campaign featuring four issues on major themes related to the future of business and society, as well as related interactives, collections from the magazine's archives, and more. This article is part of the campaign's Future of Technology issue. Sign up for the McKinsey Quarterly alert list to be notified as soon as other new Quarterly articles are published.