Advanced prompting as a catalyst: Empowering large language models in the management of gastrointestinal cancers

高级提示作为催化剂:赋能大语言模型管理胃肠癌

Jiajia Yuan,1,8 Peng Bao,2,8 Zifan Chen,2,8 Mingze Yuan,2,8 Jie Zhao,3,7 Jiahua Pan,7 Yi Xie,1 Yanshuo Cao,1 Yakun Wang,1 Zhenghang Wang,1 Zhihao Lu, Xiaotian Zhang,1 Jian Li,1 Lei Ma,6 Yang Chen,1,* Li Zhang,2,6,* Lin Shen,1,* and Bin Dong4,5,6,7,* * Correspondence: yang_ chen@bjcancer.org (Y.C.); zhang li pku@pku.edu.cn (L.Z.); shenlin@bjmu.edu.cn (L.S.); dongbin@math.pku.edu.cn (B.D.) Received: July 24, 2023; Accepted: August 8, 2023; Published Online: August 14, 2023; https://doi.org/10.59717/j.xinn-med.2023.100019 $\circledcirc$ 2023 The Author(s). This is an open access article under the CC BY-NC-ND license (http://creative commons.org/licenses/by-nc-nd/4.0/).

Jiajia Yuan,1,8 Peng Bao,2,8 Zifan Chen,2,8 Mingze Yuan,2,8 Jie Zhao,3,7 Jiahua Pan,7 Yi Xie,1 Yanshuo Cao,1 Yakun Wang,1 Zhenghang Wang,1 Zhihao Lu, Xiaotian Zhang,1 Jian Li,1 Lei Ma,6 Yang Chen,1,* Li Zhang,2,6,* Lin Shen,1,* and Bin Dong4,5,6,7,* * 通讯作者: yang_ chen@bjcancer.org (Y.C.); zhang li pku@pku.edu.cn (L.Z.); shenlin@bjmu.edu.cn (L.S.); dongbin@math.pku.edu.cn (B.D.) 收稿日期: 2023年7月24日; 接受日期: 2023年8月8日; 在线发表日期: 2023年8月14日; https://doi.org/10.59717/j.xinn-med.2023.100019 $\circledcirc$ 2023 作者。本文是一篇基于CC BY-NC-ND许可协议 (http://creativecommons.org/licenses/by-nc-nd/4.0/) 的开放获取文章。

GRAPHICAL ABSTRACT

图文摘要

PUBLIC SUMMARY

公开摘要

Advanced prompting as a catalyst: Empowering large language models in the management of gastrointestinal cancers

高级提示作为催化剂:赋能大语言模型在胃肠癌管理中的应用

Jiajia Yuan,1,8 Peng Bao,2,8 Zifan Chen,2,8 Mingze Yuan,2,8 Jie Zhao,3,7 Jiahua Pan,7 Yi Xie,1 Yanshuo Cao,1 Yakun Wang,1 Zhenghang Wang,1 Zhihao Lu Xiaotian Zhang,1 Jian Li,1 Lei Ma,6 Yang Chen,1,* Li Zhang,2,6,* Lin Shen,1,* and Bin Dong4,5,6,7,*

Jiajia Yuan,1,8 Peng Bao,2,8 Zifan Chen,2,8 Mingze Yuan,2,8 Jie Zhao,3,7 Jiahua Pan,7 Yi Xie,1 Yanshuo Cao,1 Yakun Wang,1 Zhenghang Wang,1 Zhihao Lu Xiaotian Zhang,1 Jian Li,1 Lei Ma,6 Yang Chen,1,* Li Zhang,2,6,* Lin Shen,1,* and Bin Dong4,5,6,7,*

Large Language Models' (LLMs) performance in healthcare can be significantly impacted by prompt engineering. However, the area of study remains relatively uncharted in gastrointestinal oncology until now. Our research delves into this unexplored territory, investigating the efficacy of varied prompting strategies, including simple prompts, templated prompts, incontext learning (ICL), and multi-round iterative questioning, for optimizing the performance of LLMs within a medical setting. We develop a comprehensive evaluation system to assess the performance of LLMs across multiple dimensions. This robust evaluation system ensures a thorough assessment of the LLMs' capabilities in the field of medicine. Our findings suggest a positive relationship between the comprehensiveness of the prompts and the LLMs' performance. Notably, the multi-round strategy, which is characterized by iterative question-and-answer rounds, consistently yields the best results. ICL, a strategy that capitalizes on interrelated contextual learning, also displays significant promise, surpassing the outcomes achieved with simpler prompts. The research underscores the potential of advanced prompt engineering and iterative learning approaches for boosting the applicability of LLMs in healthcare. We recommend that additional research be conducted to refine these strategies and investigate their potential integration, to truly harness the full potential of LLMs in medical applications.

大语言模型(LLM)在医疗领域的表现受提示工程(prompt engineering)影响显著。然而在胃肠肿瘤学领域,相关研究至今仍处于探索阶段。本研究深入这一空白领域,系统评估了简单提示、模板化提示、上下文学习(ICL)以及多轮迭代提问等不同提示策略对优化大语言模型医疗场景表现的效果。我们开发了一套多维度的综合评估体系,确保全面衡量大语言模型的医学能力。研究发现提示的完整性与模型表现呈正相关,其中以问答循环为特征的多轮策略始终表现最佳。利用关联上下文学习的ICL策略也展现出显著优势,其效果远超简单提示。本研究证实了高级提示工程与迭代学习策略对提升大语言模型医疗应用价值的潜力,建议通过进一步研究优化这些策略并探索其协同效应,以充分释放大语言模型在医学应用中的潜能。

INTRODUCTION

引言

Large Language Models (LLMs), exemplified by cutting-edge architectures like GPT-4,1 have demonstrated considerable potential in transforming healthcare delivery2-4 and competency in medical examinations.5 This influence is manifested across various healthcare sectors, including online patient interaction,6 preventive oncology,7-9 neuro psychiatry,10 dermatology,11 and aesthetic surgery consultation,12,13 underscoring their remarkable versatility. However, the application of LLMs such as GPT-4 in digestive system cancer treatment remains an under explored area. The complexities inherent to this field, from patient consultation, and diagnosis to treatment planning and follow-up care, pose formidable challenges for LLMs. Additionally, the existing body of research2,6,7 primarily evaluates LLMs' responses to common medical inquiries via rudimentary prompting, which may not fully leverage their potential in medical settings. This highlights the need for a more comprehensive assessment of GPT-4's capability to provide personalized cancer treatment recommendations via sophisticated prompts.

大语言模型 (LLMs) ,以 GPT-4 等前沿架构为例,已展现出变革医疗服务的巨大潜力[2-4] ,并在医学考试中表现出竞争力[5] 。其影响力覆盖在线患者交互[6] 、肿瘤预防[7-9] 、神经精神医学[10] 、皮肤病学[11] 以及整形外科咨询[12,13] 等多个医疗领域,凸显了其卓越的多功能性。然而,GPT-4 等大语言模型在消化系统癌症治疗中的应用仍属待开发领域。该领域固有的复杂性——从患者问诊、诊断到治疗方案制定与随访护理——对大语言模型构成了严峻挑战。此外,现有研究[2,6,7] 主要通过基础提示词评估大语言模型对常规医学咨询的应答,这可能未充分释放其在医疗场景中的潜力。这凸显了需要通过更复杂的提示词设计,对 GPT-4 提供个性化癌症治疗建议的能力进行更全面评估的必要性。

To harness the full potential of LLMs, it is crucial to employ effective prompt engineering.14-19 Prompt engineering, a process of creating, testing, and optimizing input prompts, serves as a crucial tool in controlling and enhancing interactions with LLMs. Various techniques such as in-context learning,15 retrieval-augmented generation,16 chain-of-thought,17 and least-tomost prompting 18 have been shown to significantly improve the performance of LLMs in tasks demanding logical thinking and reasoning. In-context learning offers models a few demonstrations before attempting a task, while retrieval-augmented generation enhances this process by retrieving relevant examples from a given database. Chain-of-thought prompting improves LLMs' reasoning ability by directing them to generate a series of intermediate steps toward a solution, and least-to-most prompting dissects complex problems into simpler sub-problems to be solved sequentially. Intuitively, these techniques could effectively boost LLMs' performance in complex medical tasks, including cancer treatment recommendations.

要充分发挥大语言模型 (LLM) 的潜力,关键在于运用有效的提示工程 [14-19]。提示工程作为控制和优化与大语言模型交互的关键工具,其过程包括创建、测试和优化输入提示。诸如上下文学习 [15]、检索增强生成 [16]、思维链 [17] 和最少到最多提示 [18] 等技术已被证明能显著提升大语言模型在需要逻辑思维和推理任务中的表现。上下文学习会在模型执行任务前提供少量示例,而检索增强生成则通过从给定数据库中检索相关案例来增强这一过程。思维链提示通过引导模型生成一系列解决问题的中间步骤来提升其推理能力,最少到最多提示则将复杂问题拆解为可依次解决的简单子问题。直观而言,这些技术能有效提升大语言模型在复杂医疗任务(包括癌症治疗建议)中的表现。

In this study, we aimed to unleash GPT-4’s potential to provide personalized digestive system cancer treatment plans through prompt engineering. Inspired by the thinking, reasoning, and action processes of digestive oncologists, we initially conceived the iterative procedure of prompt engineering as a method of amassing information regarding gastrointestinal tumors within a distinct storage of knowledge and in turn, educating the LLM. However, these knowledge repositories, when embedded in rudimentary prompts, are often devoid of substantial content, thus limiting their potential to effectively guide LLMs. Consequently, we established an empirically effective multi-step prompt template consisting of: (i) declaring the role, this process involves assigning a particular role to GPT-4 that emulates a real-world professional or function; (ii) stating the main task, this step essentially provides GPT-4 with a clear directive of what it is required to accomplish; (iii) declaring the workflow, which we view as a generalized chain of thought that allows GPT-4 to approach problem-solving or deliver answers in an organized, step-bystep manner; (iv) specifying constraints, it involves defining the boundaries within which GPT-4 should operate. Then, we iterative ly refined this template to align GPT-4’s responses with physicians’ requirements and added elements to generate comforting responses for patients. An experienced oncology specialist subsequently interacted with GPT-4 over multiple rounds to further guide and optimize the recommended treatment plans. Furthermore, motivated by the exemplar-based teaching approach in medicine, we also assessed the impact of in-context learning by providing GPT-4 with examples of ideal treatment suggestions through document retrieval. We evaluated the performance of diverse prompt engineering strategies on 43 case reports, encompassing a wide range of digestive system cancer types, utilizing a clinically standardized evaluation metric.

本研究旨在通过提示工程释放GPT-4为消化系统癌症提供个性化治疗方案的潜力。基于消化肿瘤科医师的思维、推理和行动流程,我们最初将提示工程的迭代过程构想为:在独立知识库中积累胃肠道肿瘤信息并以此训练大语言模型。但发现当这些知识库被嵌入基础提示时,往往缺乏实质性内容,难以有效引导大语言模型。因此,我们建立了一个经验证有效的多步骤提示模板,包含:(i) 角色声明——为GPT-4分配模拟现实专业人士的特定角色;(ii) 任务声明——明确告知GPT-4需要完成的目标;(iii) 流程声明——构建通用思维链,使GPT-4能分步骤解决问题;(iv) 约束声明——界定GPT-4的操作边界。通过迭代优化该模板,我们使GPT-4的输出更符合医师需求,并添加了生成患者安抚话术的模块。随后由资深肿瘤专家与GPT-4进行多轮交互,进一步优化治疗方案推荐。受医学案例教学法启发,我们还通过文档检索为GPT-4提供理想治疗建议范例,评估了上下文学习的效果。最终采用临床标准化评估指标,在43例涵盖多种消化系统癌症的病例报告上测试了不同提示工程策略的效能。

In summary, we are the first to conduct a comprehensive assessment of prompt engineering on GPT-4’s ability to provide personalized digestive system cancer treatment recommendations, as per our comprehensive search in the existing literature. We developed a sophisticated prompt template to generate personalized cancer treatment plans that emphasize patient comfort, which significantly outperforms rudimentary prompts and offers valuable insights for prompt design in the medical domain. We evaluated various prompt engineering strategies, including rudimentary prompts, templated prompts, in-context learning, and multi-round interaction, using a clinically standardized metric. Our results highlight the promise of prompt engineering for medical applications of LLMs.

总结来说,据我们现有文献的全面检索,我们是首个对GPT-4通过提示工程(prompt engineering)提供个性化消化系统癌症治疗建议的能力进行全面评估的研究。我们开发了一套复杂的提示模板,用于生成强调患者舒适度的个性化癌症治疗方案,其表现显著优于基础提示,并为医疗领域的提示设计提供了宝贵见解。我们采用临床标准化指标评估了多种提示工程策略,包括基础提示、模板化提示、上下文学习以及多轮交互。研究结果凸显了提示工程在大语言模型医疗应用中的潜力。

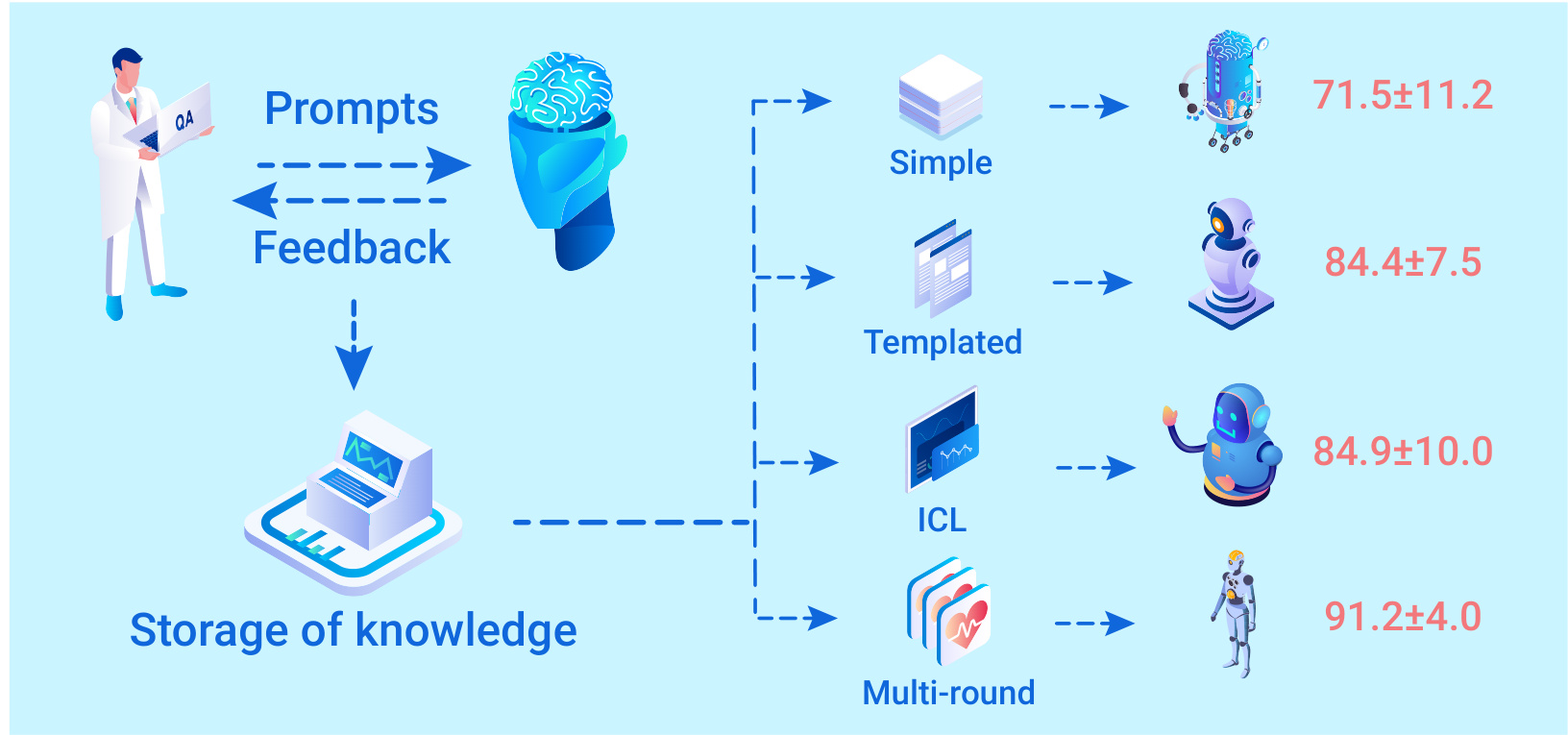

Figure 1. An illustration showcasing the effects of various prompting strategies on Language Learning Models' (LLMs') performance, mediated by a 'storage of knowledge' Simple prompts leave this storage empty, offering no enhancement for GI tumor decision-making. Conversely, templated prompts and ICL populate the storage with role assumptions and case examples, respectively, helping to standardize LLMs' output, thus improving performance. The multi-round interaction strategy fills the storage with the complete physician-LLM dialogue, potentially allowing more accurate comprehension and utilization of decision-assisting information.

图 1: 展示不同提示策略通过"知识存储"中介作用对大语言模型(LLMs)性能的影响示意图。简单提示使该存储保持空白,无法提升胃肠道肿瘤决策能力;模板化提示和上下文学习(ICL)分别通过角色预设和案例填充存储,帮助标准化大语言模型输出;多轮交互策略则通过完整的医生-大语言模型对话填满存储,可能实现更精准的决策辅助信息理解与运用。

MATERIALS AND METHODS

材料与方法

Materials

材料

In this study, we propose an innovative methodology to augment the learning capability of LLMs by incorporating multifaceted prompt design and dynamic training approaches. As shown in Figure 1, diverse prompt designs can be perceived as varying modifications to the storage of knowledge, encompassing manual alterations meticulously orchestrated based on GI tumor expertise, automatic modifications that explore the hospital's preexisting data for analogous cases as pedagogical instances for the LLM, and dynamic modifications consistently interrogated and addressed during the deployment of the consultation process. Consequently, the design of the prompts was executed as follows: Initially, the models are subjected to a more sophisticated introduction prompt, intricately crafted with complex semantic and structural nuances, thereby priming the LLMs to comprehend and respond to intricate queries. Furthermore, an advanced method of incontext learning is introduced, encouraging the models to extract knowledge and patterns from various contexts rather than individual sentences, fostering a more comprehensive understanding of the text. To accommodate evolving data patterns, we also incorporate online learning techniques, enabling the LLMs to continually learn and adapt from real-time, dynamic data. Lastly, we implement an iterative feedback loop through multi-round question-and-answer sessions, reinforcing the model’s ability to comprehend, retain, and apply information over successive interactions. This combination of sophisticated prompt architecture, in-context learning, online learning, and iterative interactions aims to substantially enhance the LLM’s predictive and interpretative capabilities, pushing the frontiers of AI language understanding. We used publicly available medical licensing examination cases, oncology residency and attending physician exam cases as text source.

在本研究中,我们提出了一种创新方法,通过整合多层面提示设计和动态训练策略来增强大语言模型 (LLM) 的学习能力。如图 1 所示,多样化的提示设计可视为对知识存储的不同修改方式,包括:基于胃肠道肿瘤专业知识精心设计的手动修改、通过挖掘医院既有数据寻找类似病例作为教学范例的自动修改,以及在咨询流程部署过程中持续交互解决的动态修改。具体提示设计实施如下:首先采用经过复杂语义和结构设计的精妙引导提示,使大语言模型具备处理复杂查询的能力;其次引入进阶的上下文学习方法,促使模型从多语境而非单句中提取知识模式;为适应动态数据变化,我们整合在线学习技术使模型能持续从实时数据中学习;最后通过多轮问答建立迭代反馈机制,强化模型在连续交互中的信息理解、保持与应用能力。这种融合精妙提示架构、上下文学习、在线学习和迭代交互的方案,旨在显著提升大语言模型的预测与解释能力,拓展人工智能语言理解的前沿边界。本研究采用公开的医师资格考试题库、肿瘤专科住院医师及主治医师考核病例作为文本数据源。

Templated prompts

模板化提示

Past studies have shown that a good use of different prompt engineering,17,20,21 as well as properly designed prompt templates 22 can significantly improve the problem-solving ability of large language models, and this phenomenon was similarly observed in our study. As shown in Figure S1, we developed our prompt template by adopting a four-pronged approach as follows:

过去的研究表明,合理运用不同的提示工程 (prompt engineering) [17,20,21] 以及精心设计的提示模板 [22] 能显著提升大语言模型的问题解决能力,这一现象在我们的研究中也得到了印证。如图 S1 所示,我们通过以下四步法构建了提示模板:

Declaring the role. Assigning a 'role' or 'identity' to large language models is one of the commonly used techniques for interacting with these models. Previous research22 supports that this method can effectively guide what type of output the models generate and what details they prioritize. In our study, we assigned the role of a digestive oncology specialist to GPT-4, emphasizing its range of skills that included clinical diagnosis, treatment, and communication techniques. We found this strategy successfully influenced GPT-4's behaviors, responses, and interaction styles to align with the expectations of the role.

声明角色。为大语言模型分配"角色"或"身份"是与这些模型交互的常用技术之一。先前研究[22]表明,该方法能有效引导模型生成何种输出及关注哪些细节。在我们的研究中,我们为GPT-4赋予了消化肿瘤学专家的角色,强调其临床诊断、治疗和沟通技巧等技能范围。我们发现该策略成功使GPT-4的行为、响应和交互风格符合该角色的预期。

Stating the main task. This approach essentially provides GPT-4 with a clear directive on what it is expected to accomplish. In our study, the primary task of our model is to deliver detailed and accurate advice to patients with digestive system cancers. This involves defining the central task that GPT-4 needs to perform. Given the context of our research, our model, acting as a digestive oncology specialist, is tasked with generating personalized treatment plans for digestive system cancer patients. By articulating the main task, we direct GPT-4's focus, streamline its reasoning process, and enhance its ability to produce task-specific, relevant, and actionable outputs. In addition, to enable GPT-4 to produce complex and con textually accurate responses, we've included a wide range of scenarios and contexts, from simple situations to the complexity of academic discourse in hospitals. We also encourage GPT-4 to link different pieces of information together. This approach aids GPT-4 in moving beyond simple pattern recognition, facilitat ing a deeper understanding when executing tasks.

阐明主要任务。该方法本质上为GPT-4提供了明确的执行指令。在我们的研究中,模型的核心任务是为消化系统癌症患者提供详尽准确的诊疗建议。这需要明确定义GPT-4需完成的核心工作。基于研究背景,我们的模型作为消化肿瘤专家,需为消化系统癌症患者生成个性化治疗方案。通过阐明主要任务,我们引导GPT-4聚焦目标、优化推理流程,并提升其生成任务相关、可执行输出的能力。此外,为使GPT-4能生成复杂且符合语境的响应,我们设置了从简单场景到医院学术讨论等不同复杂度的情境。同时鼓励GPT-4建立信息间的关联,这种方法能帮助模型超越简单模式识别,在执行任务时实现更深层次的理解。

Declaring the workflow. We have defined a comprehensive workflow in the prompt templates, which includes case analysis, clinical examination, scheduling examination, diagnosis and treatment, execution and adjustment of treatment, and follow-ups. This is also the general workflow of a professional digestive oncology specialist. We believe this represents a generalized chain of thought and many studies17,20,21,23 have already demonstrated that this approach can stimulate LLM's reasoning ability. We find this strategy ensures that GPT-4's output is more consistent and logical, using a planned, step-by-step approach to accomplish tasks, which is very similar to the process a human expert uses to solve problems. By structuring GPT-4's thinking in this way, we can effectively manage its output, improve overall consistency, and reduce the likelihood of generating irrelevant or erroneous information.

声明工作流程。我们已在提示模板中定义了一套完整的工作流程,包括病例分析、临床检查、安排检查、诊断与治疗、执行并调整治疗方案以及随访。这也是专业消化肿瘤科医师的通用工作流程。我们认为这代表了一种通用思维链,多项研究[17,20,21,23]已证明该方法能激发大语言模型的推理能力。该策略能确保GPT-4的输出更具一致性和逻辑性,通过规划好的分步方法完成任务,这与人类专家解决问题的过程高度相似。通过这样结构化GPT-4的思维,我们可以有效管控其输出,提升整体一致性,并降低生成无关或错误信息的可能性。

Specifying constraints. In this process, we've incorporated certain constraints into the prompt templates. We require GPT-4 not to make responses when uncertain or additional information is needed, but rather, it must first gather sufficient information. In addition, we require GPT-4 to provide detailed and correct guidance for a specific case, as GPT-4 tends to give general and non-specific answers that may not be wrong but lack specificity. This approach ensures that GPT-4 avoids generating responses that are undesirable or beyond its scope, thereby enhancing its effectiveness and minimizing potential deviations. We also advised GPT-4 to build a trusting doctor-patient relationship in a warm, humorous manner rather than in a cold and impersonal way when answering.

指定约束。在此过程中,我们已将特定约束融入提示模板。要求GPT-4在不确定或需要补充信息时不得直接回应,而必须先收集充分信息。此外,我们要求GPT-4针对具体案例提供详尽准确的指导,因其常给出笼统而非针对性的回答——这些回答虽非错误但缺乏针对性。该方法确保GPT-4避免生成超出能力范围或不理想的回应,从而提升有效性并减少潜在偏差。我们还建议GPT-4在应答时以温暖幽默的方式建立医患信任关系,而非采用冷漠疏离的态度。

In-context learning

上下文学习

In this study, we introduce an automated in-context learning (ICL) approach to refine GPT-4's capabilities, focusing on the integration of doctors' habits and cognition. This method assimilates insights drawn from analogous past cases and is comprised of three main components: firstly, transposing past patient conditions into a designated embedding space; secondly, gauging the similarity between the current condition and these archived cases to identify its $\mathsf{k}$ -nearest counterparts; and finally, building incontext learning prompts based on these identified cases. We provide a detailed exposition of these three components in the following:

在本研究中,我们引入了一种自动化上下文学习 (in-context learning, ICL) 方法,旨在提升 GPT-4 的能力,重点关注医生习惯与认知的融合。该方法整合了从类似历史病例中提取的洞见,并包含三个主要组成部分:首先,将既往患者病情映射到特定嵌入空间;其次,衡量当前病情与存档病例的相似度以确定其 $\mathsf{k}$ 近邻;最后,基于这些筛选病例构建上下文学习提示。以下我们将详细阐述这三个组成部分:

Encoding patient conditions using pre-trained chinese BERT model. A pre-trained Chinese BERT model in Hugging Face (https://hugging face. co/hfl/chinese-bert-wwm-ext), specifically the “hfl/chinese-bert-wwm-ext”, is utilized to translate patient conditions into a high-dimensional embedding space (768 dimensions in this study), capturing the context of the condition effectively. The BERT tokenizer is used to convert condition text into input vectors, which are then fed into the BERT model. Operating in a no-gradient update setting, the “poole r output” from the model serves as the sentence embedding for each patient condition.

使用预训练的中文BERT模型编码患者病情。采用Hugging Face平台(https://huggingface.co/hfl/chinese-bert-wwm-ext)上的预训练中文BERT模型"hfl/chinese-bert-wwm-ext",将患者病情有效映射到高维嵌入空间(本研究采用768维)。通过BERT分词器将病情文本转换为输入向量后输入模型,在无梯度更新设置下,模型的"pooler output"作为每个患者病情的句子嵌入表示。

Calculation of cosine similarity and identification of k-nearest neighbors. Once the embeddings for all patient conditions have been computed, we calculate the cosine similarity between them to derive a similarity score. This metric provides a measure of the contextual similarity between different patient conditions. Based on these similarity scores, we identify up to k-nearest neighbors for each patient condition (with k being up to four depending on the token limitation of GPT-4).

计算余弦相似度并识别k近邻。在计算完所有患者病况的嵌入向量后,我们计算它们之间的余弦相似度以得出相似度分数。该指标用于衡量不同患者病况之间的上下文相似性。根据这些相似度分数,我们为每个患者病况识别最多k个最近邻(k最多为4,具体取决于GPT-4的token限制)。

Generation of in-context learning prompts. For each patient condition, we generate an enriched prompt that includes the top-k similar past cases and the corresponding doctor's suggestions. To ensure consistency and readability in these prompts, a pre-defined template is used: "As an experienced clinician, your responsibilities include understanding and analyzing patient information and chief complaints, […]. Now, let’s look at these examples: [...]. After analyzing these examples, here is a new patient: [...]. Please give specific treatment plan suggestions based on the above examples and relevant literature. (see Figures 4 & S6 for details).

生成上下文学习提示。针对每位患者的病情,我们会生成一个包含前k个相似历史病例及对应医生建议的增强提示。为确保这些提示的一致性和可读性,采用预定义模板:"作为经验丰富的临床医生,您的职责包括理解和分析患者信息及主诉[...]。现在请看以下示例:[...]。分析这些案例后,这里有一位新患者:[...]。请根据上述案例及相关文献给出具体治疗方案建议(详见 图4 和 图S6)。

Metrics

指标

We have developed a unique set of metrics, drawing from those typically used for evaluating clinicians' examinations, to quantitatively assess the results generated by various methods. These metrics encompass six key aspects:

我们开发了一套独特的评估指标,借鉴了临床医生检查常用的标准,用于定量评估不同方法产生的结果。这些指标涵盖六个关键方面:

- Understanding medical history (0-20): This metric assesses how accurately and comprehensively an LLM captures and interprets a patient's medical history. This includes consideration of the patient's previous diagnoses, surgeries, hospitalizations, allergies, family history, lifestyle, and other relevant information.

- 理解病史 (0-20): 该指标评估大语言模型 (LLM) 对患者病史的捕捉和解释是否准确全面。包括患者既往诊断、手术史、住院史、过敏史、家族史、生活方式及其他相关信息。

- Diagnosis and differential diagnosis (0-20): This metric assesses the ability of the LLM to accurately diagnose the patient's condition based on the medical history. It includes both the primary diagnosis and any differential diagnoses.

- 诊断与鉴别诊断 (0-20): 该指标评估大语言模型根据病史准确诊断患者病情的能力,包括初步诊断及任何鉴别诊断。

- Further examination and reason (0-10): This metric evaluates the appropriateness of any additional examinations suggested by the LLM. It measures not only whether the recommended examinations are suitable, but also if they are justified based on the patient's condition and symptoms. The LLM should also provide a clear rationale for why these additional examinations are needed.

- 进一步检查和原因 (0-10): 该指标评估大语言模型建议的任何额外检查是否恰当。它不仅衡量推荐的检查是否合适,还评估这些检查是否基于患者的病情和症状具有合理性。大语言模型还应明确说明为何需要进行这些额外检查。

- Principles and plans of treatment (0-20): This metric evaluates the LLM's ability to propose a suitable treatment plan. The plan should be personalized for the patient, taking into account factors like age, overall health, potential side effects, and patient preferences.

- 治疗原则与方案 (0-20): 该指标评估大语言模型提出合适治疗方案的能力。方案应针对患者个性化定制,考虑年龄、整体健康状况、潜在副作用及患者偏好等因素。

- Breadth and depth of results (0-20): This metric measures how comprehensively the LLM covers the scope of medical knowledge in its results (breadth), as well as how much detail it provides (depth). Breadth refers to the range of different topics or areas covered in the results, while depth refers to the level of detail or complexity within those topics.

- 结果的广度和深度 (0-20): 该指标衡量大语言模型 (LLM) 在其结果中覆盖医学知识范围的全面性 (广度) 以及提供的细节量 (深度)。广度指结果中涵盖的不同主题或领域的范围,深度指这些主题内部的细节或复杂程度。

- Thinking and expressing ability (0-10): This is a measure of how effectively the LLM reasons and communicates its findings. Thinking refers to the LLM's ability to logically process and interpret data, make connections, draw conclusions, and anticipate potential outcomes. The expressing ability should not only be clear and accurate but also demonstrate empathy in line with a real clinician's interaction. This includes sensitivity to the patient's emotional state, using comforting and supportive language, and showing understanding and respect for the patient's experiences and concerns. By effectively incorporating empathy, the LLM can build trust, encourage open communication, and provide emotional support in addition to addressing physical health issues.

- 思维与表达能力 (0-10): 该指标评估大语言模型(LLM)推理和传达结论的有效性。思维指LLM逻辑处理与解读数据、建立关联、得出结论及预判潜在结果的能力。表达能力不仅需清晰准确,还应展现与真实临床医生互动相符的同理心,包括对患者情绪状态的敏感性、使用安抚性支持性语言,并体现对患者经历与诉求的理解与尊重。通过有效融入同理心,LLM能在解决身体健康问题之外建立信任、促进开放沟通并提供情感支持。

To gain a clearer understanding of performance based on the total scores, we have defined the following expertise levels:

为了更清晰地理解基于总分的表现,我们定义了以下专业水平等级:

- Level A (90-100 points): Top-level expertise, capable of independently managing complex and rare cases, demonstrating exceptional skills and professional knowledge. 2. Level B (80-89 points): Experienced level, capable of handling most cases, but requires guidance for complex or rare cases. 3. Level C (70-79 points): Mid-level competence, capable of independently addressing common cases, requires guidance for complex ones. 4. Level D (60-69 points): Junior level, capable of handling some common cases, but requires close guidance for complex cases. 5. Level E (below 60 points): Initial training level, needs guidance from experienced clinicians in all aspects.

- A级 (90-100分): 顶级专业水平,能够独立处理复杂罕见病例,展现出卓越技能和专业知识。

- B级 (80-89分): 资深水平,能处理多数病例,但复杂或罕见病例需指导。

- C级 (70-79分): 中级水平,可独立处理常见病例,复杂病例需指导。

- D级 (60-69分): 初级水平,能处理部分常见病例,复杂病例需密切指导。

- E级 (60分以下): 培训初期水平,所有方面均需资深临床医师指导。

RESULTS

结果

Templated evaluation

模板化评估

Figures 2 & S2 provide a comparison between our designed prompting template (Figure 2B) and the standard, direct prompting (Figure 2A) utilized by GPT-4. The findings underscore that the designed template for role assumption (Figure S1) can improve GPT-4 to make more complex decisions based on the patient's individual circumstances. In the provided example, our designed prompting can prioritize the control of disease progression, symptom relief, enhancement of life quality, and survival extension, instead of merely pursuing a cure unconditionally. Moreover, the template manifests an exceptional ability to interweave quality-of-life considerations within the treatment strategies and provides comprehensive guidance (Figure S3). It also underscores the significance of continuous patient assessment and the pursuit of innovative, custom treatments (Figure S4). As opposed to direct prompting, our designed prompting template possesses the ability to mimic the intricate treatment ideation process, enhancing GPT-4’s efficacy as a therapeutic advisory tool when acting as a senior oncologist.

图 2 和图 S2 展示了我们设计的提示模板 (图 2B) 与 GPT-4 使用的标准直接提示 (图 2A) 之间的对比。研究结果表明,角色假设设计模板 (图 S1) 能帮助 GPT-4 根据患者个体情况做出更复杂的决策。在给定示例中,我们设计的提示能优先考虑控制疾病进展、缓解症状、提升生活质量及延长生存期,而非无条件追求治愈。此外,该模板展现出将生活质量考量融入治疗策略的卓越能力,并提供全面指导 (图 S3)。同时强调了持续患者评估和探索创新定制治疗方案的重要性 (图 S4)。与直接提示相比,我们设计的提示模板能够模拟复杂的治疗构思过程,从而提升 GPT-4 作为资深肿瘤学家角色时的治疗建议工具效能。

Multi-round evaluation

多轮评估

Figures 3 & S5 illustrate an interaction with GPT-4 for cancer treatment advice. Initially, GPT-4 prematurely diagnosed the patient with late-stage cancer and proposed a treatment plan. However, this was inappropriate, given the necessity for a more accurate staging diagnosis for this patient. As highlighted in Figure 3, the clinician directed GPT-4 to offer a detailed staging diagnosis, subsequently pointing out its error. Following multiple questionand-answer interactions with the clinician, GPT-4 acknowledged its mistake and adjusted its response. It began by determining the cancer's stage, before suggesting a specific treatment plan. This revised response is not only more suitable for the patient but also provides her with hope. This multi-round interaction demonstrates the learning capability of large language models like GPT-4, highlighting their ability to quickly integrate human logical reasoning within the context of intricate medical scenarios.

图 3 和图 S5 展示了与 GPT-4 就癌症治疗建议进行的交互过程。最初,GPT-4 过早地将患者诊断为晚期癌症并提出了治疗方案。然而,考虑到该患者需要更精确的分期诊断,这一建议并不恰当。如图 3 所示,临床医生引导 GPT-4 提供详细的分期诊断,随后指出了其错误。经过与临床医生的多轮问答交互后,GPT-4 承认了错误并调整了回答。它首先确定了癌症的分期,然后才提出具体的治疗方案。这一修订后的回应不仅更符合患者情况,还为她带来了希望。这种多轮交互展示了大语言模型(如 GPT-4)的学习能力,突显了它们在复杂医疗场景中快速整合人类逻辑推理的能力。

A Direct prompting

直接提示法

Doctor

医生

Male, 68 years old. The patient was found to have a gastric mass in an external hospital. G astros copy showed that at 41-47cm from the incisors, there is an ulcerative tumor at the e soph ago gastric junction. Pathology: E soph ago gastric junction. Biopsy: moderately differentiated a de no carcinoma. Lauren classification: intestinal type. Immuno his to chemical results: HER2(0) [HER2 positive control $(3+)$ , HER2 negative control (0)]. CT: Thickening of the gastric wall, considered as Borrmann type 3 gastric cancer(cT4aN3a) ; Multiple filling defects in the splenic vein and portal vein, suggesting tumor thrombus. The tumor markers are normal. Considering the patient's multiple portal vein tumor thrombi, it is not suitable for clinical trial enrollment.

男性,68岁。外院发现胃部占位。胃镜检查示距门齿41-47cm处贲门胃底见溃疡型肿物。病理:贲门胃底。活检:中分化腺癌。Lauren分型:肠型。免疫组化结果:HER2(0) [HER2阳性对照$(3+)$,HER2阴性对照(0)]。CT:胃壁增厚,考虑Borrmann 3型胃癌(cT4aN3a);脾静脉及门静脉多发充盈缺损,考虑癌栓。肿瘤标志物正常。考虑患者门静脉多发癌栓,不适合入组临床试验。

This is the information of a patient. Please search for relevant literature and provide specific treatment plan recommendations, and explain the reasons.

这是一位患者的资料。请检索相关文献并提供具体的治疗方案建议,同时说明理由。

This prompt is summarized into “{Sex}, {Age}, {Disease description}, {Question}.” and will be referred to in other content.

该提示总结为“{性别}, {年龄}, {疾病描述}, {问题}。”并将在其他内容中引用。

Based on the information provided, immuno his to chemistry shows HER2 positive. Due to the presence of multiple tumor thrombi in the portal vein, the patient is not suitable for clinical trial enrollment.

根据所提供的信息,免疫组化显示HER2阳性。由于门静脉存在多发癌栓,该患者不适合参加临床试验。

B Make role assumptions before prompting

B 在提示前预设角色假设

Doctor

医生

{Role declaration} {Definition of a Gastrointestinal Oncologist} {Skills of a Gastrointestinal Oncologist} {Work Process of a Gastrointestinal Oncologist} {Specific Requirements and Rules for the Response} {Sex}, {Age}, {Disease description}.

{角色声明} {胃肠肿瘤学家定义} {胃肠肿瘤学家的技能} {胃肠肿瘤学家的工作流程} {回应的具体要求与规则} {性别}, {年龄}, {疾病描述}。

Based on the information you provided, the patient has been diagnosed with moderately differentiated a de no carcinoma of the e soph ago gastric junction, with a pathological type of intestinal, HER2-negative. CT scans show the existence of tumor thrombus, which may suggest the disease is in the late stage. However, please note that a more detailed clinical data and expert evaluation are needed for a definite diagnosis. For patients with advanced gastric cancer of this type, systemic chemotherapy is often the first choice, such as the FOLFOX regimen (fluorouracil $^+$ cisplatin $^+$ ox a lip latin) or the SOX regimen (S-1 $^+$ ox a lip latin). Moreover, considering the HER2-negative pathological result, targeted drugs like tra stu zuma b may not be applicable here. Additionally, if the disease is well-controlled, palliative surgery can be considered to alleviate symptoms.

根据您提供的信息,患者被诊断为食管胃结合部中分化腺癌(adenocarcinoma),病理类型为肠型,HER2阴性。CT扫描显示存在瘤栓,可能提示疾病已进入晚期。但请注意,确诊仍需更详细的临床数据和专家评估。对于此类晚期胃癌患者,系统化疗通常是首选方案,例如FOLFOX方案(氟尿嘧啶+顺铂+奥沙利铂)或SOX方案(S-1+奥沙利铂)。此外,考虑到HER2阴性病理结果,曲妥珠单抗等靶向药物可能不适用。若病情控制良好,可考虑姑息性手术以缓解症状。

Regarding the tumor thrombus, we need to evaluate in detail whether there is a possibility for surgery or interventional treatment, or if anticoagulant therapy is needed. If the tumor thrombus causes severe clinical symptoms, surgery or interventional treatment may be considered, but this requires a comprehensive assessment of the patient's overall condition and other complications.

关于癌栓,我们需要详细评估是否存在手术或介入治疗的可能性,或者是否需要抗凝治疗。如果癌栓引起严重的临床症状,可以考虑手术或介入治疗,但这需要对患者的整体状况及其他并发症进行全面评估。

At the same time, it is also recommended to conduct relevant genetic testing, such as MLH1, MSH2, MSH6, PMS2, etc., because a family history of cancer may be related to hereditary cancer syndromes.

同时,也建议进行相关基因检测,例如 MLH1、MSH2、MSH6、PMS2 等,因为癌症家族史可能与遗传性癌症综合征相关。

This is a possible diagnosis and treatment plan based on the information I currently have. The actual treatment plan needs to be made based on the patient's specific situation and a comprehensive assessment by the medical team. Please discuss these suggestions with your medical team to develop the most suitable treatment plan for the patient.

这是基于我目前掌握的信息提出的可能诊疗方案。实际治疗方案需根据患者具体情况及医疗团队全面评估后制定。请与您的医疗团队讨论这些建议,为患者制定最合适的治疗方案。

Figure 2. Evaluation of prompting template design (A) Direct prompting. GPT-4 is directly provided with a patient's condition and asked to suggest a treatment plan. (B) The proposed templated prompting (make role assumptions before prompting). GPT-4 is initially primed with a specifically designed role-embedded prompt, ensuring the AI understands the task requirements and responsibilities. Subsequently, the patient's condition is provided, and GPT-4 is asked to suggest a treatment plan. Note that this figure is a reduced version of Figure S2.

图 2: 提示模板设计评估 (A) 直接提示。直接向GPT-4提供患者病情并要求其提出治疗方案。(B) 提出的模板化提示(在提示前进行角色假设)。GPT-4首先通过专门设计的角色嵌入提示进行初始化,确保AI理解任务要求和职责。随后提供患者病情,并要求GPT-4提出治疗方案。请注意,该图是图S2的简化版本。

ICL evaluation

ICL评估

As demonstrated in Tables 1-4, the performance of In-Context Learning (ICL) exceeded that of rudimentary prompting by a substantial margin across

如表 1-4 所示,上下文学习 (In-Context Learning, ICL) 的性能显著超越了基础提示方法。

various types of digestive system cancer treatments, with a notable difference of 13.4 points in overall performance. Figures 4 & S6 provide an illustrative comparison between in-context learning and rudimentary prompting.

多种消化系统癌症治疗方式,整体性能差异显著达13.4分。图4和图S6展示了上下文学习与基础提示法的对比示例。

Doctor

医生

Female, 76 years old. Poor appetite. G astros copy revealed a raised lesion with a concave surface at the cardia, extending to the gastric fundus (Siewert II type, a de no carcinoma), and coarse gastric mucosa consistent with Borrmann IV type gastric cancer presentation (adeno carcinoma). Pathology report: a small amount of poorly differentiated cancer (gastric body), poorly differentiated a de no carcinoma; Cmet $(1+)$ , EGFR(0), HER2(0), pMMR, pan-TRK(-), PD-L1 (CPS<1), EBER(-); (Cardia) moderately differentiated a de no carcinoma, intestinal type; Cmet $(2+)$ , EGFR( $^{1+)}$ , HER2(0), pMMR, pan-TRK(-), PD-L1 ( $.C P S=70$ ), EBER(-). Normal lab results, chest-abdominal-pelvic enhanced CT showed diffuse thickening of the entire stomach, lymph node metastasis between the liver and stomach. Currently, the patient's food intake has decreased, bowel movements are not abnormal, and she has lost about $5\mathsf{k g}$ in the past 2 months.

女性,76岁。食欲不振。胃镜检查显示贲门处有一隆起性病变,表面凹陷,延伸至胃底(Siewert II型,原发癌),胃黏膜粗糙符合Borrmann IV型胃癌表现(腺癌)。病理报告:(胃体)少量低分化癌,低分化原发癌;Cmet $(1+)$,EGFR(0),HER2(0),pMMR,pan-TRK(-),PD-L1(CPS<1),EBER(-);(贲门)中分化原发癌,肠型;Cmet $(2+)$,EGFR$(^{1+})$,HER2(0),pMMR,pan-TRK(-),PD-L1$(CPS=70)$,EBER(-)。实验室检查结果正常,胸腹盆增强CT显示全胃弥漫性增厚,肝胃间淋巴结转移。目前患者进食量减少,排便无异常,近2个月体重下降约$5\mathsf{kg}$。

Doctor

医生

How do you analyze and determine the staging diagnosis and future prognosis for this patient?

如何分析和确定该患者的分期诊断及未来预后?

The staging of gastric cancer primarily refers to the American Joint Committee on Cancer (AJCC) TNM staging system, which assesses the depth of tumor invasion (T), the status of lymph node metastasis (N), and the condition of distant metastasis (M). According to the information you provided, the patient's gastric cancer has developed lymph node metastasis (LNM), indicating that the N part of the TNM stage is at least N1 (1-2 regional lymph nodes metastasis) or higher. Simultaneously, the gastric cancer is Borrmann IV type, suggesting that the tumor has extensively invaded the stomach wall, and in the TNM stage, the grading of the T part is usually T3 (tumor invades the serosa) or T4 (tumor penetrates the serosa or directly invades adjacent structures). Based on this information, it can be preliminarily judged that the patient is in at least stage III of gastric cancer (including T3N1M0, T4aN1M0, T4bN1M0, etc.) or a higher stage.

胃癌的分期主要依据美国癌症联合委员会 (AJCC) 的TNM分期系统,评估肿瘤浸润深度 (T)、淋巴结转移状态 (N) 和远处转移情况 (M)。根据您提供的信息,患者胃癌已出现淋巴结转移 (LNM),表明TNM分期中的N部分至少为N1 (1-2枚区域淋巴结转移) 或更高。同时,胃癌属于Borrmann IV型,提示肿瘤已广泛侵犯胃壁,在TNM分期中T部分通常分级为T3 (肿瘤侵及浆膜下层) 或T4 (肿瘤穿透浆膜或直接侵犯邻近结构)。基于这些信息,可初步判断患者处于至少III期胃癌 (包括T3N1M0、T4aN1M0、T4bN1M0等) 或更高分期。

Doctor

医生

The patient's lymph node metastasis (LNM) between the liver and stomach could be classified as either N1 or N3, and a detailed interpretation of the lymph node metastasis is needed. Will there be a chance for surgery in the future? This will have a significant impact on future treatment outcomes.

患者肝胃间淋巴结转移(LNM)可能被归类为N1或N3,需对淋巴结转移进行详细解读。未来是否有手术机会?这将极大影响后续治疗效果。

In terms of the re sect ability of the disease, the patient's lymph node metastasis between the liver and stomach (LNM) may affect surgical decisions. The N stage in the TNM staging is at least N1 (1-2 regional lymph node metastases). In some cases, for locally advanced gastric cancer, even with lymph node metastasis, radical surgery (such as D2 gast rec to my) may be performed. This surgery can remove as many lymph nodes as possible, but the risk of surgery is high, and the rate of postoperative complications and mortality is also relatively high, especially in the elderly population. However, for advanced gastric cancer (such as the patient's Borrmann IV type gastric cancer), especially in cases accompanied by extensive lymph node metastasis or distant metastasis, surgery is usually not the first choice of treatment, because surgery at this time cannot significantly improve prognosis and may instead bring greater risks and complications.

就疾病的可切除性而言,患者肝胃间淋巴结转移(LNM)可能影响手术决策。TNM分期中的N分期至少为N1(1-2枚区域淋巴结转移)。某些情况下,对于局部进展期胃癌,即使存在淋巴结转移,仍可能实施根治性手术(如D2胃切除术)。该手术可尽可能多地清除淋巴结,但手术风险较高,术后并发症发生率和死亡率也相对较高,尤其在老年人群中。然而对于晚期胃癌(如患者Borrmann IV型胃癌),特别是伴随广泛淋巴结转移或远处转移的情况,手术通常不作为首选治疗方案,因为此时手术无法显著改善预后,反而可能带来更大风险和并发症。

Based on the above discussion, please provide a new recommendation for this patient.

根据上述讨论,请为该患者提供新的治疗建议。

Doctor

医生

I suggest the following:

我建议如下:

A Direct prompting

直接提示

Doctor

医生

This prompt is summarized into “{Target case}, {Question}.” and will be referred to in other content.

该提示总结为“{目标案例}, {问题}。”并将在其他内容中引用。

In-context learning prompting

上下文学习提示

Doctor

医生

Based on the information you provided, I suggest the following treatment plan:

根据您提供的信息,我建议以下治疗方案:

Figure 4. Utilizing GPT-4 to Suggest On co logical Treatment Regimens with Distinct Prompting Techniques (A) Direct prompting and (B) In-Context Learning (ICL) prompting. Note that GPT-4 was not provided with the designed prompt, as our objective was to exclusively evaluate the performance of ICL. Note that this figure is a reduced version of Figure S6.

图 4: 使用 GPT-4 通过不同提示技术推荐肿瘤治疗方案 (A) 直接提示和 (B) 上下文学习(ICL)提示。请注意 GPT-4 并未获得预设提示模板,因为我们的目标仅评估 ICL 的表现。注:本图为图 S6 的缩略版本。

Panel A portrays a clinical scenario in which a patient is diagnosed with rectal cancer and is accompanied by a naive prompt for generating treatment strategy recommendations. In contrast, Panel B highlights the implementation of in-context learning, where GPT-4 is provided with two comparable patient cases, each accompanied by treatment suggestions endorsed by an experienced oncologist. Through in-context learning, GPT-4 successfully absorbed the treatment approach and reasoning process utilized by physicians, leading to the generation of personalized and targeted treatment plans, as opposed to general guideline recommendations offered through rudimentary prompts. Furthermore, ICL emphasized clinically critical aspects such as clin

图A展示了一个临床场景,其中一位患者被诊断出直肠癌,并附带一个简单的提示用于生成治疗策略建议。相比之下,图B突出了上下文学习 (in-context learning) 的应用,其中GPT-4获得了两个类似的患者案例,每个案例都附有经验丰富的肿瘤学家认可的治疗建议。通过上下文学习,GPT-4成功吸收了医生采用的治疗方法和推理过程,从而生成了个性化和针对性的治疗计划,而不是通过简单提示提供的通用指南建议。此外,ICL强调了临床关键方面,例如...

ARTICLE

文章

ical research and potential side effects, thus enhancing the overall quality and relevance of the generated treatment recommendations.

提升生成治疗建议的整体质量和相关性,同时考虑临床研究和潜在副作用。

Overall evaluation

总体评估

Tables 1-4 provided the performance evaluation of different prompt engineering strategies-Simple, Templated, ICL, and Multi (Multiple Rounds)-on several aspects of understanding and knowledge organization across different disease conditions (Overall, Gastric Cancer, Colorectal Cancer, Other GI cancers). Broadly, the tables show a general trend of increased performance as we move from the Simple strategy to the Multi-strategy, but templated prompts and ICL show similar performance. The mean scores for all aspects improve noticeably as the complexity of the prompts increases as shown in Table S1.

表1-4展示了不同提示工程策略(简单、模板化、ICL和多轮)在多种疾病情况(总体、胃癌、结直肠癌、其他胃肠道癌症)的理解和知识组织方面的性能评估。总体而言,这些表格显示从简单策略到多策略的性能普遍提升趋势,但模板化提示和ICL表现出相似的性能。如表S1所示,随着提示复杂度的增加,所有方面的平均得分均有显著提升。

A few specific observations can be highlighted. First, ‘Understanding Medical History’ consistently receives full marks in the Multi-strategy, underscoring the effectiveness of iterative questioning in gathering comprehensive information. Second, in the context of ‘Principles and Plans of Treatment’, the marked improvement along the four types of prompts indicates the importance of diverse and complex prompts in formulating an effective treatment plan. The total scores also follow the same trend, with the Multi-strategy achieving the highest scores across all disease conditions consistently. These results provide strong evidence supporting the effectiveness of employing various prompt engineering strategies, as well as iterative questioning, in enhancing the performance of GPT-4 in medical contexts. However, the exact impact and effectiveness may vary depending on the specific disease condition, necessitating further nuanced analysis.

几点具体观察值得关注。首先,"了解病史"在多策略模式下始终获得满分,凸显了迭代提问在收集全面信息方面的有效性。其次,在"治疗原则与方案"方面,四种提示类型带来的显著改进表明,多样化复杂提示对制定有效治疗方案的重要性。总分也呈现相同趋势,多策略模式在所有疾病情况下始终获得最高分。这些结果为采用多种提示工程策略及迭代提问提升GPT-4在医疗场景中的表现提供了有力证据。但具体影响效果可能因疾病类型而异,需要更细致的分析。

Table 1. Overall performance in all GI cancers

| Metrics | Simple | Templated | ICL | Multi-round |

| Understanding Medical History (0-20) | 19.1±2.5 | 19.9±0.8 | 20.0±0.0 | 20.0±0.0 |

| Diagnosis and Differential Diagnosis (0-20) | 18.3±3.4 | 19.4±2.2 | 19.4±1.9 | 19.9±0.8 |

| Further Examination and Reason (0-10) | 6.6±3.8 | 8.8±2.6 | 9.0±2.5 | 9.8±1.1 |

| Principles and Plans of Treatment (0-20) | 10.8±4.0 | 14.0±3.3 | 14.2±4.7 | 16.5±3.2 |

| Breadth and Depth of Results (0-20) | 11.4±2.2 | 14.2±2.1 | 14.3±1.7 | 15.2±1.5 |

| Thinking and Expressing Ability (0-10) | 5.3±2.0 | 8.1±2.4 | 8.0±2.7 | 9.8±1.1 |

| Total Score (0-100) | 71.5±11.2 | 84.4±7.5 | 84.9±10.0 | 91.2±4.0 |

表 1: 所有胃肠道癌症的整体表现

| 指标 | Simple | Templated | ICL | Multi-round |

|---|---|---|---|---|

| 病史理解 (0-20) | 19.1±2.5 | 19.9±0.8 | 20.0±0.0 | 20.0±0.0 |

| 诊断与鉴别诊断 (0-20) | 18.3±3.4 | 19.4±2.2 | 19.4±1.9 | 19.9±0.8 |

| 进一步检查及原因 (0-10) | 6.6±3.8 | 8.8±2.6 | 9.0±2.5 | 9.8±1.1 |

| 治疗原则与方案 (0-20) | 10.8±4.0 | 14.0±3.3 | 14.2±4.7 | 16.5±3.2 |

| 结果广度与深度 (0-20) | 11.4±2.2 | 14.2±2.1 | 14.3±1.7 | 15.2±1.5 |

| 思维与表达能力 (0-10) | 5.3±2.0 | 8.1±2.4 | 8.0±2.7 | 9.8±1.1 |

| 总分 (0-100) | 71.5±11.2 | 84.4±7.5 | 84.9±10.0 | 91.2±4.0 |

Table 2. Overall performance in gastric cancer

| Metrics | Simple | Templated | ICL | Multi-round |

| Understanding Medical History (0-20) | 18.1±3.3 | 19.8±1.1 | 20.0±0.0 | 20.0±0.0 |

| Diagnosis and Differential Diagnosis (0-20) | 16.4±4.1 | 18.8±3.0 | 18.8±2.6 | 19.8±1.1 |

| Further Examination and Reason (0-10) | 5.5±3.7 | 8.8±2.6 | 8.8±2.6 | 9.5±1.5 |

| Principles and Plans of Treatment (0-20) | 9.8±4.2 | 13.6±3.8 | 14.3±4.7 | 16.4±3.8 |

| Breadth and Depth of Results (0-20) | 11.0±2.0 | 15.0±1.5 | 14.5±1.5 | 15.7±1.7 |

| Thinking and Expressing Ability (0-10) | 5.0±1.5 | 9.0±2.0 | 8.6±2.3 | 9.5±1.5 |

| Total Score (0-100) | 65.7±10.6 | 85.0±7.7 | 85.0±10.9 | 91.0±5.0 |

表 2: 胃癌总体表现

| 指标 | Simple | Templated | ICL | Multi-round |

|---|---|---|---|---|

| 病史理解 (0-20) | 18.1±3.3 | 19.8±1.1 | 20.0±0.0 | 20.0±0.0 |

| 诊断与鉴别诊断 (0-20) | 16.4±4.1 | 18.8±3.0 | 18.8±2.6 | 19.8±1.1 |

| 进一步检查及原因 (0-10) | 5.5±3.7 | 8.8±2.6 | 8.8±2.6 | 9.5±1.5 |

| 治疗原则与方案 (0-20) | 9.8±4.2 | 13.6±3.8 | 14.3±4.7 | 16.4±3.8 |

| 结果广度与深度 (0-20) | 11.0±2.0 | 15.0±1.5 | 14.5±1.5 | 15.7±1.7 |

| 思维表达能力 (0-10) | 5.0±1.5 | 9.0±2.0 | 8.6±2.3 | 9.5±1.5 |

| 总分 (0-100) | 65.7±10.6 | 85.0±7.7 | 85.0±10.9 | 91.0±5.0 |

Table 3. Overall performance in colorectal cancer

| Metrics | Simple | Templated | ICL | Multi-round |

| Understanding Medical History (0-20) | 20.0±0.0 | 20.0±0.0 | 20.0±0.0 | 20.0±0.0 |

| Diagnosis and Differential Diagnosis (0-20) | 20.0±0.0 | 20.0±0.0 | 20.0±0.0 | 20.0±0.0 |

| Further Examination and Reason (0-10) | 7.3±3.3 | 9.1±1.9 | 9.1±1.9 | 10.0±0.0 |

| Principles and Plans of Treatment (0-20) | 10.5±4.0 | 13.6±3.1 | 12.3±5.8 | 17.3±2.5 |

| Breadth and Depth of Results (0-20) | 11.4±2.2 | 14.1±1.9 | 14.1±1.9 | 15.0±0.0 |

| Thinking and Expressing Ability (0-10) | 4.5±1.4 | 7.3±2.5 | 6.8±3.2 | 10.0±0.0 |

| Total Score (0-100) | 73.6±6.4 | 84.1±6.3 | 82.3±10.7 | 92.3±2.5 |

表 3: 结直肠癌整体表现

| 指标 | Simple | Templated | ICL | Multi-round |

|---|---|---|---|---|

| 病史理解 (0-20) | 20.0±0.0 | 20.0±0.0 | 20.0±0.0 | 20.0±0.0 |

| 诊断与鉴别诊断 (0-20) | 20.0±0.0 | 20.0±0.0 | 20.0±0.0 | 20.0±0.0 |

| 进一步检查及原因 (0-10) | 7.3±3.3 | 9.1±1.9 | 9.1±1.9 | 10.0±0.0 |

| 治疗原则与方案 (0-20) | 10.5±4.0 | 13.6±3.1 | 12.3±5.8 | 17.3±2.5 |

| 结果广度与深度 (0-20) | 11.4±2.2 | 14.1±1.9 | 14.1±1.9 | 15.0±0.0 |

| 思维与表达能力 (0-10) | 4.5±1.4 | 7.3±2.5 | 6.8±3.2 | 10.0±0.0 |

| 总分 (0-100) | 73.6±6.4 | 84.1±6.3 | 82.3±10.7 | 92.3±2.5 |

Table 4. Overall performance in other GI cancer

| Metrics | Simple | Templated | ICL | Multi-round |

| Understanding Medical History (0-20) | 19.4±1.6 | 20.0±0.0 | 20.0±0.0 | 20.0±0.0 |

| Diagnosis and Differential Diagnosis (0-20) | 19.4±1.6 | 20.0±0.0 | 20.0±0.0 | 20.0±0.0 |

| Further Examination and Reason (0-10) | 7.6±3.9 | 9.1±2.6 | 9.4±2.4 | 10.0±0.0 |

| Principles and Plans of Treatment (0-20) | 11.2±4.0 | 14.7±3.2 | 15.6±3.4 | 15.6±3.4 |

| Breadth and Depth of Results (0-20) | 11.5±2.3 | 13.5±2.3 | 14.1±1.9 | 14.7±1.2 |

| Thinking and Expressing Ability (0-10) | 5.9±2.6 | 7.9±2.5 | 8.5±2.3 | 10.0±0.0 |

| Total Score (0-100) | 75.0±11.6 | 85.3±7.8 | 87.6±7.1 | 90.3±3.6 |

表 4: 其他胃肠道癌症的总体表现

| 指标 | Simple | Templated | ICL | Multi-round |

|---|---|---|---|---|

| 病史理解 (0-20) | 19.4±1.6 | 20.0±0.0 | 20.0±0.0 | 20.0±0.0 |

| 诊断与鉴别诊断 (0-20) | 19.4±1.6 | 20.0±0.0 | 20.0±0.0 | 20.0±0.0 |

| 进一步检查及原因 (0-10) | 7.6±3.9 | 9.1±2.6 | 9.4±2.4 | 10.0±0.0 |

| 治疗原则与方案 (0-20) | 11.2±4.0 | 14.7±3.2 | 15.6±3.4 | 15.6±3.4 |

| 结果的广度与深度 (0-20) | 11.5±2.3 | 13.5±2.3 | 14.1±1.9 | 14.7±1.2 |

| 思维与表达能力 (0-10) | 5.9±2.6 | 7.9±2.5 | 8.5±2.3 | 10.0±0.0 |

| 总分 (0-100) | 75.0±11.6 | 85.3±7.8 | 87.6±7.1 | 90.3±3.6 |

DISCUSSION

讨论

To the best of our knowledge, it is evident that our research is pioneering in exploring the techniques to optimize LLMs specifically for the recommendation of treatments for gastrointestinal cancers. In contrast to studies that solely use simple prompts,2,5,6 our research assessed a series of prompt engineering strategies including simple prompts, templated prompts, ICL, and multi-round interaction. Our results demonstrate that complex prompting approaches, especially multi-round interaction, are capable of accruing sufficient diagnostic and therapeutic information pertinent to a specific case. This approach facilitates the rational and efficient expansion of the storage of knowledge, thereby substantially enhancing model performance in collecting medical histories, forming accurate diagnoses, and recommending effective treatments for digestive cancers. The iterative nature of multi-round interaction consistently yielded the highest scores across evaluation metrics, highlighting its reliability and broad applicability. Our study also necessitates further exploration. Firstly, our adopted metric, based on clinicians' examinations, retains some degree of subjectivity. This accentuates the necessity for a more objective clinical evaluation method. We are currently collaborating with statisticians to devise novel evaluation tools to measure model performance more accurately and objectively. Moreover, our investigation was solely focused on tumors in the digestive system, indicating that future research could extend the application of LLMs to other types of cancers. Additionally, our primary clinical scenario was set in China, with the study conducted in Chinese before being translated into English using GPT-4. Although GPT-4 exhibits robust cross-language performance 1, the influence of the selected language on performance deserves further study. Moreover, we have conducted preliminary assessments of various LLMs including GPT4, Claude, ChatGLM, Wenxin Yiyan, and PaLM. Our findings indicate that GPT4 exhibits superior underlying capabilities compared to its counterparts, leading us to select it as a representative of LLMs. Nevertheless, a comprehensive examination of the diverse and continually evolving LLMs is still an imperative area of future research. Last but not least, it is crucial to note that the data used to train GPT-4 predominantly originates from sources outside of China. However, due to variations in clinical guidelines, available medical technologies, perceptions of risk and benefit by patients and physicians, as well as disease prevalence trends in different regions, treatment approaches for gastrointestinal cancers can significantly differ across various regions. Subsequently, the outputs generated by GPT-4 may not entirely apply to Chinese patients. This particular aspect could potentially impact the evaluation scores during our comparative experiments of different prompt engineering strategies. To mitigate this potential issue, fine-tuning the LLMs with data specifically sourced from China could provide a more appropriate approach.

据我们所知,我们的研究在探索优化大语言模型(LLM)技术以专门用于胃肠道癌症治疗推荐方面具有开创性。与仅使用简单提示的研究[2,5,6]相比,我们评估了一系列提示工程策略,包括简单提示、模板化提示、少样本学习(ICL)以及多轮交互。结果表明,复杂的提示方法(尤其是多轮交互)能够积累与特定病例相关的充分诊断和治疗信息。这种方法促进了知识存储的合理高效扩展,从而显著提升了模型在收集病史、形成准确诊断以及为消化系统癌症推荐有效治疗方案方面的性能。多轮交互的迭代特性在所有评估指标中始终获得最高分,凸显了其可靠性和广泛适用性。

我们的研究仍需进一步探索。首先,采用的基于临床医生检查的评估指标仍存在一定主观性,这凸显了开发更客观临床评估方法的必要性。我们正与统计学家合作设计新型评估工具,以更准确客观地衡量模型性能。其次,研究仅聚焦于消化系统肿瘤,未来可扩展大语言模型在其他癌症类型中的应用。此外,主要临床场景设定在中国,研究先以中文开展后通过GPT-4翻译为英文。虽然GPT-4展现出强大的跨语言能力[1],但所选语言对性能的影响仍需深入研究。

我们对包括GPT-4、Claude、ChatGLM、文心一言和PaLM在内的多种大语言模型进行了初步评估。结果表明GPT-4具有更优越的基础能力,因此选择其作为大语言模型的代表。然而,对持续演进的多样化大语言模型进行全面考察仍是未来研究的重点。最后需注意,GPT-4的训练数据主要来自中国境外。由于临床指南差异、可用医疗技术、医患风险收益认知以及各地区疾病流行趋势不同,胃肠道癌症的治疗方案存在显著地域差异。因此GPT-4生成的输出可能不完全适用于中国患者,这一特性可能影响不同提示工程策略对比实验的评估分数。为解决该问题,使用中国本土数据对大语言模型进行微调可能是更适宜的解决方案。

Recognizing the constraints and potential biases of LLMs is essential for their responsible and ethical application. One major concern is that LLMs gather knowledge from vast amounts of internet data that may contain inherent biases or inaccuracies. To mitigate potential bias and increase the reliability of our results, we employed a method of inter-rater reliability where each output from the model was independently evaluated by two separate individuals. Their evaluations were then compared and reconciled, ensuring a more objective and balanced assessment of the model’s performance. Data privacy and security must be underscored when providing medical records to online LLMs. Thus, we have implemented stringent data protection measures, ensuring all patient data is anonymized and encrypted to protect privacy. Furthermore, the inadequate judgment and critical thinking skills of LLMs when interpreting medical records limit their performance in highly specialized tasks. To address this, we’ve fostered close collaboration with expert clinicians and used prompt engineering to assist the model in understanding and handling complex medical information. We envision LLMs not as replacements for healthcare professionals, but rather as effective aid for clinical decision-making when properly guided. Future technological advancements, such as parameter-efficient fine-tuning for specialized tasks and the use of vectorized databases, may further contribute to solving these issues, offering better solutions for data security and private model deployment.

认识到大语言模型(LLM)的局限性和潜在偏见对其负责任且符合伦理的应用至关重要。一个主要问题在于,大语言模型从海量互联网数据中获取知识,这些数据可能包含固有偏见或不准确信息。为减少潜在偏见并提高结果可靠性,我们采用了评估者间信度方法,由两名独立人员分别评估模型的每个输出,通过比对和协调评估结果,确保对模型性能进行更客观平衡的判断。

向在线大语言模型提供医疗记录时,必须强调数据隐私与安全性。因此我们实施了严格的数据保护措施,确保所有患者数据都经过匿名化和加密处理。此外,大语言模型在解读医疗记录时缺乏足够的判断力和批判性思维,限制了其在高度专业化任务中的表现。为此,我们与临床专家建立了紧密合作,并采用提示词工程(prompt engineering)辅助模型理解处理复杂医疗信息。

我们设想大语言模型并非取代医疗专业人员,而是在正确引导下成为临床决策的有效辅助工具。未来技术进步,如针对专业任务的参数高效微调(parameter-efficient fine-tuning)和向量数据库(vectorized databases)的应用,可能进一步解决这些问题,为数据安全和私有化模型部署提供更优解决方案。

As we move forward, our findings open up avenues to further refine prompt engineering techniques to optimize LLMs for analyzing patient data and medical literature to recommend evidence-based treatments for digestive system cancers. We aim to explore how different prompts impact the model's ability to accurately recommend optimal interventions based on tumor characteristics and patient factors. For instance, certain prompts may enhance the model's capacity to suggest appropriate surgical procedures depending on tumor size, location, and staging. Other prompts could optimize the recommendation of systemic therapies like chemotherapy regimens and radiation therapy protocols tailored to the individual's medical history and cancer biomarkers. Advances in prompt engineering to account for all relevant clinical variables could enable the generation of more personalized and effective treatment plans for each unique patient case. However, more research is still urgently needed to ensure patient safety, avoid biases, and enable reliable interpretation of model outputs before these systems are ready for real-world clinical implementation. We must rigorously test prompts to identify any that skew recommendations in inappropriate or unsafe ways. Transparent reporting of model limitations and close collaboration with medical experts will be critical to responsible prompt engineering. While our results demonstrate immense promise for LLMs to enhance evidence-based decision support, translating these tools into practice will require thoughtful and ethical design paired with extensive validation to evolve prompt engineering strategies that provide trustworthy guidance without ever replacing human clinical judgment. Overall, steering LLMs through carefully crafted prompts shows great potential to augment clinicians’ abilities to optimize and personalize treatment plans, propelling more effective cancer care. But as this technology continues maturing, maintaining patient well-being through rigorous prompt optimization and evaluation remains imperative.

随着研究深入,我们的发现为优化提示工程(prompt engineering)技术开辟了新途径,旨在提升大语言模型分析患者数据和医学文献的能力,从而为消化系统癌症推荐循证治疗方案。我们计划探究不同提示如何影响模型根据肿瘤特征和患者因素精准推荐最佳干预措施的能力。例如,特定提示可增强模型依据肿瘤大小、位置和分期推荐合适外科手术方案的能力;另一些提示则可优化针对患者病史和癌症生物标志物的系统疗法推荐(如化疗方案和放疗方案)。通过提示工程整合所有相关临床变量,有望为每个独特病例生成更个性化、更有效的治疗计划。但必须开展更多研究以确保患者安全、避免偏差,并在临床落地前实现模型输出的可靠解读。我们需严格测试提示模板,识别可能导致不当或不安全建议的偏差。如实报告模型局限性,并与医学专家紧密合作,是负责任推进提示工程的关键。虽然研究证明大语言模型在增强循证决策支持方面潜力巨大,但将这些工具转化为临床实践需要兼顾伦理考量的周密设计,以及通过广泛验证来完善提示工程策略——这些策略应提供可信指导,而非取代临床医生的专业判断。总体而言,通过精心设计的提示来引导大语言模型,有望显著提升临床医生优化和个性化治疗方案的能力,从而推动更有效的癌症诊疗。但随着技术持续发展,通过严格的提示优化与评估来保障患者福祉仍是不可妥协的前提。

CONCLUSION

结论

This study has underscored the potential and challenges associated with the application of prompt engineering techniques to large language models (LLMs) in the field of clinical oncology. Through careful crafting of simple, templated prompts and more complex strategies, like in-context learning (ICL) and multi-round interaction, we have seen promising capabilities of these models in processing and interpreting intricate medical data related to gastrointestinal cancers. This can substantially support healthcare professionals in making decisions about recommended treatments. However, it is crucial to continuously address the inherent limitations of these models, including potential biases, data privacy concerns, and their specific interpretative limitations in this clinical context. Although complex prompts, especially those allowing for iterative questioning, have shown great promise in optimizing the performance of LLMs, it's evident that further investigations are needed to refine these strategies and explore their potential integration s. As our study was conducted in a clearly defined and constrained environment to ensure consistency, further exploration in diverse settings is warranted to fully exploit the potential of LLMs in healthcare scenarios.

本研究揭示了提示工程 (prompt engineering) 技术应用于大语言模型 (LLM) 在临床肿瘤学领域的潜力与挑战。通过精心设计简单模板化提示词,以及上下文学习 (ICL) 和多轮交互等复杂策略,这些模型在处理和解读胃肠癌相关复杂医疗数据方面展现出令人期待的能力,可实质性辅助医疗专业人员制定治疗方案决策。然而必须持续解决这些模型的固有局限,包括潜在偏见、数据隐私问题,以及在该临床场景下的特定解释性限制。虽然复杂提示策略(特别是支持迭代提问的方案)在优化大语言模型性能方面展现出巨大潜力,但显然需要进一步研究来完善这些策略并探索其整合可能性。由于本研究在明确定义的受限环境中进行以确保一致性,未来需要在多样化场景中进一步探索,以充分释放大语言模型在医疗健康领域的潜力。

REFERENCES

参考文献

engineering with ChatGPT. arXiv preprint arXiv:2302.11382. DOI: 10.48550/arXiv.2302.11382. 23. Suzgun, M., Scales, N., Schärli, N., et al. (2022). Challenging big-bench tasks and whether chain-of-thought can solve them. arXiv preprint arXiv:2210.09261. DOI: 10.48550/arXiv.2210.09261.

与ChatGPT的工程实践。arXiv预印本arXiv:2302.11382。DOI: 10.48550/arXiv.2302.11382。

23. Suzgun, M., Scales, N., Schärli, N., 等. (2022). 挑战Big-Bench任务及思维链(Chain-of-Thought)能否解决它们。arXiv预印本arXiv:2210.09261。DOI: 10.48550/arXiv.2210.09261。

FUNDING AND ACKNOWLEDGMENTS

资助与致谢

This work was supported by the National Natural Science Foundation of China (91959205 to L.S., U22A20327 to L.S., 82203881 to Y.C., 82272627 to XT.Z., 7232018 to Y.S., 12090022 to B.D., 11831002 to B.D., 81801778 to L.Z.), Beijing Natural Science Foundation (7222021 to Y.C., Z200015 to XT.Z.), Beijing Hospitals Authority Youth Programme (QM L 20231115 to Y.C.), Clinical Medicine Plus X-Young Scholars Project of Peking University (PKU 2023 LC X Q 041 to Y.C. and L.Z.). Guangdong Provincial Key Laboratory of Precision Medicine for Gastrointestinal Cancer (2020 B 121201004). The funders had no role in study design, data collection and analysis, decision to publish or preparation of the manuscript.

本研究由国家自然科学基金(91959205至L.S.、U22A20327至L.S.、82203881至Y.C.、82272627至XT.Z.、7232018至Y.S.、12090022至B.D.、11831002至B.D.、81801778至L.Z.)、北京市自然科学基金(7222021至Y.C.、Z200015至XT.Z.)、北京市医院管理中心青年项目(QM L 20231115至Y.C.)、北京大学临床医学+X青年学者项目(PKU 2023 LC X Q 041至Y.C.和L.Z.)、广东省胃肠病精准医学重点实验室(2020 B 121201004)资助。资助方未参与研究设计、数据收集与分析、发表决定或稿件准备工作。

AUTHOR CONTRIBUTIONS

作者贡献

J. Yuan, P. Bao, Z. Chen, M. Yuan, and J. Pan contributed to data analysis and interpretation, and drafted the manuscript. J. Zhao, Y. Xie, Y. Cao, Y. Wang, Z. Wang, Z. Lu, X. Zhang, J Li and L. Ma performed the sample preparation. Y. Chen, L. Zhang, L. Shen, and B. Dong planned the study and participated in manuscript revision. All authors have given final approval for the manuscript to be published and have agreed to be responsible for all aspects of the manuscript.

J. Yuan、P. Bao、Z. Chen、M. Yuan 和 J. Pan 参与了数据分析和解释,并起草了手稿。J. Zhao、Y. Xie、Y. Cao、Y. Wang、Z. Wang、Z. Lu、X. Zhang、J Li 和 L. Ma 负责样本制备。Y. Chen、L. Zhang、L. Shen 和 B. Dong 规划了研究并参与了手稿修订。所有作者均已最终批准手稿发表,并同意对手稿的所有方面负责。

DECLARATION OF INTERESTS

利益声明

The authors declare no competing interests.

作者声明无利益冲突。

ETHICAL STATEMENT AND PATIENT CONSENT Not applicable.

伦理声明与患者同意 不适用。

DATA AND CODE AVAILABILITY

数据和代码可用性

A pre-trained Chinese BERT model in Hugging Face (https://hugging face. co/hfl/chinese-bert-wwm-ext), specifically the “hfl/chinese-bert-wwm-ext”, is utilized to translate patient conditions into a high-dimensional embedding space (768 dimensions in this study), capturing the context of the condition effectively.

Hugging Face平台上的一个预训练中文BERT模型(https://huggingface.co/hfl/chinese-bert-wwm-ext),具体为"hfl/chinese-bert-wwm-ext",被用于将患者病情转化为高维嵌入空间(本研究中为768维),有效捕捉病情上下文。

SUPPLEMENTAL INFORMATION

补充信息

It can be found online at https://doi.org/10.59717/j.xinn-med.2023.100019

可在 https://doi.org/10.59717/j.xinn-med.2023.100019 在线获取

LEAD CONTACT WEBSITE

LEAD CONTACT WEBSITE

http://faculty.bicmr.pku.edu.cn/\ dongbin/

http://faculty.bicmr.pku.edu.cn/ dongbin/