Generative Adversarial Networks

论文中英文对照合集 : https://aiqianji.com/blog/articles

author Ian J. Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Ward-Farley, Sherjil Ozair, Aaron Courville, Yoshua Bengio,

Universite de Montreal, 2014

ABSTRACT

We propose a new framework for estimating generative models via an adversarial process, in which we simultaneously train two models: a generative model G that captures the data distribution, and a discriminative model D that estimates the probability that a sample came from the training data rather than G. The training procedure for G is to maximize the probability of D making a mistake. This framework corresponds to a minimax two-player game. In the space of arbitrary functions G and D, a unique solution exists, with G recovering the training data distribution and D equal to 12 everywhere. In the case where G and D are defined by multilayer perceptrons, the entire system can be trained with backpropagation. There is no need for any Markov chains or unrolled approximate inference networks during either training or generation of samples. Experiments demonstrate the potential of the framework through qualitative and quantitative evaluation of the generated samples.

我们提出了一个新的框架,用于通过对抗过程估算生成模型,在该框架中,我们同时训练两个模型:生成模型 G 捕获数据分布和判别模型 D估计样本来是否来自训练数据的可能性 。G 的训练过程是使 D 犯错误的概率最大化。这个框架相当于最小最大化的双人博弈。在任意函数 G 和 D 的空间中,存在唯一解,其中,G 恢复训练数据分布,D 处在1/2处。在 G 和 D 由多层感知机定义的情况下,整个系统可以通过反向传播进行训练。在训练或者样本生成期间,不需要任何马尔可夫链或展开的近似推理网络。实验通过对生成的样本进行定性和定量评估,证明了该框架的潜力。

1. Introduction

The promise of deep learning is to discover rich, hierarchical models [2] that represent probability distributions over the kinds of data encountered in artificial intelligence applications, such as natural images, audio waveforms containing speech, and symbols in natural language corpora. So far, the most striking successes in deep learning have involved discriminative models, usually those that map a high-dimensional, rich sensory input to a class label [14, 22]. These striking successes have primarily been based on the backpropagation and dropout algorithms, using piecewise linear units [19, 9, 10] which have a particularly well-behaved gradient . Deep generative models have had less of an impact, due to the difficulty of approximating many intractable probabilistic computations that arise in maximum likelihood estimation and related strategies, and due to difficulty of leveraging the benefits of piecewise linear units in the generative context. We propose a new generative model estimation procedure that sidesteps these difficulties. 1

深度学习的希望是发现丰富的,层次化的模型[ 2 ],该模型 表示人工智能应用中遇到的各种数据的概率分布,例如自然图像,包含语音的音频波形以及自然语言语料库中的符号。到目前为止,在深度学习最显着的成就都涉及判别模型,通常是那些高维,丰富的感觉输入映射到类标签 [ 14,22 ]。这些引人注目的成功已经主要基于所述反向传播和差的算法,使用分段线性单元 [ 19,9,10 ]具有特别好表现的渐变。深度生成模型的影响较小,这是由于难以对最大似然估计和相关策略中出现的许多棘手的概率计算进行逼近,以及由于难以在生成上下文中利用分段线性单位的优势。我们提出了一种新的生成模型估计程序,可以规避这些困难。

In the proposed adversarial nets framework, the generative model is pitted against an adversary: a discriminative model that learns to determine whether a sample is from the model distribution or the data distribution. The generative model can be thought of as analogous to a team of counterfeiters, trying to produce fake currency and use it without detection, while the discriminative model is analogous to the police, trying to detect the counterfeit currency. Competition in this game drives both teams to improve their methods until the counterfeits are indistiguishable from the genuine articles.

在提出的对抗网络框架中,生成模型与一个对手相对立:一个判别模型,该模型学习确定样本是来自模型分布还是来自数据分布。生成模型可以被认为类似于一组伪造者,试图生产假币并在未经检测的情况下使用它,而区分模型类似于警察,试图发现伪币。在这场比赛中,竞争驱动两队提升它们的方法,直到伪币无法被区分。

This framework can yield specific training algorithms for many kinds of model and optimization algorithm. In this article, we explore the special case when the generative model generates samples by passing random noise through a multilayer perceptron, and the discriminative model is also a multilayer perceptron. We refer to this special case as adversarial nets. In this case, we can train both models using only the highly successful backpropagation and dropout algorithms [17] and sample from the generative model using only forward propagation. No approximate inference or Markov chains are necessary.

该框架可以针对多种模型和优化算法产生特定的训练算法。在本文中,我们探讨了特殊情况,即生成模型通过将随机噪声传递给多层感知器来生成样本,而判别模型也是多层感知器。我们称这种特殊情况为对抗网。在这种情况下,我们可以仅使用非常成功的反向传播和丢失算法来训练这两个模型 [ 17 ], 而仅使用正向传播从生成模型中进行采样。不需要近似推断或马尔可夫链。

2 RELATED WORK 相关工作

An alternative to directed graphical models with latent variables are undirected graphical models with latent variables, such as restricted Boltzmann machines (RBMs) [27, 16], deep Boltzmann machines (DBMs) [26] and their numerous variants. The interactions within such models are represented as the product of unnormalized potential functions, normalized by a global summation/integration over all states of the random variables. This quantity (the partition function) and its gradient are intractable for all but the most trivial instances, although they can be estimated by Markov chain Monte Carlo (MCMC) methods. Mixing poses a significant problem for learning algorithms that rely on MCMC [3, 5].

Deep belief networks (DBNs) [16] are hybrid models containing a single undirected layer and several directed layers. While a fast approximate layer-wise training criterion exists, DBNs incur the computational difficulties associated with both undirected and directed models.

Alternative criteria that do not approximate or bound the log-likelihood have also been proposed, such as score matching [18] and noise-contrastive estimation (NCE) [13]. Both of these require the learned probability density to be analytically specified up to a normalization constant. Note that in many interesting generative models with several layers of latent variables (such as DBNs and DBMs), it is not even possible to derive a tractable unnormalized probability density. Some models such as denoising auto-encoders [30] and contractive autoencoders have learning rules very similar to score matching applied to RBMs. In NCE, as in this work, a discriminative training criterion is employed to fit a generative model. However, rather than fitting a separate discriminative model, the generative model itself is used to discriminate generated data from samples a fixed noise distribution. Because NCE uses a fixed noise distribution, learning slows dramatically after the model has learned even an approximately correct distribution over a small subset of the observed variables.

Finally, some techniques do not involve defining a probability distribution explicitly, but rather train a generative machine to draw samples from the desired distribution. This approach has the advantage that such machines can be designed to be trained by back-propagation. Prominent recent work in this area includes the generative stochastic network (GSN) framework [5], which extends generalized denoising auto-encoders [4]: both can be seen as defining a parameterized Markov chain, i.e., one learns the parameters of a machine that performs one step of a generative Markov chain. Compared to GSNs, the adversarial nets framework does not require a Markov chain for sampling. Because adversarial nets do not require feedback loops during generation, they are better able to leverage piecewise linear units [19, 9, 10], which improve the performance of backpropagation but have problems with unbounded activation when used ina feedback loop. More recent examples of training a generative machine by back-propagating into it include recent work on auto-encoding variational Bayes [20] and stochastic backpropagation [24].

定向图形模型的替代与潜变量无向图形模型与潜变量,如受限玻尔兹曼机(RBMS) [ 27,16 ],深波尔兹曼机(DBMS) [ 26 ]以及它们的多种变型。这些模型中的交互表示为未归一化的潜在函数的乘积,该函数通过对随机变量所有状态的全局求和/积分来归一化。此数量(分区功能)及其梯度对于除最琐碎的情况以外的所有情况都是难以解决的,尽管可以通过马尔可夫链蒙特卡洛(MCMC)方法进行估算。混合带来了显著问题学习依赖MCMC算法 [ 3,5 ]。

深度信念网络(DBN) [ 16 ]是包含单个无向层和多个有向层的混合模型。虽然存在快速的近似逐层训练准则,但DBN会引起与无向和有向模型相关的计算困难。

还提出了不近似或限制对数似然性的替代标准,例如得分匹配 [ 18 ] 和噪声对比估计(NCE) [ 13 ]。这两种方法都要求对学习到的概率密度进行解析指定,直到归一化常数为止。请注意,在许多有趣的具有多层潜在变量的生成模型(例如DBN和DBM)中,甚至不可能得出可控的非归一化概率密度。一些模型,例如去噪自动编码器 [ 30 ]压缩自动编码器的学习规则与应用于RBM的得分匹配非常相似。在NCE中,就像在这项工作中一样,采用判别性训练标准来适应生成模型。但是,生成模型本身不是用于拟合单独的判别模型,而是用于从固定噪声分布的样本中辨别生成的数据。由于NCE使用固定的噪声分布,因此在模型学习到观察变量的一小部分甚至是近似正确的分布之后,学习速度就会显着降低。

最后,某些技术不涉及明确定义概率分布,而是训练生成机从所需分布中提取样本。这种方法的优势在于,可以将此类机器设计为通过反向传播进行训练。该领域最近的杰出工作包括生成随机网络(GSN)框架[ 5 ],该框架 扩展了广义降噪自动编码器 [ 4 ]。:两者都可以看作是定义了一个参数化的马尔可夫链,即,人们可以学习执行生成马尔可夫链的一个步骤的机器的参数。与GSN相比,对抗网络框架不需要马尔可夫链进行采样。由于对抗性网不产生过程中需要反馈回路,它们能够更好地利用分段线性单位 [ 19,9,10 ],INA反馈回路使用时提高反向传播的性能,但有无限的激活问题。通过向后传播来训练生成机器的最新示例包括有关自动编码变体贝叶斯的最新工作 [ 20 ] 和随机反向传播 [ 24 ]。

3. Adversarial nets

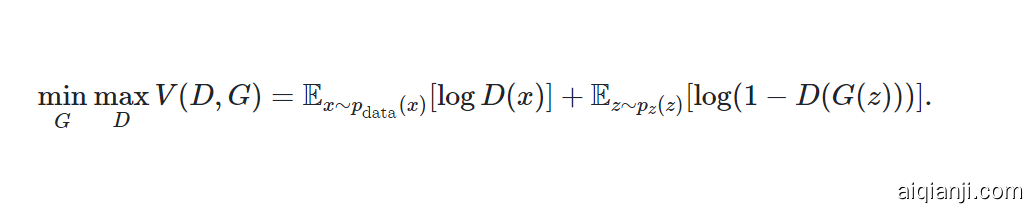

The adversarial modeling framework is most straightforward to apply when the models are both multilayer perceptrons. To learn the generator’s distribution $p_g$ over data x, we define a prior on input noise variables $p_z(z)$, then represent a mapping to data space as G(z;θg), where G is a differentiable function represented by a multilayer perceptron with parameters θg. We also define a second multilayer perceptron D(x;θd) that outputs a single scalar. D(x) represents the probability that x came from the data rather than $p_g$. We train D to maximize the probability of assigning the correct label to both training examples and samples from G. We simultaneously train G to minimize log(1−D(G(z))):

In other words, D and G play the following two-player minimax game with value function V(G,D):

当模型都是多层感知机时,对抗模型最容易直接应用。要训练生成器在数据x上的分布$p_g$,我们定义一个噪音输入变量prior $p_z(z)$,接着表达一个到数据空间的映射$G(z; \theta_g)$,其中G是一个参数为$\theta_g$的多层感知机表达的可微函数。我们定义另一个多层感知机$D(x;\theta_d)$,输出一个标量。$D(x)$表达了x来自数据而不是$p_g$的概率。我们训练D,使它为来自数据和来自G的样本赋予正确标签的概率最高。我们同时训练G来最小化$\log (1 - (D(G(z)))$。

换句话说,D和G玩双人minimax游戏,得分函数V(G, D):

In the next section, we present a theoretical analysis of adversarial nets, essentially showing that the training criterion allows one to recover the data generating distribution as G and D are given enough capacity, i.e., in the non-parametric limit. See Figure 1 for a less formal, more pedagogical explanation of the approach. In practice, we must implement the game using an iterative, numerical approach. Optimizing D to completion in the inner loop of training is computationally prohibitive, and on finite datasets would result in overfitting. Instead, we alternate between k steps of optimizing D and one step of optimizing G. This results in D being maintained near its optimal solution, so long as G changes slowly enough. This strategy is analogous to the way that SML/PCD [31, 29] training maintains samples from a Markov chain from one learning step to the next in order to avoid burning in a Markov chain as part of the inner loop of learning. The procedure is formally presented in Algorithm 1.

在下一部分中,我们将对对抗网进行理论分析,从本质上表明,训练准则允许人们恢复数据生成的分布,如下所示: G 和 d被赋予足够的容量,即在非参数限制内。有关该方法的较不正式,较教学性的说明,请参见图 1。在实践中,我们必须使用迭代的数值方法来实现游戏。最佳化d在训练的内循环中完成训练在计算上是禁止的,在有限的数据集上会导致过度拟合。相反,我们进行k步优化D,一步优化G。使D保持在接近最优解,G变化得足够慢。这一策略类似SML/PCD。 [ 31,29 ]训练样本保持从一个学习步骤马尔可夫链到下一个,以避免在一个Markov链燃烧作为学习的内回路的一部分。该过程在算法1中正式提出 。

In practice, equation 1 may not provide sufficient gradient for G to learn well. Early in learning, when G is poor, D can reject samples with high confidence because they are clearly different from the training data. In this case, log(1−D(G(z))) saturates. Rather than training G to minimize log(1−D(G(z))) we can train G to maximize logD(G(z)). This objective function results in the same fixed point of the dynamics of G and D but provides much stronger gradients early in learning.

实际应用中,等式1也许不能为G训练提供有效的梯度。训练早期,G还不充分时,D很容易拒绝来自它的样本,因为与训练集差得比较远。这一情况下$\log (1 - D(G(z)))$就饱和了。与其训练G,让它最小化$\log (1 - D(G(z)))$,不如让它最大化$\log D(G(z))$。这一目标函数在训练早期能提供强得多的梯度。

4. Theoretical Results

The generator G implicitly defines a probability distribution pg as the distribution of the samples G(z) obtained when z∼pz. Therefore, we would like Algorithm 1 to converge to a good estimator of pdata, if given enough capacity and training time. The results of this section are done in a non-parametric setting, e.g. we represent a model with infinite capacity by studying convergence in the space of probability density functions.

We will show in section 4.1 that this minimax game has a global optimum for pg=pdata. We will then show in section 4.2 that Algorithm 1 optimizes Eq 1, thus obtaining the desired result.

生成器G隐式地定义了当$z \sim p_z$时获得的样本G(z)的概率分布$p_g$。因此我们希望当容量和时间足够时,算法1能收敛到$p_data$的好估计。

Algorithm 1 Minibatch stochastic gradient descent training of generative adversarial nets. The number of steps to apply to the discriminator, k, is a hyperparameter. We used k=1, the least expensive option, in our experiments.

算法1 生成对抗网络的Minibatch随机梯度下降训练。应用于鉴别器的步骤数,ķ是超参数。我们用了ķ=1个,这是我们实验中最便宜的选择。

4.1 Global Optimality 全局优化

Global Optimality of $ p_g = p_ {data} $

We first consider the optimal discriminator D for any given generator G.

Proposition 1.

For G fixed, the optimal discriminator D is

先考虑一个不管给什么生成器G,最优的discriminator D。

Proposition 1. 当G固定时,最优D是:

Proof.

The training criterion for the discriminator D, given any generator G, is to maximize the quantity V(G,D)

Proof 对于D的训练标准是,给予任何G,最大化数量V(G, D)

For any$(a,b) \in \mathbb R ^2 $\ ${0,0 }$, the function y→alog(y)+blog(1−y) achieves its maximum in [0,1] at $\frac a {a+b}$. The discriminator does not need to be defined outside of Supp( $p_{data}$)∪Supp($p_g$), concluding the proof.

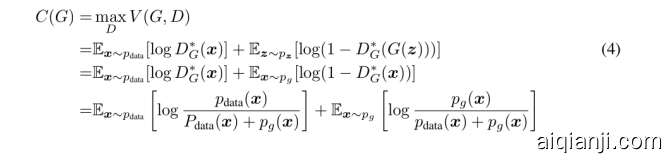

Note that the training objective for D can be interpreted as maximizing the log-likelihood for estimating the conditional probability P(Y=y|x), where Y indicates whether x comes from $p_{data}$ (with y=1) or from $p_g$ (with y=0). The minimax game in Eq. 1 can now be reformulated as:

对于任意$(a,b) \in \mathbb R ^2 $\ ${0,0 }$,函数 $y \to a \log (y) + b \log (1-y) $在[0, 1]间极值点在$\frac a {a+b}$

D的训练目标可解读为最大化估计条件概率$P(Y = y | x)$的log-likelihood,其中Y指示了x来自$p_{data}$(y = 1)还是$p_g$(y=0)。等式1中的minimax游戏可以变换为:

Theorem 1.

The global minimum of the virtual training criterion C(G) is achieved if and only if pg=pdata. At that point, C(G) achieves the value −log4.

定理Theorem 1 Virtual training criterion C(G)的全局最小值当且仅当$p_g = p_{data}$时取得,该点C(G)的值为-log4

Proof. 当$p_g = p_{data}$时,$D^{\star}_G(x) = \frac 1 2$(等式2),因此通过查看等式4 $D^*G(x) = \frac 1 2$时,我们发现$C(G) = \log \frac 1 2 + \log \frac 1 2 = - \log 4$。要确定这是C(G)的最优值,且仅在$p_g = p{data}$时达到,观察:

通过从$C(G) = V(D^{\star}_G, G)$中减去这一表达式,我们得到:

其中KL是Kullback-Leibler divergence。我们认出了这个表达式中模型分布和数据生成过程中的Jensen-Shannon divergence:

Kullback-Leibler divergence](https://en.wikipedia.org/wiki/Kullback%E2%80%93Leibler_divergence),又称相对熵,是两个概率分布差异的度量。应用包括信息系统中的相对(香农)熵 relative (Shanno) entropy,连续时间序列中的随机性 randomness in continuous time-series,还有与统计模型相比时的信息增益information gain。

$$

C(G)=−log(4)+2⋅JSD(p_{data}∥p_g) (6)

$$

Since the Jensen–Shannon divergence between two distributions is always non-negative and zero only when they are equal, we have shown that $C^{\star} = - \log (4)$is the global minimum of C(G) and that the only solution is $p_g = p_{data}$, i.e., the generative model perfectly replicating the data generating process.

因为两个分布的JSD总是非负的,且在它们相等时为0,我们已证明$C^{\star} = - \log (4)$是C(G)的全局最小,当且仅当$p_g = p_{data}$时取得,也就是生成模型完美复制了数据生成过程。

4.2 Convergence of Algorithm 1 ### 算法1的收敛

Proposition 2.

If G and D have enough capacity, and at each step of Algorithm 1, the discriminator is allowed to reach its optimum given G, and pg is updated so as to improve the criterion

Proposition 2. 如果G和D容量足够,而且在算法1中的每一步,discriminator都能达到给定G下其最优,且$p_g$被更新以提升标准:

then $p_g$ converges to $p_{data}$

那么$p_g$能收敛为$p_{data}$

Proof.

Consider V(G,D)=U(pg,D) as a function of $p_g$ as done in the above criterion. Note that U($p_g$,D) is convex in $p_g$. The subderivatives of a supremum of convex functions include the derivative of the function at the point where the maximum is attained. In other words, if f(x)=supα∈Afα(x) and fα(x) is convex in x for every α, then ∂fβ(x)∈∂f if β=argsupα∈Afα(x). This is equivalent to computing a gradient descent update for $p_g$ at the optimal D given the corresponding G. supDU($p_g$,D) is convex in $p_g$ with a unique global optima as proven in Thm 1, therefore with sufficiently small updates of $p_g$, $p_g$ converges to $p_x$, concluding the proof.

Proof. 假设$V(G, D) = U(p_g, D)$是之前标准下完成的$p_g$的一个函数。注意$U(p_g, D)$是$p_g$的一个凸面contex。凸函数的上确界supremum的次导数subderivative包含了函数达到极大值时的偏导数。换句话说,如果$f(x) = sup {α∈A} f{α} (x)$对任何$\alpha$都是x中的凸,那么∂fβ(x)∈∂f 如果$ \beta = \arg \sup {\alpha \in A} f{\alpha} (x) $。这等价于在给定G时,计算$p_g$在最优D的一个梯度下降。如定理1中证明过,$\sup _D U($p_g$,D)$是$p_g$中的一个凸,且有全局最优值,因此$p_g$有充分小的更新时,它能收敛为$p_x$,得证。

In practice, adversarial nets represent a limited family of pg distributions via the function G(z;θg), and we optimize θg rather than pg itself. Using a multilayer perceptron to define G introduces multiple critical points in parameter space. However, the excellent performance of multilayer perceptrons in practice suggests that they are a reasonable model to use despite their lack of theoretical guarantees.

实际中,对抗网络通过函数$G(z;\theta_g)$表达一个$p_g$分布的limited family,所以我们优化$\theta_g$而不是$p_g$本身。使用一个多层感知机来定义G会为参数空间引入多个关键点。但实际应用中多层感知机的优秀性能说明它们是一个合理的模型,尽管缺乏理论支持。

5. Experiments 实验

We trained adversarial nets an a range of datasets including MNIST[23], the Toronto Face Database (TFD) [28], and CIFAR-10 [21]. The generator nets used a mixture of rectifier linear activations [19, 9] and sigmoid activations, while the discriminator net used maxout [10] activations. Dropout [17] was applied in training the discriminator net. While our theoretical framework permits the use of dropout and other noise at intermediate layers of the generator, we used noise as the input to only the bottommost layer of the generator network.

我们在多个数据集上训练对抗网络,生成网络混合使用ReLU和sigmoid激活,辨识网络使用maxout$^{[10]}$激活。训练辨识网络时使用了Dropout。尽管理论上生成网络可以使用Dropout,中间层也能使用noise,我们仅在生成器最底层使用了noise作为输入。

| Model | MNIST | TFD |

|---|---|---|

| DBN [3] | 138±2 | 1909±66 |

| Stacked CAE [3] | 121±1.6 | 2110±50 |

| Deep GSN [6] | 214±1.1 | 1890±29 |

| Adversarial nets | 225±2 | 2057±26 |

Table 1: Parzen window-based log-likelihood estimates. The reported numbers on MNIST are the mean log-likelihood of samples on test set, with the standard error of the mean computed across examples. On TFD, we computed the standard error across folds of the dataset, with a different σ chosen using the validation set of each fold. On TFD, σ was cross validated on each fold and mean log-likelihood on each fold were computed. For MNIST we compare against other models of the real-valued (rather than binary) version of dataset.

We estimate probability of the test set data under pg by fitting a Gaussian Parzen window to the samples generated with G and reporting the log-likelihood under this distribution. The σ parameter of the Gaussians was obtained by cross validation on the validation set. This procedure was introduced in Breuleux et al. [8] and used for various generative models for which the exact likelihood is not tractable [25, 3, 5]. Results are reported in Table 1. This method of estimating the likelihood has somewhat high variance and does not perform well in high dimensional spaces but it is the best method available to our knowledge. Advances in generative models that can sample but not estimate likelihood directly motivate further research into how to evaluate such models.

我们通过将G生成的样本拟合到一个Gaussian Parzen window并报告在这个分布下的log似然率,估计测试集数据在$p_g$下的概率。高斯的参数$\sigma$通过在验证集上的交叉验证获得。此过程在Breuleux等人中进行了介绍。[ 8 ] 并用于各种生成模型的量,精确可能性是不易处理 [ 25,3,5 ]。结果记录在表1中。这种估计可能性的方法具有较高的方差,并且在高维空间中表现不佳,但这是我们所掌握的最佳方法。可以采样但无法估计可能性的生成模型的进步直接促使人们进一步研究如何评估此类模型。这种估计可能性的方法具有较高的方差,并且在高维空间中表现不佳,但这是我们所掌握的最佳方法。可以采样但无法估计可能性的生成模型的进步直接促使人们进一步研究如何评估此类模型。

In Figures 2 and 3 we show samples drawn from the generator net after training. While we make no claim that these samples are better than samples generated by existing methods, we believe that these samples are at least competitive with the better generative models in the literature and highlight the potential of the adversarial framework.

图2图3中是训练后的生成器的样本。

Figure 2: Visualization of samples from the model. Rightmost column shows the nearest training example of the neighboring sample, in order to demonstrate that the model has not memorized the training set. Samples are fair random draws, not cherry-picked. Unlike most other visualizations of deep generative models, these images show actual samples from the model distributions, not conditional means given samples of hidden units. Moreover, these samples are uncorrelated because the sampling process does not depend on Markov chain mixing. a) MNIST b) TFD c) CIFAR-10 (fully connected model) d) CIFAR-10 (convolutional discriminator and “deconvolutional” generator)

Figure 3: Digits obtained by linearly interpolating between coordinates in z space of the full model.

6. Advantages and disadvantages

This new framework comes with advantages and disadvantages relative to previous modeling frameworks. The disadvantages are primarily that there is no explicit representation of $p_g(x)$, and that D must be synchronized well with G during training (in particular, G must not be trained too much without updating D, in order to avoid “the Helvetica scenario” in which G collapses too many values of z to the same value of x to have enough diversity to model pdata), much as the negative chains of a Boltzmann machine must be kept up to date between learning steps. The advantages are that Markov chains are never needed, only backprop is used to obtain gradients, no inference is needed during learning, and a wide variety of functions can be incorporated into the model. Table 2 summarizes the comparison of generative adversarial nets with other generative modeling approaches.

The aforementioned advantages are primarily computational. Adversarial models may also gain some statistical advantage from the generator network not being updated directly with data examples, but only with gradients flowing through the discriminator. This means that components of the input are not copied directly into the generator’s parameters. Another advantage of adversarial networks is that they can represent very sharp, even degenerate distributions, while methods based on Markov chains require that the distribution be somewhat blurry in order for the chains to be able to mix between modes.

与之前的模型框架相比,这一新框架有着优缺点。缺点主要在于没有$p_g(x)$的显示表达,而且在训练中D和G必须同步得很好(特别是在不更新D时,G不能训练太多,来避免“the Helvetica scenario”模式坍塌),如同波兹曼机的Negative chain必须在训练步骤中保持最新。优点是不需要马尔科夫链,仅需反向传播以获得梯度,训练中无需inference,许多函数都能包含进这一模型。表2是总结。

前述优点主要是计算上的。对抗模型还可以从生成器网络中获得一些统计上的优势,该生成器网络不直接使用数据示例进行更新,而仅使用流经鉴别器的梯度进行更新。这意味着输入的组成部分不会直接复制到生成器的参数中。对抗网络的另一个优点是它们可以表示非常尖锐的分布,甚至是简并的分布,而基于马尔可夫链的方法则要求分布有些模糊,以使链能够在模式之间进行混合。

Table 2: Challenges in generative modeling: a summary of the difficulties encountered by different approaches to deep generative modeling for each of the major operations involving a model.

7. Conclusions and future work 结论与未来工作

This framework admits many straightforward extensions:

- A conditional generative model p(x∣c) can be obtained by adding c as input to both G and D.

- Learned approximate inference can be performed by training an auxiliary network to predict z given x. This is similar to the inference net trained by the wake-sleep algorithm [15] but with the advantage that the inference net may be trained for a fixed generator net after the generator net has finished training.

- One can approximately model all conditionals p(xS∣xS/) where S is a subset of the indices of x by training a family of conditional models that share parameters. Essentially, one can use adversarial nets to implement a stochastic extension of the deterministic MP-DBM [11].

- Semi-supervised learning: features from the discriminator or inference net could improve performance of classifiers when limited labeled data is available.

- Efficiency improvements: training could be accelerated greatly by divising better methods for coordinating G and D or determining better distributions to sample z from during training.

本框架可以有许多直接扩展:

- 为G和D增加c作为输入,就能得到条件生成模型$p(x|c)$

- 通过训练一个额外的给定x预测z的网络,能得到learned approximate inference。这类似于wake-sleep算法训练得到的inference,但优点在于它是被训练完成的固定生成器训练的。

- 可以通过训练共享参数的family of conditional模型近似所有条件$p(x_S | x_{\sout S})$,其中S是x的指数的子集。本质上,可以使用对抗网络来实现MP-DBM的随机扩展。

- 半监督学习:当标注数据受限时,来自discriminator或推理网的特征能有助于分类器的性能。

- 效率提升:可以通过采用更好的协调方法来大大加快培训速度G 和 d 或从训练中确定更好的分布样本 ž 。

This paper has demonstrated the viability of the adversarial modeling framework, suggesting that these research directions could prove useful.

本文已经证明了对抗建模框架的可行性,表明这些研究方向可能被证明是有用的。

Acknowledgments

We would like to acknowledge Patrice Marcotte, Olivier Delalleau, Kyunghyun Cho, Guillaume Alain and Jason Yosinski for helpful discussions. Yann Dauphin shared his Parzen window evaluation code with us. We would like to thank the developers of Pylearn2 [12] and Theano [7, 1], particularly Frédéric Bastien who rushed a Theano feature specifically to benefit this project. Arnaud Bergeron provided much-needed support with LaTeX typesetting. We would also like to thank CIFAR, and Canada Research Chairs for funding, and Compute Canada, and Calcul Québec for providing computational resources. Ian Goodfellow is supported by the 2013 Google Fellowship in Deep Learning. Finally, we would like to thank Les Trois Brasseurs for stimulating our creativity.

我们要感谢Patrice Marcotte,Olivier Delalleau,Kyunghyun Cho,Guillaume Alain和Jason Yosinski的有益讨论。Yann Dauphin与我们分享了他的Parzen窗口评估代码。我们要感谢Pylearn2的开发 [ 12 ] 和Theano [ 7,1 ],尤其是FrédéricBastien,他特意抢先Theano功能,以使该项目受益。Arnaud Bergeron提供了LaTeX排版急需的支持。我们还要感谢CIFAR,加拿大研究主席的资助,Compute Canada和CalculQuébec提供的计算资源。Ian Goodfellow获得了2013年Google深度学习研究金的支持。最后,我们要感谢Les Trois Brasseurs激发了我们的创造力。

References

[1] Bastien, F., Lamblin, P., Pascanu, R., Bergstra, J., Goodfellow, I. J., Bergeron, A., Bouchard, N., and Bengio, Y. (2012). Theano: new features and speed improvements. Deep Learning and Unsupervised Feature Learning NIPS 2012 Workshop.

[2] Bengio, Y. (2009). Learning deep architectures for AI. Now Publishers.

[3] Bengio, Y., Mesnil, G., Dauphin, Y., and Rifai, S. (2013a). Better mixing via deep representations. In ICML’13.

[4] Bengio, Y., Yao, L., Alain, G., and Vincent, P. (2013b). Generalized denoising auto-encoders as generative models. In NIPS26. Nips Foundation.

[5] Bengio, Y., Thibodeau-Laufer, E., and Yosinski, J. (2014a). Deep generative stochastic networks trainable by backprop. In ICML’14.

[6] Bengio, Y., Thibodeau-Laufer, E., Alain, G., and Yosinski, J. (2014b). Deep generative stochastic networks trainable by backprop. In Proceedings of the 30th International Conference on Machine Learning (ICML’14).

[7] Bergstra, J., Breuleux, O., Bastien, F., Lamblin, P., Pascanu, R., Desjardins, G., Turian, J., Warde-Farley, D., and Bengio, Y. (2010). Theano: a CPU and GPU math expression compiler. In Proceedings of the Python for Scientific Computing Conference (SciPy). Oral Presentation.

[8] Breuleux, O., Bengio, Y., and Vincent, P. (2011). Quickly generating representative samples from an RBM-derived process. Neural Computation, 23(8), 2053–2073.

[9] Glorot, X., Bordes, A., and Bengio, Y. (2011). Deep sparse rectifier neural networks. In AISTATS’2011.

[10] Goodfellow, I. J., Warde-Farley, D., Mirza, M., Courville, A., and Bengio, Y. (2013a). Maxout networks. In ICML’2013.

[11] Goodfellow, I. J., Mirza, M., Courville, A., and Bengio, Y. (2013b). Multi-prediction deep Boltzmann machines. In NIPS’2013.

[12] Goodfellow, I. J., Warde-Farley, D., Lamblin, P., Dumoulin, V., Mirza, M., Pascanu, R., Bergstra, J., Bastien, F., and Bengio, Y. (2013c). Pylearn2: a machine learning research library. arXiv preprint arXiv:1308.4214.

[13] Gutmann, M. and Hyvarinen, A. (2010). Noise-contrastive estimation: A new estimation principle for unnormalized statistical models. In AISTATS’2010.

[14] Hinton, G., Deng, L., Dahl, G. E., Mohamed, A., Jaitly, N., Senior, A., Vanhoucke, V., Nguyen, P., Sainath, T., and Kingsbury, B. (2012a). Deep neural networks for acoustic modeling in speech recognition. IEEE Signal Processing Magazine, 29(6), 82–97.

[15] Hinton, G. E., Dayan, P., Frey, B. J., and Neal, R. M. (1995). The wake-sleep algorithm for unsupervised neural networks. Science, 268, 1558–1161.

[16] Hinton, G. E., Osindero, S., and Teh, Y. (2006). A fast learning algorithm for deep belief nets. Neural Computation, 18, 1527–1554.

[17] Hinton, G. E., Srivastava, N., Krizhevsky, A., Sutskever, I., and Salakhutdinov, R. (2012b). Improving neural networks by preventing co adaptation of feature detectors. Technical report, arXiv:1207.0580.

[18] Hyvarinen, A. (2005). Estimation of non-normalized statistical models using score matching. ¨ J. Machine Learning Res., 6.

[19] Jarrett, K., Kavukcuoglu, K., Ranzato, M., and LeCun, Y. (2009). What is the best multi-stage architecture for object recognition? In Proc. International Conference on Computer Vision (ICCV’09), pages 2146–2153. IEEE.

[20] Kingma, D. P. and Welling, M. (2014). Auto-encoding variational bayes. In Proceedings of the International Conference on Learning Representations (ICLR).

[21] Krizhevsky, A. and Hinton, G. (2009). Learning multiple layers of features from tiny images. Technical report, University of Toronto.

[22] Krizhevsky, A., Sutskever, I., and Hinton, G. (2012). ImageNet classification with deep convolutional neural networks. In NIPS’2012.

[23] LeCun, Y., Bottou, L., Bengio, Y., and Haffner, P. (1998). Gradient-based learning applied to document recognition. Proceedings of the IEEE, 86(11), 2278–2324.

[24] Rezende, D. J., Mohamed, S., and Wierstra, D. (2014). Stochastic backpropagation and approximate inference in deep generative models. Technical report, arXiv:1401.4082.

[25] Rifai, S., Bengio, Y., Dauphin, Y., and Vincent, P. (2012). A generative process for sampling contractive auto-encoders. In ICML’12.

[26] Salakhutdinov, R. and Hinton, G. E. (2009). Deep Boltzmann machines. In AISTATS’2009, pages 448– 455.

[27] Smolensky, P. (1986). Information processing in dynamical systems: Foundations of harmony theory. In D. E. Rumelhart and J. L. McClelland, editors, Parallel Distributed Processing, volume 1, chapter 6, pages 194–281. MIT Press, Cambridge.

[28] Susskind, J., Anderson, A., and Hinton, G. E. (2010). The Toronto face dataset. Technical Report UTML TR 2010-001, U. Toronto.

[29] Tieleman, T. (2008). Training restricted Boltzmann machines using approximations to the likelihood gradient. In W. W. Cohen, A. McCallum, and S. T. Roweis, editors, ICML 2008, pages 1064–1071. ACM.

[30] Vincent, P., Larochelle, H., Bengio, Y., and Manzagol, P.-A. (2008). Extracting and composing robust features with denoising autoencoders. In ICML 2008.

[31] Younes, L. (1999). On the convergence of Markovian stochastic algorithms with rapidly decreasing ergodicity rates. Stochastics and Stochastic Reports, 65(3), 177–228.