A Survey on Evaluation of Large Language Models

Large language models (LLMs) are gaining increasing popularity in both academia and industry, owing to their unprecedented performance in various applications. As LLMs continue to play a vital role in both research and daily use, their evaluation becomes increasingly critical, not only at the task level but also at the societal level, to better understand their potential risks. Over the past years, significant efforts have been made to examine LLMs from various perspectives. This paper presents a comprehensive review of these evaluation methods for LLMs, focusing on three key dimensions: what to evaluate, where to evaluate, and how to evaluate. Firstly, we provide an overview from the perspective of evaluation tasks, encompassing general natural language processing tasks, reasoning, medical usage, ethics, education, natural and social sciences, agent applications, and other areas. Secondly, we answer the ‘where’ and ‘how’ questions by diving into the evaluation methods and benchmarks, which serve as crucial components in assessing the performance of LLMs. Then, we summarize the success and failure cases of LLMs in different tasks. Finally, we shed light on several future challenges in LLM evaluation. Our aim is to offer valuable insights to researchers in LLM evaluation, thereby aiding the development of more proficient LLMs. Our key point is that evaluation should be treated as an essential discipline to better assist the development of LLMs. We consistently maintain the related open-source materials at: https://github.com/MLGroupJLU/LLM-eval-survey.

CCS Concepts: • Computing methodologies $\rightarrow$ Natural language processing; Machine learning.

Additional Key Words and Phrases: large language models, evaluation, model assessment, benchmark

ACM Reference Format:

Yupeng Chang, Xu Wang, Jindong Wang, Yuan Wu, Linyi Yang, Kaijie Zhu, Hao Chen, Xiaoyuan Yi, Cunxiang Wang, Yidong Wang, Wei Ye, Yue Zhang, Yi Chang, Philip S. Yu, Qiang Yang, and Xing Xie. 2018. A Survey on Evaluation of Large Language Models. J. ACM 37, 4, Article 111 (August 2018), 45 pages. https://doi.org/XXXXXXX.XXXXXXX

1 INTRODUCTION

Understanding the essence of intelligence and establishing whether a machine embodies it poses a compelling question for scientists. It is generally agreed that authentic intelligence equips us with reasoning capabilities, enables us to test hypotheses, and prepares us for future eventualities [92]. In particular, Artificial Intelligence (AI) researchers focus on the development of machine-based intelligence, as opposed to biologically based intellect [136]. Proper measurement helps us understand intelligence. For instance, measures of general intelligence in human individuals often include IQ tests [12].

Within the scope of AI, the Turing Test [193], a widely recognized test that assesses intelligence by discerning whether responses are of human or machine origin, has been a longstanding objective in AI evolution. Researchers generally believe that a computing machine that passes the Turing Test can be considered intelligent. Consequently, viewed through a wider lens, the history of AI can be depicted as a timeline of the creation and evaluation of intelligent models and algorithms. Whenever a novel AI model or algorithm emerges, researchers scrutinize its capabilities in real-world scenarios by evaluating it on specific and challenging tasks. For instance, the Perceptron algorithm [49], touted as an Artificial General Intelligence (AGI) approach in the 1950s, was later shown to be inadequate because it cannot solve the XOR problem, which is not linearly separable. The subsequent rise and application of Support Vector Machines (SVMs) [28] and deep learning [104] have marked both progress and setbacks in the AI landscape. A significant takeaway from previous attempts is the paramount importance of AI evaluation, which serves as a critical tool to identify current system limitations and inform the design of more powerful models.

Recently, large language models (LLMs) have attracted substantial interest across both academic and industrial domains [11, 219, 257]. As argued in existing work [15], the strong performance of LLMs has raised hopes that they could be the AGI of this era. Unlike prior models confined to solving specific tasks, LLMs can solve diverse tasks. Owing to their strong performance in applications ranging from general natural language tasks to domain-specific ones, LLMs are increasingly used by individuals with critical information needs, such as students or patients.

Evaluation is of paramount importance to the success of LLMs for several reasons. First, evaluating LLMs helps us better understand their strengths and weaknesses. For instance, the PromptBench [264] benchmark illustrates that current LLMs are sensitive to adversarial prompts, so careful prompt engineering is necessary for better performance. Second, better evaluations can provide better guidance for human-LLM interaction, which could inspire future interaction design and implementation. Third, the broad applicability of LLMs underscores the importance of ensuring their safety and reliability, particularly in safety-sensitive sectors such as financial institutions and healthcare facilities. Finally, as LLMs become larger and gain more emergent abilities, existing evaluation protocols may not be enough to evaluate their capabilities and potential risks. Therefore, we aim to raise the community's awareness of the importance of LLM evaluation by reviewing current evaluation protocols and, most importantly, to shed light on future research on designing new LLM evaluation protocols.

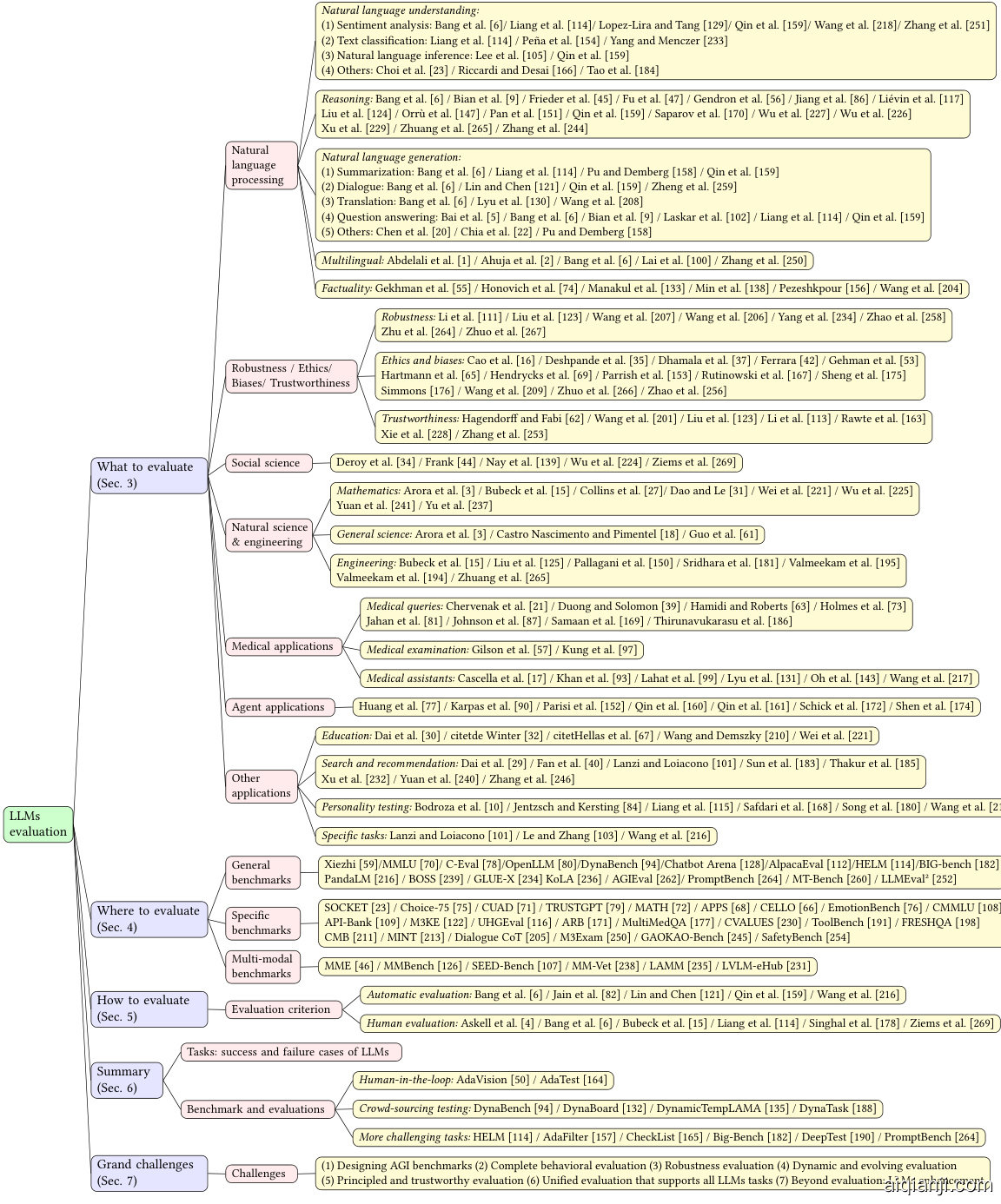

Fig. 1. Structure of this paper.

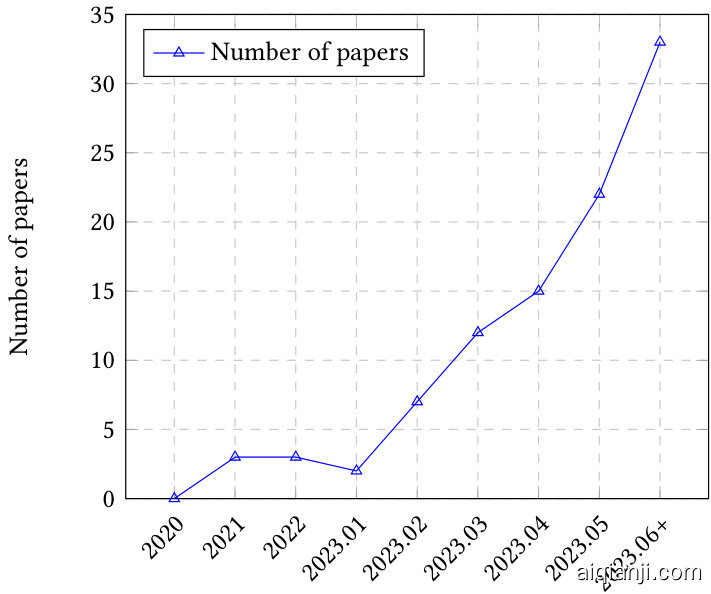

With the introduction of ChatGPT [145] and GPT-4 [146], a number of research efforts have evaluated ChatGPT and other LLMs from different aspects (Figure 2), encompassing factors such as natural language tasks, reasoning, robustness, trustworthiness, medical applications, and ethical considerations. Despite these efforts, a comprehensive overview capturing the entire gamut of evaluations is still lacking. Furthermore, the ongoing evolution of LLMs has also presented novel aspects for evaluation, challenging existing evaluation protocols and reinforcing the need for thorough, multifaceted evaluation techniques. While some existing research, such as Bubeck et al. [15], claimed that GPT-4 shows sparks of AGI, others contest this claim because of the human-crafted nature of its evaluation approach.

This paper serves as the first comprehensive survey on the evaluation of large language models. As depicted in Figure 1, we explore existing work in three dimensions: 1) What to evaluate, 2) Where to evaluate, and 3) How to evaluate. Specifically, “what to evaluate" encapsulates existing evaluation tasks for LLMs, “where to evaluate" involves selecting appropriate datasets and benchmarks for evaluation, while “how to evaluate" is concerned with the evaluation process given appropriate tasks and datasets. These three dimensions are integral to the evaluation of LLMs. We subsequently discuss potential future challenges in the realm of LLMs evaluation.

The contributions of this paper are as follows:

The paper is organized as follows. In Sec. 2, we provide the basic information of LLMs and AI model evaluation. Then, Sec. 3 reviews existing work from the aspects of “what to evaluate”. After that, Sec. 4 is the “where to evaluate” part, which summarizes existing datasets and benchmarks. Sec. 5 discusses how to perform the evaluation. In Sec. 6, we summarize the key findings of this paper. We discuss grand future challenges in Sec. 7 and Sec. 8 concludes the paper.

2 BACKGROUND

2.1 Large Language Models

Language models (LMs) [36, 51, 96] are computational models that have the capability to understand and generate human language. LMs can predict the likelihood of word sequences or generate new text based on a given input. N-gram models [13], the most common type of LM, estimate the probability of each word based on the preceding $n-1$ words. However, LMs also face challenges, such as rare or unseen words, overfitting, and the difficulty of capturing complex linguistic phenomena. Researchers are continuously working on improving LM architectures and training methods to address these challenges.
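
As a minimal sketch of an n-gram model with $n=2$, the following Python estimates bigram probabilities by counting. It applies add-one (Laplace) smoothing so that unseen word pairs still receive non-zero probability, which is one simple response to the rare-word problem mentioned above. The toy corpus, the `<s>`/`</s>` boundary markers, and the function names are illustrative assumptions, not taken from the cited works.

```python
from collections import Counter


def train_bigram(sentences):
    """Count left-context unigrams and bigrams over tokenized sentences."""
    unigrams, bigrams = Counter(), Counter()
    for tokens in sentences:
        padded = ["<s>"] + tokens + ["</s>"]
        unigrams.update(padded[:-1])  # every token that can precede another
        bigrams.update(zip(padded[:-1], padded[1:]))
    # Possible next tokens: all words plus the end-of-sentence marker.
    vocab = {tok for tokens in sentences for tok in tokens} | {"</s>"}
    return unigrams, bigrams, vocab


def bigram_prob(prev, word, unigrams, bigrams, vocab):
    """P(word | prev) with add-one smoothing: (c(prev, word) + 1) / (c(prev) + |V|)."""
    return (bigrams[(prev, word)] + 1) / (unigrams[prev] + len(vocab))


corpus = [["the", "cat", "sat"], ["the", "dog", "sat"]]
uni, bi, vocab = train_bigram(corpus)
print(bigram_prob("the", "cat", uni, bi, vocab))  # 2/7 ≈ 0.2857 (seen pair)
print(bigram_prob("cat", "dog", uni, bi, vocab))  # 1/6 ≈ 0.1667 (unseen pair, still > 0)
```

Smoothing keeps each conditional distribution normalized: for any context, the smoothed probabilities over the vocabulary still sum to one.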

Large Language Models (LLMs) [19, 91, 257] are advanced language models with massive parameter sizes and exceptional learning capabilities. The core module behind many LLMs, such as GPT-3 [43], InstructGPT [149], and GPT-4 [146], is the self-attention module in the Transformer [197], which serves as the fundamental building block for language modeling tasks. Transformers have revolutionized NLP with their ability to handle sequential data efficiently, allowing for parallelization and capturing long-range dependencies in text. One key feature of LLMs is in-context learning [14], where the model generates text based on a given context or prompt, such as a task description and a few examples, without updating its parameters. This enables LLMs to generate more coherent and contextually relevant responses, making them suitable for interactive and conversational applications. Reinforcement Learning from Human Feedback (RLHF) [25, 268] is another crucial aspect of LLMs. This technique fine-tunes the model with reinforcement learning, using rewards derived from human feedback (typically human rankings of model responses), allowing the model to learn from its mistakes and improve its performance over time.
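
To make the self-attention module concrete, here is a minimal pure-Python sketch of single-head scaled dot-product attention, $\mathrm{softmax}(QK^\top/\sqrt{d_k})V$, as defined in the Transformer paper [197]. It omits the learned query/key/value projection matrices, causal masking, and multiple heads. Setting $Q = K = V$ to the raw input vectors is a simplifying assumption for illustration only.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(xs):
    """Single-head scaled dot-product attention with Q = K = V = xs (no learned weights)."""
    d_k = len(xs[0])
    outputs = []
    for q in xs:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in xs]
        weights = softmax(scores)
        # Each output is the attention-weighted average of all value vectors.
        outputs.append([sum(w * v[j] for w, v in zip(weights, xs)) for j in range(d_k)])
    return outputs


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in self_attention(tokens):
    print([round(x, 3) for x in row])
```

Every position attends directly to every other position, and no step depends on the previous position's output. As a result, all rows can be computed in parallel (the loop here is sequential only for readability), which is the property behind the parallelization and long-range dependencies mentioned above.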

Fig. 2. Trend of LLMs evaluation papers over time (2020 - Jun. 2023, including Jul. 2023.).

In an autoregressive language model, such as GPT-3 and PaLM [24], given a context sequence $X$, the LM task is to predict the next token $y$. The model is trained by maximizing the probability of the given token conditioned on the context, i.e., $P(y|X)=P(y|x_{1},x_{2},...,x_{t-1})$, where $x_{1},x_{2},...,x_{t-1}$ are the tokens in the context sequence and $t$ is the current position. By the chain rule, the probability of a complete sequence $x_{1},x_{2},...,x_{T}$ can be decomposed into a product of these next-token probabilities at each position:

$$
P(x_{1},x_{2},...,x_{T})=\prod_{t=1}^{T}P(x_{t}|x_{1},x_{2},...,x_{t-1}),
$$

where $T$ is the sequence length (for $t=1$ the conditioning context is empty). In this way, the model predicts the token at each position autoregressively, conditioning on all previous tokens, to generate a complete text sequence.
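
The chain-rule factorization above is what an evaluator uses to score a sequence. The log-probability of the whole sequence is the sum of per-position conditional log-probabilities, and generation repeatedly picks a next token given the prefix. The sketch below uses a hand-written toy conditional distribution, `next_token_probs`, which is a hypothetical stand-in for a real model, together with greedy decoding. Real LLMs condition on the full prefix, use far larger vocabularies, and often decode by sampling or beam search instead.

```python
import math


def next_token_probs(prefix):
    """Hypothetical toy model: P(next token | prefix), looking only at the last token."""
    table = {
        None: {"the": 0.6, "a": 0.4},
        "the": {"cat": 0.7, "dog": 0.3},
        "a": {"cat": 0.5, "dog": 0.5},
        "cat": {"sat": 0.9, "<eos>": 0.1},
        "dog": {"sat": 0.8, "<eos>": 0.2},
        "sat": {"<eos>": 1.0},
    }
    last = prefix[-1] if prefix else None
    return table[last]


def sequence_log_prob(tokens):
    """Chain rule: log P(x_1..x_T) = sum over t of log P(x_t | x_1..x_{t-1})."""
    return sum(math.log(next_token_probs(tokens[:t])[tok]) for t, tok in enumerate(tokens))


def greedy_generate(max_len=10):
    """Autoregressive decoding: append the most probable next token until <eos>."""
    out = []
    while len(out) < max_len:
        probs = next_token_probs(out)
        tok = max(probs, key=probs.get)
        out.append(tok)
        if tok == "<eos>":
            break
    return out


print(greedy_generate())  # ['the', 'cat', 'sat', '<eos>']
print(math.exp(sequence_log_prob(["the", "cat", "sat", "<eos>"])))  # ≈ 0.378 = 0.6 * 0.7 * 0.9 * 1.0
```

Summing log-probabilities instead of multiplying raw probabilities avoids numerical underflow on long sequences.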

One common approach to interacting with LLMs is prompt engineering [26, 222, 263], where users design and provide specific prompt texts to guide LLMs in generating desired responses or completing specific tasks. This is widely adopted in existing evaluation efforts. People can also engage in question-and-answer interactions [83], where they pose questions to the model and receive answers, or engage in dialogue interactions, having natural language conversations with LLMs. In conclusion, LLMs, with their Transformer architecture, in-context learning, and RLHF capabilities, have revolutionized NLP and hold promise in various applications. Table 1 provides a brief comparison of traditional ML, deep learning, and LLMs.
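
In prompt-based evaluation, a task is often posed by placing a few labeled demonstrations in the prompt (in-context learning), followed by the unlabeled test input. A minimal sketch of such a prompt builder follows. The template wording, the `Input:`/`Output:` labels, and the function name are illustrative assumptions rather than a format prescribed by any cited benchmark.

```python
def build_few_shot_prompt(instruction, demonstrations, query):
    """Assemble an instruction, k labeled demonstrations, and an unlabeled query."""
    parts = [instruction.strip(), ""]
    for text, label in demonstrations:
        parts.append(f"Input: {text}")
        parts.append(f"Output: {label}")
        parts.append("")
    parts.append(f"Input: {query}")
    parts.append("Output:")  # the model is expected to continue from here
    return "\n".join(parts)


prompt = build_few_shot_prompt(
    "Classify the sentiment of each input as positive or negative.",
    [("I loved this film.", "positive"), ("The plot was a mess.", "negative")],
    "The acting was superb.",
)
print(prompt)
```

The template is itself an experimental variable. Changing the instruction wording, the labels, or the order of demonstrations can change measured performance, so evaluations typically report the exact prompt used.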

Chang et al.

Table 1. Comparison of Traditional ML, Deep Learning, and LLMs

| Comparison | Traditional ML | Deep Learning | LLMs |
|---|---|---|---|
| Training Data Size | Large | Large | Very Large |
| Feature Engineering | Manual | Automatic | Automatic |
| Model Complexity | Limited | Complex | Very Complex |
| Interpretability | Good | Poor | Poorer |
| Performance | Moderate | High | Highest |
| Hardware Requirements | Low | High | Very High |

Fig. 3. The evaluation process of AI models.

2.2 AI Model Evaluation

AI model evaluation is an essential step in assessing the performance of a model. Standard model evaluation protocols include $k$-fold cross-validation, holdout validation, leave-one-out cross-validation (LOOCV), bootstrap, and reduced set [8, 95]. For instance, $k$-fold cross-validation divides the dataset into $k$ parts. In each of $k$ rounds, one part serves as the test set and the remaining $k-1$ parts as the training set, so every sample is tested exactly once. This reduces the amount of data withheld from training and yields a relatively more accurate estimate of model performance [48]. Holdout validation splits the dataset once into training and test sets, which requires less computation but may introduce a larger bias. LOOCV is a special case of $k$-fold cross-validation in which $k$ equals the number of samples, so each test set contains a single data point [223]. Bootstrap repeatedly samples the dataset with replacement to form training sets and evaluates on the samples that were not drawn. Reduced set trains the model on one subset and tests it on the remaining data, which is computationally simple but of limited applicability. The appropriate method should be chosen according to the specific problem and data characteristics to obtain more reliable performance indicators.
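
The index bookkeeping behind these protocols is short enough to sketch directly. The following standard-library Python produces train/test index splits for four cases:

* $k$-fold cross-validation;
* LOOCV, as the special case $k = n$;
* a single holdout split;
* one bootstrap round that tests on the out-of-bag samples.

The function names, the shuffling step, and the fixed seeds are assumptions made for illustration and reproducibility. The reduced-set method is omitted.

```python
import random


def k_fold_splits(n, k, seed=0):
    """Yield (train_idx, test_idx) for k-fold CV; each index is tested exactly once."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]  # k nearly equal-sized folds
    for i in range(k):
        test = folds[i]
        train = [j for fold in folds[:i] + folds[i + 1:] for j in fold]
        yield train, test


def loocv_splits(n):
    """Leave-one-out CV is k-fold CV with k = n (one sample per test fold)."""
    return k_fold_splits(n, n)


def holdout_split(n, test_fraction=0.2, seed=0):
    """Single train/test split: cheap, but the estimate depends on one partition."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    cut = int(n * (1 - test_fraction))
    return idx[:cut], idx[cut:]


def bootstrap_split(n, seed=0):
    """Draw n training indices with replacement; test on the out-of-bag remainder."""
    rng = random.Random(seed)
    train = [rng.randrange(n) for _ in range(n)]
    test = sorted(set(range(n)) - set(train))
    return train, test


for train, test in k_fold_splits(10, 5):
    print(len(train), len(test))  # prints "8 2" five times
```

Each $k$-fold or LOOCV split implies retraining the model, so the cost grows with $k$. This is the practical reason these protocols become impractical for very large models.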

Figure 3 illustrates the evaluation process of AI models, including LLMs. Some of these protocols may be infeasible for deep learning models because of the large training size, which makes retraining the model for every fold or resample prohibitively expensive. Thus, evaluation on a static validation set has long been the standard choice for deep learning models. For instance, computer vision models are evaluated on static test sets such as ImageNet [33] and MS COCO [120], and LLMs commonly use GLUE [200] or SuperGLUE [199] as test sets.

As LLMs become more popular yet even less interpretable, existing evaluation protocols may not be enough to thoroughly evaluate their true capabilities. We introduce recent evaluations of LLMs in Sec. 5.

3 WHAT TO EVALUATE

What tasks should we evaluate LLMs to show their performance? On what tasks can we claim the strengths and weaknesses of LLMs? In this section, we divide existing tasks into the following categories: natural language processing, robustness, ethics, biases and trustworthiness, social sciences, natural science and engineering, medical applications, agent applications (using LLMs as agents), and other applications.

3.1 Natural Language Processing Tasks

The initial objective behind the development of language models, particularly large language models, was to enhance performance on natural language processing tasks, encompassing both understanding and generation. Consequently, most evaluation research has focused on natural language tasks. Table 2 summarizes the evaluation aspects of existing research, and we highlight their main conclusions in the following.²

3.1.1 Natural language understanding. Natural language understanding represents a wide spectrum of tasks that aims to obtain a better understanding of the input sequence. We summarize recent efforts in LLMs evaluation from several aspects.

Sentiment analysis is the task of analyzing and interpreting text to determine its emotional inclination. It is typically formulated as a binary (positive and negative) or three-class (positive, neutral, and negative) classification problem. Evaluating sentiment analysis is a popular direction. Liang et al. [114] and Zeng et al. [243] showed that models usually perform well on this task. ChatGPT's sentiment analysis performance is superior to that of traditional sentiment analysis methods [129] and comes close to that of GPT-3.5 [159]. In fine-grained sentiment and emotion cause analysis, ChatGPT also exhibits exceptional performance [218]. In low-resource learning settings, LLMs exhibit significant advantages over small language models [251], but ChatGPT's ability to understand low-resource languages is limited [6]. In conclusion, LLMs have demonstrated commendable performance in sentiment analysis tasks. Future work should focus on enhancing their capability to understand emotions in under-resourced languages.
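
For the three-class formulation, accuracy alone can hide poor performance on a minority class, so macro-averaged F1 is a common companion metric. The sketch below computes both. It assumes that gold and predicted labels have already been mapped to the same label set, for example after parsing an LLM's free-text answer. The label names and data are made-up illustrative values.

```python
def accuracy(gold, pred):
    """Fraction of predictions that exactly match the gold label."""
    return sum(g == p for g, p in zip(gold, pred)) / len(gold)


def macro_f1(gold, pred, labels):
    """Unweighted mean of per-class F1 scores (a class never predicted gets F1 = 0)."""
    scores = []
    for c in labels:
        tp = sum(g == c and p == c for g, p in zip(gold, pred))
        fp = sum(g != c and p == c for g, p in zip(gold, pred))
        fn = sum(g == c and p != c for g, p in zip(gold, pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        scores.append(f1)
    return sum(scores) / len(scores)


gold = ["pos", "neg", "neu", "pos", "neg", "neu"]
pred = ["pos", "neg", "pos", "pos", "neg", "pos"]  # the model never predicts "neu"
print(accuracy(gold, pred))                         # 4/6 ≈ 0.667
print(macro_f1(gold, pred, ["pos", "neg", "neu"]))  # (2/3 + 1 + 0) / 3 ≈ 0.556
```

Here the model ignores the neutral class entirely. Accuracy still looks moderate, but macro-F1 drops noticeably because the neutral class contributes an F1 of zero.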

Text classification and sentiment analysis are related fields, but text classification is not limited to sentiment: it covers the classification of all kinds of texts for many tasks. The work of Liang et al. [114] showed that GLM-130B was the best-performing model, with an overall accuracy of $85.8\%$ for miscellaneous text classification. Yang and Menczer [233] found that ChatGPT can produce credibility ratings for a wide range of news outlets, and that these ratings correlate moderately with those of human experts. Furthermore, ChatGPT achieves acceptable accuracy in a binary classification scenario ($\mathrm{AUC}=0.89$). Peña et al. [154] discussed topic classification for public affairs documents and showed that combining an LLM backbone with SVM classifiers is a useful strategy for multi-label topic classification in the public affairs domain, with accuracies over $85\%$. Overall, LLMs perform well on text classification and can even handle text classification tasks in unconventional problem settings.
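
The AUC reported for the binary credibility task is the area under the ROC curve. It equals the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one, with ties counted as one half. That definition gives the simple quadratic-time sketch below. The labels and scores are made-up illustrative values, not data from [233].

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly; ties count 0.5."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.5, 0.3, 0.1]
print(roc_auc(labels, scores))  # 8/9 ≈ 0.889
```

Unlike accuracy, AUC does not depend on choosing a decision threshold. It therefore suits graded outputs such as credibility ratings, where only the ranking of items matters.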

Natural language inference (NLI) is the task of determining whether a given “hypothesis” logically follows from a “premise”. Qin et al. [159] showed that ChatGPT outperforms GPT-3.5 on NLI tasks. They also found that ChatGPT excels at handling factual input, which could be attributed to its RLHF training process favoring human feedback. However, Lee et al. [105] observed that LLMs perform poorly on NLI and further fail to represent human disagreement, indicating that LLMs still have considerable room for improvement in this area.

Semantic understanding refers to understanding the meaning of language and its associated concepts. It involves interpreting and comprehending words, phrases, sentences, and the relationships between them. Semantic processing goes beyond the surface level and focuses on understanding the underlying meaning and intent. Tao et al. [184] comprehensively evaluated the event semantic processing abilities of LLMs, covering understanding, reasoning, and prediction of event semantics. The results indicated that LLMs understand individual events, but their capacity to perceive semantic similarity among events is constrained. In reasoning tasks, LLMs exhibit robust reasoning abilities for causal and intentional relations, yet their performance on other relation types is comparatively weaker. In prediction tasks, LLMs predict future events better when given more contextual information. Riccardi and Desai [166] explored the semantic proficiency of LLMs and showed that these models perform poorly in evaluating basic phrases. Furthermore, GPT-3.5 and Bard cannot distinguish between meaningful and nonsense phrases, consistently classifying highly nonsensical phrases as meaningful. GPT-4 shows significant improvements, but its performance is still significantly lower than that of humans. In summary, LLMs perform poorly on semantic understanding tasks, and future work could focus on improving their performance in this area.

Table 2. Summary of evaluation on natural language processing tasks: NLU (Natural Language Understanding, including SA (Sentiment Analysis), TC (Text Classification), NLI (Natural Language Inference), and other NLU tasks), RNG. (Reasoning), NLG (Natural Language Generation, including Summ. (Summarization), Dlg. (Dialogue), Tran. (Translation), QA (Question Answering), and other NLG tasks), and Mul. (Multilingual tasks), ordered by the name of the first author.

| Reference | NLU: SA | NLU: TC | NLU: NLI | NLU: Others | RNG. | NLG: Summ. | NLG: Dlg. | NLG: Tran. | NLG: QA | NLG: Others | Mul. |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Abdelali et al. [1] | | | | | | | | | | | |
| Ahuja et al. [2] | | | | | | | | | | | |
| Bian et al. [9] | √ | √ | | | | | | | | | |
| Bang et al. [6] | √ | √ | √ | √ | √ | √ | | | | | |
| Bai et al. [5] | √ | | | | | | | | | | |
| Chen et al. [20] | | | | | | | | | | | |
| Choi et al. [23] | √ | | | | | | | | | | |
| Chia et al. [22] | √ | | | | | | | | | | |
| Frieder et al. [45] | √ | | | | | | | | | | |
| Fu et al. [47] | | | | | | | | | | | |
| Gekhman et al. [55] | | | | | | | | | | | |
| Gendron et al. [56] | | | | | | | | | | | |
| Honovich et al. [74] | √ | √ | √ | √ | | | | | | | |
| Jiang et al. [86] | | | | | | | | | | | |
| Lai et al. [100] | √ | | | | | | | | | | |
| Laskar et al. [102] | √ | √ | √ | √ | √ | √ | | | | | |
| Lopez-Lira and Tang [129] | √ | | | | | | | | | | |
| Liang et al. [114] | √ | √ | | | | | | | | | |
| Lee et al. [105] | √ | | | | | | | | | | |
| Lin and Chen [121] | √ | | | | | | | | | | |
| Liévin et al. [117] | √ | | | | | | | | | | |
| Liu et al. [124] | | | | | | | | | | | |
| Lyu et al. [130] | √ | | | | | | | | | | |
| Manakul et al. [133] | √ | | | | | | | | | | |
| Min et al. [138] | | | | | | | | | | | |
| Orrù et al. [147] | √ | | | | | | | | | | |
| Pan et al. [151] | √ | | | | | | | | | | |
| Peña et al. [154] | √ | | | | | | | | | | |
| Pu and Demberg [158] | √ | √ | | | | | | | | | |
| Pezeshkpour [156] | √ | | | | | | | | | | |
| Qin et al. [159] | √ | √ | √ | √ | √ | √ | | | | | |
| Riccardi and Desai [166] | √ | | | | | | | | | | |
| Saparov et al. [170] | √ | | | | | | | | | | |
| Tao et al. [184] | | | | | | | | | | | |
| Wang et al. [208] | | | | | | | | | | | |

J. ACM, Vol. 37, No. 4, Article 111. Publication date: August 2018.

In social knowledge understanding, Choi et al. [23] evaluated how well models learn and recognize concepts of social knowledge. The results revealed that, despite having far fewer parameters, fine-tuned supervised models such as BERT perform much better than state-of-the-art LLMs such as GPT [162] and GPT-J-6B [202] used in a zero-shot setting. This shows that supervised models can significantly outperform zero-shot models here, and that more parameters do not necessarily guarantee a higher level of social knowledge in this particular scenario.

3.1.2 Reasoning. The task of reasoning poses significant challenges for an intelligent AI model. To effectively tackle reasoning tasks, the models need to not only comprehend the provided information but also utilize reasoning and inference to deduce answers when explicit responses are absent. Table 2 reveals that there is a growing interest in evaluating the reasoning ability of LLMs, as evidenced by the increasing number of articles focusing on exploring this aspect. Currently, the evaluation of reasoning tasks can be broadly categorized into mathematical reasoning, commonsense reasoning, logical reasoning, and domain-specific reasoning.

ChatGPT exhibits a strong capability for arithmetic reasoning, outperforming GPT-3.5 in the majority of tasks [159]. However, its proficiency in mathematical reasoning still requires improvement [6, 45, 265]. On symbolic reasoning tasks, ChatGPT is mostly worse than GPT-3.5, possibly because ChatGPT is prone to uncertain responses [6]. Based on the poor performance of LLMs on counterfactual task variants, Wu et al. [227] showed that current LLMs have certain limitations in abstract reasoning ability. On abstract reasoning, Gendron et al. [56] likewise found that existing LLMs have very limited ability. In logical reasoning, Liu et al. [124] indicated that ChatGPT and GPT-4 outperform traditional fine-tuning methods on most benchmarks, demonstrating their strength in logical reasoning; however, both models face challenges when handling new and out-of-distribution data. ChatGPT does not perform as well as other LLMs, including GPT-3.5 and Bard [159, 229], which may be because ChatGPT is designed explicitly for chatting and therefore prioritizes maintaining rationality. FLAN-T5, LLaMA, GPT-3.5, and PaLM perform well in general deductive reasoning tasks [170]. GPT-3.5 struggles to stay on track when reasoning in the inductive setting [229]. For multi-step reasoning, Fu et al. [47] showed that PaLM and Claude2 are the only two model families that achieve performance similar to (but still worse than) the GPT model family. Moreover, LLaMA-65B is the most robust open-source LLM to date, performing close to code-davinci-002. Some papers separately evaluate ChatGPT on individual reasoning tasks. ChatGPT generally performs poorly on commonsense reasoning tasks, though it does relatively better there than on non-textual semantic reasoning [6]. It also lacks spatial reasoning ability but exhibits better temporal reasoning. Finally, while ChatGPT's performance is acceptable on causal and analogical reasoning, it performs poorly on multi-hop reasoning, which mirrors the weakness of other LLMs on complex reasoning [148]. In professional domain reasoning, zero-shot InstructGPT and Codex are capable of complex medical reasoning tasks but still need further improvement [117]. On verbal insight problems, Orrù et al. [147] demonstrated the potential of ChatGPT, whose performance was comparable to that of human participants. Note that most of the above conclusions are drawn from specific datasets. More complex tasks, such as mathematical reasoning [226, 237, 244] and structured data inference [86, 151], have since become mainstream benchmarks for assessing the capabilities of LLMs. Overall, LLMs show great potential in reasoning and a trend of continuous improvement, but they still face many challenges and limitations that call for more in-depth research and optimization.

3.1.3 Natural language generation. Natural language generation (NLG) evaluation assesses the ability of LLMs to generate specific texts and covers several tasks, including summarization, dialogue generation, machine translation, question answering, and other open-ended generation tasks.

Summarization is a generation task that aims to produce a concise summary of a given text. Liang et al. [114] found that TNLG v2 (530B) [179] achieved the highest score in both evaluated summarization scenarios, followed by OPT (175B) [247]. Fine-tuned BART [106] still outperforms zero-shot ChatGPT. Specifically, ChatGPT's zero-shot performance is comparable to that of text-davinci-002 [6] but worse than that of GPT-3.5 [159]. These findings indicate that LLMs, particularly ChatGPT, deliver only moderate performance on summarization tasks.

Evaluating LLMs on dialogue tasks is crucial for developing dialogue systems and improving human-computer interaction. Such evaluation can guide improvements in a model's natural language processing, context understanding, and generation abilities, leading to more intelligent and natural dialogue systems. Both Claude and ChatGPT generally outperform GPT-3.5 across all evaluation dimensions [121, 159]. Compared with each other, Claude and ChatGPT are competitive across dimensions, with Claude slightly outperforming ChatGPT in specific configurations. Bang et al. [6] showed that fully fine-tuned, task-specific models surpass ChatGPT in both task-oriented and knowledge-based dialogue. Additionally, Zheng et al. [259] curated LMSYS-Chat-1M, a large-scale dataset of one million real-world conversations with LLMs, which serves as a valuable resource for evaluating and advancing dialogue systems.

Although LLMs are not explicitly trained for translation, they can still translate well. Wang et al. [208] showed that ChatGPT and GPT-4 outperform commercial machine translation (MT) systems in human evaluation, and that they also outperform most document-level neural machine translation (NMT) methods in terms of sacreBLEU scores. On contrastive tests, however, ChatGPT is less accurate than traditional translation models, while GPT-4 demonstrates a strong ability to explain discourse knowledge, even though it occasionally selects incorrect translation candidates. Bang et al. [6] found that ChatGPT translates well from other languages into English (X→Eng) but is still weak at translating from English into other languages (Eng→X). Lyu et al. [130] explored several research directions for MT with LLMs, highlighting the potential of LLMs to enhance translation capabilities. In summary, while LLMs perform satisfactorily on several translation tasks, there is still room for improvement, e.g., in translating from English into non-English languages.
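
sacreBLEU reports BLEU with standardized tokenization. As a rough illustration of what such a score measures, the sketch below computes a simplified corpus-level BLEU: clipped n-gram precision up to 4-grams, combined by a geometric mean and multiplied by a brevity penalty. It assumes whitespace tokenization, a single reference per sentence, and no smoothing, so its numbers will not match sacreBLEU exactly; the function names are illustrative.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Return a Counter of all n-grams (as tuples) in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def corpus_bleu(hypotheses, references, max_n=4):
    """Simplified corpus BLEU with one reference per hypothesis.

    Assumes whitespace tokenization and no smoothing, so it will not
    reproduce sacreBLEU's standardized scores exactly.
    """
    matches = [0] * max_n  # clipped n-gram matches per order
    totals = [0] * max_n   # hypothesis n-gram counts per order
    hyp_len = ref_len = 0
    for hyp, ref in zip(hypotheses, references):
        h, r = hyp.split(), ref.split()
        hyp_len += len(h)
        ref_len += len(r)
        for n in range(1, max_n + 1):
            h_counts, r_counts = ngrams(h, n), ngrams(r, n)
            # Clip each hypothesis n-gram count by its count in the reference.
            matches[n - 1] += sum(min(c, r_counts[g]) for g, c in h_counts.items())
            totals[n - 1] += sum(h_counts.values())
    if min(matches) == 0:
        return 0.0  # without smoothing, any zero precision makes BLEU zero
    # Geometric mean of the n-gram precisions, computed in log space.
    log_precision = sum(math.log(m / t) for m, t in zip(matches, totals)) / max_n
    # Brevity penalty: penalize output that is shorter than the references.
    bp = 1.0 if hyp_len > ref_len else math.exp(1 - ref_len / hyp_len)
    return 100 * bp * math.exp(log_precision)
```

For example, a hypothesis identical to its reference scores 100, while one that drops the final word keeps perfect n-gram precision but is scaled down by the brevity penalty.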

Question answering (QA) is a key technology in human-computer interaction, widely applied in search engines, intelligent customer service, and QA systems, so measuring the accuracy and efficiency of QA models has significant implications for these applications. According to Liang et al. [114], InstructGPT davinci v2 (175B) achieved the highest accuracy, robustness, and fairness across the 9 QA scenarios among all evaluated models. Both GPT-3.5 and ChatGPT show significant advances over GPT-3 in answering general knowledge questions. In most domains, ChatGPT outperforms GPT-3.5 by more than 2% [9, 159]. However, ChatGPT performs slightly worse than GPT-3.5 on the CommonsenseQA and Social IQA benchmarks, which can be attributed to its cautious nature: it tends to decline to answer when the available information is insufficient. Fine-tuned models such as Vicuna and ChatGPT achieve near-perfect scores, surpassing models without supervised fine-tuning by a significant margin [5, 6]. Laskar et al. [102] evaluated ChatGPT on a range of academic datasets covering tasks such as question answering, text summarization, code generation, commonsense reasoning, math problem solving, translation, bias detection, and ethical issues. Overall, LLMs perform strongly on QA tasks and have the potential to further improve their command of social, event, and temporal commonsense knowledge.

There are also other generation tasks to explore. In sentence style transfer, Pu and Demberg [158] showed that ChatGPT, given few-shot examples drawn from the same training subset, surpasses the previous state-of-the-art (SOTA) supervised model, as reflected by a higher BLEU score. However, in controlling the formality of sentence style, ChatGPT's behavior still differs significantly from that of humans. In writing tasks, Chia et al. [22] found that LLMs perform consistently across categories such as informative, professional, argumentative, and creative writing, suggesting a general writing proficiency. For assessing text generation quality, Chen et al. [20] showed that ChatGPT can evaluate text quality from multiple angles even without reference texts, outperforming most existing automated metrics. Among the methods studied, prompting ChatGPT to produce numerical scores for text quality proved the most reliable and effective.
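
The reference-free numerical scoring approach described above can be sketched as a prompt plus a parser. This is a minimal sketch under stated assumptions: `PROMPT`, `score_text`, and the `query_llm` callable are hypothetical placeholders (any function that sends a prompt to a model and returns its reply), and the wording does not reproduce the prompts used by Chen et al.

```python
import re
from typing import Callable, Optional

# Hypothetical prompt template; not the exact wording used in the study.
PROMPT = (
    "Score the {aspect} of the following text on a scale from 1 to {max_score}, "
    "where {max_score} is best. Reply with a number only.\n\nText: {text}\nScore:"
)


def score_text(text: str, query_llm: Callable[[str], str],
               aspect: str = "fluency", max_score: int = 10) -> Optional[float]:
    """Ask an LLM for a reference-free numeric quality score.

    `query_llm` is a placeholder for whatever client sends the prompt to a
    model. Returns None when the reply contains no number in [1, max_score].
    """
    reply = query_llm(PROMPT.format(aspect=aspect, max_score=max_score, text=text))
    # Take the first integer or decimal number that appears in the reply.
    match = re.search(r"\d+(?:\.\d+)?", reply)
    if match is None:
        return None
    score = float(match.group())
    return score if 1 <= score <= max_score else None
```

Returning `None` for unparseable or out-of-range replies, rather than guessing, keeps malformed judgments out of averaged scores; how to handle them (re-query, discard) is left to the caller.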

3.1.4 Multilingual tasks. Although English is the predominant language, many LLMs are trained on mixed-language data. Multilingual training data helps LLMs process inputs and generate responses in different languages, which has led to their wide adoption across the globe. However, because the technology emerged relatively recently, LLMs have mainly been evaluated on English data, so their multilingual performance risks being overlooked. To address this, several studies have provided comprehensive, open, and independent evaluations of LLMs on various NLP tasks in non-English languages, offering valuable insights for future research and applications.

Abdelali et al. [1] evaluated ChatGPT on standard Arabic NLP tasks and observed that, in the zero-shot setting, it underperforms SOTA models on most tasks. Ahuja et al. [2], Bang et al. [6], Lai et al. [100], and Zhang et al. [250] covered more languages, datasets, and tasks, conducting a more comprehensive evaluation of LLMs including BLOOM, Vicuna, Claude, ChatGPT, and GPT-4. The results indicated that these LLMs perform poorly on languages written in non-Latin scripts and on low-resource languages. Even when the input is translated into English and used as the query, generative LLMs still underperform SOTA models across tasks and languages [2]. Furthermore, Bang et al. [6] highlighted that ChatGPT still struggles to translate sentences in non-Latin-script languages, even those with rich linguistic resources. These results show that LLMs face numerous challenges, and have ample room for improvement, in multilingual tasks. Future research should prioritize multilingual balance and address the challenges of non-Latin-script and low-resource languages to better support users worldwide. At the same time, attention should be paid to linguistic impartiality and neutrality to mitigate potential biases, such as English bias, that could affect multilingual applications.

3.1.5 Factuality. Factuality in the context of LLMs refers to the extent to which the information or answers provided by a model align with real-world truths and verifiable facts. Factuality significantly affects a variety of tasks and downstream applications, such as QA systems, information extraction, text summarization, dialogue systems, and automated fact-checking, where incorrect or inconsistent information can lead to substantial misunderstandings and misinterpretations. Evaluating factuality is therefore essential for trusting and using these models effectively. It covers a model's ability to stay consistent with known facts, to avoid generating misleading or false information (known as "factual hallucination"), and to effectively learn and recall factual knowledge. A range of methodologies have been proposed to measure and improve the factuality of LLMs.

Wang et al. [204] assessed the internal knowledge of several large models, namely InstructGPT, ChatGPT-3.5, GPT-4, and BingChat [137], by examining their ability to answer open questions from the Natural Questions [98] and TriviaQA [88] datasets, with answers judged by human assessors. GPT-4 and BingChat answered more than 80% of the questions correctly, but a gap of over 15% remained to complete accuracy. Honovich et al. [74] reviewed current factual consistency evaluation methods and highlighted the absence of a unified comparison framework, as well as the limited reference value of the related scores compared with binary labels. To address this, they converted existing factual consistency tasks into binary labels, considering only whether the output factually conflicts with the input text, without taking external knowledge into account. They found that methods based on natural language inference (NLI) and on question generation and answering perform best and complement each other. Pezeshkpour [156] proposed a novel information-theoretic metric to assess whether specific knowledge is contained in an LLM. The metric uses the uncertainty of the model's knowledge as a measure of factuality: the LLM fills in prompts, and the probability distribution over its answers is examined. The paper discussed two methods for injecting knowledge into LLMs: explicitly including knowledge in the prompts, and implicitly fine-tuning the LLMs on knowledge-related data. The proposed metric outperformed traditional ranking methods, with an accuracy improvement of over 30%. Gekhman et al. [55] improved the evaluation of factual consistency in summarization tasks. They trained student NLI models on summaries generated by multiple models and annotated by LLMs for factual consistency, and then used the trained student model to evaluate the factual consistency of summaries. Manakul et al. [133] built on two hypotheses about how LLMs generate factual versus hallucinated responses. They proposed three variants (based on BERTScore [249], MQAG [134], and n-gram models) to evaluate factuality, and used proxy LLMs to obtain token probabilities when the evaluated model is a black box. They found that simply computing sentence likelihood or entropy helped validate the factuality of responses. Min et al. [138] broke text generated by LLMs into individual "atomic" facts and evaluated each for correctness, yielding FActScore, the fraction of atomic facts supported by a knowledge source; automatic estimators of this score were assessed with F1 scores. Testing various estimators revealed that current estimators still have some way to go in addressing the task effectively. Lin et al. [119] introduced TruthfulQA, a dataset of questions designed to elicit false answers that mimic common human misconceptions, and tested multiple language models on whether they answer truthfully. The experiments suggest that simply scaling up model size does not necessarily improve truthfulness, and the authors offered recommendations on training approaches. This dataset has become widely used for evaluating the factuality of LLMs [89, 146, 192, 220].
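
Two of the scoring ideas above can be sketched in a few lines. `factscore` computes the supported-fact fraction for one generation, assuming the atomic facts have already been extracted and each has been labeled supported or not (extraction and verification are outside this sketch). `sentence_uncertainty` computes likelihood- and entropy-based signals in the spirit of Manakul et al., assuming each generation step's predictive distribution is available as a dictionary (for example, top-k token probabilities) that contains the generated token. Both function names are illustrative, not the papers' code.

```python
import math
from typing import Dict, List, Sequence


def factscore(fact_supported: Sequence[bool]) -> float:
    """Fraction of a generation's atomic facts judged supported by a
    knowledge source. Fact extraction and verification are assumed done."""
    if not fact_supported:
        raise ValueError("generation has no atomic facts")
    return sum(fact_supported) / len(fact_supported)


def sentence_uncertainty(token_dists: List[Dict[str, float]],
                         tokens: List[str]) -> Dict[str, float]:
    """Grey-box hallucination signals for one generated sentence.

    token_dists[i] is the (assumed available) predictive distribution at
    step i and must contain tokens[i]. Higher values suggest the sentence
    is more likely to be hallucinated.
    """
    # Negative log-probability of each generated token.
    neg_logp = [-math.log(dist[tok]) for dist, tok in zip(token_dists, tokens)]
    # Entropy of each step's predictive distribution.
    entropies = [-sum(p * math.log(p) for p in dist.values() if p > 0)
                 for dist in token_dists]
    return {
        "avg_neg_logp": sum(neg_logp) / len(neg_logp),
        "max_neg_logp": max(neg_logp),
        "avg_entropy": sum(entropies) / len(entropies),
    }
```

For instance, a generation whose four atomic facts are labeled `[True, True, False, True]` gets a score of 0.75, and a sentence generated with high token probabilities yields lower `avg_neg_logp` than one generated with low probabilities.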

Table 3. Summary of LLM evaluations on robustness, ethics, biases, and trustworthiness (ordered by the name of the first author).

| Reference | Robustness | Ethics and biases | Trustworthiness |
|---|---|---|---|
| Cao et al. [16] | | | |
| Dhamala et al. [37] | | | |
| Deshpande et al. [35] | | | |
| Ferrara [42] | | | |
| Gehman et al. [53] | | | |
| Hartmann et al. [65] | | | |
| Hendrycks et al. [69] | | | |
| Hagendorff and Fabi [62] | √ | | |
| Li et al. [111] | √ | | |
| Liu et al. [123] | | | |
| Liu et al. [123] | | | |
| Li et al. [113] | | | |
| Parrish et al. [153] | | | |
| Rutinowski et al. [167] | √ | | |
| Rawte et al. [163] | √ | | |
| Sheng et al. [175] | | | |
| Simmons [176] | | | |
| Wang et al. [207] | √ | | |
| Wang et al. [206] | | | |
| Wang et al. [201] | √ | √ | |
| Wang et al. [209] | | | |
| Xie et al. [228] | | | |
| Yang et al. [234] | | | |
| Zhao et al. [258] | √ | | |
| Zhuo et al. [267] | √ | | |
| Zhu et al. [264] | | | |
| Zhuo et al. [266] | | | |
| Zhang et al. [253] | √ | | |

3.2 Robustness, Ethics, Bias, and Trustworthiness

Evaluation in this area covers the crucial aspects of robustness, ethics, biases, and trustworthiness, which have become increasingly important for assessing LLMs comprehensively.

3.2.1 Robustness. Robustness studies the stability of a system when facing unexpected inputs. Specifically, out-of-distribution (OOD) [207] and adversarial robustness are two popular research topics. Wang et al. [206] is an early work that evaluated ChatGPT and other LLMs from both the adversarial and OOD perspectives using existing benchmarks such as the AdvGLUE [203], ANLI [140], and DDXPlus [41] datasets. Zhuo et al. [267] evaluated the robustness of semantic parsing. Yang et al. [234] evaluated OOD robustness by extending the GLUE [200] dataset. For vision-language models, Zhao et al. [258] crafted adversarial visual inputs and transferred them to other vision-language models, revealing the vulnerability of visual input; these results emphasize the potential risks to overall system security posed by manipulated visual input. Li et al. [111] provided an overview of OOD evaluation for language models covering adversarial robustness, domain generalization, and dataset biases. Bridging these lines of research, the authors conducted a comparative analysis that unifies the three approaches, succinctly outlining the data-generation processes and evaluation protocols for each while emphasizing prevailing challenges and future research prospects. Additionally, Liu et al. [123] introduced a large-scale robust visual instruction dataset to improve how large multimodal models handle relevant images and human instructions.

For adversarial robustness, Zhu et al. [264] proposed PromptBench, a unified benchmark for evaluating the robustness of LLMs to prompts. They comprehensively evaluated adversarial text attacks at multiple levels (character, word, sentence, and semantic). The results showed that contemporary LLMs are vulnerable to adversarial prompts, underscoring the importance of robustness to adversarial inputs. As for new adversarial datasets, Wang et al. [201] introduced the AdvGLUE++ benchmark for assessing adversarial robustness and implemented a new evaluation protocol that scrutinizes machine ethics via jailbreaking system prompts.
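
Prompt-robustness benchmarks of this kind compare task performance under a clean prompt and under an attacked prompt. The sketch below, assuming a model callable that takes `(prompt, input)` and returns a label, computes the relative drop as 1 minus adversarial accuracy over clean accuracy (PromptBench reports a performance drop rate of this form). `char_swap_attack` is a hypothetical toy character-level perturbation for illustration only, not one of the attacks PromptBench actually uses.

```python
import random
from typing import Callable, List, Tuple

Model = Callable[[str, str], str]  # (prompt, input text) -> predicted label


def char_swap_attack(prompt: str, n_swaps: int = 2, seed: int = 0) -> str:
    """Toy character-level attack: swap two adjacent inner characters in a
    few randomly chosen words, keeping each word's first and last characters."""
    rng = random.Random(seed)
    words = prompt.split()
    candidates = [i for i, w in enumerate(words) if len(w) > 3]
    for i in rng.sample(candidates, min(n_swaps, len(candidates))):
        w = words[i]
        j = rng.randrange(1, len(w) - 2)  # j + 1 never reaches the last char
        words[i] = w[:j] + w[j + 1] + w[j] + w[j + 2:]
    return " ".join(words)


def accuracy(model: Model, prompt: str, dataset: List[Tuple[str, str]]) -> float:
    """Fraction of (input, label) pairs the model answers correctly."""
    return sum(model(prompt, x) == y for x, y in dataset) / len(dataset)


def performance_drop_rate(model: Model, clean_prompt: str, adv_prompt: str,
                          dataset: List[Tuple[str, str]]) -> float:
    """1 - acc(adversarial prompt) / acc(clean prompt)."""
    clean = accuracy(model, clean_prompt, dataset)
    if clean == 0:
        raise ValueError("clean accuracy is zero; drop rate is undefined")
    return 1 - accuracy(model, adv_prompt, dataset) / clean
```

A drop rate of 0 means the attack had no effect, and 1 means the attacked prompt wiped out all correct answers; negative values are possible if the perturbed prompt happens to help.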

3.2.2 Ethics and bias. LLMs have been found to internalize, spread, and potentially magnify harmful information present in crawled training corpora, typically toxic language such as offensive content, hate speech, and insults [53], as well as social biases such as stereotypes about people with particular demographic identities (e.g., gender, race, religion, occupation, and ideology) [175]. More recently, Zhuo et al. [266] used conventional test sets and metrics [37, 53, 153] to systematically evaluate ChatGPT's toxicity and social bias, finding that it still produces harmful content to some extent. Going further, Deshpande et al. [35] introduced role-playing into the model and observed up to a 6x increase in generated toxicity; such role-playing also caused biased toxicity towards specific entities. Rather than simply measuring social biases, Ferrara [42] investigated the sources, underlying mechanisms, and ethical consequences of the biases ChatGPT may produce. Beyond social biases, LLMs have also been assessed for political tendency and personality traits [65, 167] using questionnaires such as the Political Compass Test and the MBTI test, revealing a propensity for progressive views and an ENFJ personality type. In addition, LLMs like GPT-3 were found to have moral biases [176] in terms of Moral Foundations Theory [58]. Hendrycks et al. [69] showed that existing LMs have potential in ethical judgment but still need improvement. [256] proposed CHBias, a Chinese conversational bias evaluation dataset, uncovered bias risks in pretrained models, and explored debiasing methods. Moreover, Wang et al. [209] discovered a systematic bias when assessing GPT-4 alignment, and ChatGPT has been observed to exhibit some bias in cultural values [16]. Wang et al. [201] also incorporated an evaluation dataset specifically aimed at gauging stereotype bias, using both targeted and untargeted system prompts. All these ethical issues may pose serious risks, impeding the deployment of LLMs and having a profound negative impact on society.

3.2.3 Trustworthiness. Some work focuses on trustworthiness problems beyond robustness and ethics.³ In their 2023 study DecodingTrust, Wang et al. [201] offered a multifaceted exploration of trustworthiness vulnerabilities in GPT models, especially GPT-3.5 and GPT-4. Their evaluation went beyond typical trustworthiness concerns to cover eight critical aspects: toxicity, stereotype bias, adversarial robustness, out-of-distribution robustness, robustness to adversarial demonstrations, privacy, machine ethics, and fairness. DecodingTrust employs an array of newly constructed scenarios, tasks, and metrics. The study revealed that although GPT-4 often shows improved trustworthiness over GPT-3.5 on standard evaluations, it is also more susceptible to attacks such as jailbreaking prompts, potentially because it follows (misleading) instructions more precisely.

In another study, Hagendorff and Fabi [62] evaluated LLMs with enhanced cognitive abilities and found that these models can avoid common human intuitions and cognitive errors, demonstrating super-rational performance. Using cognitive reflection tests and semantic illusion experiments, the researchers gained insights into the psychological aspects of LLMs, offering new perspectives for evaluating model biases and ethical issues that may not have been previously identified. Furthermore, Xie et al. [228] drew attention to a significant concern: the consistency of LLM judgments diminishes notably when the model faces disturbances such as questioning, negation, or misleading cues, even if its initial judgment was accurate. The study examined various prompting methods designed to mitigate this issue and demonstrated their efficacy.
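
One simple way to quantify the judgment-consistency problem described above is to ask each question, record the answer, append a follow-up disturbance (for example, "Are you sure?"), and count how often initially correct answers change to incorrect ones. The `flip_rate` function below is a hypothetical illustration of that idea, assuming answers are already normalized to comparable strings; it is not necessarily the metric defined by Xie et al.

```python
from typing import Sequence


def flip_rate(initial: Sequence[str], after_challenge: Sequence[str],
              gold: Sequence[str]) -> float:
    """Fraction of initially correct answers that become incorrect after a
    follow-up disturbance (questioning, negation, or a misleading hint).

    Illustrative metric only. Returns 0.0 when no answer was initially
    correct, since there is nothing to flip.
    """
    # Indices of questions the model answered correctly before the challenge.
    correct_first = [i for i, (a, g) in enumerate(zip(initial, gold)) if a == g]
    if not correct_first:
        return 0.0
    flipped = sum(after_challenge[i] != gold[i] for i in correct_first)
    return flipped / len(correct_first)
```

Restricting the denominator to initially correct answers isolates instability from plain inaccuracy: a model that is always wrong cannot score badly on this measure simply by being wrong.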

LLMs can generate coherent and seemingly factual text. However, the generated information can include factual inaccuracies or statements not grounded in reality, a phenomenon known as hallucination [163, 253]. Evaluating hallucination helps improve LLM training methods so as to reduce its occurrence. For evaluating hallucination in large multimodal models, Liu et al. [123] introduced LRV-Instruction, a comprehensive and robust large-scale visual instruction dataset. They proposed GAVIE (GPT4-Assisted Visual Instruction Evaluation) to assess model responses to visual instructions, and their experiments showed that fine-tuning on LRV-Instruction effectively mitigates hallucination. In addition, Li et al. [113] assessed hallucination in large vision-language models (LVLMs) and found experimentally that the distribution of objects in visual instructions significantly affects object hallucination in LVLMs. To improve its assessment, they introduced POPE (Polling-based Object Probing Evaluation), a polling-based query method that provides a better evaluation of object hallucination in LVLMs.
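
A polling-based probe of the kind POPE uses turns object hallucination into a binary classification problem: the model is asked yes/no questions about objects that are and are not in the image. The sketch below assumes the ground-truth object set for an image is already known and draws an equal number of absent objects uniformly at random (POPE also has popular and adversarial sampling settings that choose negatives differently). The question template and function names are illustrative assumptions.

```python
import random
from typing import Dict, List, Sequence, Set, Tuple


def build_pope_queries(gt_objects: Set[str], vocabulary: Sequence[str],
                       seed: int = 0) -> List[Tuple[str, str]]:
    """Balanced yes/no probes for one image: every ground-truth object
    (answer "yes") plus an equal number of randomly sampled absent
    objects (answer "no")."""
    rng = random.Random(seed)
    absent = sorted(o for o in vocabulary if o not in gt_objects)
    negatives = rng.sample(absent, min(len(gt_objects), len(absent)))
    queries = [(f"Is there a {o} in the image?", "yes") for o in sorted(gt_objects)]
    queries += [(f"Is there a {o} in the image?", "no") for o in negatives]
    return queries


def pope_metrics(predictions: Sequence[str], labels: Sequence[str]) -> Dict[str, float]:
    """Accuracy, precision, recall, and F1 with "yes" as the positive
    class, plus the share of "yes" answers (a sign of over-confirmation)."""
    pairs = list(zip(predictions, labels))
    tp = sum(p == "yes" and y == "yes" for p, y in pairs)
    fp = sum(p == "yes" and y == "no" for p, y in pairs)
    fn = sum(p != "yes" and y == "yes" for p, y in pairs)
    correct = sum(p == y for p, y in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": correct / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "yes_ratio": sum(p == "yes" for p in predictions) / len(predictions),
    }
```

For example, a model that answers "yes" to every probe reaches perfect recall but only 50% accuracy on the balanced set, and its yes-ratio of 1.0 exposes the bias.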

3.3 Social Science

Social science involves the study of human society and individual behavior, including economics, sociology, political science, law, and other disciplines. Evaluating the performance of LLMs in social science is important for academic research, policy formulation, and social problem-solving. Such evaluations can help improve the applicability and quality of models in the social sciences, increasing understanding of human societies and promoting social progress.

Wu et al. [224] evaluated the potential use of LLMs in addressing scaling and measurement issues in social science and found that LLMs can generate meaningful responses regarding political ideology and significantly improve text-as-data methods in social science.

In computational social science (CSS), Ziems et al. [269] presented a comprehensive evaluation of LLMs on several CSS tasks. On classification tasks, LLMs achieve their lowest absolute performance on event argument extraction, character tropes, implicit hate, and empathy classification, with accuracy below 40%. These tasks involve either complex structures (event arguments) or subjective expert taxonomies whose semantics differ from those learned during LLM pretraining. Conversely, LLMs perform best on misinformation, stance, and emotion classification. On generation tasks, LLMs often produce explanations that surpass the quality of the gold references provided by crowd workers. In summary, while LLMs can greatly enhance the traditional CSS research pipeline, they cannot completely replace it.

Some studies also evaluate LLMs on legal tasks. The zero-shot performance of LLMs on legal case judgment summarization is mediocre: outputs suffer from incomplete sentences and words, meaningless merged sentences, and more serious errors such as inconsistent and hallucinated information [34]. These results show that LLMs need further improvement before legal experts can rely on them for case judgment summarization. Nay et al. [139] indicated that LLMs perform better when combined with prompting enhancements and the correct legal texts, but do not yet reach the level of expert tax lawyers.


Lastly, within the realm of psychology, Frank [44] adopted an interdisciplinary approach and drew insights from developmental psychology and comparative psychology to explore alternative methods for evaluating the capabilities of LLMs. By integrating different perspectives, researchers can deepen their understanding of the essence of cognition and effectively leverage the potential of advanced technologies such as large language models, while mitigating potential risks.


In conclusion, the utilization of LLMs has significantly benefited individuals in addressing social science-related tasks, leading to improved work efficiency. The outputs produced by LLMs serve as valuable resources for enhancing productivity. However, it is crucial to acknowledge that existing LLMs cannot completely replace human professionals in this domain.


Table 4. Summary of evaluations on natural science and engineering tasks based on three aspects: Mathematics, General science and Engineering (ordered by the name of the first author).

| Reference | Mathematics | General science | Engineering |
|---|---|---|---|
| Arora et al. [3] | √ | √ | |
| Bubeck et al. [15] | √ | | √ |
| Castro Nascimento and Pimentel [18] | | √ | |
| Collins et al. [27] | √ | | |
| Dao and Le [31] | √ | | |
| Guo et al. [61] | | √ | |
| Liu et al. [125] | | | √ |
| Pallagani et al. [150] | | | √ |
| Sridhara et al. [181] | | | √ |
| Valmeekam et al. [194] | | | √ |
| Valmeekam et al. [195] | | | √ |
| Wei et al. [221] | √ | | |
| Wu et al. [225] | √ | | |
| Yuan et al. [241] | √ | | |
| Yu et al. [237] | | | |
| Zhuang et al. [265] | | | √ |


3.4 Natural Science and Engineering


Evaluating the performance of LLMs in natural science and engineering can help guide applications and development in scientific research, technology development, and engineering studies.


3.4.1 Mathematics. On fundamental mathematical problems, most LLMs are proficient at addition and subtraction and have some capability in multiplication. However, they struggle with division, exponentiation, trigonometric functions, and logarithmic functions. In contrast, LLMs handle decimal numbers, negative numbers, and irrational numbers competently [241]. ChatGPT and GPT-4 significantly outperform other models on mathematical tasks [221], with a distinct advantage on large numbers (greater than $10^{12}$) and on complex, lengthy mathematical queries. GPT-4 outperforms ChatGPT with an accuracy gain of 10 percentage points and a 50% reduction in relative error. This is owing to its stronger division and trigonometry abilities, its correct understanding of irrational numbers, and its consistent step-by-step calculation of long expressions.
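The accuracy and relative-error figures above can be made concrete with a small scoring sketch. For illustration only, it assumes that an answer counts as correct when it matches the reference within a tiny tolerance, and that relative error is |pred - gold| / max(|gold|, 1), capped at 10. These choices are hypothetical and are not taken from [241] or [221].

```python
"""Minimal sketch: scoring arithmetic answers by accuracy and relative error.

Assumption (hypothetical): relative error is |pred - gold| / max(|gold|, 1),
capped at 10; the floor and the cap are illustrative choices.
"""
from statistics import mean


def relative_error(pred: float, gold: float, cap: float = 10.0) -> float:
    # Floor the denominator at 1 so a gold answer of 0 cannot divide by zero.
    err = abs(pred - gold) / max(abs(gold), 1.0)
    # Cap the error so one wildly wrong answer cannot dominate the mean.
    return min(err, cap)


def score(preds: list[float], golds: list[float], tol: float = 1e-6) -> tuple[float, float]:
    """Return (accuracy, mean relative error) over paired answers and references."""
    if len(preds) != len(golds) or not preds:
        raise ValueError("need equally sized, non-empty lists")
    # An answer is correct if it matches the reference up to a scaled tolerance.
    correct = [abs(p - g) <= tol * max(abs(g), 1.0) for p, g in zip(preds, golds)]
    accuracy = sum(correct) / len(correct)
    mre = mean(relative_error(p, g) for p, g in zip(preds, golds))
    return accuracy, mre
```

For example, `score([4.0, 56.0, 3.0, 100.0], [4.0, 56.0, 2.0, 50.0])` gives an accuracy of 0.5 and a mean relative error of 0.375. The two metrics change in different units. An accuracy gain of 10 percentage points is an absolute difference (say, from 0.50 to 0.60). A 50% reduction in relative error is proportional (say, from 0.40 to 0.20). These numbers are illustrative, not reported values.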


When confronted with complex and challenging mathematical problems, LLMs perform poorly. Specifically, GPT-3 performs nearly at random, GPT-3.5 shows improvement, and GPT-4 performs best [3]. Despite these advances, peak performance remains low compared with that of experts, and these models are not capable of mathematical research [15]. Algebraic manipulation and calculation continue to challenge GPT models [15, 27]. The primary reasons for GPT-4's low performance on these tasks are errors in algebraic manipulation and difficulty retrieving pertinent domain-specific concepts. Wu et al. [225] evaluated GPT-4 on difficult high school competition problems. GPT-4 reached 60% accuracy on half of the categories, while it solved intermediate algebra and precalculus problems with an accuracy of only around 20%. ChatGPT is weak at questions on derivatives and their applications, Oxyz spatial calculus, and spatial geometry [31]. Dao and Le [31] and Wei et al. [221] showed that ChatGPT's performance worsens as task difficulty increases. It correctly answered 83% of the questions at the recognition level, 62% at the comprehension level, 27% at the application level, and only 10% at the highest cognitive complexity level. Such results are to be expected, since problems at higher knowledge levels tend to be more complex and require in-depth understanding and problem-solving skills.
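The difficulty-level breakdown reported by Dao and Le [31] and Wei et al. [221] amounts to grouping graded answers by level and computing accuracy for each group. The sketch below assumes a hypothetical record format of (level, is_correct) pairs and hypothetical level labels. It does not reproduce either paper's data pipeline.

```python
"""Minimal sketch: accuracy broken down by cognitive difficulty level."""
from collections import defaultdict

# Hypothetical labels, ordered from easiest to hardest.
LEVELS = ["recognition", "comprehension", "application", "high complexity"]


def accuracy_by_level(records: list[tuple[str, bool]]) -> dict[str, float]:
    """Return accuracy per level from (level, is_correct) records.

    Records whose level is not in LEVELS are counted but not reported.
    """
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for level, is_correct in records:
        totals[level] += 1
        hits[level] += int(is_correct)
    # Report levels in a fixed order and skip levels with no questions.
    return {lvl: hits[lvl] / totals[lvl] for lvl in LEVELS if totals[lvl]}


def is_non_increasing(acc: dict[str, float]) -> bool:
    """Check that accuracy never rises as the level gets harder."""
    vals = [acc[lvl] for lvl in LEVELS if lvl in acc]
    return all(a >= b for a, b in zip(vals, vals[1:]))
```

As an example, suppose each level has an assumed 100 questions, of which 83, 62, 27, and 10 are answered correctly. Feeding in these synthetic records returns the reported proportions, and `is_non_increasing` confirms that accuracy falls as the level gets harder.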


These results indicate that the effectiveness of LLMs depends strongly on the complexity of the problems they face. This finding has significant implications for designing AI systems that can reliably handle such challenging tasks.


3.4.2 General science. The application of LLMs to chemistry still needs improvement. Castro Nascimento and Pimentel [18] posed five straightforward tasks from various subareas of chemistry to assess ChatGPT's understanding of the subject, and its accuracy ranged from 25% to 100%. Guo et al. [61] created a comprehensive benchmark of 8 practical chemistry tasks to assess LLMs (including GPT-4, GPT-3.5, and Davinci-003) on each task, and GPT-4 outperformed the other two models in their experiments. Arora et al. [3] showed that LLMs perform worse on physics problems than on chemistry problems. This is probably because, in their setting, chemistry problems require less complex inference than physics problems. Evaluation studies of LLMs in general science remain limited, and current findings indicate that LLM performance in this domain needs further improvement.


3.4.3 Engineering. Within engineering, tasks can be ordered by increasing difficulty: code generation, software engineering, and commonsense planning.


In code generation tasks, smaller LLMs trained specifically for these tasks are competitive. With a larger parameter setting, CodeGen-16B [141] performs comparably to ChatGPT, reaching about a 78% match [125]. ChatGPT has difficulty mastering and comprehending certain fundamental programming-language concepts, but it still shows a commendable level of coding ability [265]. Specifically, ChatGPT is strong at dynamic programming, greedy algorithms, and search, surpassing highly capable college students, but it struggles with data structures, trees, and graph theory. GPT-4 shows advanced abilities across several code-related skills [15]. It can generate code from given instructions, comprehend existing code, reason about code execution, and simulate the effects of instructions. It can also describe outcomes in natural language and execute pseudocode effectively.
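The paragraph does not state how the roughly 78% figure in [125] was computed. As one illustration of how the functional correctness of generated code is often scored, the sketch below implements the unbiased pass@k estimator popularized by the HumanEval benchmark. Whether it matches the metric behind that figure is an assumption, not something the source confirms.

```python
"""Minimal sketch of the unbiased pass@k estimator for code generation."""
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k for one problem.

    n: number of generated samples; c: samples that pass every unit test;
    k: evaluation budget. Returns the estimated probability that at least
    one of k samples drawn without replacement from the n is correct.
    """
    if not (0 <= c <= n and 1 <= k <= n):
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Too few failing samples: every size-k subset contains a passing one.
        return 1.0
    # One minus the probability that all k drawn samples are failing ones.
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results: list[tuple[int, int]], k: int) -> float:
    """Average pass@k over problems, given an (n, c) pair per problem."""
    if not results:
        raise ValueError("no problems to score")
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)
```

With k = 1 the estimate reduces to c/n, so `pass_at_k(10, 3, 1)` is 0.3. Larger k rewards models that produce at least one correct program among several attempts. Drawing n ≥ k samples per problem and using this combinatorial form gives an unbiased estimate of pass@k.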


In software engineering tasks, ChatGPT generally performs well and gives detailed responses, often surpassing both human expert output and state-of-the-art (SOTA) output. However, for certain tasks, such as code vulnerability detection and information retrieval-based test prioritization, the current version of ChatGPT fails to provide accurate answers. It is therefore unsuitable for these specific tasks [181].


In commonsense planning tasks, LLMs may perform poorly, even on simple planning tasks at which humans excel [194, 195]. Pallagani et al. [150] showed that a fine-tuned CodeT5 [214] performs best across all the domains they considered, with the shortest inference time. They also explored the plan generalization capability of LLMs and found it to be limited. Overall, LLMs can handle simple engineering tasks but perform poorly on complex ones.
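To make "performing well" on a planning task concrete, note that a generated plan can be checked automatically. The check executes the plan step by step and then tests whether the goal holds. The toy validator below is a hypothetical sketch in a minimal Blocksworld-style domain: a state maps each block to what it rests on, and the only action is `move(block, dest)`. It is not the validator used in [150, 194, 195].

```python
"""Minimal sketch of automated plan checking in a toy Blocksworld domain.

Hypothetical model: a state maps each block to its support ("table" or
another block); a plan is a list of (block, dest) moves.
"""

State = dict[str, str]


def is_clear(state: State, block: str) -> bool:
    # A block is clear when no other block rests on it.
    return all(support != block for support in state.values())


def apply_move(state: State, block: str, dest: str) -> State:
    """Return the successor state, or raise ValueError for an illegal move."""
    if block not in state:
        raise ValueError(f"unknown block {block!r}")
    if not is_clear(state, block):
        raise ValueError(f"{block} is not clear")
    if dest != "table" and (dest not in state or dest == block or not is_clear(state, dest)):
        raise ValueError(f"cannot place {block} on {dest}")
    new_state = dict(state)  # copy so the caller's state is untouched
    new_state[block] = dest
    return new_state


def validate_plan(init: State, plan: list[tuple[str, str]], goal: State) -> bool:
    """A plan is valid if every step is legal and the final state meets the goal."""
    state = init
    for block, dest in plan:
        try:
            state = apply_move(state, block, dest)
        except ValueError:
            return False  # one illegal step invalidates the whole plan
    return all(state.get(b) == s for b, s in goal.items())
```

For example, with C stacked on A and the goal "A on B", the plan `[("C", "table"), ("A", "B")]` validates. The one-step plan `[("A", "B")]` fails because A is not clear. A plan whose every step is legal can still be rejected if it stops before the goal holds.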


3.5 Medical Applications


The application of LLMs in the medical field has recently received significant attention. This section therefore reviews ongoing efforts to apply LLMs in medicine. As shown in Table 5, we group these applications into three aspects: medical queries, medical examination, and medical assistants. Examining these categories in detail clarifies the potential impact and benefits that LLMs can bring to the medical domain.


Table 5. Summary of evaluations on medical applications based on three aspects: Medical queries, Medical examination, and Medical assistants (ordered by the name of the first author).

| Reference | Medical queries | Medical examination | Medical assistants |
|---|---|---|---|
| Cascella et al. [17] | | | |
| Chervenak et al. [21] | | | |
| Duong and Solomon [39] | √ | | |
| Gilson et al. [57] | | | |
| Hamidi and Roberts [63] | | | |
| Holmes et al. [73] | | | |
| Jahan et al. [81] | √ | | |
| Johnson et al. [87] | √ | | |
| Khan et al. [93] | √ | | |
| Kung et al. [97] | √ | | |
| Lahat et al. [99] | | | |
| Lyu et al. [131] | √ | | |
| Oh et al. [143] | √ | | |
| Samaan et al. [169] | | | |
| Thirunavukarasu et al. [186] | | | |
| Wang et al. [217] | √ | | |
