What are Diffusion Models?

July 11, 2021 · 26 min · Lilian Weng

Table of Contents

[Updated on 2021-09-19: Highly recommend this blog post on score-based generative modeling by Yang Song (author of several key papers in the references)].

[Updated on 2022-08-27: Added classifier-free guidance, GLIDE, unCLIP and Imagen.

[Updated on 2022-08-31: Added latent diffusion model.

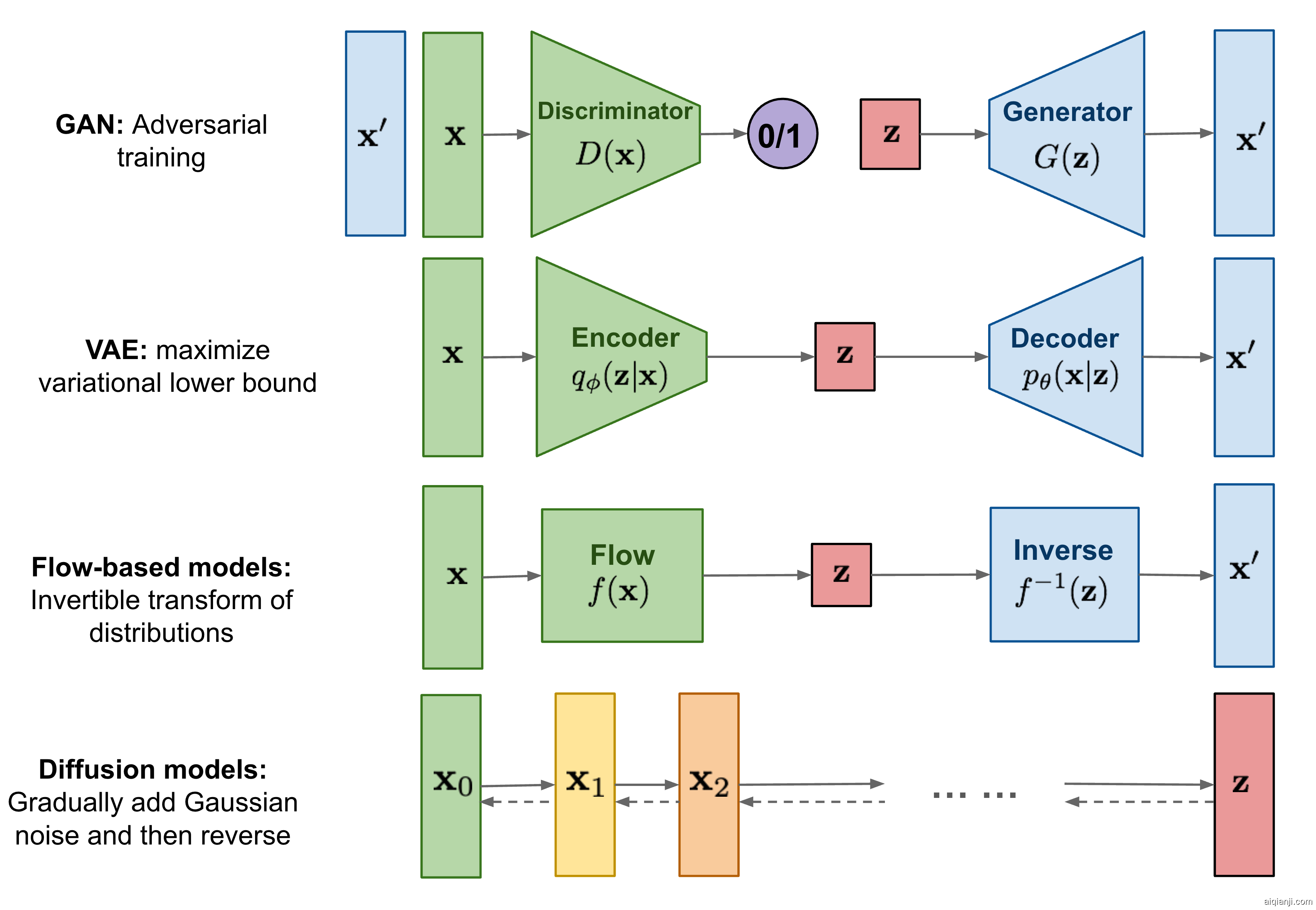

So far, I’ve written about three types of generative models, GAN, VAE, and Flow-based models. They have shown great success in generating high-quality samples, but each has some limitations of its own. GAN models are known for potentially unstable training and less diversity in generation due to their adversarial training nature. VAE relies on a surrogate loss. Flow models have to use specialized architectures to construct reversible transform.

Diffusion models are inspired by non-equilibrium thermodynamics. They define a Markov chain of diffusion steps to slowly add random noise to data and then learn to reverse the diffusion process to construct desired data samples from the noise. Unlike VAE or flow models, diffusion models are learned with a fixed procedure and the latent variable has high dimensionality (same as the original data).

Fig. 1. Overview of different types of generative models.# What are Diffusion Models?

Fig. 1. Overview of different types of generative models.# What are Diffusion Models?

Several diffusion-based generative models have been proposed with similar ideas underneath, including diffusion probabilistic models (Sohl-Dickstein et al., 2015), noise-conditioned score network (NCSN; Yang & Ermon, 2019), and denoising diffusion probabilistic models (DDPM; Ho et al. 2020).

Forward diffusion process

Given a data point sampled from a real data distribution x0∼q(x), let us define a forward diffusion process in which we add small amount of Gaussian noise to the sample in T steps, producing a sequence of noisy samples x1,…,xT. The step sizes are controlled by a variance schedule {βt∈(0,1)}t=1T.

q(xt|xt−1)=N(xt;1−βtxt−1,βtI)q(x1:T|x0)=∏t=1Tq(xt|xt−1)

$$

q(\mathbf{x}t \vert \mathbf{x}{t-1}) = \mathcal{N}(\mathbf{x}t; \sqrt{1 - \beta_t} \mathbf{x}{t-1}, \beta_t\mathbf{I}) \quad

q(\mathbf{x}_{1:T} \vert \mathbf{x}0) = \prod^T{t=1} q(\mathbf{x}t \vert \mathbf{x}{t-1})

$$

The data sample x0 gradually loses its distinguishable features as the step t becomes larger. Eventually when T→∞, xT is equivalent to an isotropic Gaussian distribution.

Fig. 2. The Markov chain of forward (reverse) diffusion process of generating a sample by slowly adding (removing) noise. (Image source: Ho et al. 2020 with a few additional annotations)A nice property of the above process is that we can sample xt at any arbitrary time step t in a closed form using reparameterization trick. Let αt=1−βt and α¯t=∏i=1tαi:

Fig. 2. The Markov chain of forward (reverse) diffusion process of generating a sample by slowly adding (removing) noise. (Image source: Ho et al. 2020 with a few additional annotations)A nice property of the above process is that we can sample xt at any arbitrary time step t in a closed form using reparameterization trick. Let αt=1−βt and α¯t=∏i=1tαi:

xt=αtxt−1+1−αtϵt−1 ;where ϵt−1,ϵt−2,⋯∼N(0,I)=αtαt−1xt−2+1−αtαt−1ϵ¯t−2 ;where ϵ¯t−2 merges two Gaussians (*).=…=α¯tx0+1−α¯tϵq(xt|x0)=N(xt;α¯tx0,(1−α¯t)I)

(*) Recall that when we merge two Gaussians with different variance, N(0,σ12I) and N(0,σ22I), the new distribution is N(0,(σ12+σ22)I). Here the merged standard deviation is (1−αt)+αt(1−αt−1)=1−αtαt−1.

Usually, we can afford a larger update step when the sample gets noisier, so β1<β2<⋯<βT and therefore α¯1>⋯>α¯T.

Connection with stochastic gradient Langevin dynamics

Langevin dynamics is a concept from physics, developed for statistically modeling molecular systems. Combined with stochastic gradient descent, stochastic gradient Langevin dynamics (Welling & Teh 2011) can produce samples from a probability density p(x) using only the gradients ∇xlogp(x) in a Markov chain of updates:

xt=xt−1+δ2∇xlogq(xt−1)+δϵt,where ϵt∼N(0,I)

where δ is the step size. When T→∞,ϵ→0, xT equals to the true probability density p(x).

Compared to standard SGD, stochastic gradient Langevin dynamics injects Gaussian noise into the parameter updates to avoid collapses into local minima.

Reverse diffusion process

If we can reverse the above process and sample from q(xt−1|xt), we will be able to recreate the true sample from a Gaussian noise input, xT∼N(0,I). Note that if βt is small enough, q(xt−1|xt) will also be Gaussian. Unfortunately, we cannot easily estimate q(xt−1|xt) because it needs to use the entire dataset and therefore we need to learn a model pθ to approximate these conditional probabilities in order to run the reverse diffusion process.

pθ(x0:T)=p(xT)∏t=1Tpθ(xt−1|xt)pθ(xt−1|xt)=N(xt−1;μθ(xt,t),Σθ(xt,t))

Fig. 3. An example of training a diffusion model for modeling a 2D swiss roll data. (Image source: Sohl-Dickstein et al., 2015)It is noteworthy that the reverse conditional probability is tractable when conditioned on x0:

Fig. 3. An example of training a diffusion model for modeling a 2D swiss roll data. (Image source: Sohl-Dickstein et al., 2015)It is noteworthy that the reverse conditional probability is tractable when conditioned on x0:

q(xt−1|xt,x0)=N(xt−1;μ~(xt,x0),β~tI)

Using Bayes' rule, we have:

q(xt−1|xt,x0)=q(xt|xt−1,x0)q(xt−1|x0)q(xt|x0)∝exp(−12((xt−αtxt−1)2βt+(xt−1−α¯t−1x0)21−α¯t−1−(xt−α¯tx0)21−α¯t))=exp(−12(xt2−2αtxtxt−1+αtxt−12βt+xt−12−2α¯t−1x0xt−1+α¯t−1x021−α¯t−1−(xt−α¯tx0)21−α¯t))=exp(−12((αtβt+11−α¯t−1)xt−12−(2αtβtxt+2α¯t−11−α¯t−1x0)xt−1+C(xt,x0)))

where C(xt,x0) is some function not involving xt−1 and details are omitted. Following the standard Gaussian density function, the mean and variance can be parameterized as follows (recall that αt=1−βt and α¯t=∏i=1Tαi):

βt=1/(αtβt+11−α¯t−1)=1/(αt−α¯t+βtβt(1−α¯t−1))=1−α¯t−11−α¯t⋅βtμt(xt,x0)=(αtβtxt+α¯t−11−α¯t−1x0)/(αtβt+11−α¯t−1)=(αtβtxt+α¯t−11−α¯t−1x0)1−α¯t−11−α¯t⋅βt=αt(1−α¯t−1)1−α¯txt+α¯t−1βt1−α¯tx0

Thanks to the nice property, we can represent x0=1α¯t(xt−1−α¯tϵt) and plug it into the above equation and obtain:

μ~t=αt(1−α¯t−1)1−α¯txt+α¯t−1βt1−α¯t1α¯t(xt−1−α¯tϵt)=1αt(xt−1−αt1−α¯tϵt)

As demonstrated in Fig. 2., such a setup is very similar to VAE and thus we can use the variational lower bound to optimize the negative log-likelihood.

−logpθ(x0)≤−logpθ(x0)+DKL(q(x1:T|x0)‖pθ(x1:T|x0))=−logpθ(x0)+Ex1:T∼q(x1:T|x0)[logq(x1:T|x0)pθ(x0:T)/pθ(x0)]=−logpθ(x0)+Eq[logq(x1:T|x0)pθ(x0:T)+logpθ(x0)]=Eq[logq(x1:T|x0)pθ(x0:T)]Let LVLB=Eq(x0:T)[logq(x1:T|x0)pθ(x0:T)]≥−Eq(x0)logpθ(x0)

It is also straightforward to get the same result using Jensen’s inequality. Say we want to minimize the cross entropy as the learning objective,

LCE=−Eq(x0)logpθ(x0)=−Eq(x0)log(∫pθ(x0:T)dx1:T)=−Eq(x0)log(∫q(x1:T|x0)pθ(x0:T)q(x1:T|x0)dx1:T)=−Eq(x0)log(Eq(x1:T|x0)pθ(x0:T)q(x1:T|x0))≤−Eq(x0:T)logpθ(x0:T)q(x1:T|x0)=Eq(x0:T)[logq(x1:T|x0)pθ(x0:T)]=LVLB

To convert each term in the equation to be analytically computable, the objective can be further rewritten to be a combination of several KL-divergence and entropy terms (See the detailed step-by-step process in Appendix B in Sohl-Dickstein et al., 2015):

LVLB=Eq(x0:T)[logq(x1:T|x0)pθ(x0:T)]=Eq[log∏t=1Tq(xt|xt−1)pθ(xT)∏t=1Tpθ(xt−1|xt)]=Eq[−logpθ(xT)+∑t=1Tlogq(xt|xt−1)pθ(xt−1|xt)]=Eq[−logpθ(xT)+∑t=2Tlogq(xt|xt−1)pθ(xt−1|xt)+logq(x1|x0)pθ(x0|x1)]=Eq[−logpθ(xT)+∑t=2Tlog(q(xt−1|xt,x0)pθ(xt−1|xt)⋅q(xt|x0)q(xt−1|x0))+logq(x1|x0)pθ(x0|x1)]=Eq[−logpθ(xT)+∑t=2Tlogq(xt−1|xt,x0)pθ(xt−1|xt)+∑t=2Tlogq(xt|x0)q(xt−1|x0)+logq(x1|x0)pθ(x0|x1)]=Eq[−logpθ(xT)+∑t=2Tlogq(xt−1|xt,x0)pθ(xt−1|xt)+logq(xT|x0)q(x1|x0)+logq(x1|x0)pθ(x0|x1)]=Eq[logq(xT|x0)pθ(xT)+∑t=2Tlogq(xt−1|xt,x0)pθ(xt−1|xt)−logpθ(x0|x1)]=Eq[DKL(q(xT|x0)∥pθ(xT))⏟LT+∑t=2TDKL(q(xt−1|xt,x0)∥pθ(xt−1|xt))⏟Lt−1−logpθ(x0|x1)⏟L0]

Let’s label each component in the variational lower bound loss separately:

LVLB=LT+LT−1+⋯+L0where LT=DKL(q(xT|x0)∥pθ(xT))Lt=DKL(q(xt|xt+1,x0)∥pθ(xt|xt+1)) for 1≤t≤T−1L0=−logpθ(x0|x1)

Every KL term in LVLB (except for L0) compares two Gaussian distributions and therefore they can be computed in closed form. LT is constant and can be ignored during training because q has no learnable parameters and xT is a Gaussian noise. Ho et al. 2020 models L0 using a separate discrete decoder derived from N(x0;μθ(x1,1),Σθ(x1,1)).

Parameterization of Lt for Training Loss

Recall that we need to learn a neural network to approximate the conditioned probability distributions in the reverse diffusion process, pθ(xt−1|xt)=N(xt−1;μθ(xt,t),Σθ(xt,t)). We would like to train μθ to predict μ~t=1αt(xt−1−αt1−α¯tϵt). Because xt is available as input at training time, we can reparameterize the Gaussian noise term instead to make it predict ϵt from the input xt at time step t:

μθ(xt,t)=1αt(xt−1−αt1−α¯tϵθ(xt,t))Thus xt−1=N(xt−1;1αt(xt−1−αt1−α¯tϵθ(xt,t)),Σθ(xt,t))

The loss term Lt is parameterized to minimize the difference from μ~ :

Lt=Ex0,ϵ[12‖Σθ(xt,t)‖22‖μ~t(xt,x0)−μθ(xt,t)‖2]=Ex0,ϵ[12‖Σθ‖22‖1αt(xt−1−αt1−α¯tϵt)−1αt(xt−1−αt1−α¯tϵθ(xt,t))‖2]=Ex0,ϵ[(1−αt)22αt(1−α¯t)‖Σθ‖22‖ϵt−ϵθ(xt,t)‖2]=Ex0,ϵ[(1−αt)22αt(1−α¯t)‖Σθ‖22‖ϵt−ϵθ(α¯tx0+1−α¯tϵt,t)‖2]

Simplification

Empirically, Ho et al. (2020) found that training the diffusion model works better with a simplified objective that ignores the weighting term:

Ltsimple=Et∼[1,T],x0,ϵt[‖ϵt−ϵθ(xt,t)‖2]=Et∼[1,T],x0,ϵt[‖ϵt−ϵθ(α¯tx0+1−α¯tϵt,t)‖2]

The final simple objective is:

Lsimple=Ltsimple+C

where C is a constant not depending on θ.

Fig. 4. The training and sampling algorithms in DDPM (Image source: Ho et al. 2020)### Connection with noise-conditioned score networks (NCSN)

Fig. 4. The training and sampling algorithms in DDPM (Image source: Ho et al. 2020)### Connection with noise-conditioned score networks (NCSN)

Song & Ermon (2019) proposed a score-based generative modeling method where samples are produced via Langevin dynamics using gradients of the data distribution estimated with score matching. The score of each sample x’s density probability is defined as its gradient ∇xlogq(x). A score network sθ:RD→RD is trained to estimate it, sθ(x)≈∇xlogq(x).

To make it scalable with high-dimensional data in the deep learning setting, they proposed to use either denoising score matching (Vincent, 2011) or sliced score matching (use random projections; Song et al., 2019). Denosing score matching adds a pre-specified small noise to the data q(x~|x) and estimates q(x~) with score matching.

Recall that Langevin dynamics can sample data points from a probability density distribution using only the score ∇xlogq(x) in an iterative process.

However, according to the manifold hypothesis, most of the data is expected to concentrate in a low dimensional manifold, even though the observed data might look only arbitrarily high-dimensional. It brings a negative effect on score estimation since the data points cannot cover the whole space. In regions where data density is low, the score estimation is less reliable. After adding a small Gaussian noise to make the perturbed data distribution cover the full space RD, the training of the score estimator network becomes more stable. Song & Ermon (2019) improved it by perturbing the data with the noise of different levels and train a noise-conditioned score network to jointly estimate the scores of all the perturbed data at different noise levels.

The schedule of increasing noise levels resembles the forward diffusion process. If we use the diffusion process annotation, the score approximates sθ(xt,t)≈∇xtlogq(xt). Given a Gaussian distribution x∼N(μ,σ2I), we can write the derivative of the logarithm of its density function as ∇xlogp(x)=∇x(−12σ2(x−μ)2)=−x−μσ2=−ϵσ where ϵ∼N(0,I). Recall that q(xt|x0)∼N(α¯tx0,(1−α¯t)I) and therefore,

sθ(xt,t)≈∇xtlogq(xt)=Eq(x0)[∇xtq(xt|x0)]=Eq(x0)[−ϵθ(xt,t)1−α¯t]=−ϵθ(xt,t)1−α¯t

Parameterization of βt

The forward variances are set to be a sequence of linearly increasing constants in Ho et al. (2020), from β1=10−4 to βT=0.02. They are relatively small compared to the normalized image pixel values between [−1,1]. Diffusion models in their experiments showed high-quality samples but still could not achieve competitive model log-likelihood as other generative models.

Nichol & Dhariwal (2021) proposed several improvement techniques to help diffusion models to obtain lower NLL. One of the improvements is to use a cosine-based variance schedule. The choice of the scheduling function can be arbitrary, as long as it provides a near-linear drop in the middle of the training process and subtle changes around t=0 and t=T.

βt=clip(1−α¯tα¯t−1,0.999)α¯t=f(t)f(0)where f(t)=cos(t/T+s1+s⋅π2)

where the small offset s is to prevent βt from being too small when close to t=0.

Fig. 5. Comparison of linear and cosine-based scheduling of β_t during training. (Image source: Nichol & Dhariwal, 2021)## Parameterization of reverse process variance Σθ

Fig. 5. Comparison of linear and cosine-based scheduling of β_t during training. (Image source: Nichol & Dhariwal, 2021)## Parameterization of reverse process variance Σθ

Ho et al. (2020) chose to fix βt as constants instead of making them learnable and set Σθ(xt,t)=σt2I , where σt is not learned but set to βt or β~t=1−α¯t−11−α¯t⋅βt. Because they found that learning a diagonal variance Σθ leads to unstable training and poorer sample quality.

Nichol & Dhariwal (2021) proposed to learn Σθ(xt,t) as an interpolation between βt and β~t by model predicting a mixing vector v :

Σθ(xt,t)=exp(vlogβt+(1−v)logβ~t)

However, the simple objective Lsimple does not depend on Σθ . To add the dependency, they constructed a hybrid objective Lhybrid=Lsimple+λLVLB where λ=0.001 is small and stop gradient on μθ in the LVLB term such that LVLB only guides the learning of Σθ. Empirically they observed that LVLB is pretty challenging to optimize likely due to noisy gradients, so they proposed to use a time-averaging smoothed version of LVLB with importance sampling.

Fig. 6. Comparison of negative log-likelihood of improved DDPM with other likelihood-based generative models. NLL is reported in the unit of bits/dim. (Image source: Nichol & Dhariwal, 2021)# Speed up Diffusion Model Sampling

Fig. 6. Comparison of negative log-likelihood of improved DDPM with other likelihood-based generative models. NLL is reported in the unit of bits/dim. (Image source: Nichol & Dhariwal, 2021)# Speed up Diffusion Model Sampling

It is very slow to generate a sample from DDPM by following the Markov chain of the reverse diffusion process, as T can be up to one or a few thousand steps. One data point from Song et al. 2020: “For example, it takes around 20 hours to sample 50k images of size 32 × 32 from a DDPM, but less than a minute to do so from a GAN on an Nvidia 2080 Ti GPU.”

One simple way is to run a strided sampling schedule (Nichol & Dhariwal, 2021) by taking the sampling update every ⌈T/S⌉ steps to reduce the process from T to S steps. The new sampling schedule for generation is {τ1,…,τS} where τ1<τ2<⋯<τS∈[1,T] and S<T.

For another approach, let’s rewrite qσ(xt−1|xt,x0) to be parameterized by a desired standard deviation σt according to the nice property:

xt−1=α¯t−1x0+1−α¯t−1ϵt−1=α¯t−1x0+1−α¯t−1−σt2ϵt+σtϵ=α¯t−1x0+1−α¯t−1−σt2xt−α¯tx01−α¯t+σtϵqσ(xt−1|xt,x0)=N(xt−1;α¯t−1x0+1−α¯t−1−σt2xt−α¯tx01−α¯t,σt2I)

Recall that in q(xt−1|xt,x0)=N(xt−1;μ~(xt,x0),β~tI), therefore we have:

β~t=σt2=1−α¯t−11−α¯t⋅βt

Let σt2=η⋅β~t such that we can adjust η∈R+ as a hyperparameter to control the sampling stochasticity. The special case of η=0 makes the sampling process *deterministic*. Such a model is named the *denoising diffusion implicit model* (**DDIM**; Song et al., 2020). DDIM has the same marginal noise distribution but deterministically maps noise back to the original data samples.

During generation, we only sample a subset of S diffusion steps {τ1,…,τS} and the inference process becomes:

qσ,τ(xτi−1|xτt,x0)=N(xτi−1;α¯t−1x0+1−α¯t−1−σt2xτi−α¯tx01−α¯t,σt2I)

While all the models are trained with T=1000 diffusion steps in the experiments, they observed that DDIM (η=0) can produce the best quality samples when S is small, while DDPM (η=1) performs much worse on small S. DDPM does perform better when we can afford to run the full reverse Markov diffusion steps (S=T=1000). With DDIM, it is possible to train the diffusion model up to any arbitrary number of forward steps but only sample from a subset of steps in the generative process.

Fig. 7. FID scores on CIFAR10 and CelebA datasets by diffusion models of different settings, including DDIM (η=0) and DDPM (σ^). (Image source: Song et al., 2020)Compared to DDPM, DDIM is able to:

Fig. 7. FID scores on CIFAR10 and CelebA datasets by diffusion models of different settings, including DDIM (η=0) and DDPM (σ^). (Image source: Song et al., 2020)Compared to DDPM, DDIM is able to:

- Generate higher-quality samples using a much fewer number of steps.

- Have “consistency” property since the generative process is deterministic, meaning that multiple samples conditioned on the same latent variable should have similar high-level features.

- Because of the consistency, DDIM can do semantically meaningful interpolation in the latent variable.

Latent diffusion model (LDM; Rombach & Blattmann, et al. 2022) runs the diffusion process in the latent space instead of pixel space, making training cost lower and inference speed faster. It is motivated by the observation that most bits of an image contribute to perceptual details and the semantic and conceptual composition still remains after aggressive compression. LDM loosely decomposes the perceptual compression and semantic compression with generative modeling learning by first trimming off pixel-level redundancy with autoencoder and then manipulate/generate semantic concepts with diffusion process on learned latent.

Fig. 8. The plot for tradeoff between compression rate and distortion, illustrating two-stage compressions - perceptural and semantic comparession. (Image source: Rombach & Blattmann, et al. 2022)The perceptual compression process relies on an autoencoder model. An encoder E is used to compress the input image x∈RH×W×3 to a smaller 2D latent vector z=E(x)∈Rh×w×c , where the downsampling rate f=H/h=W/w=2m,m∈N. Then an decoder D reconstructs the images from the latent vector, x~=D(z). The paper explored two types of regularization in autoencoder training to avoid arbitrarily high-variance in the latent spaces.

Fig. 8. The plot for tradeoff between compression rate and distortion, illustrating two-stage compressions - perceptural and semantic comparession. (Image source: Rombach & Blattmann, et al. 2022)The perceptual compression process relies on an autoencoder model. An encoder E is used to compress the input image x∈RH×W×3 to a smaller 2D latent vector z=E(x)∈Rh×w×c , where the downsampling rate f=H/h=W/w=2m,m∈N. Then an decoder D reconstructs the images from the latent vector, x~=D(z). The paper explored two types of regularization in autoencoder training to avoid arbitrarily high-variance in the latent spaces.

- KL-reg: A small KL penalty towards a standard normal distribution over the learned latent, similar to VAE.

- VQ-reg: Uses a vector quantization layer within the decoder, like VQVAE but the quantization layer is absorbed by the decoder.

The diffusion and denoising processes happen on the latent vector z. The denoising model is a time-conditioned U-Net, augmented with the cross-attention mechanism to handle flexible conditioning information for image generation (e.g. class labels, semantic maps, blurred variants of an image). The design is equivalent to fuse representation of different modality into the model with cross-attention mechanism. Each type of conditioning information is paired with a domain-specific encoder τθ to project the conditioning input y to an intermediate representation that can be mapped into cross-attention component, τθ(y)∈RM×dτ:

Attention(Q,K,V)=softmax(QK⊤d)⋅Vwhere Q=WQ(i)⋅φi(zi),K=WK(i)⋅τθ(y),V=WV(i)⋅τθ(y)and WQ(i)∈Rd×dϵi,WK(i),WV(i)∈Rd×dτ,φi(zi)∈RN×dϵi,τθ(y)∈RM×dτ

Fig. 9. The architecture of latent diffusion model. (Image source: Rombach & Blattmann, et al. 2022)# Conditioned Generation

Fig. 9. The architecture of latent diffusion model. (Image source: Rombach & Blattmann, et al. 2022)# Conditioned Generation

While training generative models on images with conditioning information such as ImageNet dataset, it is common to generate samples conditioned on class labels or a piece of descriptive text.

Classifier Guided Diffusion

To explicit incorporate class information into the diffusion process, Dhariwal & Nichol (2021) trained a classifier fϕ(y|xt,t) on noisy image xt and use gradients ∇xlogfϕ(y|xt) to guide the diffusion sampling process toward the conditioning information y (e.g. a target class label) by altering the noise prediction. Recall that ∇xtlogq(xt)=−11−α¯tϵθ(xt,t) and we can write the score function for the joint distribution q(xt,y) as following,

∇xtlogq(xt,y)=∇xtlogq(xt)+∇xtlogq(y|xt)≈−11−α¯tϵθ(xt,t)+∇xtlogfϕ(y|xt)=−11−α¯t(ϵθ(xt,t)−1−α¯t∇xtlogfϕ(y|xt))

Thus, a new classifier-guided predictor ϵ¯θ would take the form as following,

ϵ¯θ(xt,t)=ϵθ(xt,t)−1−α¯t∇xtlogfϕ(y|xt)

To control the strength of the classifier guidance, we can add a weight w to the delta part,

ϵ¯θ(xt,t)=ϵθ(xt,t)−1−α¯tw∇xtlogfϕ(y|xt)

The resulting ablated diffusion model (ADM) and the one with additional classifier guidance (ADM-G) are able to achieve better results than SOTA generative models (e.g. BigGAN).

Fig. 10. The algorithms use guidance from a classifier to run conditioned generation with DDPM and DDIM. (Image source: Dhariwal & Nichol, 2021])Additionally with some modifications on the U-Net architecture, Dhariwal & Nichol (2021) showed performance better than GAN with diffusion models. The architecture modifications include larger model depth/width, more attention heads, multi-resolution attention, BigGAN residual blocks for up/downsampling, residual connection rescale by 1/2 and adaptive group normalization (AdaGN).

Fig. 10. The algorithms use guidance from a classifier to run conditioned generation with DDPM and DDIM. (Image source: Dhariwal & Nichol, 2021])Additionally with some modifications on the U-Net architecture, Dhariwal & Nichol (2021) showed performance better than GAN with diffusion models. The architecture modifications include larger model depth/width, more attention heads, multi-resolution attention, BigGAN residual blocks for up/downsampling, residual connection rescale by 1/2 and adaptive group normalization (AdaGN).

Classifier-Free Guidance

Without an independent classifier fϕ, it is still possible to run conditional diffusion steps by incorporating the scores from a conditional and an unconditional diffusion model (Ho & Salimans, 2021). Let unconditional denoising diffusion model pθ(x) parameterized through a score estimator ϵθ(xt,t) and the conditional model pθ(x|y) parameterized through ϵθ(xt,t,y). These two models can be learned via a single neural network. Precisely, a conditional diffusion model pθ(x|y) is trained on paired data (x,y), where the conditioning information y gets discarded periodically at random such that the model knows how to generate images unconditionally as well, i.e. ϵθ(xt,t)=ϵθ(xt,t,y=∅).

The gradient of an implicit classifier can be represented with conditional and unconditional score estimators. Once plugged into the classifier-guided modified score, the score contains no dependency on a separate classifier.

∇xtlogp(y|xt)=∇xtlogp(xt|y)−∇xtlogp(xt)=−11−α¯t(ϵθ(xt,t,y)−ϵθ(xt,t))ϵ¯θ(xt,t,y)=ϵθ(xt,t,y)−1−α¯tw∇xtlogp(y|xt)=ϵθ(xt,t,y)+w(ϵθ(xt,t,y)−ϵθ(xt,t))=(w+1)ϵθ(xt,t,y)−wϵθ(xt,t)

Their experiments showed that classifier-free guidance can achieve a good balance between FID (distinguish between synthetic and generated images) and IS (quality and diversity).

The guided diffusion model, GLIDE (Nichol, Dhariwal & Ramesh, et al. 2022), explored both guiding strategies, CLIP guidance and classifier-free guidance, and found that the latter is more preferred. They hypothesized that it is because CLIP guidance exploits the model with adversarial examples towards the CLIP model, rather than optimize the better matched images generation.

Scale up Generation Resolution and Quality

To generate high-quality images at high resolution, Ho et al. (2021) proposed to use a pipeline of multiple diffusion models at increasing resolutions. Noise conditioning augmentation between pipeline models is crucial to the final image quality, which is to apply strong data augmentation to the conditioning input z of each super-resolution model pθ(x|z). The conditioning noise helps reduce compounding error in the pipeline setup. U-net is a common choice of model architecture in diffusion modeling for high-resolution image generation.

Fig. 11. A cascaded pipeline of multiple diffusion models at increasing resolutions. (Image source: Ho et al. 2021])They found the most effective noise is to apply Gaussian noise at low resolution and Gaussian blur at high resolution. In addition, they also explored two forms of conditioning augmentation that require small modification to the training process. Note that conditioning noise is only applied to training but not at inference.

Fig. 11. A cascaded pipeline of multiple diffusion models at increasing resolutions. (Image source: Ho et al. 2021])They found the most effective noise is to apply Gaussian noise at low resolution and Gaussian blur at high resolution. In addition, they also explored two forms of conditioning augmentation that require small modification to the training process. Note that conditioning noise is only applied to training but not at inference.

- Truncated conditioning augmentation stops the diffusion process early at step t>0 for low resolution.

- Non-truncated conditioning augmentation runs the full low resolution reverse process until step 0 but then corrupt it by zt∼q(xt|x0) and then feeds the corrupted zt s into the super-resolution model.

The two-stage diffusion model unCLIP (Ramesh et al. 2022) heavily utilizes the CLIP text encoder to produce text-guided images at high quality. Given a pretrained CLIP model c and paired training data for the diffusion model, (x,y), where x is an image and y is the corresponding caption, we can compute the CLIP text and image embedding, ct(y) and ci(x), respectively. The unCLIP learns two models in parallel:

- A prior model P(ci|y): outputs CLIP image embedding ci given the text y.

- A decoder P(x|ci,[y]): generates the image x given CLIP image embedding ci and optionally the original text y.

These two models enable conditional generation, because

P(x|y)=P(x,ci|y)⏟ci is deterministic given x=P(x|ci,y)P(ci|y)

Fig. 12. The architecture of unCLIP. (Image source: Ramesh et al. 2022])unCLIP follows a two-stage image generation process:

Fig. 12. The architecture of unCLIP. (Image source: Ramesh et al. 2022])unCLIP follows a two-stage image generation process:

- Given a text y, a CLIP model is first used to generate a text embedding ct(y). Using CLIP latent space enables zero-shot image manipulation via text.

- A diffusion or autoregressive prior P(ci|y) processes this CLIP text embedding to construct an image prior and then a diffusion decoder P(x|ci,[y]) generates an image, conditioned on the prior. This decoder can also generate image variations conditioned on an image input, preserving its style and semantics.

Instead of CLIP model, Imagen (Saharia et al. 2022) uses a pre-trained large LM (i.e. a frozen T5-XXL text encoder) to encode text for image generation. There is a general trend that larger model size can lead to better image quality and text-image alignment. They found that T5-XXL and CLIP text encoder achieve similar performance on MS-COCO, but human evaluation prefers T5-XXL on DrawBench (a collection of prompts covering 11 categories).

When applying classifier-free guidance, increasing w may lead to better image-text alignment but worse image fidelity. They found that it is due to train-test mismatch, that is saying, because training data x stays within the range [−1,1], the test data should be so too. Two thresholding strategies are introduced:

- Static thresholding: clip x prediction to [−1,1]

- Dynamic thresholding: at each sampling step, compute s as a certain percentile absolute pixel value; if s>1, clip the prediction to [−s,s] and divide by s.

Imagen modifies several designs in U-net to make it efficient U-Net.

- Shift model parameters from high resolution blocks to low resolution by adding more residual locks for the lower resolutions;

- Scale the skip connections by 1/2

- Reverse the order of downsampling (move it before convolutions) and upsampling operations (move it after convolution) in order to improve the speed of forward pass.

They found that noise conditioning augmentation, dynamic thresholding and efficient U-Net are critical for image quality, but scaling text encoder size is more important than U-Net size.

Quick Summary

- Pros: Tractability and flexibility are two conflicting objectives in generative modeling. Tractable models can be analytically evaluated and cheaply fit data (e.g. via a Gaussian or Laplace), but they cannot easily describe the structure in rich datasets. Flexible models can fit arbitrary structures in data, but evaluating, training, or sampling from these models is usually expensive. Diffusion models are both analytically tractable and flexible

- Cons: Diffusion models rely on a long Markov chain of diffusion steps to generate samples, so it can be quite expensive in terms of time and compute. New methods have been proposed to make the process much faster, but the sampling is still slower than GAN.

Citation

Cited as:

Weng, Lilian. (Jul 2021). What are diffusion models? Lil’Log. https://lilianweng.github.io/posts/2021-07-11-diffusion-models/.

Or

@article{weng2021diffusion,

title = "What are diffusion models?",

author = "Weng, Lilian",

journal = "lilianweng.github.io",

year = "2021",

month = "Jul",

url = "https://lilianweng.github.io/posts/2021-07-11-diffusion-models/"

}

References

[1] Jascha Sohl-Dickstein et al. “Deep Unsupervised Learning using Nonequilibrium Thermodynamics.” ICML 2015.

[2] Max Welling & Yee Whye Teh. “Bayesian learning via stochastic gradient langevin dynamics.” ICML 2011.

[3] Yang Song & Stefano Ermon. “Generative modeling by estimating gradients of the data distribution.” NeurIPS 2019.

[4] Yang Song & Stefano Ermon. “Improved techniques for training score-based generative models.” NeuriPS 2020.

[5] Jonathan Ho et al. “Denoising diffusion probabilistic models.” arxiv Preprint arxiv:2006.11239 (2020). [code]

[6] Jiaming Song et al. “Denoising diffusion implicit models.” arxiv Preprint arxiv:2010.02502 (2020). [code]

[7] Alex Nichol & Prafulla Dhariwal. “Improved denoising diffusion probabilistic models” arxiv Preprint arxiv:2102.09672 (2021). [code]

[8] Prafula Dhariwal & Alex Nichol. “Diffusion Models Beat GANs on Image Synthesis." arxiv Preprint arxiv:2105.05233 (2021). [code]

[9] Jonathan Ho & Tim Salimans. “Classifier-Free Diffusion Guidance." NeurIPS 2021 Workshop on Deep Generative Models and Downstream Applications.

[10] Yang Song, et al. “Score-Based Generative Modeling through Stochastic Differential Equations." ICLR 2021.

[11] Alex Nichol, Prafulla Dhariwal & Aditya Ramesh, et al. “GLIDE: Towards Photorealistic Image Generation and Editing with Text-Guided Diffusion Models." ICML 2022.

[12] Jonathan Ho, et al. “Cascaded diffusion models for high fidelity image generation." J. Mach. Learn. Res. 23 (2022): 47-1.

[13] Aditya Ramesh et al. “Hierarchical Text-Conditional Image Generation with CLIP Latents." arxiv Preprint arxiv:2204.06125 (2022).

[14] Chitwan Saharia & William Chan, et al. “Photorealistic Text-to-Image Diffusion Models with Deep Language Understanding." arxiv Preprint arxiv:2205.11487 (2022).

[15] Rombach & Blattmann, et al. “High-Resolution Image Synthesis with Latent Diffusion Models." CVPR 2022.code